“Deepfake” techniques can now produce AI-generated videos of real people doing fictional things, or of fictional people doing real things. New applications have kept appearing ever since GANs proved successful, and the deep learning community still has only a partial picture of the damage that existing malicious content can cause. That is why industry experts have been collaborating to raise awareness within the community. Machine learning practitioners can do their part by making use of the resources available on deepfakes.

Here we look at a few available options that can help tackle deepfakes:

Video DeepFake Detection With Google’s DeepFake Dataset

Last week, Google, in collaboration with Jigsaw, released a dataset of deepfakes. To prepare it, the researchers worked with consenting actors to record hundreds of videos, then created many deepfakes from these recordings using publicly available deepfake generation methods. The resulting videos, real and fake, make up their contribution, which was created to directly support deepfake detection efforts. The dataset is now freely available to the research community for developing synthetic video detection methods.

Crunching Facial Datasets

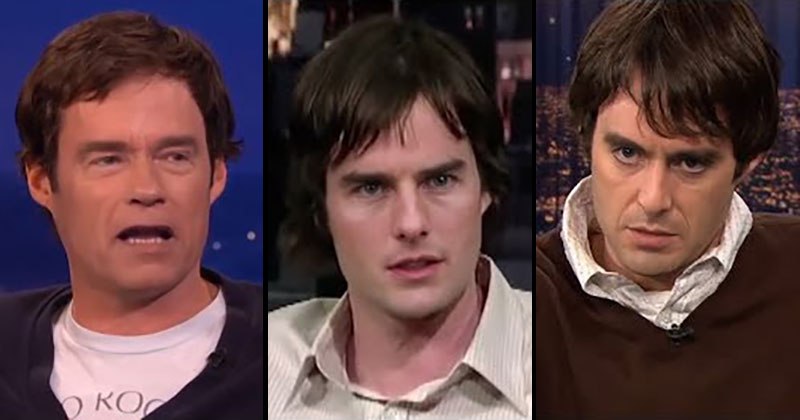

Many datasets have been assembled manually over the years for various image recognition tasks. With the rise of deepfakes, datasets containing the faces of famous people, who are the most likely targets of such attacks, can be of great use.

Here are a few:

- Microsoft’s 1 million dataset: The 1 million celebrities are drawn from Freebase based on how frequently they occur on the web (that is, their popularity). Grounding the face recognition task in a knowledge base has many advantages. First, each person entity on Freebase is unique and clearly defined, with no ambiguity, making it possible to define such a large-scale face recognition task. Second, each entity naturally has multiple properties (e.g. gender, date of birth, occupation), providing rich and valuable information for data collection, cleaning, and multi-task learning.

- The ‘Celebrity Together’ dataset: The ‘Celebrity Together’ dataset has 194,000 images containing 546,000 faces in total, covering 2,622 labeled celebrities (the same identities as in the VGGFace dataset). 59% of the faces correspond to these 2,622 celebrities, and the remaining faces are treated as ‘unknown’ people. The images in this dataset were obtained using Google Image Search and verified by human annotation.

- The IMDB-WIKI dataset: Built from the list of the 100,000 most popular actors on the IMDb website, with the date of birth, name, gender and all related images collected from each person’s profile.

Forgery Localisation using LSTMs

This approach uses a high-confidence manipulation localisation architecture that combines resampling features, long short-term memory (LSTM) cells, and an encoder-decoder network to segment manipulated regions from non-manipulated ones. Resampling features capture artifacts such as JPEG quality loss, upsampling, downsampling, rotation, and shearing. By combining the encoder with the LSTM network, the architecture exploits larger receptive fields (spatial maps) and frequency-domain correlation to analyse the characteristics that distinguish manipulated regions from non-manipulated ones.

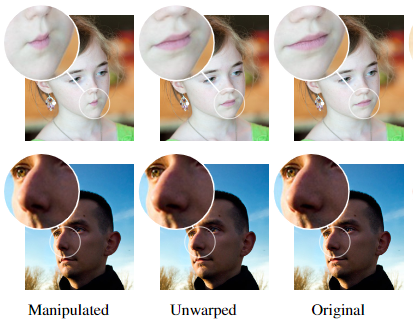
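To make the idea of a resampling artifact concrete, here is a minimal one-dimensional sketch. It is not the architecture described above. It is a toy illustration of a known property: linear upsampling leaves periodic traces, and those traces show up in the frequency domain. The helper names `upsample_2x` and `resampling_peak_score` are hypothetical, and random noise stands in for a row of pixel values.

```python
import numpy as np


def upsample_2x(signal):
    """Upsample a 1-D signal by a factor of 2 using linear interpolation."""
    n = len(signal)
    new_positions = np.arange(2 * n - 1) / 2.0
    return np.interp(new_positions, np.arange(n), signal)


def resampling_peak_score(signal):
    """Strongest spectral peak divided by the median spectral magnitude,
    measured on the absolute second difference of the signal.

    Linearly interpolated samples have a (near) zero second difference,
    so a resampled signal produces a periodic pattern and a sharp peak.
    """
    second_diff = np.abs(np.diff(signal, n=2))
    # Truncate to an even length so the spectrum has an exact Nyquist bin.
    second_diff = second_diff[: len(second_diff) // 2 * 2]
    second_diff = second_diff - second_diff.mean()  # remove the DC component
    spectrum = np.abs(np.fft.rfft(second_diff))
    return float(spectrum.max() / (np.median(spectrum) + 1e-12))


rng = np.random.default_rng(0)
original = rng.standard_normal(512)  # assumed stand-in for one row of pixels
resampled = upsample_2x(original)

print("original :", resampling_peak_score(original))
print("resampled:", resampling_peak_score(resampled))
```

The resampled signal should score noticeably higher than the original, because every second sample is an exact average of its neighbours. A real detector would compute features like this per image patch and pass them to a learned model rather than rely on a single threshold.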

Using Adobe Forensics

To keep malicious actors at bay, the Adobe Research team, in collaboration with UC Berkeley, developed a system to detect photoshopped faces. The Face-Aware Liquify tool in Photoshop is scripted directly to produce manipulated faces; the tool abstracts facial manipulations into high-level operations such as “increase nose width” and “decrease eye distance”.

Contributing To Facebook’s DFDC Challenge

To thwart the unwanted consequences of deepfakes, Facebook, the Partnership on AI, Microsoft, and academics from Cornell Tech, MIT, University of Oxford, UC Berkeley, University of Maryland, College Park, and University at Albany-SUNY are coming together to build the Deepfake Detection Challenge (DFDC).

Keeping An Eye On GAN Performance

Generative Adversarial Networks (GANs) have risen to prominence over the last few years. From deepfakes to generating faces of people who don’t exist, GANs have been put to some alarming uses. A number of metrics can be used to validate GANs and to track how realistic their output has become.
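One widely used GAN metric, picked here as an illustration rather than taken from this article, is the Fréchet Inception Distance (FID). It fits a Gaussian to feature vectors of real images and another to feature vectors of generated images, then measures the distance between the two. The sketch below assumes the features have already been extracted (in practice, typically from a pretrained Inception network). The function name `frechet_distance` is hypothetical, and random arrays stand in for the features.

```python
import numpy as np


def frechet_distance(feats_real, feats_fake):
    """Fréchet distance between Gaussians fitted to two feature sets.

    Each input has shape (num_samples, feature_dim).
    FID = ||mu_r - mu_f||^2 + Tr(C_r + C_f - 2 (C_r C_f)^(1/2))
    """
    mu_r = feats_real.mean(axis=0)
    mu_f = feats_fake.mean(axis=0)
    cov_r = np.cov(feats_real, rowvar=False)
    cov_f = np.cov(feats_fake, rowvar=False)

    # Tr((C_r C_f)^(1/2)) equals the sum of square roots of the eigenvalues
    # of C_r C_f, which are real and non-negative for covariance matrices.
    eigvals = np.linalg.eigvals(cov_r @ cov_f)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()

    diff = mu_r - mu_f
    return float(diff @ diff + np.trace(cov_r) + np.trace(cov_f) - 2.0 * trace_sqrt)


rng = np.random.default_rng(0)
real_feats = rng.standard_normal((500, 8))  # assumed placeholder features
fake_feats = real_feats + 3.0               # same spread, shifted mean

print("real vs real:", frechet_distance(real_feats, real_feats))
print("real vs fake:", frechet_distance(real_feats, fake_feats))
```

Lower values mean the generated distribution is closer to the real one. A score near zero on a strong generator is exactly the situation in which detection becomes harder, which is why such metrics are worth watching.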

Several promising new methods for spotting deepfakes and mitigating their harmful effects are emerging, including procedures for adding ‘digital fingerprints’ to video footage to help verify its authenticity. As with any complex problem, combating the negative impact of deepfakes needs a joint effort from the technical community, government agencies, media, platform companies, and online users.