AI is quickly changing the way human beings and the computers that accompany us perceive the world. As data collection becomes more comprehensive, controlled and regulated, organisations are tempted to experiment with AI to enhance user experiences. Whether the aim is a personalised app experience, accessibility features for people with disabilities, or simply entertainment, AI has taken its place as one of the catalysts of the next app revolution.

Join us as we take a deeper dive into the various ways AI inference is used on-device to enhance user experience.

Apple’s Face ID

Perhaps one of the most famous uses of AI in modern smartphones, Apple’s Face ID was released with the iPhone X. It performs facial recognition using infrared (IR) sensors to give iPhone users a secure way to log in. To supply the processing power this requires, Apple also built an on-board inference module, the Neural Engine in the A11 Bionic chip, raising the bar for smartphone AI processing.

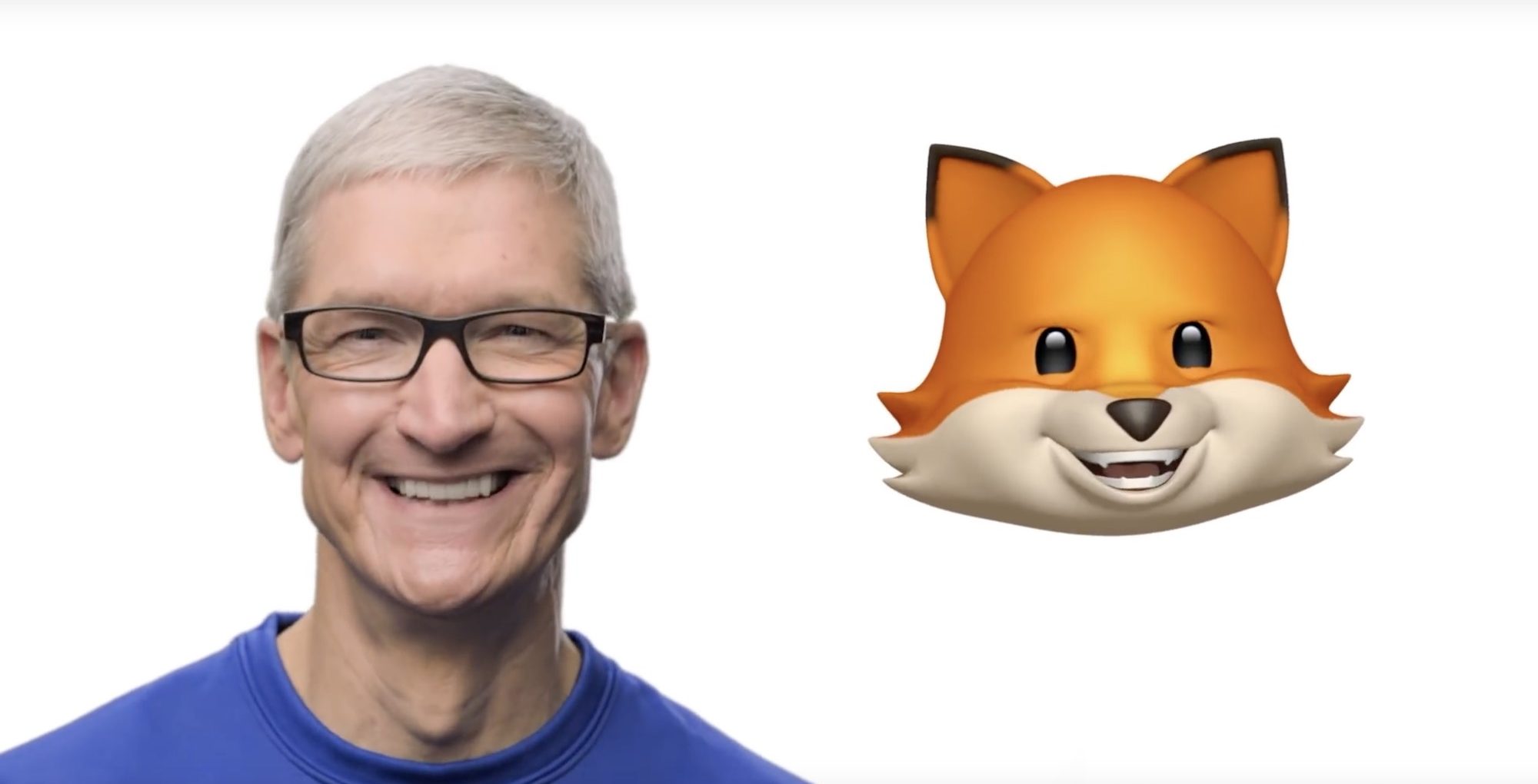

Animoji

Similar technology powers the Animoji feature on the ‘X’ series phones. It tracks points on the face using the same IR sensors and on-device inference. The feature maps the user’s facial features onto an animated emoji, so the user can control the animation’s expressions simply by making them with their own face.

Seeing AI by Microsoft

This application, published by Microsoft, is meant for users who are blind or have low vision. It uses image recognition to identify objects in the frame and reads aloud a description of what the camera is looking at. This is characteristic of Microsoft, which runs an initiative known as AI for Good that includes developing applications for people with accessibility needs.

Google Assistant

Even as other companies innovate with AI apps, Google remains at the top of the game. Its dedication to AI features on phones shows in the continual improvement of Google Assistant, to the point where it can answer calls on the user’s behalf and speak like a human. The Assistant offers a host of other features and relies on on-device inference to answer the user’s spoken queries.

Gmail

Gmail, Google’s long-running email client and one of its crown jewels, recently got a fresh coat of paint in a revamp focused on AI features. Google is using its vast troves of data to enhance user experience. It uses ML not only for complex screening and categorisation of emails, but also for a predictive text engine. This engine powers the Smart Compose feature, which suggests text as the user types so that emails and replies can be written quickly.
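To make the idea of a predictive text engine concrete, here is a minimal sketch that suggests the next word from simple word-pair counts. It is a toy illustration only, not how Gmail works: production systems rely on far larger neural language models, and the function names and the tiny training corpus below are invented for this example.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for each word, which words follow it in the training text."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def suggest_next(following, text, k=1):
    """Return up to k of the most frequent followers of the last word typed."""
    words = text.lower().split()
    if not words:
        return []
    candidates = following.get(words[-1])
    if not candidates:
        return []
    return [word for word, _ in candidates.most_common(k)]


# Hypothetical training data; a real system would learn from far more text.
corpus = [
    "thank you for your email",
    "thank you for the update",
    "thank you for your time",
    "see you on monday",
]
model = train_bigrams(corpus)
print(suggest_next(model, "Thank you for"))  # ['your']
```

Here "your" follows "for" twice in the sample corpus and "the" only once, so "your" is the top suggestion. An unseen word yields no suggestion at all.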

Google Lens

Google Lens is an image recognition product that integrates tightly with Google’s web search services: the Lens app scans a product, identifies it, and returns Google search results for it. This makes it similar to Google’s reverse image search, except that it works in real time and has more cognitive features.

AR Face Masks

AR Face Masks were all the rage when Snapchat began implementing them in its app. These features, now also integrated into both Instagram and Facebook, track a user’s facial features and draw a mask over them. The result is a natural-looking picture, albeit with the face of a dog drawn on top. The technique has also been extended to automatically tracking elements in videos, allowing text to be pinned to a specific object.

AI-based Portrait Modes, Beauty Filters, and Camera Post-Processing

These features now appear on nearly every new phone and use on-device inference to enhance the quality of images taken by the camera. They include portrait mode and beauty mode filters, applied through facial recognition combined with artificial blurring and enhancement.
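The portrait-mode effect described above can be sketched in a few lines: blur the whole image, then keep the original pixels wherever a mask marks the subject. This is a simplified illustration under stated assumptions, not any vendor's pipeline. The image is a tiny grayscale grid, the blur is a plain 3x3 mean filter, and the subject mask is written by hand, whereas a phone would typically obtain it from a model or depth data.

```python
def box_blur(image):
    """Blur a grayscale image (a list of rows) with a 3x3 mean filter."""
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Average the pixel with its in-bounds neighbours.
            neighbours = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
            ]
            blurred[y][x] = sum(neighbours) / len(neighbours)
    return blurred


def portrait_blur(image, subject_mask):
    """Keep pixels where the mask is 1 sharp; use blurred pixels elsewhere."""
    blurred = box_blur(image)
    return [
        [
            image[y][x] if subject_mask[y][x] else blurred[y][x]
            for x in range(len(image[0]))
        ]
        for y in range(len(image))
    ]


# Hypothetical 4x4 image: a bright "subject" in the centre, dark background.
image = [
    [0, 0, 0, 0],
    [0, 100, 100, 0],
    [0, 100, 100, 0],
    [0, 0, 0, 0],
]
# Hand-written mask standing in for the output of a segmentation step.
mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
result = portrait_blur(image, mask)
print(result[1][1], result[0][0])  # 100 25.0
```

The subject pixel keeps its original value of 100, while the corner background pixel becomes the average of its four in-bounds neighbours.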

This list shows that AI and ML have entered our daily lives without our realising it. They are bringing convenience and personalisation to everyday tasks and aim to offload some of the work we would otherwise have to do ourselves.

Microsoft Office 365

Microsoft’s newest Office offering integrates AI at its core in Word, PowerPoint and Excel. Word uses AI for grammar checking and smart formatting tools. PowerPoint uses AI to theme presentations, and Excel can import photos as editable tables using image recognition. These features will help AI become more widely adopted in the workplace.