NVIDIA Develops Automatic Speech Recognition Model for Telugu

Telugu is one of the country’s most commonly spoken languages, with more than 75 million speakers in southern India. In the US, Telugu population was

Integrating AI to complement human intelligence in critical functions like sales and marketing is becoming a norm. Conversational AI has evolved from being a way

Facebook AI Research (FAIR) recently trained a single acoustic model for multiple languages with the aim of improving automatic speech recognition (ASR) performance on low-resource

Mozilla is building on its open-source initiatives and is continuously working on becoming a foundation for developers to innovate in the machine learning landscape. The firm

Speech recognition is the process of decoding human voices and is a part of machine learning. Organisations are implementing Automatic Speech Recognition (ASR) technology to

Last year, Apple witnessed several controversies regarding its speech recognition technology. To provide quality control in the company’s voice assistant Siri, Apple asked its contractors

Under the CC BY 4.0 license, Parakeet distinguishes itself through its extensive training on a vast dataset of 64,000 hours of audio.

NVIDIA’s Avatar Cloud Engine (ACE) update brings advanced animation and speech features to AI avatars, enabling realistic expressions and conversations.

At the annual conference of the International Speech Communication Association, Meta presented more than 20 papers primarily focusing on NLP

We’ve picked out the best of 20+ research papers Google will be presenting at the event

A critical first step towards supporting 1,000 languages.

The model’s encoder is pre-trained on a vast unlabeled multilingual dataset of 12 million hours that covers over 300 languages.

Researchers are making steady progress in speech separation and recognition using a variety of methods, but the biggest challenge remains inferring sounds as separate sources of speech rather than as a single speaker.

The company’s open-sourced models and inference code serve as a foundation for building useful applications and boost further research on robust speech processing.

The Speech-to-Text API is available in all Google Cloud regions and can be accessed by all GCP users.

XLS-R substantively improves upon previous multilingual models by training on nearly ten times more public data in more than twice as many languages.

Facial recognition technology is being leveraged way beyond unlocking our phones; it is aiming to identify every person on the planet, for good or bad.

AI-assisted cross-lingual conversation is a challenging problem. To this end, Google introduced Translatotron in 2019.

Speech-to-speech translation can aid communication between people who speak different languages.

Google claims the revised version can successfully transfer voice even when the input speech consists of multiple speakers.

Last year, Facebook open-sourced Graph Transformer Networks (GTN), a framework for automatic differentiation with weighted finite-state transducers (WFSTs). To put things in perspective,

Article is about Pitch Recognition, aka Pitch Estimation.

Facebook AI Research (FAIR) has published a research paper introducing Hidden Unit BERT (HuBERT), their latest approach for learning self-supervised speech representations. According to FAIR,

Ahmedabad-based VSpeech.ai was founded in 2015. The startup sensed an opportunity while working with Interactive Voice Response (IVR) call centres, and soon pivoted to IVR

Recent advances in computer vision, pattern recognition, and signal processing have led to a budding curiosity in automating the challenging task of lip reading. Visual

The LibriSpeech dataset, listed as SLR12 on OpenSLR, is a corpus of audio recordings of read English speech.

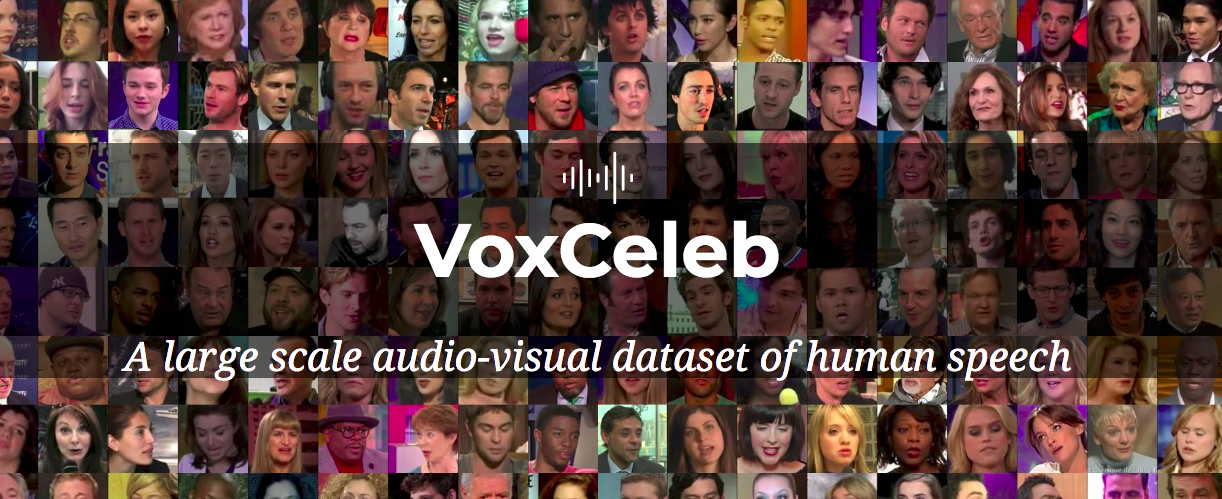

Guide To VoxCeleb Datasets For Visual-Audio of Human Speech.

Recently, researchers from UC Berkeley introduced a new AI model that can convert silently mouthed words to audible speech. The task of digitally voicing silent

Gnani.ai, a conversational AI startup, has announced the launch of a new integrated speech recognition based solution for the Indian Armed Forces. According to the

Deep Learning DevCon 2020 or DLDC 2020 is another conference of the year that is hosted in partnership with Analytics India Magazine. Scheduled for 29th

© Analytics India Magazine Pvt Ltd & AIM Media House LLC 2024
