Meta’s Answer to the Missing Link of Self-Supervised Learning

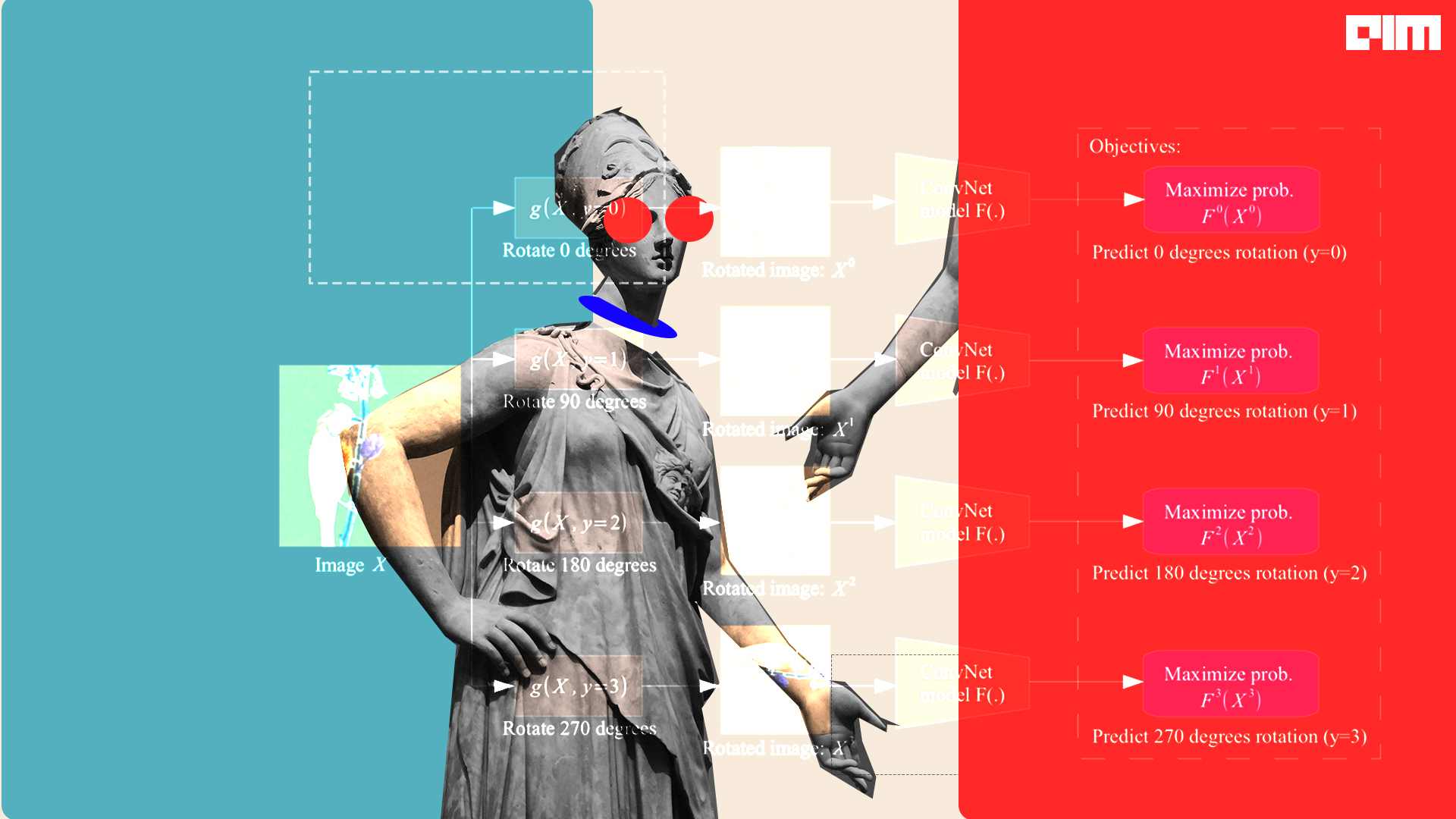

data2vec 2.0 is a self-supervised algorithm that works across three different modalities—speech, vision, and text.

Data scarcity is seen as a major bottleneck to AI progress, but MetaAI’s Yann LeCun thinks otherwise.

Like reinforcement learning, self-supervised or unsupervised learning also saw a number of new models in 2022. Here are a few of them.

In contrast to collapse in contrastive methods, FAIR found that non-contrastive methods suffer from a lesser collapse problem of a different nature.

Graph structures carry a lot of additional information, such as node attributes and node label information. This information opens up unprecedented opportunities to design advanced self-supervised pretext tasks.

Combining self-supervised learning with contrastive learning gives contrastive self-supervised learning, which is itself a form of self-supervised learning.

In the last few years, we have seen that self-supervised learning methods are emerging rapidly. It can also be noticed that models using self-supervised learning

Facebook believes that self-supervision is one step on the path to human-level intelligence.

Anomaly detection is used to detect fraud, identify intrusions, and spot ecosystem disturbances.

Over time, scientists have introduced several techniques that offer the best of both.

Did you know that almost 90% of the world’s data has been created in the last two years alone, and nearly 2.5 quintillion bytes of

Self-supervised learning is gathering steam, slowly but surely. A relatively new technique, self-supervised learning is nothing but training on unlabeled data without human supervision. Yann LeCun

Barlow Twins is a novel architecture inspired by the redundancy reduction principle in the work of neuroscientist H. Barlow.

VISSL is a computer VIsion library for state-of-the-art Self-Supervised Learning research. This framework is based on PyTorch. The key idea of this library is to

Facebook AI Research (FAIR) has published a research paper introducing Hidden Unit BERT (HuBERT), their latest approach for learning self-supervised speech representations. According to FAIR,

SelfTime is a state-of-the-art time series framework built on finding inter-sample and intra-temporal relations.

Meta AI successfully trained a visual transformer model with 632M parameters utilising 16 A100 GPUs within a span of 72 hours

The model is open source and is pre-trained on 142 million images in self-supervised fashion without any labels

It is important but not the only technique we need to create intelligent systems, said Kohli, DeepMind’s Head of Research (AI for Science).

Yann LeCun said that though RL is inevitable in machine learning, the purpose behind incorporating it in algorithms should be to eventually minimise its use.

LeCun is clearly at odds with reinforcement learning and believes it is not the way forward for AI with common sense.

The best of everything and anything released in ML!

Hey ML enthusiasts, check out this list of algorithms that you can explore.

While there are various practical applications of reinforcement learning, the concept as a whole poses some limitations when used in developing autonomous machine intelligence

Graph contrastive learning aims to learn graph representations with the help of contrastive learning.

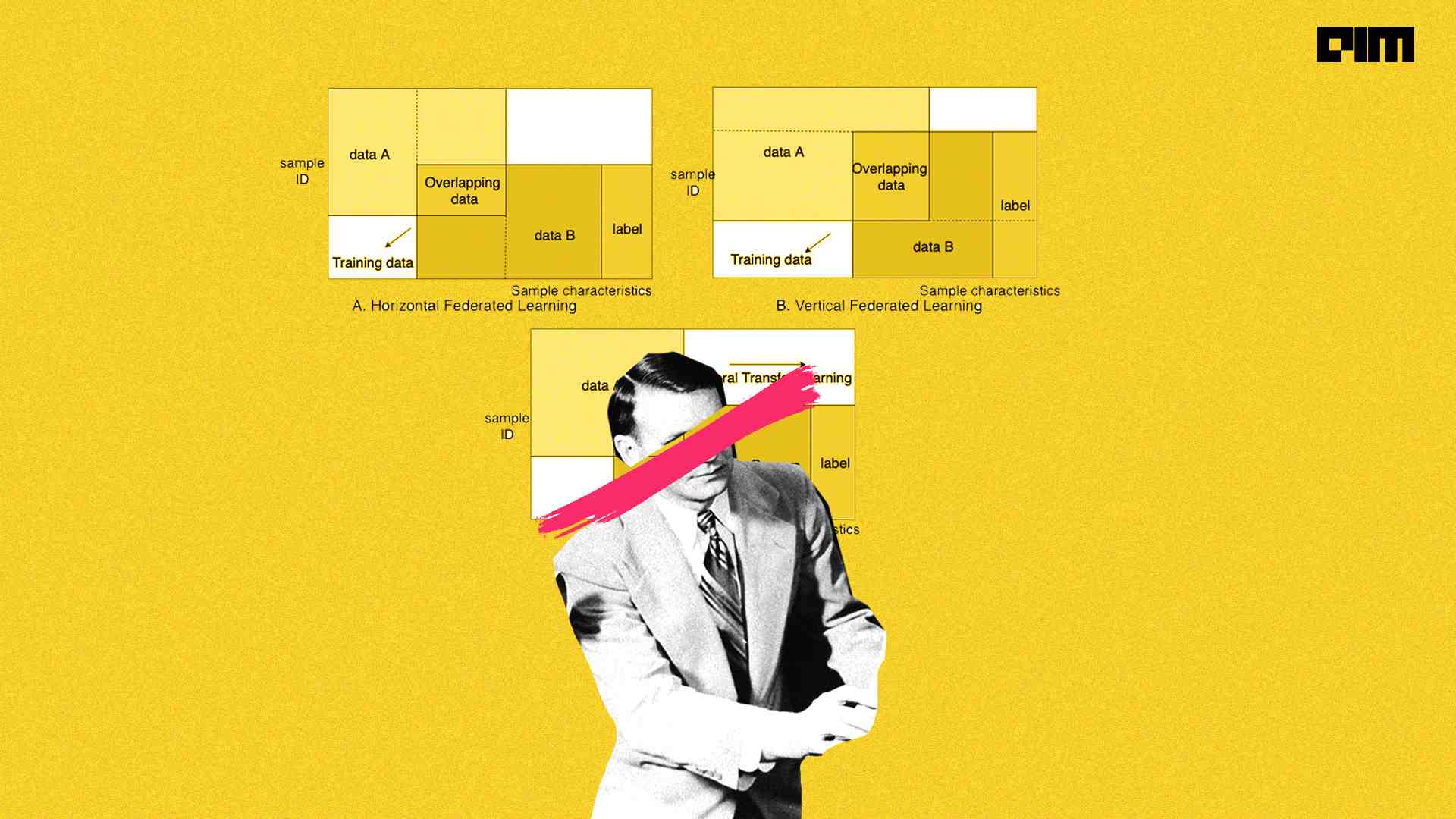

Federated learning enables smarter models, lower latency, and less power consumption, all while ensuring privacy.

As popular as it may be, RL does not come without its challenges. Analytics India Magazine has noted some common RL challenges and ways to overcome them.

They have applied it separately to speech, text and images where it outperformed the previous best single-purpose algorithms for computer vision and speech.

In self-supervised learning, the main focus is on making the data usable by downstream algorithms. When self-supervised learning is used to prepare the data specifically for classification, the process can be called self-supervised classification.

Unsupervised deep learning research indicates that the brain divides faces into semantically relevant characteristics like age.

© Analytics India Magazine Pvt Ltd & AIM Media House LLC 2024
