In machine learning, bias is the inability of an algorithm to capture the true relationship between the data and the function it is trying to learn. Bias is like racism in our society: it favours a certain kind and ignores others. Bias can be introduced at various phases of a model’s development, through insufficient data, inconsistent data collection, or poor data practices. This article discusses the different kinds of bias and the ways in which bias can be mitigated in a machine learning algorithm. The following topics are covered.

Table of contents

- Reason for Bias

- Types of Bias

- Mitigation techniques

Bias can’t be eliminated completely, but it can be reduced to a level at which bias and variance are in balance. Let’s start by understanding why bias occurs in the first place.

Reason for Bias

Bias occurs when a machine learning algorithm makes a large number of assumptions that are not compatible with the real-life problem it is used to solve. In effect, bias skews the result of an algorithm in favour of or against a particular outcome. A model with high bias fails to capture the true patterns in the data, which results in underfitting.
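
To make the link between overly strong assumptions and underfitting concrete, here is a minimal sketch. The data is a hypothetical toy set in which the target depends on the input quadratically, and the helper names `fit_line` and `mean_squared_error` are illustrative only. A straight-line model assumes a linear relationship, so it cannot capture the pattern however it is fitted.

```python
# Hypothetical toy data: y depends on x quadratically, with no noise.
xs = [x / 10 for x in range(-30, 31)]
ys = [x * x for x in xs]

def fit_line(xs, ys):
    """Ordinary least squares for y = a * x + b (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b

def mean_squared_error(preds, ys):
    return sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(ys)

# High-bias model: assumes the relationship is a straight line.
a, b = fit_line(xs, ys)
line_mse = mean_squared_error([a * x + b for x in xs], ys)

# Correctly specified model for this toy data: y = x^2.
quad_mse = mean_squared_error([x * x for x in xs], ys)

print(f"slope={a:.3f}, intercept={b:.3f}")
print(f"line MSE={line_mse:.3f}, quadratic MSE={quad_mse:.3f}")
```

The fitted line ends up nearly flat and its error stays large even on the training data, which is the signature of underfitting caused by a wrong assumption rather than by a lack of data.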

Biases are typically unintentional, but their existence can have a substantial influence on machine learning systems, and the outcomes can be disastrous, ranging from terrible customer experiences to fatal misdiagnoses. If the machine learning pipeline contains inherent biases, the algorithm will not only learn them but will also make worse predictions. When creating a new machine learning model, it is vital to identify, assess, and mitigate any biases that may influence the predictions. The explanation above is presented in the form of a chart for better understanding.

Types of Bias

Bias may be a human problem, but the amplification of bias is a technical problem, a mathematically explainable and controllable byproduct of the way models are trained. Bias occurs at different stages of the machine learning process. These commonly known biases are listed below:

- Sample bias occurs when the data collected is not representative of the environment in which the program is expected to run. No algorithm can be trained on all the data in the universe; instead, it has to be trained on a carefully chosen subset.

- Exclusion bias occurs when some feature(s) are excluded from the dataset, usually during data wrangling. When there is a very large amount of data, say petabytes, choosing a small sample for training is often the practical option, but in doing so features might be accidentally left out, resulting in a biased sample. Exclusion bias can also arise from removing duplicates from the sample.

- Experimenter or observer bias occurs while gathering data. The experimenter or observer might record only certain instances and skip others. The skipped instances could have been useful to the learner, which instead learns only from instances that give a skewed picture of the environment. Thus a biased learner is built.

- Measurement bias is the result of recording data inaccurately. For example, suppose an insurance company records customers’ weights for health insurance using a faulty weighing machine, and the fault goes unnoticed. The learner would then classify customers into the wrong categories.

- Prejudice bias is the result of human cultural differences and stereotyping. When this prejudiced data is fed to the learner, it applies the same stereotyping that exists in real life.

- Algorithm bias refers to properties of an algorithm, such as certain parameters or design choices, that cause it to produce unfair or subjective outcomes, favouring one person or thing over another. For example, suppose an algorithm decides whether to approve credit card applications and the data it is fed includes the applicant’s gender. On this basis, the algorithm might infer that women earn less than men and therefore reject women’s applications.
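
Some of the biases listed above can be checked for before training. As a rough sketch for sample bias, the snippet below compares each group’s share of a collected sample with its share of the population the model will serve. The group names, the numbers, and the helper `representation_gap` are all hypothetical and chosen only for illustration.

```python
from collections import Counter

def representation_gap(sample_groups, population_shares):
    """Return sample share minus expected population share for each group."""
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in population_shares.items()
    }

# Hypothetical numbers: the deployment population is split 50/50 between
# urban and rural customers, but the collected sample is 90% urban.
population_shares = {"urban": 0.5, "rural": 0.5}
sample = ["urban"] * 90 + ["rural"] * 10

gaps = representation_gap(sample, population_shares)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
```

A large positive or negative gap for a group is a hint that the sample over-represents or under-represents it relative to the environment in which the model will be used.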

In general, bias is added to the learner either implicitly (unconsciously) or explicitly (consciously), but either way the result is biased. Let’s look at how bias can be mitigated to get a fairer result from the learner.

Mitigation techniques

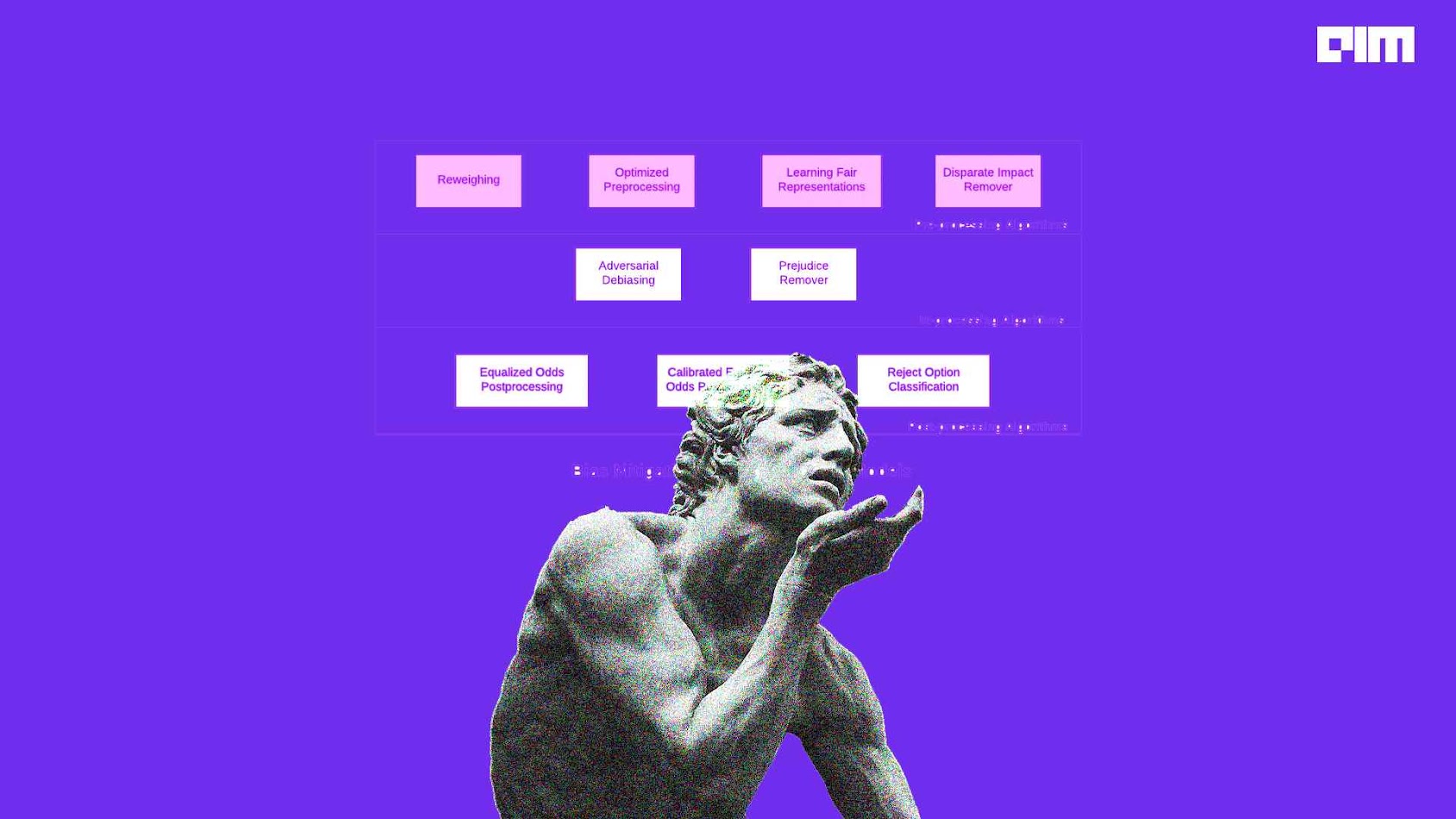

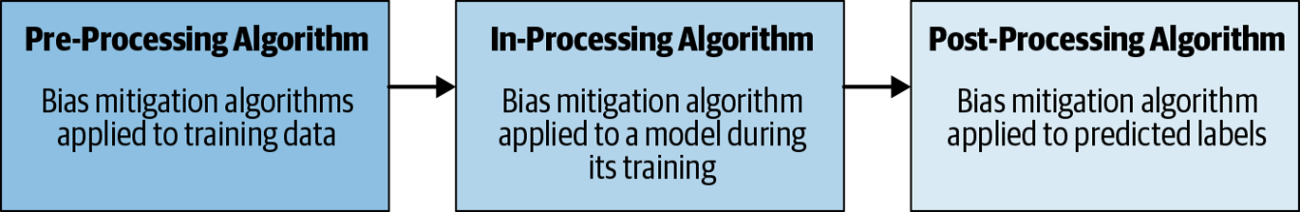

Bias mitigation algorithms are categorized by where in the machine learning pipeline they are deployed. A pictorial representation accompanies this section. Pre-processing algorithms can be used when you are able to modify the training data. In-processing algorithms change the learning procedure of a machine learning model. If you cannot modify the training data or the learning algorithm, you need to use post-processing algorithms.

Pre-Processing bias mitigation

Pre-processing bias mitigation starts with the training data, which is used in the first phase of the AI development process and is often where underlying bias is introduced. Analysing the performance of a model trained on this data may reveal disparate impact (e.g., a specific gender being more or less likely to get car insurance). This can be considered in terms of harmful bias (e.g., women who crash their vehicles still being offered low-cost insurance) or in terms of fairness (e.g., making sure that customers get insurance regardless of their gender).
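
Disparate impact is commonly summarized as the ratio between the favourable-outcome rates of two groups. The sketch below computes that ratio on made-up insurance approvals. The data, the group labels "F" and "M", and the function names `selection_rate` and `disparate_impact` are hypothetical.

```python
def selection_rate(outcomes):
    """Fraction of favourable outcomes, where 1 = favourable and 0 = not."""
    return sum(outcomes) / len(outcomes)

def disparate_impact(outcomes, groups, unprivileged, privileged):
    """Favourable-outcome rate of the unprivileged group divided by that of
    the privileged group. A value of 1.0 means both groups are treated alike."""
    unpriv = [o for o, g in zip(outcomes, groups) if g == unprivileged]
    priv = [o for o, g in zip(outcomes, groups) if g == privileged]
    return selection_rate(unpriv) / selection_rate(priv)

# Hypothetical approvals: 3 of 10 women and 6 of 10 men are approved.
groups = ["F"] * 10 + ["M"] * 10
outcomes = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0] + [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]

di = disparate_impact(outcomes, groups, unprivileged="F", privileged="M")
print(f"disparate impact ratio: {di:.2f}")
```

A ratio well below 1 suggests that the unprivileged group receives the favourable outcome noticeably less often. A commonly cited rule of thumb treats values under 0.8 as a warning sign, although the right threshold depends on the application.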

Negative outcomes are likely to arise when the teams responsible for building and implementing the technology lack diversity at the training data stage. The way data is used to train the learner moulds the results. If the team eliminates a feature that is in fact important for the learner, the results will be biased.
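
As one illustration of what modifying the training data can look like, the sketch below uses reweighing, a pre-processing technique that this article does not name and that is shown here only as an example. Each training example gets a weight so that, after weighting, group membership and label look statistically independent. The data and the function name `reweighing_weights` are hypothetical.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight for each example: P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    pair_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * pair_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]

def weighted_rate(groups, labels, weights, group):
    """Weighted share of favourable labels (1) within one group."""
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(w for y, w in rows if y == 1) / sum(w for _, w in rows)

# Hypothetical training set: 4 of 6 men but only 1 of 4 women have the
# favourable label.
groups = ["M"] * 6 + ["F"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_m = weighted_rate(groups, labels, weights, "M")
rate_f = weighted_rate(groups, labels, weights, "F")
print(f"weighted favourable rate, M: {rate_m:.2f}, F: {rate_f:.2f}")
```

The weights can then be passed to any learner that accepts per-example weights. The features and labels themselves are left untouched, which is one reason this kind of approach is convenient when the raw data must be kept as it is.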

In-processing bias mitigation

In-processing techniques offer unique opportunities for increasing fairness and reducing bias while a machine learning model is being trained. Consider, for example, a bank attempting to calculate a customer’s “ability to repay” before approving a loan. The AI system may predict that ability from sensitive variables such as race or gender, or from proxy variables that correlate with them. This can be addressed with adversarial debiasing and the prejudice remover.

- Adversarial debiasing trains a classifier to maximize prediction accuracy while simultaneously reducing an adversary’s ability to determine the protected attribute from the predictions. This leads to a fairer classifier, since the predictions carry no group information that the adversary could exploit. Essentially, the goal is to “break the system” and get it to do something that it may not want to do, as a counter-reaction to how negative biases impact the process.

- Prejudice remover adds a discrimination-aware regularization term to the learning objective.
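
The idea of a discrimination-aware regularization term can be sketched with a small logistic regression trained by gradient descent. This is a simplified stand-in, not the regularizer of any particular published method: the penalty here is just the squared gap between the mean predicted scores of two groups, scaled by a hypothetical strength parameter `eta`. The data, function names, and hyperparameters are all assumptions made for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def group_mean(values, groups, group):
    picked = [v for v, g in zip(values, groups) if g == group]
    return sum(picked) / len(picked)

def parity_gap(w, b, xs, groups):
    """Mean predicted score of group 1 minus mean predicted score of group 0."""
    ps = [sigmoid(w * x + b) for x in xs]
    return group_mean(ps, groups, 1) - group_mean(ps, groups, 0)

def train(xs, ys, groups, eta, lr=0.05, steps=5000):
    """Minimize mean log loss + eta * (parity gap)^2 by gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        ps = [sigmoid(w * x + b) for x in xs]
        # Gradient of the mean log loss.
        grad_w = sum((p - y) * x for p, y, x in zip(ps, ys, xs)) / n
        grad_b = sum(p - y for p, y in zip(ps, ys)) / n
        # Gradient of the fairness penalty eta * gap^2.
        gap = group_mean(ps, groups, 1) - group_mean(ps, groups, 0)
        dps_dw = [p * (1 - p) * x for p, x in zip(ps, xs)]
        dps_db = [p * (1 - p) for p in ps]
        dgap_dw = group_mean(dps_dw, groups, 1) - group_mean(dps_dw, groups, 0)
        dgap_db = group_mean(dps_db, groups, 1) - group_mean(dps_db, groups, 0)
        grad_w += eta * 2 * gap * dgap_dw
        grad_b += eta * 2 * gap * dgap_db
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical data: the single feature x is correlated with group membership.
xs = [-2.0, -1.5, -1.0, -0.5, 0.0, 0.5, -0.5, 0.0, 0.5, 1.0, 1.5, 2.0]
ys = [0, 0, 0, 1, 0, 1, 0, 1, 0, 1, 1, 1]
groups = [0] * 6 + [1] * 6

w_plain, b_plain = train(xs, ys, groups, eta=0.0)
w_fair, b_fair = train(xs, ys, groups, eta=10.0)
gap_plain = parity_gap(w_plain, b_plain, xs, groups)
gap_fair = parity_gap(w_fair, b_fair, xs, groups)
print(f"gap without penalty: {gap_plain:.3f}, with penalty: {gap_fair:.3f}")
```

Raising `eta` trades predictive fit for a smaller gap between the groups, so in practice the value would have to be tuned against both accuracy and the chosen fairness measure.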

Post-processing bias mitigation

Post-processing mitigation is useful when the model has already been trained and you now want to mitigate bias in its predictions. This can be achieved by using:

- Equalized odds post-processing solves a linear program to find the probabilities with which to change output labels so that equalized odds is optimized.

- Calibrated equalized odds post-processing optimizes over calibrated classifier score outputs to find the probabilities with which to change output labels, with an equalized odds objective.

- Reject option classification gives favourable outcomes to unprivileged groups (those the model is biased against) and unfavourable outcomes to privileged groups within a confidence band around the decision boundary, where uncertainty is highest.
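
Of the three techniques above, reject option classification is the simplest to sketch. The snippet below is a minimal illustration, assuming the trained model outputs a score between 0 and 1. The function name, the threshold of 0.5, the band half-width `margin`, and the data are hypothetical.

```python
def reject_option_classify(scores, groups, unprivileged, threshold=0.5, margin=0.1):
    """Relabel predictions that fall inside the uncertain band around the
    decision threshold; leave confident predictions unchanged."""
    labels = []
    for score, group in zip(scores, groups):
        if abs(score - threshold) <= margin:
            # Inside the band: favourable label (1) for the unprivileged
            # group, unfavourable label (0) for everyone else.
            labels.append(1 if group == unprivileged else 0)
        else:
            # Outside the band: keep the model's own decision.
            labels.append(1 if score >= threshold else 0)
    return labels

# Hypothetical scores from an already trained model; group "B" is unprivileged.
scores = [0.90, 0.55, 0.45, 0.20, 0.58, 0.42]
groups = ["A", "A", "B", "B", "B", "A"]

adjusted = reject_option_classify(scores, groups, unprivileged="B")
print(adjusted)
```

Only the predictions the model is least sure about are changed, which limits the effect on accuracy. The width of the band controls how strongly the outputs are adjusted.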

However, adjusting outputs can change accuracy. For instance, if an algorithm that sorts applicants’ resumes is made to sort for equal gender representation rather than purely on relevant skill sets (sometimes referred to as positive bias or affirmative action), this might result in hiring fewer qualified men. This affects the accuracy of the model, but it achieves the desired goal.

Final Verdict

Ultimately, there is no way to completely eradicate bias from an algorithm, but it can be mitigated with the help of the techniques discussed in this article. This helps to build a machine learner in which bias is kept in balance.