Optical character recognition (OCR) is a form of image conversion that extracts text from an image, such as a photo of a document. Various tools have been developed to aid with this process, from applications such as Adobe Acrobat to ML-based engines such as Tesseract OCR. In this article, we will go over the tasks performed in an OCR pipeline. Thereafter, we will look into MMOCR, a Python-based toolbox that brings these OCR-related operations together. The major points to be discussed in this article are listed below.

Table of contents

- Text detection

- Text recognition

- How MMOCR combines all of the above

Let’s first discuss text detection.

Text detection

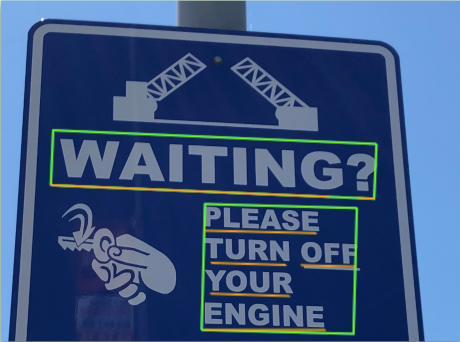

Text detection is the technique of locating text in a picture and then enclosing it with a rectangular bounding box. Text can be detected using image-based, frequency-based, or region-based algorithms.

Image-based approaches segment an image into several regions. Each region is made up of connected pixels that share comparable properties. The statistical features of these connected components are used to group them and shape the text candidates. Machine learning techniques such as support vector machines and convolutional neural networks are then used to classify the components as text or non-text. An example of text detection is shown in the accompanying image.
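To make the connected-component idea concrete, here is a minimal sketch in plain Python. It assumes the image has already been binarized into a grid of 0s and 1s (1 meaning a foreground pixel), and it uses 4-connectivity; the function name and the toy grid are made up for illustration. A real detector would go on to compute features for each component and pass them to a classifier.

```python
from collections import deque

def connected_components(grid):
    """Find 4-connected foreground regions and return their bounding boxes.

    grid is a list of rows of 0/1 values.
    Each box is (top, left, bottom, right), with inclusive coordinates.
    """
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] == 0 or seen[r][c]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            top, left, bottom, right = r, c, r, c
            while queue:
                y, x = queue.popleft()
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and grid[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((top, left, bottom, right))
    return boxes

# Toy binary image with three separate strokes.
toy_image = [
    [1, 1, 0, 0, 0, 1],
    [1, 0, 0, 0, 0, 1],
    [1, 1, 0, 1, 0, 1],
    [0, 0, 0, 1, 0, 0],
]
print(connected_components(toy_image))
```

Each returned box is a candidate component; deciding whether it is text or non-text is the job of the classifier described above.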

In frequency-based approaches, the high-frequency coefficients are extracted using the discrete Fourier transform (DFT) or the discrete wavelet transform (DWT). The text in an image is assumed to have high-frequency components, so keeping only the high-frequency coefficients separates the text from the non-text regions.

Region-based approaches rely on the fact that the text and non-text regions of an image have different textural qualities. They partition the image into small sections using sliding windows and search these sections for the presence of text using texture or morphological operations. Some techniques classify text and non-text using a 64 x 32-pixel window and the Modest AdaBoost classifier at 16 different spatial scales of the image, to account for substantial changes in text size.
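The windowing step can be sketched as follows. This is only an illustration of the scanning pattern, not of any particular published method: the stride of 16 pixels and the two scales are assumptions chosen to keep the output small (the technique mentioned above uses 16 scales), and the classifier that would score each window is left out.

```python
def sliding_windows(img_w, img_h, win_w=64, win_h=32, stride=16):
    """Yield (x, y, w, h) for every window position that fits inside the image."""
    for y in range(0, img_h - win_h + 1, stride):
        for x in range(0, img_w - win_w + 1, stride):
            yield (x, y, win_w, win_h)

def multiscale_windows(img_w, img_h, scales=(1.0, 0.5)):
    """Slide the fixed-size window over rescaled copies of the image.

    Boxes are mapped back to original-image pixels, so a smaller scale
    corresponds to a larger region (and larger text) in the original image.
    """
    for s in scales:
        scaled_w, scaled_h = int(img_w * s), int(img_h * s)
        for x, y, w, h in sliding_windows(scaled_w, scaled_h):
            yield (round(x / s), round(y / s), round(w / s), round(h / s))

boxes = list(multiscale_windows(128, 64))
print(len(boxes))
print(boxes[0], boxes[-1])
```

Keeping the window size fixed and rescaling the image means the same classifier can be reused at every scale.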

Text recognition

The text recognition stage transforms images of text into a string of characters or words. Words are an elementary unit that humans use for visual recognition, so converting images of text into words is critical.

Character recognition and word recognition are two different approaches to recognition. Character recognition algorithms split a text image into several single-character cutouts, so reliably separating adjacent characters is crucial for these strategies.
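One classic way to obtain single-character cutouts is a vertical projection profile: count the ink in every column and cut wherever a column is empty. The sketch below assumes a binarized text line (1 meaning ink) in which characters do not touch; the function name and the toy data are illustrative only.

```python
def segment_characters(binary):
    """Split a binarized text line into column ranges, one per character.

    binary is a list of rows of 0/1 values (1 = ink).
    Returns (start, end) column pairs, with end exclusive.
    """
    cols = len(binary[0])
    # Ink count per column; columns with no ink are treated as gaps.
    profile = [sum(row[c] for row in binary) for c in range(cols)]
    spans, start = [], None
    for c, ink in enumerate(profile):
        if ink and start is None:
            start = c                      # a character begins
        elif not ink and start is not None:
            spans.append((start, c))       # a character ends at the gap
            start = None
    if start is not None:
        spans.append((start, cols))        # character touching the right edge
    return spans

line = [
    [1, 1, 0, 1, 0, 0, 1, 1],
    [1, 0, 0, 1, 0, 0, 1, 0],
    [1, 1, 0, 1, 0, 0, 1, 1],
]
print(segment_characters(line))
```

Touching or overlapping characters break this simple rule, which is one reason character separation is so critical for these strategies.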

In one such recognition process, an OCR module performs the character recognition: the image is first segmented into k classes, then binary text image hypotheses are generated and passed through connected components analysis and a greyscale consistency constraint module before being fed to the OCR module.

A classifier based on a support vector machine (SVM) can be used for character recognition, since SVMs handle multi-class classification well, typically through one-vs-one or one-vs-rest schemes.

Word recognition recognizes words from text images by combining character recognition outputs with language models or lexicons. This means it can be employed even when individual characters are degraded. Word recognition is a better approach than character recognition when the input photos contain only a restricted set of possible words.
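A minimal sketch of the lexicon idea: take the raw string produced by a character recognizer and snap it to the closest word in a small vocabulary, measured by edit distance. The lexicon and the noisy inputs are made up for illustration, and real systems usually combine this with character confidences or a language model.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete from a
                            curr[j - 1] + 1,      # insert into a
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]

def correct_with_lexicon(raw, lexicon):
    """Return the lexicon word closest to the raw character-level output."""
    return min(lexicon, key=lambda word: edit_distance(raw, word))

# Hypothetical receipt vocabulary and noisy character-recognition outputs.
lexicon = ["total", "cash", "change", "tax"]
print(correct_with_lexicon("t0tal", lexicon))
print(correct_with_lexicon("chanqe", lexicon))
```

The smaller the lexicon, the more reliably a degraded reading can be mapped to the intended word.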

How MMOCR combines all of the above

MMOCR stands for MultiMedia Optical Character Recognition. It is a Python-based toolbox that combines all the stages discussed above into a complete end-to-end solution in the OCR field.

MMOCR, in particular, offers a pipeline for text detection and recognition, as well as downstream tasks like named entity recognition and key information extraction. MMOCR supports 14 state-of-the-art algorithms, which is more than other open-source OCR projects such as Tesseract OCR.

The toolbox now includes seven text detection methods, five text recognition methods, one key information extraction method, and one named entity recognition method.

Let’s now see how to use this tool in practice. Running the script below installs all the dependencies required to run the tool. If you encounter any issues, refer to the official installation guide.

# install prebuilt PyTorch
!pip install torch==1.6.0 torchvision==0.7.0

# install mmcv (computer vision library)
!pip install mmcv-full -f https://download.openmmlab.com/mmcv/dist/cu101/torch1.6.0/index.html

# install mmdet and mmocr
!pip install mmdet
!git clone https://github.com/open-mmlab/mmocr.git
%cd mmocr
!pip install -r requirements.txt
!pip install -v -e .
!export PYTHONPATH=$(pwd):$PYTHONPATH

We’ll first perform text detection. For that, import the MMOCR class from the installed repository as below. When creating an instance of this class, we can choose which components to initialize, such as detection, recognition, and understanding.

from mmocr.utils.ocr import MMOCR

ocr = MMOCR(det='TextSnake', recog=None)

results = ocr.readtext('/content/street-sign-board-500x500.jpg', output='/content/street.jpg', export='/content/')

In the above call, we have set the path of the output image to the default Colab directory, together with the name of the output file. The detection result comes in the form of bounding boxes, as the output image shows.
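Text detectors often describe each region as a polygon rather than a rectangle. If you need the plain rectangular box described earlier, a small helper like the one below can convert one into the other. Note that the input layout used here (a flat list of x, y coordinates followed by a confidence score) is an assumption made for illustration, and the sample numbers are invented; inspect your own exported results to confirm their actual structure.

```python
def polygon_to_box(flat_polygon):
    """Convert [x1, y1, x2, y2, ...] into an axis-aligned (x_min, y_min, x_max, y_max) box."""
    xs = flat_polygon[0::2]
    ys = flat_polygon[1::2]
    return (min(xs), min(ys), max(xs), max(ys))

# Hypothetical detection: four polygon corners followed by a confidence score.
detection = [12.0, 40.5, 118.0, 36.0, 120.0, 70.0, 14.0, 74.5, 0.97]
*coords, score = detection

print(polygon_to_box(coords), score)
```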

Similarly, when combining detection and recognition, we need to initialize both det and recog inside the MMOCR class as below.

# detection+recognition

ocr = MMOCR(det='PS_CTW', recog='SAR')

# Inference

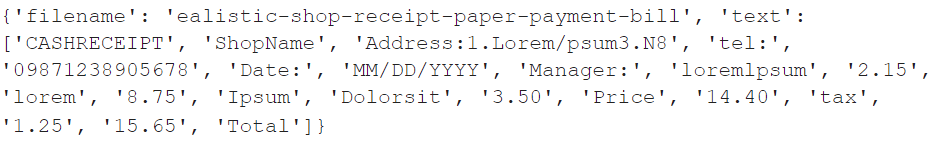

results = ocr.readtext('/content/ealistic-shop-receipt-paper-payment-bill.jpg',export='/content/',output='/content/bill.jpg', print_result=True)

Inside ocr.readtext we set print_result to True, which prints the result in JSON format. For this task we have used a bill receipt.

JSON result,

JPG result,

Final words

In this article, we have discussed text detection and recognition for a given image and briefly covered the methods used to address these tasks. For an end-to-end solution, we looked at MMOCR, a deep learning-based toolbox that brings all the OCR-related tasks together in a single framework.