During the last decade, technology has advanced tremendously, and analytics and statistics have played major roles in that progress. These techniques work with enormous datasets that are usually composed of many variables. For instance, real-world datasets for image processing, internet search engines, text analysis, etc. usually have high dimensionality. To handle such data, the dimensionality needs to be reduced while keeping the relevant information intact.

Dimensionality reduction is the process of converting high-dimensional data into a lower-dimensional representation while preserving as much of the relevant information as possible. It is often used as a pre-processing step for classification or other tasks.

Components

Dimensionality reduction has two main components:

Feature Selection: This technique selects the most relevant variables from the original dataset. It can be done in three ways: filter, wrapper and embedded methods.

Feature Extraction: This technique transforms high-dimensional data into a lower-dimensional space by deriving new variables from the original ones.

Importance In Machine Learning

The volume of data grows day by day, and its dimensionality often grows with it, which may result in overfitting. To overcome this issue, dimensionality reduction shrinks the feature space to a set of principal features. With the redundant variables removed, the data is easier for machine learning algorithms to analyze, and the algorithms produce results faster.

Steps Using Python

There are several techniques for implementing dimensionality reduction, such as:

- Backward Feature Elimination: In this technique, the selected classification algorithm is first trained on all n input features. Then one input feature is removed at a time and the same model is trained on the remaining n-1 features. The feature whose removal degrades performance the least is dropped, and the process repeats on the reduced set.

- Forward Feature Construction: This technique is the reverse of backward feature elimination. The iteration starts with a single feature and progresses by adding one feature at a time, keeping the feature that improves performance the most.

- Low Variance Filter: In this technique, the data columns with variance lower than a given threshold are removed, since columns whose values change very little carry little information.

- Factor Analysis: In this technique, the variables are grouped by their correlations: all the variables in a particular group have a high correlation among themselves but a low correlation with the variables in other groups.

- Principal Component Analysis: This technique extracts a new set of variables from an existing large set of variables.
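Two of the techniques above, the low variance filter and backward feature elimination, can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the data is synthetic, the helper names (`low_variance_filter`, `training_mse`, `backward_elimination`) are hypothetical, and an ordinary least-squares fit scored by training error stands in for the classification algorithm described above.

```python
import numpy as np


def low_variance_filter(X, threshold):
    """Return the indices of columns whose variance is at least `threshold`."""
    variances = X.var(axis=0)
    return [j for j, v in enumerate(variances) if v >= threshold]


def training_mse(X, y):
    """Fit least squares with an intercept and return the training MSE."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return float(np.mean((A @ coef - y) ** 2))


def backward_elimination(X, y, n_keep):
    """Drop one feature per round: the one whose removal hurts the error least."""
    kept = list(range(X.shape[1]))
    while len(kept) > n_keep:
        # Retrain with each remaining feature left out in turn.
        errors = {
            j: training_mse(X[:, [k for k in kept if k != j]], y) for j in kept
        }
        kept.remove(min(errors, key=errors.get))
    return kept


# Synthetic data (an assumption for illustration): only columns 0 and 1
# drive the target, and column 4 is nearly constant.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
X[:, 4] = 1.0 + 0.001 * rng.normal(size=200)
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + 0.1 * rng.normal(size=200)

high_variance_columns = low_variance_filter(X, threshold=0.01)
selected = backward_elimination(X[:, high_variance_columns], y, n_keep=2)
selected_columns = [high_variance_columns[j] for j in selected]
print(high_variance_columns, selected_columns)
```

In practice the error would normally be measured on held-out data rather than on the training set. Forward feature construction follows the same loop in reverse, starting from an empty set and adding the feature that lowers the error the most.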

In this article, we will try our hand at applying Principal Component Analysis (PCA) using Python. PCA is an efficient statistical method that applies an orthogonal transformation to the original dataset to produce a new set of variables, known as the principal components. It involves the steps listed below:

- Construct the covariance matrix of the data.

- Compute the eigenvectors of this matrix.

- Use the eigenvectors corresponding to the largest eigenvalues to reconstruct a large fraction of the variance of the original data.
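The three steps above can be written out directly with NumPy. The following is a minimal sketch on made-up toy data, not the implementation used by sklearn; the function name `pca_via_covariance` and the toy matrix are assumptions for illustration.

```python
import numpy as np


def pca_via_covariance(X, n_components):
    """Project X onto the eigenvectors with the largest eigenvalues."""
    X_centered = X - X.mean(axis=0)
    # Step 1: covariance matrix of the features (columns are variables).
    cov = np.cov(X_centered, rowvar=False)
    # Step 2: eigen-decomposition. eigh is meant for symmetric matrices
    # and returns the eigenvalues in ascending order.
    eigenvalues, eigenvectors = np.linalg.eigh(cov)
    # Step 3: keep the eigenvectors belonging to the largest eigenvalues.
    order = np.argsort(eigenvalues)[::-1][:n_components]
    components = eigenvectors[:, order]
    explained_ratio = eigenvalues[order] / eigenvalues.sum()
    return X_centered @ components, components, explained_ratio


# Hypothetical toy data: 3 features, the third almost a copy of the first.
rng = np.random.default_rng(42)
base = rng.normal(size=(100, 2))
X = np.column_stack(
    [base[:, 0], base[:, 1], base[:, 0] + 0.01 * rng.normal(size=100)]
)

X_reduced, components, explained_ratio = pca_via_covariance(X, n_components=2)
print(X_reduced.shape, explained_ratio)
```

Because the third feature is nearly redundant, two components should capture almost all of the variance in this toy example.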

We will import PCA from the sklearn library to implement PCA in Python. The dataset we are using is the Wine Recognition Data from UCI, which has 13 attributes and 3 classes. The dataset is available from the UCI Machine Learning Repository.

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing The Dataset

dataset = pd.read_csv('Wine.csv')

X = dataset.iloc[:, 0:13].values

y = dataset.iloc[:, 13].values

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X = sc.fit_transform(X)

# Importing PCA from sklearn

from sklearn.decomposition import PCA

pca = PCA(n_components = 13)

X = pca.fit_transform(X)

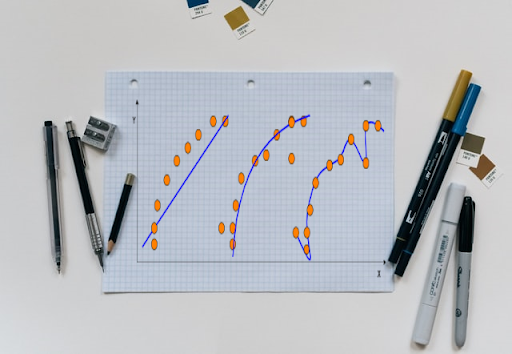

explained_variance = np.cumsum(pca.explained_variance_ratio_)

# Plotting the cumulative explained variance

plt.plot(explained_variance)

plt.title("PCA: Cumulative Explained Variance")

plt.xlabel("Components")

plt.ylabel("Cumulative Sum")

plt.show()

In the graph, the blue line represents the cumulative explained variance. For a deeper dive into PCA in Python, see the scikit-learn documentation.
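One common use of the cumulative curve is to pick how many components to keep for a target share of the variance. The sketch below shows the idea; the helper name `components_needed` and the ratio values are hypothetical and are not the results for the Wine data.

```python
import numpy as np


def components_needed(explained_variance_ratio, target):
    """Smallest number of leading components whose cumulative ratio reaches target."""
    cumulative = np.cumsum(explained_variance_ratio)
    reached = cumulative >= target
    if not reached.any():
        raise ValueError("target is not reached by the given ratios")
    # argmax returns the index of the first True; +1 turns it into a count.
    return int(np.argmax(reached)) + 1


# Hypothetical ratios for illustration only.
ratios = np.array([0.5, 0.3, 0.15, 0.05])
n_components = components_needed(ratios, target=0.9)
print(n_components)
```

With the fitted model from the code above, the same function could be called on `pca.explained_variance_ratio_`.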

Benefits:

- This technique reduces the storage space by compressing the data.

- An algorithm consumes less computational time after applying dimensionality reduction.

- This technique helps in removing redundant features.

Shortcomings:

- When the mean and covariance are not enough to describe a dataset, Principal Component Analysis tends to fail.

- PCA can only be applied to datasets with numeric variables.

- It may lead to some amount of data loss.