It has been 150 years since Charles Darwin claimed that facial expressions might be the key to understanding an animal’s emotions. The tools of his era did not allow the idea to be explored in depth. Today, with cameras and advanced genetic techniques, these facial expressions can be captured and analysed.

In a recent study led by neuroscientist Nadine Gogolla of the Max Planck Institute of Neurobiology in Martinsried, Germany, artificial intelligence (AI) was used to decode the facial expressions of laboratory mice. Gogolla and her team used a machine-learning (ML) algorithm to classify the expressions, which helped them pinpoint neurons in the mouse brain that encode specific expressions.

For the new study, Gogolla drew inspiration from a 2014 Cell paper written by neuroscientist David Anderson at the California Institute of Technology in Pasadena. Describing the research, Gogolla said that mice exhibit facial expressions that are specific to the underlying emotions. The findings are significant because they offer researchers new ways to measure the intensity of emotional responses, which could help them probe how emotions arise in the brain.

Facial Recognition

The researchers fixed the heads of the mice to keep them still, exposed them to different sensory stimuli to trigger expressions, and filmed their faces. To evoke the expressions, they gave the mice sweet and bitter substances to taste. They also delivered electric shocks to the animals’ tails and injected them with lithium chloride to bring out different emotions.

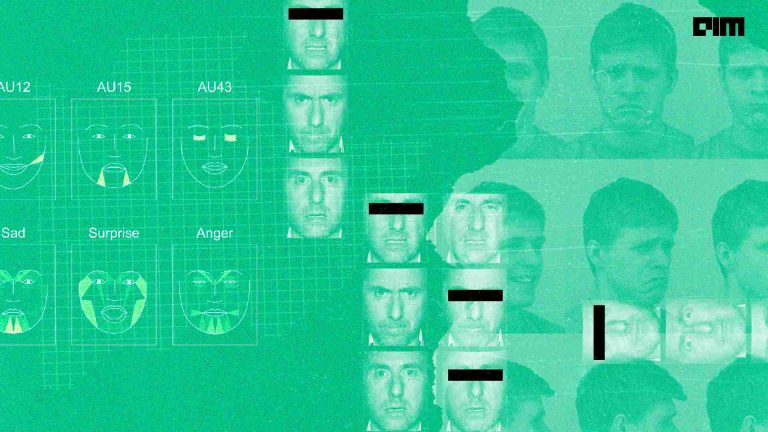

Once the facial expressions were recorded, the team turned to computer vision software to compare the captured images. The software revealed the differences between images taken before and after a stimulus. It also showed how the expressions of the mice differed between stimuli, such as an electric shock and sweet and bitter substances.
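The before-and-after comparison described above can be illustrated with a minimal sketch. It assumes grayscale frames stored as nested lists of pixel intensities and uses the mean absolute pixel difference as a change score. The article does not say which computer-vision method the team actually used, so the function `frame_difference` and the toy frames are hypothetical.

```python
def frame_difference(before, after):
    """Mean absolute per-pixel difference between two same-sized grayscale frames."""
    same_rows = len(before) == len(after)
    if not same_rows or any(len(b) != len(a) for b, a in zip(before, after)):
        raise ValueError("frames must have the same shape")
    total = 0
    count = 0
    for row_before, row_after in zip(before, after):
        for pixel_before, pixel_after in zip(row_before, row_after):
            total += abs(pixel_after - pixel_before)
            count += 1
    if count == 0:
        raise ValueError("frames must not be empty")
    return total / count


# Toy 2x3 frames with made-up intensities in the 0-255 range (illustrative only).
baseline = [[10, 10, 10], [20, 20, 20]]
after_stimulus = [[10, 40, 10], [20, 20, 50]]

score = frame_difference(baseline, after_stimulus)
print(score)
```

A larger score means the face changed more between the two frames; a score of zero means the frames are identical. A real pipeline would more likely compare image features than raw pixels.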

The researchers could see that the mice changed their expressions by moving their ears, cheeks, nose and the upper parts of the eyes, but they were not able to assign the expressions to particular emotions. To tell the expressions apart, the team broke the videos of the facial movements into ultra-short snapshots taken as the mice reacted to different stimuli.

Using Machine Learning

The team employed a machine-learning algorithm designed to recognize patterns and sort data into different groups. The algorithm categorized the facial expressions of the mice objectively and quantitatively on a millisecond timescale. It was first fed facial expressions labelled with the corresponding emotions. When it was then presented with unlabelled facial images, it predicted the emotions with 90% accuracy.
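The train-then-predict workflow described above can be sketched as follows. The article does not name the algorithm, so a simple nearest-centroid classifier stands in for it here. The two-number feature vectors, the emotion labels and every function name are hypothetical and serve only to show the shape of the procedure.

```python
import math
from collections import defaultdict


def train_centroids(features, labels):
    """Average the feature vectors of each labelled emotion into one centroid."""
    sums = {}
    counts = defaultdict(int)
    for vec, label in zip(features, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids, vec):
    """Return the label whose centroid is closest to the given feature vector."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))


def accuracy(centroids, features, labels):
    """Fraction of held-out examples whose predicted label matches the true one."""
    correct = sum(predict(centroids, v) == y for v, y in zip(features, labels))
    return correct / len(labels)


# Made-up features per face image, e.g. [ear position, nose position].
train_x = [[0.9, 0.1], [0.8, 0.2], [0.1, 0.9], [0.2, 0.8]]
train_y = ["pleasure", "pleasure", "disgust", "disgust"]

# Held-out images; the labels are used only to score the predictions.
test_x = [[0.7, 0.3], [0.3, 0.6]]
test_y = ["pleasure", "disgust"]

model = train_centroids(train_x, train_y)
predictions = [predict(model, v) for v in test_x]
print(predictions, accuracy(model, test_x, test_y))
```

Scoring on images the model never saw during training is what makes an accuracy figure like the reported 90% meaningful.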

The team also looked at different brain regions associated with emotions in the mice. They found that when they stimulated areas linked to a particular emotion, the mice displayed the corresponding facial expressions, which the machine-learning algorithm could tell apart.

End Result

In the next step, the researchers searched for brain cells that might help encode emotions in the brain. Using a technique called optogenetics, they targeted neuronal circuits in the mice that are known to be involved in specific emotions in humans and other animals. When the researchers stimulated these circuits directly, the mice assumed the relevant facial expressions.

Finally, the team used a technique called two-photon calcium imaging to identify which neurons in the mouse brain were activated when particular emotions and expressions were evoked. Gogolla says she is fascinated by the fact that mice have emotional states that they experience as feelings. Having led the study for three years, she wanted to see whether animal studies could reveal how such a state emerges in the brain.