
A few months ago, Alexa was declared dead. The company had pulled the plug on its ‘Amazon Alexa’ voice assistant, which had succumbed to huge operating losses.

But now the tide is turning. It looks like the unfaltering wave of AI will revive the almost-lost virtual assistant technology. The recently announced partnership between Hugging Face and AWS gives further confidence that Amazon has something up its sleeve to boost users’ conversational experience with Alexa.

Meanwhile, Amazon has also been hiring candidates with a background in dialogue systems or information retrieval for Alexa AI.

The success of ‘text-to-anything’ generation models like ChatGPT has people thinking that the natural next step in this evolution would be the introduction of multimodal neural networks.

A multimodal AI system can combine different kinds of input processing, such as natural language processing, computer vision, and speech recognition, to understand the context it is operating in and solve different tasks.

The “multimodal” vision was echoed by Amazon’s head of Alexa, Rohit Prasad, who referred to it as “ambient intelligence”. Prasad mentioned that, in addition to advancing Alexa’s voice AI, the company is focused on improving Alexa’s ability to process various sensory signals, including visuals, touch, ultrasound, and speech.

A new model in the works

Against the tide of making models bigger and more general, Amazon Alexa AI researchers have demonstrated that a lot can be achieved more efficiently with models that have fewer parameters. As one of the authors of their recent paper put it in a blog post, “As commercial AI models continue to increase in scale, relying on data annotation is becoming unsustainable.”

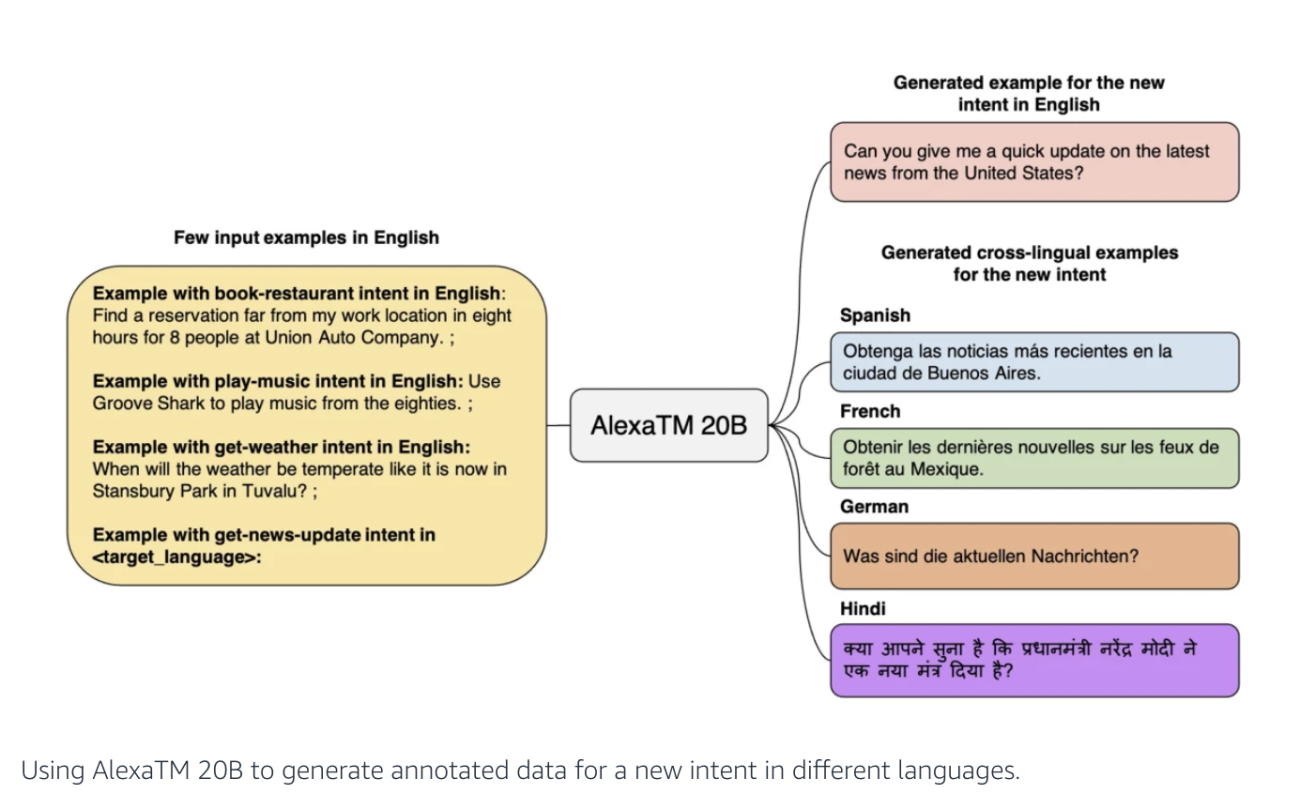

The paper explains that Alexa AI is moving to a new paradigm of “generalizable intelligence”, in which models will be able to learn novel concepts and transfer knowledge between different languages or tasks with little to no human input. To this end, the researchers developed a 20-billion-parameter generative model called ‘AlexaTM 20B’, which can not only transfer what it learns across different languages but also learn new tasks from only a handful of examples.

The model, which is based on a sequence-to-sequence (seq2seq) encoder-decoder architecture, uses bidirectional encoding to represent an input text. This makes it more effective in tasks like machine translation and text summarisation, where it outperforms even a 175-billion-parameter model like GPT-3.

What can Microsoft, Google learn from Amazon?

What is interesting about Amazon’s foray into the generative AI segment, however, is that the research has been built around the tasks that customers want executed, which include booking restaurants, playing music, and accessing weather information.

Their blog reads, “The model can generalise from these [the above-mentioned tasks] to the unfamiliar intent get-news-update and generate utterances corresponding to that intent in different languages.”

Austin Carr, writing for a Bloomberg newsletter, makes a similar observation, noting that Alexa was able to bring some incremental use cases to the ‘Echo’: a selection of basic but essential daily tasks such as setting kitchen timers, playing music, and checking the weather. “Timers, music, and weather forecasts are all things I need, and the Echo has eliminated a ton of annoyances for me,” Carr added.

Lately, LLMs have been the talk of the town, and not only for positive reasons. Whether it is Bing’s AI, Google’s Bard, or OpenAI’s ChatGPT, these chatbots have given wrong information, displayed various biases, and even produced troubling responses when provoked. In this light, it would greatly help these companies to “perfect more incremental, mass-appeal capabilities of its AI, just as Amazon did with Alexa”.

Therefore, instead of following the herd to create a “generalised chatbot”, Amazon has been able to double down on what it originally did with Alexa.

What went wrong before?

Amazon’s Alexa voice assistant reportedly racked up an operating loss of $10 billion in 2022. Viewed through a macroeconomic lens, with soaring interest rates and unstable foreign exchange, the losses were simply unsustainable.

This also led one former employee to describe Alexa as a “colossal failure of imagination” and “a wasted opportunity”.

According to one report, the division’s business model did not work because the organisation makes money only when people use the devices, not when they simply buy them.

When it was initially rolled out in 2015, it prompted users to ask the voice assistant bizarre questions, such as the meaning of life or what it desired. But over time, Alexa’s unsatisfactory answers could not hold users’ attention. Most importantly, people used Alexa only for trivial commands like those mentioned above.

The devices didn’t provide a personalised experience, or anything else that could bring the company advertising revenue.

Still, it seems generative AI might be just what Amazon needs to encourage people to use its devices for all sorts of daily tasks, essentially becoming the Google Search of voice input.