Beautiful Soup is a Python library for parsing HTML and XML. Older releases of Beautiful Soup 4 ran on Python 2.6+, while current releases require Python 3. The "soup" in its name refers to unstructured, noisy HTML documents that are hard to read. The library parses HTML and XML documents into a tree from which the data can be extracted.

In this article, we will go through the functions that help us extract data from an HTML document. We will use a toy HTML document to explain how Beautiful Soup works and walk through the steps involved in scraping data from a website’s HTML. Scraping is one of the techniques of data mining.

With the help of a browser automation tool such as Selenium and a headless browser such as PhantomJS, one can easily practice scraping data from a website. These tools make it easier to scrape through multiple pages or extract a large amount of data from websites. Because a headless browser does not draw a visible window, it typically runs faster and uses less memory than a full browser session. PhantomJS also runs each browser instance in its own process.

Installing Beautiful Soup 4

The Beautiful Soup library can be installed with pip using a single command (the package name is beautifulsoup4). It is available on almost all platforms. Here is a way to install it from a Jupyter Notebook.

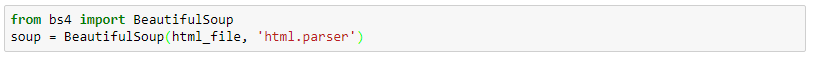

We can import this library with the following code and assign it to an object.
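As a minimal sketch of this step, the import and object creation might look like the following. The one-line HTML string and the variable names are placeholders chosen for illustration, not the article's toy document.

```python
from bs4 import BeautifulSoup

# Placeholder document, used only to show the constructor call.
html = "<html><head><title>Toy page</title></head><body><p>Hello</p></body></html>"

# The second argument names the parser; "html.parser" ships with Python.
soup = BeautifulSoup(html, "html.parser")

print(type(soup).__name__)  # BeautifulSoup
print(soup.title.string)    # Toy page
```

Naming the parser explicitly keeps the result the same on machines that have different optional parsers installed.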

Installing An Alternative Parser

By default, Beautiful Soup uses html.parser, which is part of the Python standard library. We can use a different parser depending on the objective. The most common alternative parsers are “lxml” and “html5lib”. These can be installed with the help of the following code:
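The sketch below shows one way to check which parsers are usable after installation. The helper name `available_parsers` is hypothetical, and the pip command in the comment assumes both optional parsers are wanted.

```python
from bs4 import BeautifulSoup, FeatureNotFound

# Install the optional parsers first, for example from a terminal:
#   pip install lxml html5lib

SAMPLE = "<p>Unclosed paragraph"


def available_parsers(candidates=("html.parser", "lxml", "html5lib")):
    """Return the parser names Beautiful Soup can load in this environment."""
    found = []
    for name in candidates:
        try:
            BeautifulSoup(SAMPLE, name)
        except FeatureNotFound:
            continue  # this parser is not installed
        found.append(name)
    return found


print(available_parsers())
```

Beautiful Soup raises `FeatureNotFound` when the requested parser is missing, so the helper skips any parser that is not installed.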

Below is a tabular representation of various parsers with their advantages and disadvantages:

Getting Started

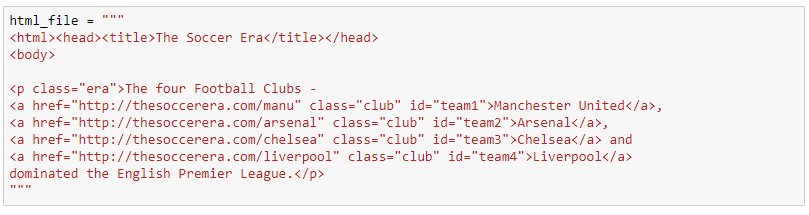

We will use this basic HTML document as the default example to parse with Beautiful Soup.

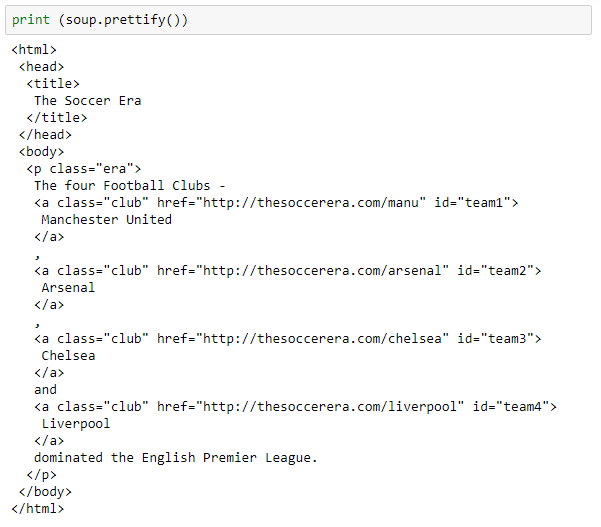

The prettify() method expands the HTML into its indented hierarchy:
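A short sketch of printing the hierarchy, assuming a small stand-in document rather than the one shown in the image above:

```python
from bs4 import BeautifulSoup

# Stand-in document written on a single line, with no indentation.
html = (
    "<html><head><title>Toy page</title></head>"
    "<body><p class='intro'>Hello <b>world</b></p></body></html>"
)
soup = BeautifulSoup(html, "html.parser")

# prettify() returns a string with one tag or text node per line,
# indented according to its depth in the parse tree.
pretty = soup.prettify()
print(pretty)
```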

Exploring The Parse Tree

To navigate through the tree, we can use the following commands:
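The navigation attributes can be sketched as follows. The document is a stand-in for the toy HTML, and the URL in it is a placeholder.

```python
from bs4 import BeautifulSoup

html = """
<html><head><title>Toy page</title></head>
<body>
<p class="intro">First paragraph with a
<a href="http://example.com/one" id="link1">link</a>.</p>
<p class="body">Second paragraph.</p>
</body></html>
"""
soup = BeautifulSoup(html, "html.parser")

print(soup.title)         # the whole <title> tag
print(soup.title.name)    # title
print(soup.title.string)  # Toy page
print(soup.p)             # the first <p> tag in the document
print(soup.p["class"])    # ['intro']
print(soup.a["href"])     # http://example.com/one
```

Accessing a tag name as an attribute, such as `soup.p`, returns only the first matching tag.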

Tags in Beautiful Soup expose many attributes that can be accessed and edited. The extracted, parsed data can then be saved to a text file.
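As an illustrative sketch, the snippet below reads a tag's attributes, edits them, and writes the result to a text file. The file name `parsed_output.txt` and its location in the system temporary directory are assumptions made for the example.

```python
import os
import tempfile

from bs4 import BeautifulSoup

html = '<p class="intro" id="first">Hello <b>world</b></p>'
soup = BeautifulSoup(html, "html.parser")
tag = soup.p

# Attributes behave like a dictionary.
print(tag.attrs)

# Edit an existing attribute and add a new one.
tag["id"] = "greeting"
tag["data-seen"] = "yes"

# Save the modified markup to a text file (hypothetical path).
out_path = os.path.join(tempfile.gettempdir(), "parsed_output.txt")
with open(out_path, "w", encoding="utf-8") as fh:
    fh.write(soup.prettify())
```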

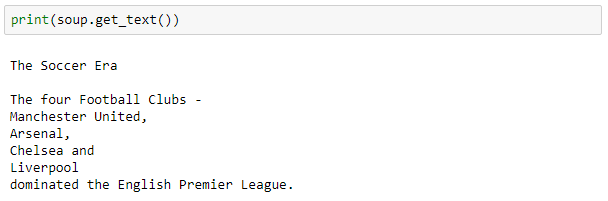

To extract only the text from a document or a tag, we can use the get_text() method.
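A minimal sketch of get_text(), using a stand-in fragment:

```python
from bs4 import BeautifulSoup

html = "<div><h1>Title</h1><p>Hello <b>world</b>!</p></div>"
soup = BeautifulSoup(html, "html.parser")

# All text in the document, joined with no separator.
print(soup.get_text())    # TitleHello world!

# Text of a single tag, including its nested tags.
print(soup.p.get_text())  # Hello world!

# A separator and strip=True make the pieces easier to read.
print(soup.get_text(separator=" ", strip=True))  # Title Hello world !
```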

Strings: How To Remove Whitespace

The strings inside a tag can be accessed with the .strings generator. Its output also includes whitespace-only strings, which can be stripped easily.
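The following sketch iterates over every string in a small stand-in document. `repr()` is used so that the whitespace-only strings are visible.

```python
from bs4 import BeautifulSoup

html = """<ul>
  <li>Alpha</li>
  <li>Beta</li>
</ul>"""
soup = BeautifulSoup(html, "html.parser")

# .strings yields every text node, including the newlines and
# indentation that sit between the tags.
raw = list(soup.strings)
for s in raw:
    print(repr(s))
```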

Since the above output has a lot of whitespace, the .stripped_strings generator will help us remove it.
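A sketch of the stripped variant, again on a stand-in document:

```python
from bs4 import BeautifulSoup

html = """<ul>
  <li>Alpha</li>
  <li>Beta</li>
</ul>"""
soup = BeautifulSoup(html, "html.parser")

# .stripped_strings skips whitespace-only strings and trims the rest.
clean = list(soup.stripped_strings)
print(clean)  # ['Alpha', 'Beta']
```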

Parent And Siblings

We can obtain the parent of a particular HTML element with the .parent attribute, like here:
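A small sketch of walking upward in the tree, assuming a stand-in document:

```python
from bs4 import BeautifulSoup

html = "<html><head><title>Toy page</title></head><body><p>Hello</p></body></html>"
soup = BeautifulSoup(html, "html.parser")
title = soup.title

# The direct parent of <title> is <head>.
print(title.parent.name)         # head

# A string's parent is the tag that contains it.
print(title.string.parent.name)  # title

# .parents walks all the way up to the BeautifulSoup object itself.
ancestors = [p.name for p in title.parents]
print(ancestors)                 # ['head', 'html', '[document]']
```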

To access the siblings, both the previous and the next, we can use the following commands:
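Sibling navigation might be sketched as below. The markup is written on one line on purpose: in indented HTML, the nearest sibling of a tag is often a whitespace string rather than the neighbouring tag.

```python
from bs4 import BeautifulSoup

html = "<div><a id='one'>1</a><a id='two'>2</a><a id='three'>3</a></div>"
soup = BeautifulSoup(html, "html.parser")

middle = soup.find(id="two")
print(middle.previous_sibling["id"])  # one
print(middle.next_sibling["id"])      # three

# The plural forms iterate over all remaining siblings.
following = [t["id"] for t in middle.next_siblings]
print(following)                      # ['three']

# The first child has no previous sibling.
first = soup.find(id="one")
print(first.previous_sibling)         # None
```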

Find And FindAll

The find() and find_all() methods search the HTML document for specific tags: find() returns the first match, and find_all() returns every match. Searching is one of the key features required while data mining or scraping data from a website with the help of Selenium and PhantomJS.
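The two search methods can be sketched as follows. The links, class names, and ids are placeholders invented for the example.

```python
from bs4 import BeautifulSoup

html = """
<p class="intro">Intro</p>
<a href="http://example.com/one" class="link" id="link1">One</a>
<a href="http://example.com/two" class="link" id="link2">Two</a>
<a href="http://example.com/three" class="other" id="link3">Three</a>
"""
soup = BeautifulSoup(html, "html.parser")

first_link = soup.find("a")                   # first <a> tag only
all_links = soup.find_all("a")                # every <a> tag
by_class = soup.find_all("a", class_="link")  # filter on the CSS class
by_id = soup.find(id="link3")                 # filter on an attribute
limited = soup.find_all("a", limit=2)         # stop after two matches
missing = soup.find("table")                  # None when nothing matches

hrefs = [a["href"] for a in all_links]
print(first_link["id"], len(all_links), len(by_class), by_id.string)
print(hrefs)
```

Because find() returns None when nothing matches, it is worth checking the result before reading attributes from it.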

Conclusion

Since finding the right tags in the HTML source is hard, scraping data takes a lot of time. The time needed also depends on the amount of data extracted from a page, which is why a wait time is necessary for the browser to load the data. Given enough computation speed and available resources, one can scrape data from almost any website using the right tools.