
The human mind can get really complicated at times. That’s when we turn to meditation, taking deep breaths to forget all the chaos. It gives us a certain fulfilment, bringing out new traits of understanding and empathy, sometimes by not doing anything at all.

What if machines could also meditate or do nothing for a day or two—to learn better?

As absurd as it may sound, a group of researchers have now discovered how artificial neural networks can mimic sleep patterns of the human brain, boosting their utility across a spectrum of research areas. Moreover, this may help the networks to mitigate the threat of catastrophic forgetting later.

Let machines take a nap or two!

On average, the human body requires 7 to 13 hours of sleep a day. During sleep, heart rate, breathing and metabolism ebb and flow, hormone levels adjust, and the body relaxes. The brain, however, does not switch off.

University of California San Diego School of Medicine’s Professor of medicine and sleep researcher Maxim Bazhenov says, “The brain is very busy when we sleep, repeating what we have learned during the day”. He further adds that sleep helps reorganise the memories, trying to present them in the most efficient way possible.

In previously published work, Bazhenov and his colleagues reported how sleep helps build rational memory, the ability to remember arbitrary or indirect associations between objects or people, and protects against forgetting old memories.

Artificial neural networks borrow from the architecture of the human brain, and they have improved numerous technological systems in fields ranging from basic science to finance and medicine.

In some respects, such as computational speed, neural networks have achieved superhuman performance. However, they fail in one key aspect: when an artificial neural network learns tasks sequentially, new information tends to overwrite what it learnt before. This phenomenon is known as catastrophic forgetting.
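To make the overwriting concrete, here is a deliberately tiny sketch in Python. It is not the researchers' setup: the one-weight model, the two made-up tasks (`task_a`, `task_b`) and the learning rate are all assumptions chosen for illustration. The model learns task A, then task B, and its error on task A is measured before and after.

```python
def train(w, data, lr=0.1, epochs=50):
    """Plain gradient descent on squared error for the model y = w * x."""
    for _ in range(epochs):
        for x, y in data:
            w -= lr * 2 * (w * x - y) * x
    return w


def mse(w, data):
    """Mean squared error of the model y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


inputs = (-1.0, -0.5, 0.5, 1.0)
task_a = [(x, 2.0 * x) for x in inputs]   # hypothetical task A: y = 2x
task_b = [(x, -1.0 * x) for x in inputs]  # hypothetical task B: y = -x

w = train(0.0, task_a)            # learn task A first
error_a_before = mse(w, task_a)   # close to zero

w = train(w, task_b)              # then learn task B, with no task A data
error_a_after = mse(w, task_a)    # task A has been overwritten

print(f"error on A before B: {error_a_before:.6f}")
print(f"error on A after B:  {error_a_after:.6f}")
```

Because the single weight has to serve both tasks, training on B simply replaces what was learnt for A. Real networks have many more weights, but sequential training can overwrite them in a similar way.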

Bazhenov says that the human brain learns in a continuous manner, incorporating new data into existing knowledge. He claims that for memory consolidation, the brain learns the best when new training is combined with periods of sleep.

The scientists used spiking neural networks, which mimic natural neural systems: instead of being communicated continuously, information is transmitted as discrete events, or spikes, at certain points in time.
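A common textbook way to picture such a unit is the leaky integrate-and-fire neuron. The sketch below is only an illustration under assumed parameters; the function name `simulate_lif` and the values of `threshold`, `leak` and `reset` are hypothetical and not taken from the study. The neuron accumulates input, leaks a little charge at every step, and emits a spike only when its potential crosses the threshold.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes."""
    v = reset  # membrane potential
    spike_times = []
    for t, current in enumerate(input_current):
        v = leak * v + current      # leak part of the old potential, add new input
        if v >= threshold:          # crossing the threshold produces a spike
            spike_times.append(t)
            v = reset               # potential resets after firing
    return spike_times


# A constant input still produces output only at discrete moments.
spikes = simulate_lif([0.3] * 20)
print(spikes)  # [3, 7, 11, 15, 19]
```

Although the input here is continuous, the output is a short list of spike times, which is the sense in which information travels as discrete events.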

When the spiking networks were trained on a new task with occasional off-line periods that imitated sleep, the team found that catastrophic forgetting was mitigated. Just like the human brain, ‘sleeping’ allowed the networks to replay old memories without using any old training data.

Augmenting sleep patterns

In the human brain, memories are represented by patterns of synaptic weight, that is, the strength or amplitude of the connection between two neurons.

When we learn new information, neurons fire in a particular order, and this strengthens the synapses between them. During sleep, the spiking patterns learnt while awake are repeated spontaneously, a process termed ‘replay’ or ‘reactivation’.

Bazhenov says, “Synaptic plasticity, the capacity to be altered or molded, is still in place during sleep and it can further enhance synaptic weight patterns that represent the memory, helping to prevent forgetting or to enable transfer of knowledge from old to new tasks.”
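The idea that replay reinforces a memory can be sketched with a simple Hebbian-style rule. This is a toy model and not the plasticity rule used in the study: the function `strengthen`, the `rate` value, the three-neuron network and the firing order in `pattern` are all assumptions made for illustration.

```python
def strengthen(weights, firing_order, rate=0.1):
    """Hebbian-style update: boost the synapse from each neuron to the one firing next."""
    for pre, post in zip(firing_order, firing_order[1:]):
        # Move the weight a fraction of the way towards 1.0, so it saturates.
        weights[pre][post] += rate * (1.0 - weights[pre][post])
    return weights


n = 3
weights = [[0.0] * n for _ in range(n)]  # weights[pre][post], all synapses start at zero
pattern = [0, 1, 2]                      # hypothetical firing order learnt while awake

strengthen(weights, pattern)             # one presentation while awake
awake_weight = weights[0][1]

for _ in range(10):                      # ten spontaneous replays during "sleep"
    strengthen(weights, pattern)
sleep_weight = weights[0][1]

print(f"after learning: {awake_weight:.3f}")
print(f"after replay:   {sleep_weight:.3f}")
```

No new training data is presented during the replay loop. The same firing order is simply repeated, and the synapses that encode it grow stronger while unrelated synapses stay untouched.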

When the team applied this approach to artificial neural networks, it helped the networks avoid catastrophic forgetting. In other words, the networks could learn continuously, like humans or animals. The research also sheds light on how the brain processes information during sleep, an understanding that could help augment memory in human subjects. According to the researchers, augmenting sleep rhythms can lead to better memory.

The team also uses computer models to develop optimal strategies for applying stimulation during sleep, such as auditory tones, that enhance sleep rhythms and improve learning. They say such work is particularly important when memory is non-optimal, as in conditions such as Alzheimer’s disease.

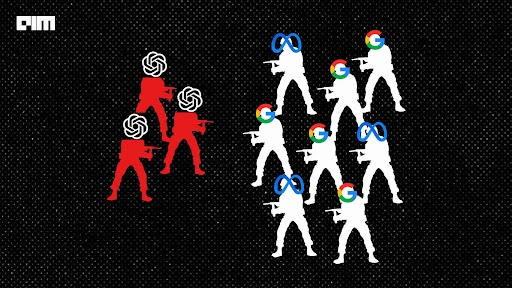

Furthermore, Google DeepMind last week released a framework to enable the creation of AI agents that can understand human instructions to perform certain actions.

Several existing AI frameworks have been criticised for ignoring the situational understanding of how humans use language. Generative models such as DALL·E 2, for instance, have been criticised for failing to understand the syntax of text prompts. For an input text of ‘a spoon on a cup’, the results would include images consisting of a cup and a spoon from the dataset, with no grasp of the relationship between the objects given in the text prompt.