As most machine learning algorithms accept only numerical inputs, categorical variables must be encoded as numerical values. In this article, we compare the label encoding and one-hot encoding techniques by implementing them in Python.

Label Encoding

Label encoding is a popular way of converting labels into numeric values so that machines can process them. For instance, suppose a dataset has a level column with the values beginner, intermediate and advanced. After label encoding, these values can be represented as 0, 1 and 2 respectively.
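As a minimal sketch of the level example, the snippet below encodes a made-up column in two ways. The column values and variable names are assumptions for illustration only. Note that scikit-learn's LabelEncoder numbers classes in sorted order, so an explicit mapping is used where the natural order (beginner, intermediate, advanced) should be kept.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical toy column, not taken from the article's dataset.
levels = pd.Series(["beginner", "intermediate", "advanced", "beginner"], name="level")

# Option 1: an explicit mapping keeps the natural order of the levels.
order = {"beginner": 0, "intermediate": 1, "advanced": 2}
encoded_manual = levels.map(order)

# Option 2: LabelEncoder assigns codes by sorted class name,
# so "advanced" becomes 0, "beginner" 1 and "intermediate" 2.
encoder = LabelEncoder()
encoded_sklearn = encoder.fit_transform(levels)

print(encoded_manual.tolist())    # [0, 1, 2, 0]
print(encoded_sklearn.tolist())   # [1, 2, 0, 1]
print(encoder.classes_.tolist())  # ['advanced', 'beginner', 'intermediate']
```

Either way each label becomes a single integer; the two options differ only in which integer each label receives.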

One-Hot Encoding

One-hot encoding is one of the most widely used encoding methods in ML models. It quantifies categorical data by creating one binary variable for each level of the categorical variable. A single variable with n observations and x distinct values is converted into x binary variables with n observations each, where 1 marks the presence of a level in an observation and 0 its absence.
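To make the n observations and x distinct values concrete, here is a small sketch using pandas' get_dummies on a made-up column with n = 4 and x = 3. The data and the "embarked" prefix are assumptions chosen for illustration.

```python
import pandas as pd

# Hypothetical toy column with n = 4 observations and x = 3 distinct values.
df = pd.DataFrame({"embarked": ["C", "Q", "S", "C"]})

# One binary column per distinct value; dtype=int gives 0/1 instead of booleans.
one_hot = pd.get_dummies(df["embarked"], prefix="embarked", dtype=int)

print(one_hot)
```

The result has three columns (embarked_C, embarked_Q, embarked_S) and four rows, and every row contains exactly one 1.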

Implementation in Python

Loading Dataset

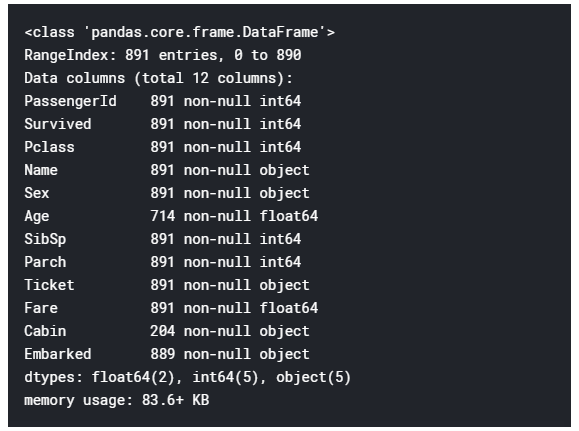

For the comparison, we used the Titanic: Machine Learning from Disaster dataset. Both a training and a testing dataset are provided; the training dataset contains 891 rows and 12 columns.

The dataset can be downloaded from Kaggle.

After loading the dataset, we used the dropna() method, which removes rows or columns that contain null values. After dropping the null values, 792 rows and 8 columns remain.
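The behaviour of dropna() can be sketched on a tiny frame. The rows below are made up and only borrow a few Titanic-style column names; they are not the article's data, and the exact cleaning steps that produced the shape above are not shown here.

```python
import numpy as np
import pandas as pd

# Hypothetical rows using Titanic-style column names; the values are made up.
df = pd.DataFrame({
    "Survived": [0, 1, 1, 0],
    "Sex": ["male", "female", "female", "male"],
    "Age": [22.0, np.nan, 26.0, 35.0],
    "Embarked": ["S", "C", None, "Q"],
})

rows_dropped = df.dropna()        # drop every row that has at least one null
cols_dropped = df.dropna(axis=1)  # drop every column that has at least one null

print(df.shape, rows_dropped.shape, cols_dropped.shape)  # (4, 4) (2, 4) (4, 2)
```

With the real data, the frame would instead come from pd.read_csv on the downloaded training file.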

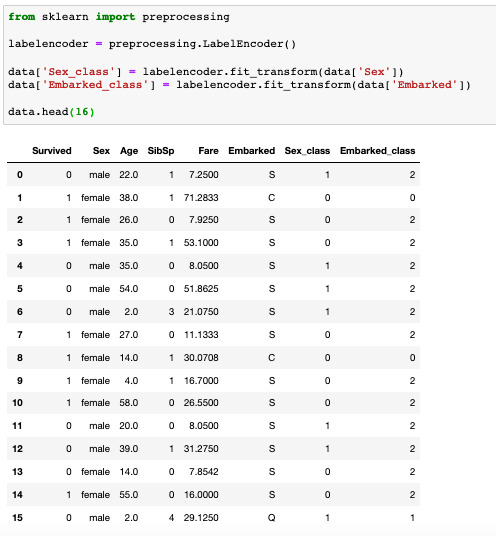

Label Encoding

In Python, label encoding can be done with the scikit-learn library. We applied the label encoder to two columns, “sex” and “embarked”. After applying it, C, Q and S in the embarked column are encoded as 0, 1 and 2 respectively, while male and female in the sex column are encoded as 1 and 0 respectively. We then trained a Support Vector Machine (SVM) on the encoded data and obtained an accuracy score of 60%.

The code snippet is shown below:
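A minimal sketch of this step could look as follows. It is not the exact code behind the reported score: the helper name label_encode_and_score, the Kaggle-style column names (Sex, Embarked, Survived), the 80/20 split and the default SVC settings are all assumptions, and the input frame is expected to contain only numeric columns besides the two categorical ones.

```python
import pandas as pd
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder
from sklearn.svm import SVC


def label_encode_and_score(df, categorical=("Sex", "Embarked"), target="Survived"):
    """Label-encode the categorical columns, fit an SVM, return (encoded frame, accuracy)."""
    encoded = df.copy()
    for col in categorical:
        # LabelEncoder numbers classes in sorted order,
        # so C/Q/S become 0/1/2 and female/male become 0/1.
        encoded[col] = LabelEncoder().fit_transform(encoded[col])

    X = encoded.drop(columns=[target])
    y = encoded[target]
    # Assumed 80/20 split; the article does not state how the data was split.
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=0
    )

    model = SVC()  # default RBF kernel; the article's SVM settings are not given
    model.fit(X_train, y_train)
    return encoded, accuracy_score(y_test, model.predict(X_test))
```

Calling label_encode_and_score(df) on the cleaned Titanic frame returns the encoded frame and the test accuracy; the number obtained depends on the split and on the SVM settings.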

One-Hot Encoding

We now apply one-hot encoding to the dataset in a similar manner and feed the result to the same classification method, the Support Vector Machine (SVM). This gives us the accuracy score of the model with one-hot encoding. After applying the one-hot encoder, the embarked column is encoded as C = 1,0,0, Q = 0,1,0 and S = 0,0,1, while male and female in the sex column are encoded as 0,1 and 1,0 respectively.

The code snippet is shown below:
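A comparable sketch for the one-hot variant is given below. It uses pandas' get_dummies as a stand-in for whichever one-hot encoder was originally applied; the helper name one_hot_encode_and_score, the Kaggle-style column names, the 80/20 split and the default SVC settings are again assumptions.

```python
import pandas as pd
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC


def one_hot_encode_and_score(df, categorical=("Sex", "Embarked"), target="Survived"):
    """One-hot encode the categorical columns, fit an SVM, return (encoded frame, accuracy)."""
    # Each categorical column is replaced by one 0/1 column per distinct value,
    # e.g. Sex -> Sex_female, Sex_male and Embarked -> Embarked_C, Embarked_Q, Embarked_S.
    encoded = pd.get_dummies(df, columns=list(categorical), dtype=int)

    X = encoded.drop(columns=[target])
    y = encoded[target]
    # Same assumed split as for a label-encoded run, so the scores are comparable.
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=0
    )

    model = SVC()  # default RBF kernel; the article's SVM settings are not given
    model.fit(X_train, y_train)
    return encoded, accuracy_score(y_test, model.predict(X_test))
```

With the columns ordered as (Sex_female, Sex_male), a male passenger is encoded as 0,1 and a female passenger as 1,0, which matches the pattern described above.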

Comparing the accuracy scores of the two encoding techniques, we can see that the label encoder gives a lower accuracy than the one-hot encoder.

Outlook

Most of the time, the performance of a machine learning model is judged by its accuracy. The idea behind the Python implementation is to show how the choice of encoding changes the accuracy of the ML model. It is also important to mention that the accuracy achieved here is only around 60% because we used a small amount of data, just enough to compare the two encoding techniques. Better accuracy generally requires a larger dataset.