Ensemble techniques have become popular among data scientists and enthusiasts in recent years. Random Forest and Gradient Boosting long dominated data science competitions and hackathons, but over the last few years XGBoost has often outperformed other algorithms on problems involving structured data. Beyond its accuracy, XGBoost is also recognized for its speed and scalability. XGBoost is built on the Gradient Boosting framework.

Like other boosting algorithms, XGBoost uses decision trees as the base learners of its ensemble. Each tree is a weak learner. The algorithm builds trees sequentially, each one correcting the errors of the trees before it, until a stopping condition is reached.
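To make the idea of sequential error correction concrete, here is a minimal base-R sketch of plain gradient boosting for squared error, using one-split trees ("stumps") as weak learners. It is only an illustration on simulated data: the helper names fit_stump and predict_stump are made up for this example, and XGBoost itself adds regularization, second-order gradient information and many engineering optimizations that are left out here.

```r
# Toy gradient boosting with decision stumps (base R only, simulated data).
set.seed(1)
x <- seq(0, 10, length.out = 200)
y <- sin(x) + rnorm(200, sd = 0.2)

# Fit a stump to the residuals r: pick the split that minimises squared error.
fit_stump <- function(x, r) {
  splits <- head(sort(unique(x)), -1)   # drop the largest value so both sides are non-empty
  sse <- sapply(splits, function(s) {
    left <- r[x <= s]
    right <- r[x > s]
    sum((left - mean(left))^2) + sum((right - mean(right))^2)
  })
  s <- splits[which.min(sse)]
  list(split = s, left = mean(r[x <= s]), right = mean(r[x > s]))
}

predict_stump <- function(stump, x) ifelse(x <= stump$split, stump$left, stump$right)

eta <- 0.1                          # learning rate
pred <- rep(mean(y), length(y))     # start from a constant prediction
mse <- numeric(50)

for (m in 1:50) {
  resid <- y - pred                 # errors of the ensemble built so far
  stump <- fit_stump(x, resid)      # the next weak learner targets those errors
  pred <- pred + eta * predict_stump(stump, x)
  mse[m] <- mean((y - pred)^2)
}

print(round(mse[c(1, 10, 50)], 4))  # training error after 1, 10 and 50 rounds
```

Each round fits a new stump to the residuals of the current ensemble and adds a shrunken version of it to the prediction, so the training error falls as more stumps are added.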

In this article, we will walk through an implementation of the XGBoost algorithm in R.

About the dataset

The dataset can be downloaded from the following link. It contains several attributes that are considered important when a candidate applies to Master's programs, along with an admit column recording whether the candidate was admitted.

Code Implementation

getwd()

Set the directory path for this project.

setwd("C:\\Users\\Ankit\\Desktop\\shufflenet\\XGBoost In R")

Install all packages required for this project.

install.packages("data.table")

install.packages("dplyr")

install.packages("ggplot2")

install.packages("caret")

install.packages("xgboost")

install.packages("e1071")

install.packages("cowplot")

install.packages("Matrix")

install.packages("magrittr")

Load all the packages.

library(data.table)

library(dplyr)

library(ggplot2)

library(caret)

library(xgboost)

library(e1071)

library(cowplot)

library(Matrix)

library(magrittr)

Reading the CSV file

Read the CSV file. XGBoost requires a numeric matrix, and rank is a categorical variable, so we first convert rank to a factor so that it can be one-hot encoded later.

data <- read.csv("binary.csv")

print(data)

str(data)

data$rank <- as.factor(data$rank)

Split the train and test data

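set.seed makes the random split reproducible, so the same observations land in the training and test sets on every run. We then use sample to draw a 1 or a 2 for each row of the data, with probabilities of 80% and 20%. Rows labelled 1 form the training set and rows labelled 2 form the test set.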

set.seed(123)

ind <- sample(2, nrow(data), replace = TRUE, prob = c(0.8, 0.2))

train <- data[ind == 1, ]

test <- data[ind == 2, ]
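The behaviour of this split can be checked on a small stand-in data frame. The sketch below uses a hypothetical data frame called toy, not the admissions data. Because each row is assigned independently, the split is only approximately 80/20.

```r
# Hypothetical stand-in data: 1000 rows identified by an id column.
toy <- data.frame(id = 1:1000)

set.seed(123)
ind <- sample(2, nrow(toy), replace = TRUE, prob = c(0.8, 0.2))

train_toy <- toy[ind == 1, , drop = FALSE]
test_toy  <- toy[ind == 2, , drop = FALSE]

print(table(ind))                       # how many rows received label 1 and label 2
print(nrow(train_toy) / nrow(toy))      # close to 0.8, but not exactly 0.8

# Resetting the seed reproduces exactly the same assignment.
set.seed(123)
ind_again <- sample(2, nrow(toy), replace = TRUE, prob = c(0.8, 0.2))
print(identical(ind, ind_again))
```

If an exact 80/20 split is needed, one alternative is to sample row indices without replacement instead.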

Create matrix – One-Hot Encoding

XGBoost works on a numeric matrix, so we build one using one-hot encoding, which creates a dummy (0/1) column for each level of the factor variable.

training <- sparse.model.matrix(admit ~ . - 1, data = train) # independent variables

head(training)

train_label <- train[, "admit"] # dependent variable

train_matrix <- xgb.DMatrix(data = as.matrix(training), label = train_label)

testing <- sparse.model.matrix(admit ~ . - 1, data = test)

test_label <- test[, "admit"]

test_matrix <- xgb.DMatrix(data = as.matrix(testing), label = test_label)
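To see what the formula admit ~ . - 1 produces, the sketch below applies base R's model.matrix, the dense counterpart of sparse.model.matrix with the same formula interface, to a tiny made-up data frame. The score column and all values are hypothetical and stand in for the numeric predictors of the real dataset.

```r
# Hypothetical toy data: a label, one numeric predictor and a three-level factor.
toy <- data.frame(
  admit = c(0, 1, 1, 0, 1, 0),
  score = c(520, 660, 800, 640, 580, 700),
  rank  = factor(c(1, 2, 3, 2, 1, 3))
)

# "- 1" drops the intercept, so the factor gets one 0/1 column per level.
encoded <- model.matrix(admit ~ . - 1, data = toy)

print(colnames(encoded))   # the numeric column plus one dummy column per rank level
print(encoded)
```

The numeric column passes through unchanged, while each row has exactly one of the rank dummy columns set to 1.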

Defining the parameters

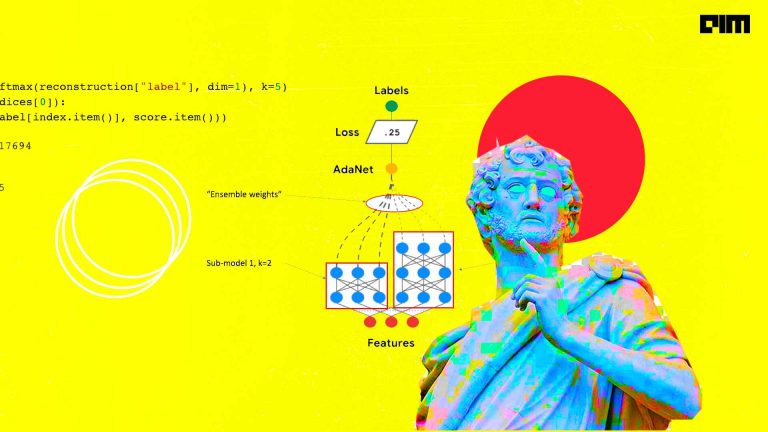

The learning task parameters specify the loss function and the evaluation metric. We store these parameters in the xgb_params list, together with num_class, the number of distinct classes in the label. The watchlist names the datasets whose error is reported after each iteration.

• objective = multi:softprob : Multi-class classification with the softmax objective. It returns the predicted probability of each class.

• eval_metric = mlogloss : Multi-class log loss, a metric commonly used for classification problems.

nc <- length(unique(train_label))

xgb_params <- list("objective" = "multi:softprob",

"eval_metric" = "mlogloss",

"num_class" = nc)

watchlist <- list(train = train_matrix, test = test_matrix)
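As an illustration of what the mlogloss metric measures, multi-class log loss is the negative mean of the log probability assigned to the true class. The sketch below computes it by hand in base R on made-up probabilities; the numbers are hypothetical and not output from the model.

```r
# Hypothetical predicted probabilities: one row per observation, one column per class.
probs <- matrix(c(0.9, 0.1,
                  0.2, 0.8,
                  0.6, 0.4), ncol = 2, byrow = TRUE)
labels <- c(0, 1, 1)   # true classes, numbered from 0

# Pick the probability assigned to the true class of each observation.
p_true <- probs[cbind(seq_along(labels), labels + 1)]

# Multi-class log loss: negative mean log probability of the true class.
mlogloss <- -mean(log(p_true))
print(mlogloss)
```

The third observation, where only 0.4 was assigned to the true class, contributes the most to the loss. Confident wrong predictions are penalized heavily.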

Extreme Gradient Boosting Model

We can now define the boosting parameters, which control the behaviour of the selected booster.

• nrounds: The maximum number of boosting iterations.

• eta: The learning rate. It shrinks the contribution of each new tree and so controls how quickly the model learns patterns in the data. A small value of eta makes the model more robust to overfitting, but usually requires more iterations.

• max.depth: The maximum depth of each tree. A smaller depth helps to avoid overfitting.

• gamma: The minimum loss reduction required to make a further split on a leaf node. Larger values make the model more conservative and help prevent overfitting. The optimal value of gamma depends on the data set.

• subsample: The fraction of training rows sampled for each tree.

• colsample_bytree: The fraction of features sampled for each tree.

best_model <- xgb.train(params = xgb_params, data = train_matrix, nrounds = 100, watchlist = watchlist, eta = 0.001, max.depth = 3, gamma = 0, subsample = 1, colsample_bytree = 1, missing = NA, seed = 333)

After every iteration, it prints the error on the training and test data. With a higher eta value, the training error keeps decreasing while the test error stops improving, which is a sign of overfitting. So we keep the value of eta relatively low for better performance of the model. Similarly, we can experiment with the other parameters and look for a model where both training and test error decrease steadily.

Training & Test Error Plot

e <- data.frame(best_model$evaluation_log)

plot(e$iter, e$train_mlogloss, col = 'blue')

lines(e$iter, e$test_mlogloss, col = 'red')
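Besides plotting, the same log can be inspected numerically. The sketch below uses a hypothetical log called e_toy with the same column names as above and made-up values, chosen purely to show the pattern. It finds the iteration with the lowest test loss and the gap between test and training loss.

```r
# Hypothetical evaluation log; the values are invented for illustration only.
e_toy <- data.frame(
  iter           = 1:6,
  train_mlogloss = c(0.69, 0.60, 0.52, 0.45, 0.39, 0.34),
  test_mlogloss  = c(0.69, 0.63, 0.59, 0.58, 0.60, 0.63)
)

# Iteration at which the test loss is lowest.
best_iter <- e_toy$iter[which.min(e_toy$test_mlogloss)]

# A widening gap between test and training loss suggests overfitting.
gap <- e_toy$test_mlogloss - e_toy$train_mlogloss

print(best_iter)
print(round(gap, 2))
```

In this invented log the training loss keeps falling while the test loss turns upward after its minimum, which is the pattern to watch for when tuning eta and nrounds.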

Feature importance

imp_feature <- xgb.importance(colnames(train_matrix), model = best_model)

print(imp_feature)

Gain is the most important column: it measures how much each feature contributes to the model, and the value decreases as we go down the column. We can plot the gain column by using xgb.plot.importance.

xgb.plot.importance(imp_feature)

Prediction on the test data

pred <- predict(best_model, newdata = test_matrix)

prediction <- matrix(pred, nrow = nc, ncol = length(pred)/nc) %>% t() %>% data.frame() %>% mutate(label = test_label, max_prob = max.col(., "last")-1)

The columns X1 and X2 show the predicted probabilities of rejection and acceptance for each candidate. The label column is the actual value and max_prob is the predicted class. When X1 > X2, the candidate is predicted to be rejected. For the fifth student the prediction is wrong: X1 > X2, so the student is predicted to be rejected, but the actual label is acceptance.
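The reshaping step is easier to follow on a tiny example. The sketch below assumes, as the code above implies, that predict returns one flat vector in which the class probabilities of each observation sit next to each other. The numbers are made up.

```r
nc <- 2   # number of classes

# Hypothetical flat prediction vector: nc probabilities per observation.
pred <- c(0.70, 0.30,    # observation 1
          0.40, 0.60,    # observation 2
          0.55, 0.45)    # observation 3

# Fill a matrix column by column (one column per observation), then transpose
# so that each row is an observation and each column is a class.
prob <- t(matrix(pred, nrow = nc, ncol = length(pred) / nc))

# Index of the largest probability in each row; subtract 1 because classes start at 0.
pred_class <- max.col(prob, "last") - 1

print(prob)
print(pred_class)
```

Here the first and third observations are assigned class 0 and the second is assigned class 1, which matches the larger probability in each row.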

Confusion Matrix

table(Prediction = prediction$max_prob, Actual = prediction$label)
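A confusion matrix can be summarized as an overall accuracy by dividing the diagonal (correct predictions) by the total count. The sketch below does this on hypothetical predicted and actual labels. Both are converted to factors with the same levels so that the table stays square even if one class is never predicted.

```r
# Hypothetical predicted and actual classes for six candidates.
predicted <- factor(c(0, 0, 1, 1, 0, 1), levels = c(0, 1))
actual    <- factor(c(0, 1, 1, 1, 0, 0), levels = c(0, 1))

cm <- table(Prediction = predicted, Actual = actual)

# Correct predictions lie on the diagonal of the confusion matrix.
accuracy <- sum(diag(cm)) / sum(cm)

print(cm)
print(accuracy)
```

The off-diagonal cells count the two kinds of mistakes: candidates predicted as rejected who were actually admitted, and the reverse.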

Conclusion

In this article, we introduced the XGBoost algorithm and walked through its implementation in RStudio. Hopefully, this article gives you a basic understanding of the XGBoost algorithm.

The complete code of the above implementation is available at the AIM’s GitHub repository. Please visit this link to find this code.