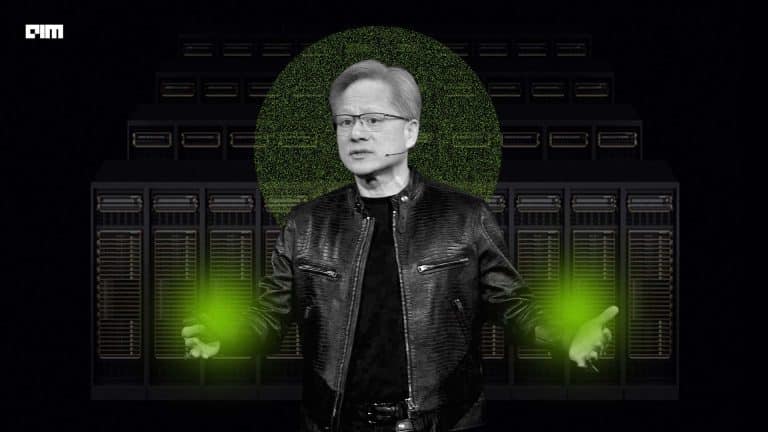

Early this week, netizens took to Twitter to express their disbelief and surprise on learning that CEO Jensen Huang had not delivered the entire NVIDIA GTC Conference keynote himself. Some tech enthusiasts tweeted that they had been ‘fooled’ by NVIDIA.

Had me fooled…https://t.co/y05RV7dFoH

— hardmaru (@hardmaru) August 13, 2021

For a good 14 seconds (from 1:02:41 to 1:02:55), Huang’s virtual replica addressed the audience while introducing the CPU designed for terabyte-scale accelerated computing. Last week, the graphics processor company revealed in a blog post that it had used Omniverse to pull off this stunt without anyone noticing.

Creating a Virtual Replica

With the help of Omniverse, a platform for connecting to and describing the metaverse, NVIDIA brought together content creation tools including Autodesk Maya and Substance Painter. Universal Scene Description (USD), Material Definition Language (MDL) and NVIDIA RTX real-time ray-tracing technologies extended these capabilities further. Together, these technologies helped NVIDIA create the photorealistic scene along with its physically accurate materials and lighting.

In a video, the NVIDIA team explains that to create a realistic clone of Huang’s background, they took hundreds of photographs of his kitchen (a popular backdrop for the NVIDIA CEO’s talks since the beginning of the pandemic) and built a 3D model of it. The engineers added that they had to make sure all 16,000 details and elements of the kitchen, including its Easter eggs, were in place.

The deep learning, graphics research, engineering and creative teams at NVIDIA performed a full face and body scan of Huang to build the 3D model for his virtual replica. They then trained an artificial intelligence model to mimic his gestures and expressions and “applied some AI magic to make his clone realistic,” according to the blog post.

Although Huang’s virtual replica created a buzz, albeit long after the keynote aired, a closer look reveals small details the NVIDIA team missed, such as those on his jacket, as shown in the picture above.

Omniverse’s Capabilities

NVIDIA says Omniverse is capable of much more. Combined with tools including Foundry Nuke, Adobe Photoshop, Adobe Premiere, Autodesk Maya and Adobe After Effects, Omniverse can render complex machines and create realistic cinematic environments.

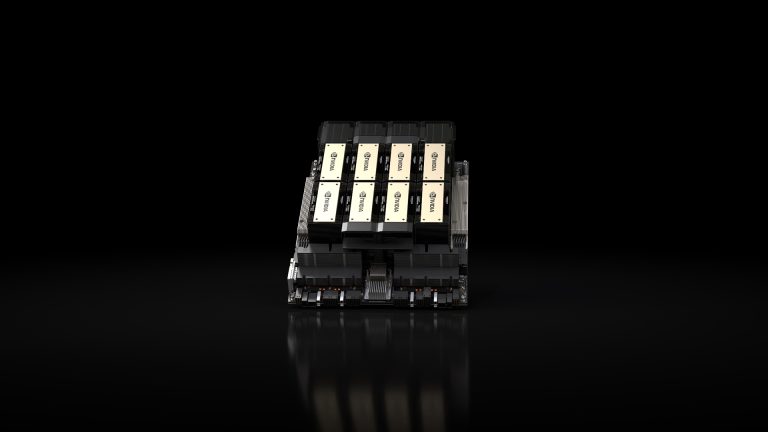

- During the keynote itself, Huang took the audience inside the NVIDIA DGX Station A100. According to NVIDIA, the team used Omniverse to convert the CAD model into a physically accurate virtual replica.

According to Brian Caulfield, a former journalist and NVIDIA’s chief blogger, a project like this typically takes a team months to complete and weeks to render. Omniverse, however, allowed a single animator to complete the animation and render it in less than a day.

- As a research effort, NVIDIA implemented PhysX, a staple of NVIDIA’s gaming world, in Omniverse. The Omniverse engineering and research team re-rendered older PhysX demos in Omniverse, highlighting key PhysX technologies such as soft-body dynamics, vehicle dynamics, and smoke and fire. The result was realistic-looking effects that obeyed the laws of physics in real time.

- Omniverse is also critical to NVIDIA’s self-driving car initiative, where it helps create environments for training autonomous vehicles. In collaboration with Mercedes, NVIDIA has built DRIVE Sim, its simulation platform for AV development, on Omniverse to show how autonomous functions would perform in the real world. It lets the team test different lighting, weather and traffic conditions.

However, the cherry on the cake was the virtual replica of Huang’s kitchen created for the GTC Conference keynote. The demonstration combined the work of NVIDIA’s deep learning, graphics research, creative and engineering teams.

Summing up

Last year, Neon, a project from Samsung Technology and Advanced Research Labs (STAR Labs), debuted its virtual beings. Unveiled at the Consumer Electronics Show (CES) 2020 in Las Vegas, these virtual beings can be described as digital people capable of displaying intelligence and emotions. Essentially video chatbots, these digital people are designed to look real. However, Neon clarified that, unlike AI assistants, they cannot function as intelligent assistants and do not know everything.

Huang’s virtual replica may offer an early glimpse of what AI and virtual conferences will look like in the future, but are we really ready for it? It all boils down to whether people who spend hours, and often money, to watch and listen to their favourite celebrities will accept having a virtual replica deliver the sessions instead.