
OpenAI has recently gained attention for ChatGPT and its foray into enterprise solutions, but Cohere AI has been offering accessible and readily deployable Large Language Models (LLMs) to enterprises for a while now. And although Cohere competes with industry giants such as Google and OpenAI in the realm of LLMs, the company remains relatively obscure in comparison.

What sets Cohere apart is its mission and approach towards AI. While OpenAI’s mission is Artificial General Intelligence (AGI), Cohere AI is focussed on fulfilling its customers’ technological needs. “I think the biggest difference is how we make our advancements available to our customers. As you know, OpenAI has completely aligned with Microsoft, but our approach has been decidedly multicloud from the beginning,” Saurabh Baji, senior vice president of engineering at Cohere AI, told AIM.

Cohere AI’s mission

The Toronto-based startup wants to make its constantly improving tech accessible to all developers, at a cost that is not prohibitive and with a low barrier to entry. “In order to train the models, the amount of compute, data and the kind of coordination needed, the kind of interconnect, and so on are something most of the developers are not going to have at their fingertips.”

“So building the model, maintaining it is just not worth it. So us doing this in the background, and then making the API available is what we do,” Baji said.

Born out of Google

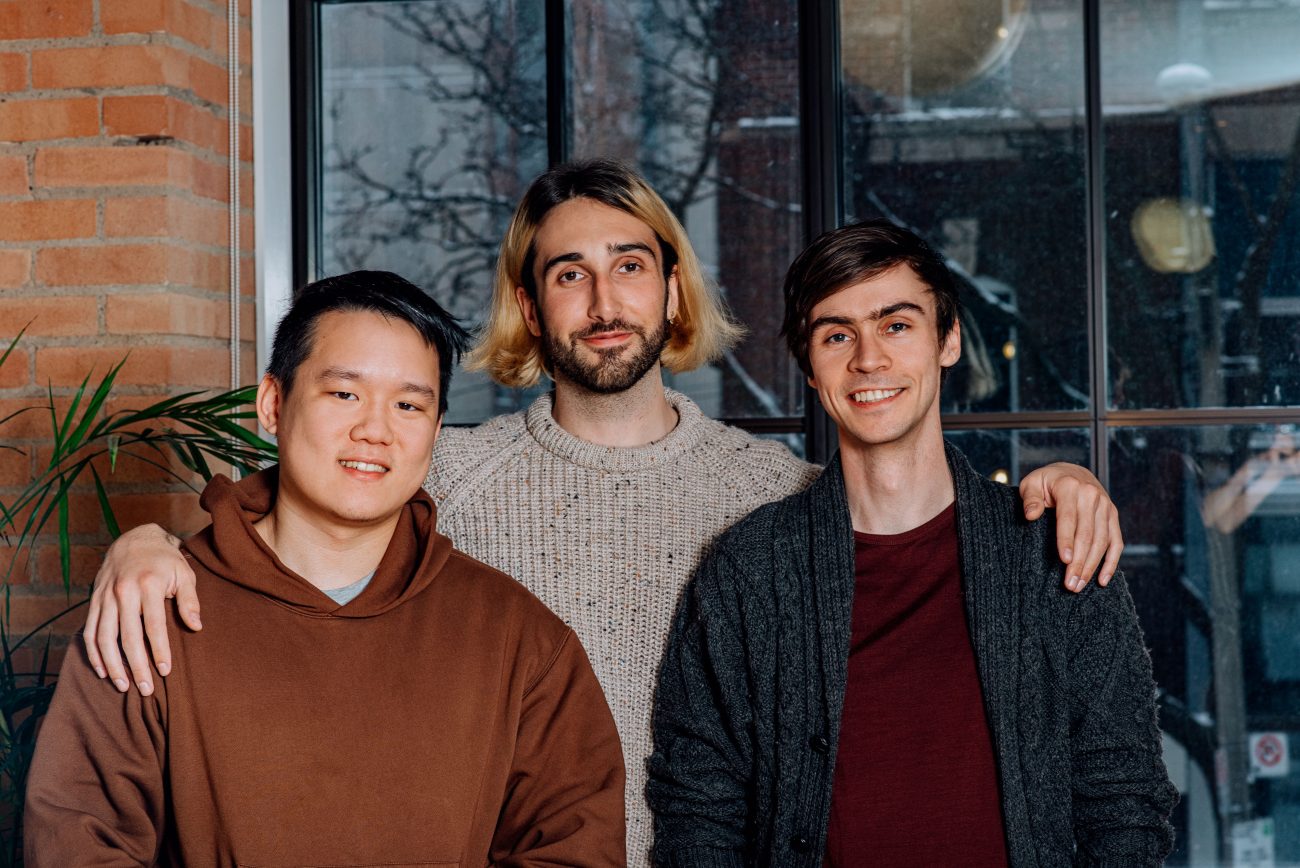

Two of Cohere AI’s co-founders, Aidan Gomez and Nick Frosst, were part of the Google Brain team. In fact, Gomez was a co-author of the ‘Attention Is All You Need’ paper, which introduced the Transformer, a novel neural network architecture based on a self-attention mechanism. “Aidan was there right from the beginning. He saw how this was born, played a part in it and saw the technology get adopted like wildfire inside Google,” Baji said.

While Gomez was intrigued by the developments at Google, he wanted to do more. Even though the paper had been published, Google wanted to keep the technology under wraps. “The slow pace at which this external adoption was happening was not something that Gomez and Frosst liked. So along with Ivan [Zhang], who attended the University of Toronto, they decided that they really wanted to get this out to the masses, and present it not only to select big-tech companies like Google, but to everyone in a manner that made a big difference,” Baji said.

Models

Cohere groups its models into two categories: Generative and Embeddings. “On the generative side, we started off with what we call our base model, also what we call command models. They’re trained off of a very large corpus of data from the internet. But what we’ve seen more and more in terms of customer preference is the more instruction style tuned models,” Baji said.

Cohere’s models come in different sizes: small, medium, large, and extra large. According to Baji, Cohere’s command models improve weekly, whereas other providers in the same domain make significant announcements about a model only after several months or even a year.

(Source: Stanford University)

“There might have been improvements and changes in the interim, but they’re all pretty much under the covers. We take a different approach. We started off with our command model and we immediately put it online for users to use,” he said.

Also, Cohere has another set of models called Command Nightly. “We are continually developing newer capabilities. You do need to get new data in and you need to do a significant amount of training. Then, you need to run evaluations on top to show this is actually an improvement over the last model.”

“So we do all of that and even though we release updates roughly on a weekly cadence today, we aim to actually bring it to nightly,” Baji said.

Cohere’s second set of models, called Embeddings, is multilingual and supports over 109 languages. These offerings are designed for large enterprises whose end users are spread worldwide. “The data corpus that they want to index with our embeddings is often across several different languages. And so the search capabilities they need are also across different languages. Our embedding models deliver beautifully to be able to search across them,” Baji said.

hyped to see @CohereAI's new command beta model ranking competitively in @StanfordHAI HELM

This is a result of the the hard work our team, and the weekly model updates they have made!

Benchmarking LLMs is difficult, and no single one paints a full picture but its nice to see :) pic.twitter.com/6yBsIso5mw

— nick frosst (@nickfrosst) March 21, 2023

An enterprise-first approach

Even though Cohere AI has partnered with Google for its hardware capabilities, it is not limited to Google Cloud. Cohere also operates on AWS SageMaker, for example, and the company plans to be available on other cloud providers as well. “We were the first large-scale TPU users outside of Google. And we are still the largest TPU users on Google Cloud. However, our approach remains very open and centric to where our customers are. So, we don’t expect our customers to get the data and come to where we are. We can give them the best in class latency by operating on AWS, if they exist on AWS, or any other cloud,” Baji said.

Most recently, OpenAI released GPT-4, which has image capabilities. However, even though Baji acknowledges that image and video capabilities in LLMs are going to be significant, Cohere AI is yet to take a multimodal approach. “I think images and videos are very exciting. I think it’s also a bit of a different problem to tackle from a business perspective. We are not focused on AGI. But we’re still focused on the customer’s actual problems and the requests from many customers have been very language centric,” Baji said.