“The world is going to be decentralised, so the majority of data will be created at the edge. That’s the thing we have to solve in the future because, in order to get the value out of that data, you must share it somehow,” said Patrik Edlund, head of communication for Germany, Austria and Switzerland at Hewlett Packard Enterprise (HPE).

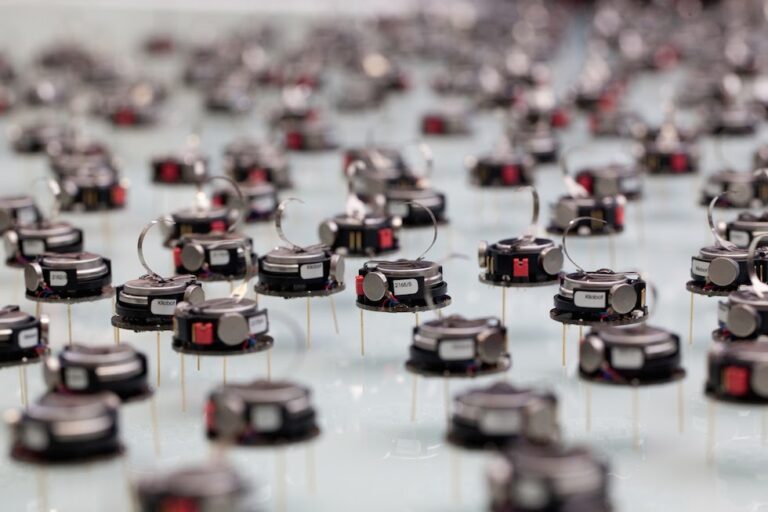

Swarm Learning is a decentralised machine learning framework that enables organisations to build ML models from distributed data. It uses blockchain technology to share the insights captured from the data rather than the raw data itself. Swarm learning is a biologically inspired approach to artificial intelligence, modelled on the collective behaviour of social insects such as ants and bees.

Why is swarm learning needed?

Traditional machine learning uses a data pipeline and a central server that hosts the trained model. The disadvantage is that all the data must travel to and from the central server for processing. This is time-consuming and expensive, and it requires a lot of computing power. The communication can also hurt the user experience through network latency and connectivity problems. In addition, sending huge datasets to one centralised server raises privacy concerns.

Many industries, such as healthcare, depend heavily on data. When projects rely only on their own limited data sources, without sharing or coordinating with other organisations, studies and datasets tend to be small, which limits what the research can achieve. Even within a business, teams often fail to use one another’s knowledge because of data ownership attitudes, which leads to tedious handling and duplication of datasets. Often the law does not allow data to be shared at all. It must remain within a single closed system, so other researchers cannot use it or build upon it. As a result, research and development is burdened with regulatory and privacy headaches.

Today, the swarm learning approach is used mostly in healthcare, where research commonly depends on many organisations sharing information about diseases and how to identify them. The approach has been proposed as a way to noticeably accelerate the introduction of precision medicine. In a paper published in Nature, researchers compared a centrally trained model with a swarm learning model and found that the two achieved comparable accuracy.

How swarm protects data

According to the International Data Corporation (IDC), global data will grow from 33 zettabytes in 2018 to 175 zettabytes in 2025. In swarm learning, the ML method is applied locally at the data source. The decentralised approach combines edge computing with blockchain-based peer-to-peer networking and coordination, so no central server is needed to process the data. Devices at the edge, where the data originates, do the AI modelling locally, and each node builds its own independent model. Because the nodes are interconnected through real-time feedback loops, the network as a whole amplifies their intelligence.
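The following minimal Python sketch makes the idea of local modelling concrete under simplifying assumptions. Each node fits a one-variable linear model (y = w*x + b) to its own synthetic data, and only the fitted parameters and a sample count leave the node. The helper names `make_local_data` and `train_locally`, the linear model and the synthetic data are hypothetical illustrations. They are not part of HPE's Swarm Learning software.

```python
import random

def make_local_data(n_samples, seed):
    """Hypothetical private dataset for one node: noisy points on y = 3x + 1."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, 3.0 * x + 1.0 + rng.gauss(0.0, 0.1)))
    return data

def train_locally(data, epochs=200, lr=0.1):
    """Fit y = w*x + b with batch gradient descent, using only this node's data."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2.0 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2.0 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    # Only the learned parameters (plus a sample count for weighting) leave the node.
    return {"w": w, "b": b, "n_samples": n}

# Each node keeps its raw data private and trains its own independent model.
private_data = {f"node-{i}": make_local_data(50, seed=i) for i in range(3)}
shared_insights = {node: train_locally(data) for node, data in private_data.items()}

for node, params in shared_insights.items():
    print(node, round(params["w"], 2), round(params["b"], 2))
```

In a real deployment the models would usually be neural networks, but the principle is the same. The small dictionaries in `shared_insights` are what a node would share, while `private_data` never leaves its owner.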

Enhanced federated learning

Federated learning works on a similar principle, and the term was popularised by a 2017 Google AI blog post. Federated learning, however, requires a central server that coordinates the participating nodes and receives their model updates.

Centralised federated learning

“The networking and communication bottleneck is still one of the key issues in federated learning due to frequent interactions between the central server and the clients,” said Mehryar Mohri, head of the Learning Theory Team at Google. Swarm learning also trains models at the edge using the compute available on the clients, but it has no central server. Nodes coordinate with one another directly, which removes the back-and-forth with a central controller.
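For contrast, the centralised coordination in federated learning can be sketched as a simplified form of federated averaging (FedAvg). A server broadcasts the current model, and each client takes a few gradient steps on its own data. The server then averages the returned parameters, weighted by sample count. The function names, the linear model, the client sizes and the round count are illustrative assumptions. The `messages` counter only tallies the transfers that pass through the server, which is the traffic Mohri describes as a bottleneck.

```python
import random

def make_client_data(n_samples, seed):
    """Hypothetical private dataset for one client: noisy points on y = 3x + 1."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, 3.0 * x + 1.0 + rng.gauss(0.0, 0.1)))
    return data

def local_update(w, b, data, steps=5, lr=0.1):
    """Client side: start from the global model and take a few gradient steps locally."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2.0 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2.0 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b, n

def server_round(w, b, clients):
    """Central server: broadcast the model, collect updates, average by sample count."""
    updates = [local_update(w, b, data) for data in clients]
    total = sum(n for _, _, n in updates)
    new_w = sum(uw * n for uw, _, n in updates) / total
    new_b = sum(ub * n for _, ub, n in updates) / total
    return new_w, new_b

clients = [make_client_data(n, seed=i) for i, n in enumerate([20, 50, 80])]
global_w, global_b = 0.0, 0.0
messages = 0
for _ in range(30):
    global_w, global_b = server_round(global_w, global_b, clients)
    # Every round costs one download and one upload per client, all via the server.
    messages += 2 * len(clients)

print(round(global_w, 2), round(global_b, 2), messages)
```

With three clients and 30 rounds the server handles 180 transfers. The count grows linearly with both the number of clients and the number of rounds.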

In swarm learning, the central authority is replaced by a smart contract executing on the blockchain. Each node records its update on the blockchain, which then triggers a smart contract that runs as Ethereum Virtual Machine (EVM) code. The contract combines what all the nodes have learned and constructs a merged model embedded in the Ethereum blockchain.
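The contract-driven merge can be illustrated with a toy Python model. This is a hedged sketch, not Ethereum code and not HPE's implementation. A hypothetical `Ledger` class stands in for the blockchain as a hash-chained, append-only log. A hypothetical `MergeContract` class plays the role of the smart contract: as soon as every registered peer has posted an update for the round, it merges the nodes' parameters, weighted by sample count.

```python
import hashlib
import json

class Ledger:
    """Toy append-only, hash-chained log standing in for the blockchain (hypothetical)."""

    def __init__(self):
        self.blocks = []

    @staticmethod
    def _hash(prev_hash, record):
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record):
        prev_hash = self.blocks[-1]["hash"] if self.blocks else "0" * 64
        self.blocks.append({"record": record, "prev": prev_hash,
                            "hash": self._hash(prev_hash, record)})

    def verify(self):
        """Recompute every hash; any edited record breaks the chain."""
        prev_hash = "0" * 64
        for block in self.blocks:
            if block["prev"] != prev_hash or block["hash"] != self._hash(prev_hash, block["record"]):
                return False
            prev_hash = block["hash"]
        return True

class MergeContract:
    """Toy stand-in for the smart contract: merges once every registered peer has posted."""

    def __init__(self, ledger, peers):
        self.ledger = ledger
        self.peers = set(peers)
        self.pending = {}

    def submit(self, peer, round_no, params, n_samples):
        if peer not in self.peers:
            raise ValueError(f"unknown peer {peer}")
        # Each node's update is recorded on the ledger ...
        self.ledger.append({"type": "update", "peer": peer, "round": round_no,
                            "params": params, "n": n_samples})
        self.pending[peer] = (params, n_samples)
        # ... and the merge fires automatically once all peers have checked in.
        if set(self.pending) == self.peers:
            return self._merge(round_no)
        return None

    def _merge(self, round_no):
        total = sum(n for _, n in self.pending.values())
        keys = next(iter(self.pending.values()))[0]
        merged = {k: sum(p[k] * n for p, n in self.pending.values()) / total for k in keys}
        self.ledger.append({"type": "merge", "round": round_no, "params": merged})
        self.pending.clear()
        return merged

ledger = Ledger()
contract = MergeContract(ledger, peers=["node-a", "node-b", "node-c"])
contract.submit("node-a", 1, {"w": 2.9, "b": 1.1}, n_samples=20)  # returns None: still waiting
contract.submit("node-b", 1, {"w": 3.0, "b": 1.0}, n_samples=50)  # returns None: still waiting
merged = contract.submit("node-c", 1, {"w": 3.1, "b": 0.9}, n_samples=30)
print(merged, ledger.verify(), len(ledger.blocks))
```

Each block's hash covers the previous block's hash, so editing any recorded update afterwards makes `ledger.verify()` return `False`. On a real blockchain every peer would hold a copy of the ledger and execute the same contract code, which removes the need for a trusted central server.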

Swarm in action

Some companies have begun leveraging swarm intelligence. For example, Italian startup Cubbit has developed distributed cloud storage technology that uses swarm intelligence to deliver speed and privacy, with each Cubbit Cell acting as a node in the swarm. Maintaining such a system also costs much less than running traditional data centres.

Dutch company DoBots specialises in swarm robotics. Its FireSwarm project uses a group of UAVs designed to find dune fires. German startup Brainanalyzed helps fintech customers predict market movements and scale profits. It combines swarm intelligence with data analytics to improve financial decision-making.

Swarm intelligence is also used for optimisation problems such as staff scheduling. Particle Swarm Optimization (PSO) has been used to solve nurse rostering problems. Ant Colony Optimization (ACO) can be applied to vehicle routing problems, and the Artificial Bee Colony (ABC) algorithm has been applied to group formation and task allocation in rescue robots.
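The following Python sketch illustrates the first of these algorithms with a basic implementation of particle swarm optimisation. Each particle moves through the search space, pulled towards its own best-known position and towards the best position found by the whole swarm. A real nurse-rostering solver would need a discrete encoding of shifts and penalties for constraint violations. Here, as a simplifying assumption, the swarm minimises a toy continuous function (`sphere`). The inertia and coefficient values are common choices rather than tuned settings.

```python
import random

def sphere(position):
    """Toy objective: sum of squares, minimised (value 0) at the origin."""
    return sum(x * x for x in position)

def pso(objective, dim, n_particles=20, iterations=100, bounds=(-5.0, 5.0),
        inertia=0.7, c_personal=1.5, c_social=1.5, seed=0):
    """Minimise `objective` over a box with a basic particle swarm."""
    rng = random.Random(seed)
    lo, hi = bounds
    positions = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    velocities = [[0.0] * dim for _ in range(n_particles)]
    # Each particle remembers the best position it has visited so far.
    personal_best = [p[:] for p in positions]
    personal_score = [objective(p) for p in positions]
    # The swarm shares the best position any particle has found.
    best_idx = min(range(n_particles), key=lambda i: personal_score[i])
    global_best = personal_best[best_idx][:]
    global_score = personal_score[best_idx]

    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity = momentum + pull towards personal best + pull towards swarm best.
                velocities[i][d] = (inertia * velocities[i][d]
                                    + c_personal * r1 * (personal_best[i][d] - positions[i][d])
                                    + c_social * r2 * (global_best[d] - positions[i][d]))
                # Move, clamping the particle to the search bounds.
                positions[i][d] = min(max(positions[i][d] + velocities[i][d], lo), hi)
            score = objective(positions[i])
            if score < personal_score[i]:
                personal_best[i], personal_score[i] = positions[i][:], score
                if score < global_score:
                    global_best, global_score = positions[i][:], score
    return global_best, global_score

best_position, best_score = pso(sphere, dim=3)
print(best_position, best_score)
```

No single particle is told where the minimum is. The swarm converges because each particle shares its discoveries through `global_best`, which is the collective behaviour that these swarm algorithms borrow from nature.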

The Swarm Learning code can be downloaded from HPE, and the code for the models and data processing used in the Nature paper's analysis is available on GitHub.