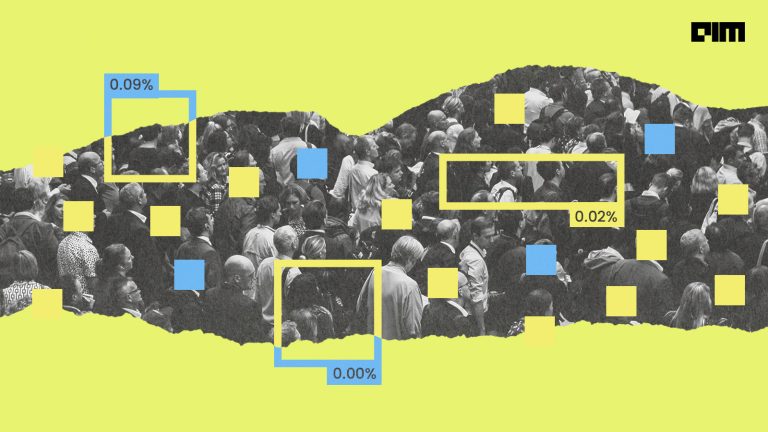

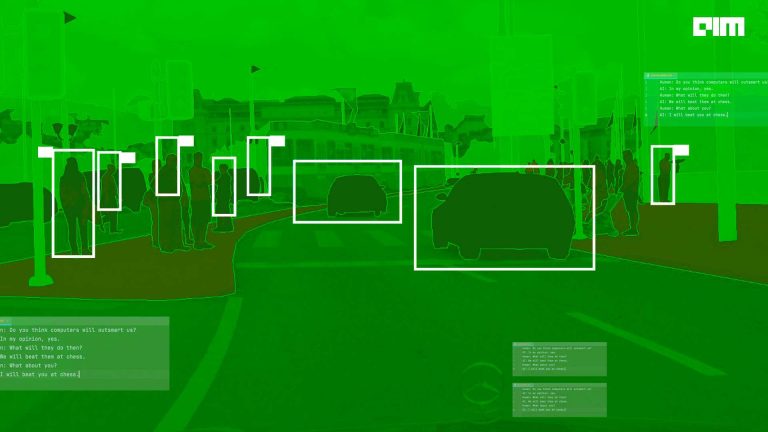

Object detection is the task of training computers to detect objects in images or videos. Over the years, many companies and researchers have created object detection architectures and algorithms. In the race to build the most accurate and efficient model, the Google Brain team released EfficientDet, which achieved state-of-the-art accuracy on object detection tasks while using far fewer parameters and FLOPs than previous detectors. It outperforms architectures such as YOLOv3 and AmoebaNet-based detectors with much less computation.

EfficientDet builds on EfficientNet, the image classification model family that was state of the art in 2019. EfficientNet's baseline network was found with AutoML MNAS (neural architecture search); the family achieved a state-of-the-art 84.4% top-1 accuracy on ImageNet and used a highly effective compound coefficient to scale up CNNs (depth, width, and input resolution together) in a more structured manner.
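To make the compound coefficient concrete, here is a small sketch of EfficientNet's scaling rule: depth, width, and resolution are multiplied by alpha^phi, beta^phi, and gamma^phi for a single user-chosen coefficient phi, with alpha * beta^2 * gamma^2 close to 2 so that FLOPs roughly double per step. The constants alpha = 1.2, beta = 1.1, gamma = 1.15 are the values reported in the EfficientNet paper; the published B1-B7 variants do not follow these multipliers exactly, so treat the output as illustrative.

```python
# Scaling constants reported in the EfficientNet paper for the B0 baseline.
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_scale(phi: float) -> dict:
    """Return depth/width/resolution multipliers for compound coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = GAMMA ** phi
    # FLOPs grow roughly with depth * width^2 * resolution^2 (about 2^phi here).
    flops = depth * width ** 2 * resolution ** 2
    return {'depth': depth, 'width': width, 'resolution': resolution, 'flops': flops}


for phi in range(4):
    s = compound_scale(phi)
    print(f"phi={phi}: depth x{s['depth']:.2f}, width x{s['width']:.2f}, "
          f"resolution x{s['resolution']:.2f}, FLOPs x{s['flops']:.2f}")
```

A single knob (phi) replaces three separate, hand-tuned scaling decisions, which is the point of the "more structured manner" described above.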

Many object detection techniques have been invented over the years, and some of these detection models are discussed here, but EfficientDet has raised the bar, taking accuracy and efficiency to new levels.

EfficientDet

EfficientDet is an object detection model created by the Google Brain team, and the research paper describing the approach was released on 27 July 2020 here. As already discussed, it builds on EfficientNet, and with new neural network design choices for the object detection task, it outperforms the RetinaNet, Mask R-CNN, and YOLOv3 architectures. The EfficientDet architecture uses an ImageNet-pretrained EfficientNet as its backbone.

- With EfficientNet-B3 as the backbone, accuracy already improves by about 3 AP points.

- The largest model achieved 55.1 AP (average precision) on COCO test-dev with 77 million parameters.

- It runs about 5x faster on CPU and 2x-4x faster on GPU compared with other models.

EfficientDet proposed the following new optimisation techniques to improve efficiency:

- BiFPN

- A new compound scaling technique
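The compound scaling rule for EfficientDet itself can be sketched from the formulas in the EfficientDet paper: BiFPN width grows as 64 * 1.35^phi, BiFPN depth as 3 + phi, box/class head depth as 3 + floor(phi / 3), and input resolution as 512 + 128 * phi. The function below only evaluates those formulas; the released configurations round the widths to hardware-friendly values (for example 88 channels for D1 instead of 86.4) and deviate for the largest models, so the numbers are approximate.

```python
def efficientdet_config(phi: int) -> dict:
    """Evaluate EfficientDet's compound scaling formulas for coefficient phi."""
    return {
        'bifpn_width': 64 * (1.35 ** phi),   # BiFPN channels grow exponentially
        'bifpn_depth': 3 + phi,              # number of BiFPN layers grows linearly
        'head_depth': 3 + phi // 3,          # layers in the box/class prediction heads
        'input_size': 512 + phi * 128,       # input resolution grows in steps of 128
    }


for phi in range(5):
    c = efficientdet_config(phi)
    print(f"D{phi}: width~{c['bifpn_width']:.0f}, BiFPN layers={c['bifpn_depth']}, "
          f"head layers={c['head_depth']}, input={c['input_size']}")
```

As with EfficientNet, one coefficient scales backbone, feature network, heads, and resolution jointly instead of tuning each separately.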

Let’s understand the techniques that make this model so efficient.

BiFPN

As shown in the diagram above, BiFPN stands for weighted Bi-directional Feature Pyramid Network, which uses fast normalized feature fusion. It is a type of feature pyramid network that allows easy and fast multi-scale feature fusion. The BiFPN idea was inspired by FPN (Feature Pyramid Network), in which information is inherently limited by a one-way (top-down) information flow.

Traditionally, FPN techniques treat all input features equally, even though they have different resolutions and therefore usually contribute unequally to the output feature.

PANet adds an extra bottom-up path on top of FPN's top-down path, at the cost of more computation.

NAS-FPN was discovered with neural architecture search (NAS), but the resulting architecture is irregular. In BiFPN, information flows both top-down and bottom-up, and BiFPN adds an extra learnable weight for each input so that the network can learn how much each input feature should contribute.
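A minimal NumPy sketch of BiFPN's fast normalized fusion, O = sum_i(w_i * I_i) / (eps + sum_j w_j), where each weight is kept non-negative with a ReLU. In the real network the weights are learned during training and eps = 0.0001; here they are fixed numbers so the effect is easy to see, and all inputs are assumed to be already resized to the same shape.

```python
import numpy as np


def fast_normalized_fusion(inputs, weights, eps=1e-4):
    """Fuse same-shaped feature maps with non-negative per-input weights."""
    w = np.maximum(np.asarray(weights, dtype=np.float64), 0.0)  # ReLU keeps w_i >= 0
    stacked = np.stack(inputs, axis=0)         # shape: (num_inputs, H, W, C)
    fused = np.tensordot(w, stacked, axes=1)   # sum_i w_i * I_i -> (H, W, C)
    return fused / (eps + w.sum())             # normalize by the total weight


# Two inputs at the same resolution, e.g. a level's own feature and a resized neighbour.
p_in = np.ones((4, 4, 8))
p_up = np.full((4, 4, 8), 3.0)
out = fast_normalized_fusion([p_in, p_up], weights=[1.0, 1.0])
print(out[0, 0, 0])  # close to 2.0: an (almost) equal-weight average of 1 and 3
```

Dividing by the weight sum instead of applying a softmax keeps each weight in [0, 1] at lower cost, which is why the paper calls it "fast" normalized fusion.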

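BiFPN also simplifies the cross-scale connections: it removes nodes that have only one input edge and adds an extra edge from the original input to the output node when both are at the same level, fusing more features at little extra cost. It treats each bidirectional (top-down and bottom-up) path as one feature network layer and repeats that layer multiple times to enable more high-level feature fusion.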
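The topology described above can be sketched for one BiFPN layer over levels P3 to P7. This is a simplified illustration, not the official implementation: each level is a single-channel 2D array, resizing uses nearest-neighbour upsampling and 2x2 max pooling, fusion weights are fixed to 1 instead of learned, and the depthwise separable convolution that the real network applies after each fusion is omitted. It does keep the structure described in the paper: no top-down node for P7 (it would have a single input), an extra same-level edge from each input to its output node, and the layer repeated several times.

```python
import numpy as np


def fuse(inputs, weights=None, eps=1e-4):
    """Fast normalized fusion (weights fixed to 1 here instead of learned)."""
    w = np.ones(len(inputs)) if weights is None else np.maximum(weights, 0.0)
    return sum(wi * x for wi, x in zip(w, inputs)) / (eps + w.sum())


def upsample(x):
    """Nearest-neighbour 2x upsampling of an (H, W) map."""
    return x.repeat(2, axis=0).repeat(2, axis=1)


def downsample(x):
    """2x2 max pooling of an (H, W) map with even H and W."""
    h, w = x.shape
    return x.reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))


def bifpn_layer(feats):
    """One BiFPN layer over [P3, P4, P5, P6, P7] (P3 has the highest resolution)."""
    p3, p4, p5, p6, p7 = feats
    # Top-down path: intermediate nodes for P6..P4 only.
    p6_td = fuse([p6, upsample(p7)])
    p5_td = fuse([p5, upsample(p6_td)])
    p4_td = fuse([p4, upsample(p5_td)])
    p3_out = fuse([p3, upsample(p4_td)])
    # Bottom-up path: P4..P6 outputs also take the original same-level input.
    p4_out = fuse([p4, p4_td, downsample(p3_out)])
    p5_out = fuse([p5, p5_td, downsample(p4_out)])
    p6_out = fuse([p6, p6_td, downsample(p5_out)])
    p7_out = fuse([p7, downsample(p6_out)])
    return [p3_out, p4_out, p5_out, p6_out, p7_out]


# Levels P3..P7 for a 512x512 input (strides 8 to 128), one channel each.
rng = np.random.default_rng(0)
features = [rng.standard_normal((512 // 2 ** k, 512 // 2 ** k)) for k in range(3, 8)]
for _ in range(3):  # stack the same layer repeatedly (3 layers, as in D0)
    features = bifpn_layer(features)
print([f.shape for f in features])  # [(64, 64), (32, 32), (16, 16), (8, 8), (4, 4)]
```

Because every layer maps the five levels back to the same shapes, layers can be stacked as many times as the compound scaling rule asks for.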

With all these optimisations, BiFPN further improved accuracy by about 4 AP points.

Model performance

When evaluated on the COCO dataset, EfficientDet achieved an mAP (mean average precision) of 52.2 with 9.4x less computation, exceeding the previous state-of-the-art (SOTA) models by 1.5 points.

Implementation

As the model is open source, let’s use EfficientDet for some object detection tasks. Many pre-trained EfficientDet models are available online, for example through Monk, a computer vision toolkit for low-code, easily installable object detection pipelines. Monk already provides wrappers for different applications such as wheat head detection in the field, underwater imagery object detection, person detection in infrared imagery, and more here.

Multiple object detection using Google EfficientDet

To understand how it works, we will use the official Python notebook provided by the Google AutoML team.

- Install the packages and download the source code and images with the script below. It clones the automl repository and installs all dependencies from the requirements.txt file.

```python
%%capture
#@title
import os
import sys

import tensorflow.compat.v1 as tf

# Download source code (only on the first run; later runs just update it).
if "efficientdet" not in os.getcwd():
  !git clone --depth 1 https://github.com/google/automl
  os.chdir('automl/efficientdet')
  sys.path.append('.')
  # Install the project's dependencies and the COCO evaluation API.
  !pip install -r requirements.txt
  !pip install -U 'git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI'
else:
  !git pull
```

- You can build a parameter-passing interface inside your notebook with Colab form fields (`#@param`). Here we use EfficientDet-D0, which has 34.6 mAP, 10.2 ms batch-1 latency, and 97 fps throughput.
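The later cells use `MODEL`, `ckpt_path`, `download()`, `min_score_thresh`, and `max_boxes_to_draw`, which this parameter cell is expected to define. A minimal plain-Python sketch of such a cell follows; the checkpoint URL pattern and the threshold values are assumptions for illustration, so check them against the automl repository's README and notebook.

```python
import os
import tarfile
import urllib.request

# Form fields: in Colab, a trailing "#@param" turns the line into an input widget.
MODEL = 'efficientdet-d0'  #@param
# Example values (assumptions): minimum score to draw a box, and max boxes drawn.
min_score_thresh = 0.35  #@param
max_boxes_to_draw = 200  #@param

# ASSUMPTION: public location of the pretrained COCO checkpoints.
CKPT_URL_TEMPLATE = ('https://storage.googleapis.com/cloud-tpu-checkpoints/'
                     'efficientdet/coco/{name}.tar.gz')


def checkpoint_url(model_name: str) -> str:
    """Return the (assumed) download URL for a pretrained checkpoint."""
    return CKPT_URL_TEMPLATE.format(name=model_name)


def download(model_name: str) -> str:
    """Download and extract a checkpoint once; return its directory path."""
    if model_name not in os.listdir():
        archive = model_name + '.tar.gz'
        urllib.request.urlretrieve(checkpoint_url(model_name), archive)
        with tarfile.open(archive) as tar:
            tar.extractall()
    return os.path.join(os.getcwd(), model_name)
```

After running it, call `ckpt_path = download(MODEL)` so that the later `{ckpt_path}` references resolve.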

- View the graph in TensorBoard:

```python
# Build the model and write its graph to ./logs (output suppressed).
!python model_inspect.py --model_name={MODEL} --logdir=logs &> /dev/null
%load_ext tensorboard
%tensorboard --logdir logs
```

- Next, let’s benchmark latency. There are two main types: network latency and end-to-end latency.

- To measure network latency (from the first convolution to the last class prediction output), use the following command:

```python
!python model_inspect.py --runmode=bm --model_name=efficientdet-d4 --hparams="mixed_precision=true"
```

- To measure end-to-end latency (from the input image to the final rendered image, including image processing), use:

```python
m = 'efficientdet-d4'  # @param
batch_size = 1  # @param
# download() comes from the notebook's parameter cell; it fetches the checkpoint.
m_path = download(m)

# Export a saved model (with mixed precision) from the checkpoint.
saved_model_dir = 'savedmodel'
!rm -rf {saved_model_dir}
!python model_inspect.py --runmode=saved_model --model_name={m} \
  --ckpt_path={m_path} --saved_model_dir={saved_model_dir} \
  --batch_size={batch_size} --hparams="mixed_precision=true"

# Benchmark the exported frozen graph end to end on a test image.
!python model_inspect.py --runmode=saved_model_benchmark --model_name={m} \
  --ckpt_path={m_path} --saved_model_dir={saved_model_dir}/{m}_frozen.pb \
  --batch_size={batch_size} --hparams="mixed_precision=true" --input_image=testdata/img1.jpg
```
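`model_inspect.py` handles the measurement itself, but if you want to time your own inference call, the usual approach is to run a few warm-up iterations (to exclude one-time costs such as graph building) and then average many timed runs. The helper below is a generic sketch of that approach, not code from the automl repository; `fn` stands for any zero-argument callable that runs one batch, and `batch_size` is only used to convert latency into throughput.

```python
import statistics
import time
from typing import Callable


def benchmark(fn: Callable[[], object], warmup: int = 5, runs: int = 20,
              batch_size: int = 1) -> dict:
    """Time fn() after warm-up; return mean/median latency in ms and throughput."""
    for _ in range(warmup):  # warm-up runs are executed but not timed
        fn()
    times_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        times_ms.append((time.perf_counter() - start) * 1000.0)
    mean_ms = statistics.mean(times_ms)
    # Throughput in images per second; guard against a zero timer reading.
    fps = batch_size * 1000.0 / mean_ms if mean_ms > 0 else float('inf')
    return {'mean_ms': mean_ms, 'median_ms': statistics.median(times_ms), 'fps': fps}


# Stand-in workload; replace the lambda with a call that runs your model once.
result = benchmark(lambda: sum(i * i for i in range(10_000)))
print(f"{result['mean_ms']:.2f} ms/iter, {result['fps']:.1f} fps")
```

Reporting the median alongside the mean helps when a few runs are slowed down by unrelated background work.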

- Run inference on images. The code below first exports a saved model and then runs the automl `model_inspect.py` script with arguments such as the model name, run mode, and checkpoint path.

```python
# First, export a saved model.
saved_model_dir = 'savedmodel'
!rm -rf {saved_model_dir}
!python model_inspect.py --runmode=saved_model --model_name={MODEL} \
  --ckpt_path={ckpt_path} --saved_model_dir={saved_model_dir}

# Then run saved_model_infer to make an inference.
# Note: batch_size and image_size must be the same as when the model was exported.
serve_image_out = 'serve_image_out'
!mkdir -p {serve_image_out}
!python model_inspect.py --runmode=saved_model_infer \
  --saved_model_dir={saved_model_dir} \
  --model_name={MODEL} --input_image=testdata/img1.jpg \
  --output_image_dir={serve_image_out} \
  --min_score_thresh={min_score_thresh} --max_boxes_to_draw={max_boxes_to_draw}
```
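The two flags passed to `saved_model_infer` control which detections get drawn. A rough plain-Python sketch of their effect, assuming each detection is a hypothetical `(score, box, class_id)` tuple: drop boxes below `min_score_thresh`, sort the rest by score, and keep at most `max_boxes_to_draw`. This illustrates the idea and is not the repository's visualization code.

```python
from typing import List, Tuple

# Hypothetical detection format: (score, (ymin, xmin, ymax, xmax), class_id).
Detection = Tuple[float, Tuple[float, float, float, float], int]


def select_detections(detections: List[Detection], min_score_thresh: float,
                      max_boxes_to_draw: int) -> List[Detection]:
    """Keep confident detections, highest score first, capped at max_boxes_to_draw."""
    kept = [d for d in detections if d[0] >= min_score_thresh]
    kept.sort(key=lambda d: d[0], reverse=True)
    return kept[:max_boxes_to_draw]


dets = [
    (0.92, (10.0, 20.0, 110.0, 220.0), 1),
    (0.30, (5.0, 5.0, 50.0, 50.0), 3),
    (0.61, (200.0, 40.0, 260.0, 120.0), 18),
]
print(select_detections(dets, min_score_thresh=0.35, max_boxes_to_draw=1))
# [(0.92, (10.0, 20.0, 110.0, 220.0), 1)]
```

Raising `min_score_thresh` trades recall for fewer false positives in the rendered image; `max_boxes_to_draw` only caps clutter.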

- Display the output image with IPython's `display` module:

```python
from IPython import display
display.display(display.Image(os.path.join(serve_image_out, '0.jpg')))
```
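The next step exports the model with a custom `--hparams="image_size=1920x1280"`. The hypothetical helper below builds such a string from an arbitrary image size, under two assumptions worth checking against the repository's hparams parser: the string is read as width x height, and both sides should be multiples of 128 (2^7, the stride of the coarsest feature level P7). Both 1920 and 1280 satisfy the second assumption.

```python
def image_size_hparam(width: int, height: int, multiple: int = 128) -> str:
    """Round width/height up to a multiple and format an 'image_size=WxH' string."""
    def round_up(v: int) -> int:
        return -(-v // multiple) * multiple  # ceiling division, then scale back up

    return f"image_size={round_up(width)}x{round_up(height)}"


print(image_size_hparam(1920, 1280))  # image_size=1920x1280
print(image_size_hparam(1000, 700))   # image_size=1024x768
```

Rounding up rather than down means the image is padded instead of cropped, so no content is lost at the borders.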

- You can also run the trained model on your own image (here `img.png`) at a custom input size by exporting the model and running inference as follows:

```python
serve_image_out = 'serve_image_out'
!mkdir -p {serve_image_out}
saved_model_dir = 'savedmodel'
!rm -rf {saved_model_dir}

# Step 1: export the model at a custom input size.
!python model_inspect.py --runmode=saved_model \
  --model_name=efficientdet-d0 --ckpt_path=efficientdet-d0 \
  --hparams="image_size=1920x1280" --saved_model_dir={saved_model_dir}

# Step 2: run inference with the saved model.
!python model_inspect.py --runmode=saved_model_infer \
  --model_name=efficientdet-d0 --saved_model_dir={saved_model_dir} \
  --input_image=img.png --output_image_dir={serve_image_out} \
  --min_score_thresh={min_score_thresh} --max_boxes_to_draw={max_boxes_to_draw}

# Show the annotated output image.
from IPython import display
display.display(display.Image(os.path.join(serve_image_out, '0.jpg')))
```

Conclusion

The Google Brain team has developed one of the most accurate and efficient object detection models so far. In this article, we walked through the starter code for object detection provided in Google's official GitHub repository.

Many other repositories and framework implementations of EfficientDet are also available. Some repositories worth exploring for more information about this framework are: