A year into the COVID-19 outbreak, researchers, healthcare workers, and hospital staff are still struggling to contain it. Accurately predicting the course of the disease has been a challenge, and the pandemic has also strained hospitals’ resources.

To address this, Facebook AI has introduced pre-trained machine learning (ML) models to help doctors predict how a COVID patient’s condition will progress, so that they can make effective clinical decisions and allocate resources. The research is part of an ongoing collaboration with NYU Langone Health’s Predictive Analytics Unit and Department of Radiology, where the ML models are used to predict patient deterioration from chest X-rays.

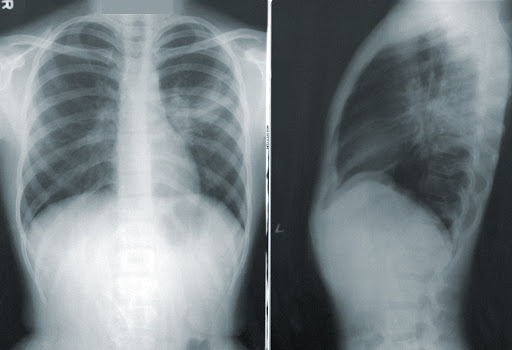

In a recent paper, Facebook AI researchers showed how self-supervised models based on the momentum contrast (MoCo) method could help in learning more general image representations for downstream tasks. The experiments showed that an ML model using sequential chest X-ray images can predict the deterioration of COVID patients up to 96 hours in advance, and that it outperformed a model using a single image. The researchers believe a model like this can help healthcare providers predict the demand for resources that are critical when dealing with high-risk patients.

Tech Explained

Supervised deep learning methods for image-based diagnosis have been a standard approach to predicting the risk of deterioration in COVID patients. However, such methods come with constraints, such as the need to collect labelled data and the cost of training. Experts also believe that large datasets often do not capture data from emerging diseases such as COVID-19, which can restrict the use of deep learning methods.

That is why the Facebook AI team relied on the self-supervised Momentum Contrast technique to learn image representations for classification. According to the authors, this allowed the group to extract features that are independent of the labels or tasks associated with the pre-training dataset.

Diagram for momentum contrast training.
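
As a rough illustration of the two ideas behind momentum contrast, the sketch below shows a slowly updated "key" encoder and a contrastive loss that pulls two views of the same image together while pushing them away from a queue of other images. It is a minimal pure-Python sketch, not the team's implementation: the function names are hypothetical, and the momentum value `0.999` and temperature `0.07` are illustrative assumptions rather than settings taken from the article.

```python
import math


def momentum_update(key_params, query_params, m=0.999):
    """Move the key-encoder weights slowly toward the query-encoder weights.

    m is an illustrative momentum value (assumption, not from the article).
    """
    return [m * k + (1.0 - m) * q for k, q in zip(key_params, query_params)]


def l2_normalize(v):
    """Scale a vector to unit length (a zero vector is left unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def info_nce_loss(query, positive_key, negative_keys, temperature=0.07):
    """Contrastive loss: the query should match its positive key, not the negatives.

    temperature is an illustrative value (assumption, not from the article).
    """
    q = l2_normalize(query)
    logits = [dot(q, l2_normalize(positive_key)) / temperature]
    logits += [dot(q, l2_normalize(k)) / temperature for k in negative_keys]
    # Numerically stable cross-entropy with the positive key at index 0.
    top = max(logits)
    log_sum = top + math.log(sum(math.exp(z - top) for z in logits))
    return log_sum - logits[0]


# Toy example: two augmented views of one image and a small queue of other images.
view_a = [1.0, 0.5, -0.2, 0.0]
view_b = [1.01, 0.51, -0.19, 0.01]
queue = [[-1.0, 0.2, 0.3, 0.5], [0.0, -1.0, 1.0, 0.0]]
print(info_nce_loss(view_a, view_b, queue))
```

The loss is small when the two views of the same X-ray have similar embeddings and grows when the query looks more like an entry in the queue, which is what lets the encoder learn without any labels.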

For the experiment, the team pre-trained a model using MoCo on two large public chest X-ray datasets, MIMIC-CXR and CheXpert, which together contain more than 500,000 chest radiographs. The pre-trained model was then used to build classifiers for predicting the clinical deterioration of COVID patients.

To evaluate the approach’s effectiveness, the team applied it to three downstream tasks: adverse event prediction from single images, oxygen requirement prediction from single images, and adverse event prediction from multiple images. The team noted that, with a DenseNet architecture, self-supervised pre-training achieved higher performance in predicting an adverse event at all time points.

Model outputs

According to the paper, the team fine-tuned the model on the NYU COVID dataset, which contains over 26,000 X-ray images from 4,914 patients. Each image is labelled with whether the patient’s condition deteriorated within 24, 48, 72, or 96 hours of the scan.
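
One way to picture these labels is as four yes/no targets per X-ray, one for each time window. The sketch below is an assumption about how such labels could be derived, not the dataset's actual schema: the function name, the `hours_to_event` input, and the choice to treat the window boundary as inclusive are all hypothetical.

```python
HORIZONS_HOURS = (24, 48, 72, 96)


def deterioration_labels(hours_to_event, horizons=HORIZONS_HOURS):
    """Return one 0/1 label per time window for a single X-ray.

    hours_to_event is the time from the scan to the first adverse event,
    or None if no event was recorded (hypothetical input format).
    """
    if hours_to_event is None:
        return [0] * len(horizons)
    # Assumption: an event exactly at the boundary counts as "within" the window.
    return [1 if hours_to_event <= h else 0 for h in horizons]


# A patient who deteriorated 30 hours after the scan is negative for the
# 24-hour window and positive for the 48-, 72-, and 96-hour windows.
print(deterioration_labels(30))
```

Framing the problem this way lets a single model output four probabilities per image instead of training a separate model for each window.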

The results

The team built two kinds of classifiers for COVID deterioration tasks: one that uses a single X-ray, and another that uses a sequence of X-rays, aggregating the image features with a Transformer model. According to the results, the single-image model achieved an area under the ROC curve (AUC) of 0.742 for predicting an adverse event within 96 hours, while the new Transformer-based architecture attained an AUC of 0.786 for predicting an adverse event at 96 hours.
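
To give a feel for what aggregating features across a sequence of X-rays can look like, the sketch below pools several per-image feature vectors into one using dot-product attention, the basic operation inside a Transformer. This is a deliberately simplified stand-in, not the architecture from the paper: it uses a single fixed query vector, no learned projections, and no positional information, and the function names are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that are positive and sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(image_features, query):
    """Combine per-image feature vectors into one vector using attention weights.

    image_features: list of equal-length feature vectors, one per X-ray.
    query: vector of the same length that scores how relevant each image is
           (in a real model this would be learned; here it is an assumption).
    """
    dim = len(query)
    scores = [
        sum(q * f for q, f in zip(query, feat)) / math.sqrt(dim)
        for feat in image_features
    ]
    weights = softmax(scores)
    pooled = [
        sum(w * feat[i] for w, feat in zip(weights, image_features))
        for i in range(dim)
    ]
    return pooled, weights


# Three X-rays from one patient, each already reduced to a 2-D feature vector.
features = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0]]
pooled, weights = attention_pool(features, query=[0.0, 1.0])
print(pooled, weights)
```

The pooled vector would then be passed to a classifier head. Images whose features align more strongly with the query receive larger weights, so the model can emphasise the most informative scans in a sequence.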

Wrapping Up

The COVID pandemic has massively increased healthcare providers’ need to understand COVID patients’ prognosis in order to make effective clinical decisions. Given the uncertainty surrounding the disease, it has been challenging to develop machine learning models that predict its risks. Much of this can be attributed to the difficulty most medical centres face in gathering large datasets.

To address this problem, Facebook AI turned to self-supervised pre-training with a contrastive loss. It is also open-sourcing its pre-trained models so that other researchers and healthcare providers can fine-tune them on their own X-ray datasets, and hopes this will help the broader community with resource planning.

Read the paper here.