A couple of years ago, Google introduced Dataset Search, a service pitched as a one-stop solution to dataset woes. After a beta launch in 2018, it was fully launched in January 2020. In a paper titled “Google Dataset Search by the Numbers”, Google gave an overview of the available datasets, reported current metrics and insights from its analysis, and suggested best practices for publishing scientific datasets in the future.

What Do The Numbers Say

According to Google, the Dataset Search corpus consists of nearly 31 million datasets from more than 4,600 internet domains. Half of these are from .com domains, while .org and governmental domains are also well represented.

Major Sources

Peer-reviewed journals such as Nature have contributed valuable datasets, with support from DataCite, which provides digital object identifiers (DOIs) for them. DOIs make datasets easy to cite, which in turn improves their availability. However, Google pointed out that only about 11% of the datasets in the corpus have DOIs. Of these, about 2.3M come mainly from two sites: datacite.org and figshare.com.
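To make the citation point concrete, here is a minimal sketch of how a DOI becomes a citable link. It assumes the public resolver at `https://doi.org/`; the function name `doi_to_url` and the sample DOI are hypothetical and do not come from Google's paper.

```python
from urllib.parse import quote

# Assumption: the public DOI resolver; the article itself does not name it.
DOI_RESOLVER = "https://doi.org/"


def doi_to_url(doi: str) -> str:
    """Turn a bare DOI (or one pasted with a common prefix) into a resolver URL."""
    cleaned = doi.strip()
    # Drop prefixes that are often copied along with the DOI.
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if cleaned.lower().startswith(prefix):
            cleaned = cleaned[len(prefix):]
            break
    # A DOI starts with "10." and has a suffix after the first "/".
    if not cleaned.startswith("10.") or "/" not in cleaned:
        raise ValueError(f"not a DOI: {doi!r}")
    # Percent-encode unusual characters but keep the "/" separators.
    return DOI_RESOLVER + quote(cleaned, safe="/")


# Hypothetical DOI, for illustration only.
print(doi_to_url("doi:10.1234/example.dataset.v1"))
```

Because the link goes through the resolver rather than pointing at a host directly, a citation written this way does not need to change if the dataset later moves.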

Open licensing improves the reusability of data. Here are the licensing statistics:

- Only 34% of datasets specify license information.

- Of these, recognisable licenses account for only 72% of the cases. These typically come from government portals.

- 89.5% of these datasets are either accessible for free or use a license that allows redistribution, or both.

- Of these open datasets, 5.6M (91%) are open for commercial reuse.

- Only 44% of datasets specify download information in their metadata.

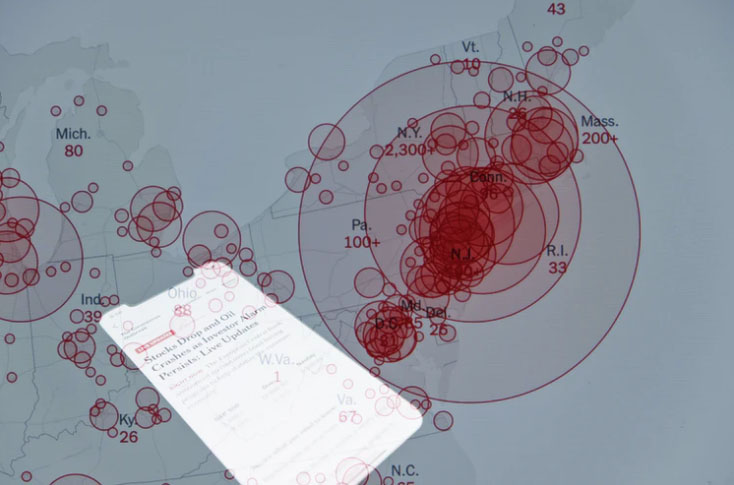

What Do Users Search For

Overall, 2.1M unique datasets from 2.6K domains appeared in the top 100 Dataset Search results over 14 days in May 2020. The distribution of topics being queried differs from that of the corpus as a whole. As illustrated above, geoscience makes up a smaller share of search results than of the corpus, while medicine makes up a larger one, which, according to the survey, may be influenced by the timing of the analysis.

Search results will keep changing, but the reusability and discoverability of datasets remain crucial for seamless scientific discourse. The team behind the survey recommends the following practices to those who publish datasets:

- Open licenses are essential, but so is making the licensing information easy to read, ideally in a machine-readable format, so that openly licensed datasets can be identified easily. According to Google, encouraging and enabling scientists to choose licenses for their data will result in many more datasets being openly available.

- Dataset metadata should be on pages that are accessible to web crawlers. As with licensing information, these pages should provide the metadata in machine-readable formats to improve discoverability.

- Publishers who do not publish regularly should prefer popular portals over personal sites. For instance, sites like Kaggle will show up in results more often than a portal used for a one-off publication. The report stated that data repositories, such as Figshare, Zenodo, DataDryad, Kaggle Datasets and many others, are an excellent way to ensure dataset persistence. Many of these repositories have agreements with libraries to preserve data in perpetuity.

- Dataset metadata should explicitly indicate the data collection sources and other changes. Since datasets are published in multiple repositories, having provenance information helps users understand who collected the data, where the primary source of the dataset is, or how it might have changed.

- Digital Object Identifiers (DOIs), as discussed above, are critical for long-term tracking and usability. Not only do these identifiers allow for much easier citation of datasets and version tracking, but they are also dereferenceable: if a dataset moves, the identifier can point to a different location.
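The recommendations above can be sketched as a single machine-readable record. This minimal example assumes the schema.org `Dataset` vocabulary embedded as JSON-LD, which the article does not name explicitly. The helper names (`build_dataset_jsonld`, `to_script_tag`), the example.org URLs and the DOI are all hypothetical placeholders.

```python
import json


def build_dataset_jsonld(name, description, license_url, doi,
                         download_url, encoding_format, source_url=None):
    """Assemble schema.org Dataset metadata as a plain dict (hypothetical helper)."""
    record = {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "description": description,
        # Machine-readable license: a URL rather than free text.
        "license": license_url,
        # Persistent identifier, expressed as a resolvable DOI link.
        "identifier": f"https://doi.org/{doi}",
        # Download information, which the article notes is often missing.
        "distribution": [{
            "@type": "DataDownload",
            "contentUrl": download_url,
            "encodingFormat": encoding_format,
        }],
    }
    if source_url:
        # Provenance: where the primary copy of the data lives.
        record["isBasedOn"] = source_url
    return record


def to_script_tag(record):
    """Wrap the record in the script tag that a crawlable HTML page would embed."""
    body = json.dumps(record, indent=2)
    return '<script type="application/ld+json">\n' + body + '\n</script>'


# All values below are placeholders for illustration.
record = build_dataset_jsonld(
    name="Example rainfall measurements",
    description="Daily rainfall totals from a hypothetical weather station.",
    license_url="https://creativecommons.org/licenses/by/4.0/",
    doi="10.1234/example.rainfall.v1",
    download_url="https://example.org/data/rainfall.csv",
    encoding_format="text/csv",
    source_url="https://example.org/original/rainfall",
)
print(to_script_tag(record))
```

Keeping the license, identifier, download link and provenance in one record means a crawler can pick up all four from the same page without parsing free text.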

Learn more about the results here.