Google AI has introduced an ML-based approach for game developers to efficiently train game testing agents and find bugs. Last year, Sony had pulled Cyberpunk 2077 from its digital store over bug issues and its developer CD Projekt Red is now facing a class action lawsuit from its investors. Assassin’s Creed Valhalla and cartoon shooter XIII also have a huge bug problem.

Google AI’s approach calls for minimal machine learning experience, works with a wide range of game genres, and can train an ML strategy that activates game actions from the game state in less than an hour on a single game instance.

ML for video games

Video game testing helps discover and document software defects, and the most basic form of game testing is simply playing the game. Most of the bugs are easy to detect and fix, but the real challenge is locating them in the sprawling state space of a modern game. Google AI trained a system to play games at scale, minimising human labour.

Researchers including Leopold Haller and Hernan Moraldo found the most effective way to solve the problem was not to try to train a single, super-effective agent capable of playing the entire game from end-to-end but of providing developers with the competence to train an ensemble of game-testing agents, each of which could effectively execute tasks of a few minutes each, widely referred to as “gameplay loops”.

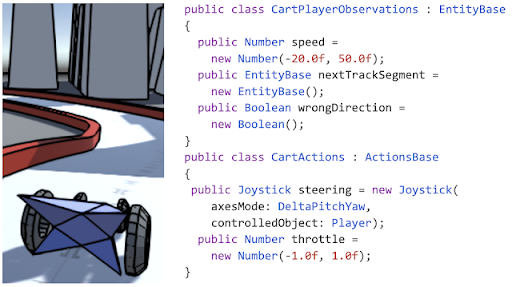

API to neural networks

This high-level, semantic API is not only simple to use, but it also allows the system to adapt to the specific game being developed — the game developer’s specific combination of API building blocks influences the network architecture choice of the researchers because it informs them about the different gaming scenario in which the system will be used.

Once the task of generating the neural network is over, researchers need to choose an algorithm to train them to play the game. Reinforcement learning was an obvious choice. However, RL algorithms typically require more data than a single game instance can generate in a reasonable length of time, and attaining acceptable results in a new domain frequently necessitates hyperparameter adjustment and a deep understanding of the ML domain.

The researchers used imitation learning (IL)– inspired by the DAgger algorithm. IL trains ML policies by observing professionals play the game and thereby needs to mimic the behaviour of the expert. It will further aid testers and game developers to provide playing demonstrations themselves – as they are experts in their field. In addition, training will now become real-time as the system drastically cuts down the training time and the amount of data required.