“Knowing how powerful machine learning has become, it’s just a matter of time before it completely takes over the animation industry.”

In recent years, deep learning has broadened the scope of animation, making it more accessible and more powerful than before. Artificial intelligence has become a shiny new weapon in the creator’s arsenal. Advances in hardware and AI have blurred the line between virtual and real characters (for example, in films like Alita: Battle Angel). Work that once took animators hours can now be automated and finished in minutes.

According to Statista, the global animation market is expected to grow from $259 billion in 2018 to $270 billion by 2020. Animation has reached new heights because of the rapid evolution of deep learning and the proliferation of software tools. Here are some examples of how artificial intelligence and machine learning are bringing animation to life in studios.

For rotoscoping

Rotoscoping is the technique of creating animation by tracing over live-action footage, frame by frame. It lets animators produce realistic characters who move like humans. The animation industry has long used rotoscoping to create visual effects that feel more fluid on screen. The original Star Wars films are an excellent example: animators used rotoscoping to create the glow of the lightsabers. Disney first used the method heavily in Snow White and the Seven Dwarfs. Actors were filmed performing the scenes, and the footage served as a guide for the animators when they drew the characters’ movements. The studio found this a useful complement to traditional hand-drawn animation and relied on live-action reference for later animated films such as Alice in Wonderland.

Video post-production in the animation industry is a repetitive process that involves manual compositing, tracking and rotoscoping. AI and deep learning tools are now beginning to automate much of this time-consuming manual work, which can reduce costs considerably.

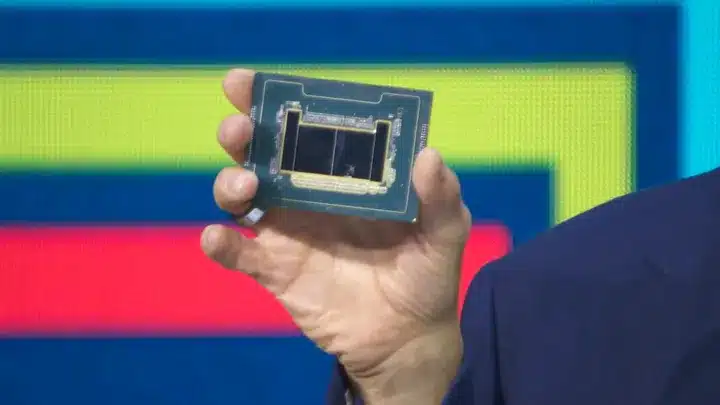

LAIKA, the renowned studio behind films like Coraline, ParaNorman, The Boxtrolls, and Kubo and the Two Strings, has partnered with Intel to develop machine learning and AI tools that accelerate rotoscoping tasks. LAIKA’s stop-motion films combine unique character designs with 3D-printed facial animation, which makes the repetitive cleanup work especially demanding. Intel is therefore focusing on tools that make the animators’ work more efficient and streamline the design process.

For 3D face modelling

Recent advances include generative models, which can produce highly realistic results in fields like image and video synthesis. Disney has made designing and simulating 3D faces easier with machine learning tools. Researchers at Disney have proposed a nonlinear 3D face-modelling method built on neural network architectures. The system learns a network that maps a neutral 3D model of a face to a target facial expression.
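To make the idea of mapping a neutral face to an expression more concrete, here is a minimal sketch in plain Python. It is not Disney’s method: the function names (`make_layer`, `apply_layer`, `deform`), the two-layer shape, and the random weights standing in for trained parameters are all assumptions made for illustration. It only shows the general pattern of feeding a neutral mesh and an expression code through a nonlinear function to get displaced vertices.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Build an n_out x n_in weight matrix. Random values stand in for
    trained parameters (an assumption; a real model would learn these)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]


def apply_layer(weights, inputs, activation=None):
    """Matrix-vector product, optionally followed by a nonlinearity."""
    out = [sum(w * x for w, x in zip(row, inputs)) for row in weights]
    return [activation(v) for v in out] if activation else out


def deform(neutral_vertices, expression_code, hidden, output):
    """Return the neutral vertices plus a nonlinear, expression-dependent offset."""
    flat = [c for vertex in neutral_vertices for c in vertex]
    # Nonlinear hidden features computed from the mesh and the expression code.
    features = apply_layer(hidden, flat + list(expression_code), math.tanh)
    offsets = apply_layer(output, features)
    # Scale by expression strength so an all-zero code leaves the face neutral
    # (a simplification chosen for this sketch).
    strength = sum(abs(c) for c in expression_code)
    moved = [f + strength * o for f, o in zip(flat, offsets)]
    return [tuple(moved[i:i + 3]) for i in range(0, len(moved), 3)]


rng = random.Random(0)
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]   # a toy two-vertex "face"
hidden = make_layer(6 + 2, 4, rng)             # 6 coordinates + 2 expression values
output = make_layer(4, 6, rng)
smile = deform(neutral, (1.0, 0.0), hidden, output)
```

In a production system the weights would be trained on captured facial scans, and the mesh would contain thousands of vertices rather than two.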

Meanwhile, animation tech start-up Midas Interactive has already put the wheels in motion. Jiayi Chong, a former Pixar technical director, used his experience to create a new tool called Midas Creature, which automates complex 2D character animation. With Midas Creature, artists and designers tell the engine to choreograph and work out the movements itself, which is meant to remove the need for manual character design.

For voice over

AI is also playing a significant role in the exhausting voice-over work of post-production. Adobe has recently created new software that uses AI-powered lip-syncing to help animators synchronize the dialogue and motion of animated characters. Adobe Character Animator uses Adobe Sensei AI technology to assign mouth shapes to mouth sounds. It syncs the character’s mouth to the recorded voice automatically, work that traditionally required frame-by-frame lip-syncing.
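The core idea of assigning mouth shapes to mouth sounds can be sketched in a few lines of Python. This is not Adobe’s API or mapping table: the phoneme symbols are ARPAbet-style codes, and the mouth-shape names, the `PHONEME_TO_MOUTH` table, and the `mouth_keyframes` function are hypothetical names chosen for illustration. The sketch assumes a speech recognizer has already produced phonemes with start times.

```python
# Hypothetical phoneme-to-mouth-shape table (not Adobe's actual mapping).
PHONEME_TO_MOUTH = {
    "AA": "open", "AE": "open", "AH": "open",
    "IY": "wide", "EH": "wide",
    "OW": "round", "AO": "round", "UW": "round", "W": "round",
    "M": "closed", "B": "closed", "P": "closed",
    "F": "teeth_on_lip", "V": "teeth_on_lip",
    "L": "tongue", "D": "tongue", "T": "tongue", "N": "tongue",
}
REST = "rest"  # fallback for sounds that are not in the table


def mouth_keyframes(timed_phonemes, fps=24):
    """Turn (phoneme, start_seconds) pairs into (frame, mouth_shape) keyframes.

    Consecutive phonemes that share a mouth shape are merged, so the
    animator gets one keyframe per visible change.
    """
    keyframes = []
    for phoneme, start in timed_phonemes:
        shape = PHONEME_TO_MOUTH.get(phoneme, REST)
        if keyframes and keyframes[-1][1] == shape:
            continue  # same shape as the previous keyframe, nothing to add
        keyframes.append((round(start * fps), shape))
    return keyframes


# "hello" as four phonemes with made-up timings
hello = [("HH", 0.0), ("AH", 0.05), ("L", 0.15), ("OW", 0.25)]
frames = mouth_keyframes(hello)
```

The hard part that AI handles in practice is the step before this one: recognizing the phonemes and their timing from raw audio. The table lookup itself is simple once that is done.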

AI has the potential to take over mundane tasks and let creators focus on the critical jobs in the pipeline. Spending less time on less creative work like rotoscoping can enable studios to produce higher-quality content in the same amount of time. And, at the pace at which ML research is progressing, the creator economy looks set to get a much-needed boost.