In the last few years, the availability of large amounts of clinical data, especially Electronic Health Record (EHR) data, has spurred the growth of deep learning techniques, which are being used to analyse patient data faster. Today, EHR software is being integrated with machine learning capabilities, and tools are being built into EHR systems to provide better insight into patient outcomes.

An electronic health record collects a patient’s information, such as their health history. EHRs have become a booming research area for AI and deep learning researchers because the data offers a host of untapped possibilities. In this article, we discuss a recent study that used EHRs to predict medical outcomes accurately.

(Photo courtesy: Urine Drug Test HQ)

The Need For Deep Learning

When an EHR is read, a number of factors can be considered from a statistical perspective. For instance, consider the variables in the health data that have statistical significance. Depending on the context, these variables may number in the hundreds.

One study used such variables to develop risk prediction models from EHRs and found that the larger the number of predictor variables, the higher the inaccuracy of the prediction. In addition, there was the problem of missing data. In spite of the statistical advantages in the study, the authors suggest that complex prediction models need a broader data reach to support decision making in a clinical setting.

Integrating EHR For Deep Learning

The large number of variables in EHRs can lead to inaccuracy and undermine predictions. This is why a group of researchers from Google, in collaboration with the University of California, San Francisco, University of Chicago Medicine and Stanford University, has presented a novel way of using deep learning (DL) with EHRs. The study shows that deep learning methods are more effective than the prediction models mentioned earlier.

The first part of the study consists of designing a data processing pipeline, where the inputs are EHR data and the outputs are Fast Healthcare Interoperability Resources (FHIR) files, a standard format for exchanging healthcare information electronically. This makes the data compatible across hospitals.
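To make the idea of such a pipeline concrete, here is a minimal sketch of one step: turning a flat lab-result row into a FHIR-style Observation. This is not the study's pipeline. The function name, the input column names and the example row are all hypothetical; only the output field names follow the published FHIR Observation resource.

```python
import json


def lab_row_to_fhir_observation(row):
    """Map one flat lab-result row to a FHIR-style Observation dictionary.

    The input keys (patient_id, loinc_code, ...) are hypothetical column
    names chosen for this illustration, not the study's schema.
    """
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {
            "coding": [{
                "system": "http://loinc.org",
                "code": row["loinc_code"],
                "display": row["test_name"],
            }]
        },
        "subject": {"reference": f"Patient/{row['patient_id']}"},
        "effectiveDateTime": row["measured_at"],
        "valueQuantity": {"value": float(row["value"]), "unit": row["unit"]},
    }


# Made-up example row; it is not taken from the study's data.
row = {
    "patient_id": "example-001",
    "loinc_code": "2345-7",
    "test_name": "Glucose [Mass/volume] in Serum or Plasma",
    "value": "95",
    "unit": "mg/dL",
    "measured_at": "2015-03-01T08:30:00Z",
}

observation = lab_row_to_fhir_observation(row)
print(json.dumps(observation, indent=2))
```

Because every hospital's export would pass through the same kind of mapping, downstream models can read one format regardless of where the record originated.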

In the second part, the researchers apply various DL methods to predictive problems drawn from labelled health data from two hospitals. The researchers describe the dataset used in the study as follows.

“We included EHR data from the University of California, San Francisco (UCSF) from 2012 to 2016, and the University of Chicago Medicine (UCM) from 2009 to 2016. We refer to each health system as Hospital A and Hospital B. All EHRs were de-identified, except that dates of service were maintained in the UCM dataset. Both datasets contained patient demographics, provider orders, diagnoses, procedures, medications, laboratory values, vital signs, and flowsheet data, which represent all other structured data elements (e.g., nursing flow sheets), from all inpatient and outpatient encounters. The UCM dataset additionally contained deidentified, free-text medical notes. Each dataset was kept in an encrypted, access-controlled, and audited sandbox.”

They use three DL algorithms: a long short-term memory (LSTM) recurrent neural network, an attention-based time-aware neural network (TANN) and boosted time-based decision stumps. The first two were trained with TensorFlow, while the last was implemented in C++. The statistical analysis and the traditional prediction models were done with Scikit-learn.

Before the DL algorithms are applied, the methodology represents and processes the data so that the models can predict medical outcomes. The predictions fall into four broad outcomes: an important clinical outcome (death), quality of care (readmission to hospital), resource utilisation (length of stay) and the patient’s diagnoses. Predictions were analysed at specific time intervals (this is known as prediction timing).
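Prediction timing can be illustrated with a small sketch. It assumes a patient's record is held as a list of time-stamped events and that a model may only see events recorded up to the moment the prediction is made. The event tokens, the helper name `events_before` and the choice of "at admission" and "24 hours after admission" as prediction times are assumptions for illustration, not details taken from the study.

```python
from datetime import datetime, timedelta


def events_before(events, prediction_time):
    """Return the events recorded at or before prediction_time, oldest first."""
    visible = [e for e in events if e["time"] <= prediction_time]
    return sorted(visible, key=lambda e: e["time"])


# Hypothetical patient timeline around a made-up admission time.
admission = datetime(2015, 3, 1, 8, 0)
events = [
    {"time": admission - timedelta(days=30), "token": "diagnosis:hypertension"},
    {"time": admission + timedelta(hours=2), "token": "lab:glucose_high"},
    {"time": admission + timedelta(hours=30), "token": "medication:insulin"},
]

# The later the prediction time, the more of the record the model can use.
at_admission = events_before(events, admission)
after_24h = events_before(events, admission + timedelta(hours=24))

print([e["token"] for e in at_admission])
print([e["token"] for e in after_24h])
```

Filtering by time in this way keeps information from the future out of the model's input, which matters when comparing predictions made at different points in a hospital stay.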

Results

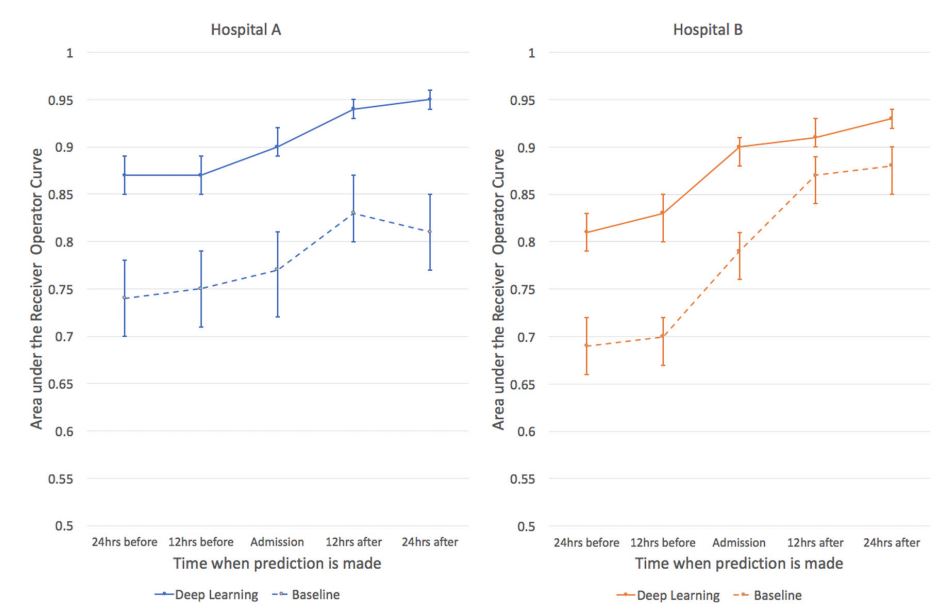

The researchers had data for 216,221 hospitalisations involving more than 100,000 unique patients. Across the four outcomes (mortality, readmission, long length of stay and discharge diagnosis), the DL predictions show an accuracy of around 80 percent and above for both Hospital A and Hospital B, which is significantly higher than that of traditional prediction models. The DL model’s accuracy also improves as the time interval is expanded. The accompanying figure shows a metric called the area under the receiver operating characteristic curve (AUROC) for the study.
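For readers unfamiliar with AUROC, the sketch below computes it from first principles: it is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as half. The labels and scores are made up for illustration and are not results from the study; in practice a library routine such as Scikit-learn's `roc_auc_score` would normally be used instead.

```python
def auroc(labels, scores):
    """AUROC as the fraction of positive/negative pairs ranked correctly.

    A pair counts 1 when the positive case scores higher than the negative
    case, and 0.5 when the two scores are tied.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative label")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up labels (1 = outcome occurred) and model scores.
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.3, 0.4, 0.6, 0.1]

result = auroc(labels, scores)
print(round(result, 3))
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, so AUROC measures how well a model separates the two groups rather than the share of correct yes/no predictions.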

Conclusion

With deep learning, the researchers showed that it is possible to attain higher accuracy in medical predictions than with traditional prediction models. However, the authors emphasise that the method should be tested in more clinical trials to establish the viability of EHR-based predictions. Nevertheless, applying DL to health data is worth pursuing in research.