Computers have gone through remarkable phases of development over the years. The first electronic computers, built in the 1940s, were bulky, heavy, and extremely expensive machines used mainly for research. From there, they were steadily miniaturised and, by the 1970s, had developed into instruments for personal use. Today they come in many sizes and accept varied modes of input, from touch to external devices.

Researchers, however, continue to develop new ways of making computers more interactive and operable with minimal physical contact, and AI/ML models have become a common tool for this purpose.

Using hand gestures as computer input

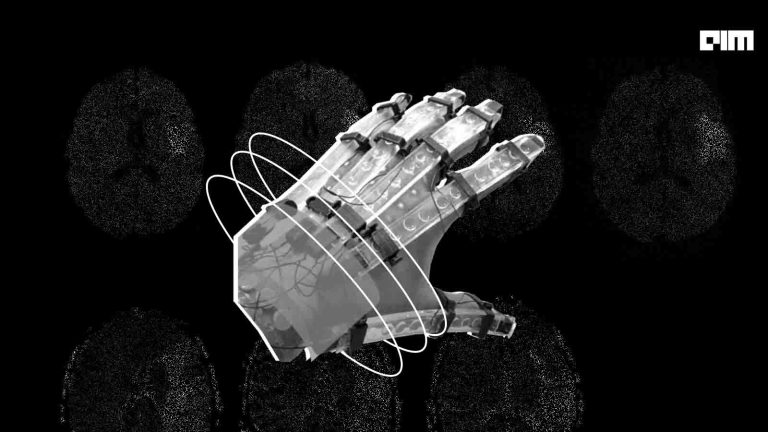

New research proposes a method by which hand gestures can make computer systems more interactive. The study, published recently in the Proceedings of the ACM on Human-Computer Interaction journal series, describes a technology that uses hand gestures to pass commands to the computer, with no keyboard, mouse, or touch input.

Researchers from the University of Waterloo have developed a prototype built on this concept, named “Typealike”. The system takes its input from a camera and uses computer vision techniques to interpret hand gestures as commands. For example, if a user holds up their right hand with the thumb pointing upward, the program reads it as a command to increase the system’s volume. To build it, the researchers first had to develop a mechanical attachment that reorients the webcam towards the user’s hands, and then create an algorithm to recognise the different hand gestures.
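The last step, turning a recognised gesture into a command, can be sketched as a simple lookup. This is only an illustration and not the researchers' code: it assumes a recogniser has already produced a hand label and a posture label, and the names `SystemState`, `COMMANDS`, and `dispatch`, as well as the thumb-down mapping, are hypothetical. Only the "right hand, thumb up, volume up" pairing comes from the example above.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class SystemState:
    """Hypothetical stand-in for the settings a gesture could change."""
    volume: int = 50  # percent, 0-100


def volume_up(state: SystemState, step: int = 10) -> None:
    # Clamp so repeated gestures never push the volume past 100%.
    state.volume = min(100, state.volume + step)


def volume_down(state: SystemState, step: int = 10) -> None:
    # Clamp so repeated gestures never push the volume below 0%.
    state.volume = max(0, state.volume - step)


# (hand, posture) -> action. Only the first entry is taken from the article;
# the second is an assumed mapping added for illustration.
COMMANDS: Dict[Tuple[str, str], Callable[[SystemState], None]] = {
    ("right", "thumb_up"): volume_up,
    ("right", "thumb_down"): volume_down,
}


def dispatch(hand: str, posture: str, state: SystemState) -> bool:
    """Run the command bound to a recognised gesture.

    Returns True if a command ran, False if the gesture is unmapped.
    """
    action = COMMANDS.get((hand, posture))
    if action is None:
        return False
    action(state)
    return True
```

Keeping the mapping in a table separates recognition from action, so new gestures could be bound to commands without touching the vision code.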

The program uses OpenCV, a widely used open-source computer vision library known for its real-time image processing capabilities. The researchers used the library’s object detection and image processing functions to build a system that works with good precision. To type on the virtual keyboard, the user wears a yellow cap on a fingertip. In mouse-control mode, the user only has to show a different number of fingers for each function. When validated with a 52-year-old person with paralysis, the module achieved an average accuracy of around 80%.
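The article does not say how the yellow fingertip cap is located, but one common approach is colour thresholding in HSV space followed by taking the centroid of the matching pixels. The sketch below illustrates that idea under stated assumptions: it uses Python's standard `colorsys` module instead of OpenCV so that it runs without extra dependencies, and the hue, saturation, and brightness thresholds are guesses that would need tuning for a real camera. In OpenCV the same steps would typically be done with `cv2.cvtColor` and `cv2.inRange`. The function names `is_yellow` and `marker_centroid` are hypothetical.

```python
import colorsys
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel 0-255


def is_yellow(pixel: Pixel,
              hue_range: Tuple[float, float] = (40 / 360, 70 / 360),
              min_sat: float = 0.5,
              min_val: float = 0.5) -> bool:
    """Return True if the pixel falls in an assumed 'yellow' HSV band."""
    r, g, b = (channel / 255.0 for channel in pixel)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return hue_range[0] <= h <= hue_range[1] and s >= min_sat and v >= min_val


def marker_centroid(frame: List[List[Pixel]],
                    min_pixels: int = 4) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of yellow pixels, or None if too few match.

    `frame` is a list of rows, each row a list of RGB pixels.
    `min_pixels` is an assumed noise floor to ignore stray matches.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, pixel in enumerate(row):
            if is_yellow(pixel):
                sum_x += x
                sum_y += y
                count += 1
    if count < min_pixels:
        return None
    return (sum_x / count, sum_y / count)
```

The centroid could then be compared against the on-screen key regions of a virtual keyboard to decide which key the fingertip is over.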

Image: Typealike: Near-Keyboard Hand Postures for Expanded Laptop Interaction

Advantages and applications

AI-based technology has found several meaningful applications that make life easier for people with disabilities. This study aimed to develop a computer application with controls other than a keyboard and mouse for a person with motor disabilities caused by a stroke, which is why it was tested by a stroke patient with paralysis on his left side. The development could be a next step in making the human-computer interface contactless for most purposes. In the nearer term, it may help people with paralysis stay in touch with the world through interactive computer systems.