What do we see on Chrome when there is no internet connection? The classic Chrome Dinosaur Game, T-Rex Runner (aka the No Internet Game)! Press the space bar and the dinosaur starts running. Press the up-arrow key to make it jump; the longer you hold the key, the higher it jumps. Almost all of us have played this game at least once. Has it ever crossed your mind that you could play this very game using just your palm? It crossed mine!

Here’s how I played the Chrome Dino game with just my palm! Is it possible, you may ask? It is very much possible with OpenCV and a Python module named PyAutoGUI.

What libraries did I use?

- NumPy (a Python library)

- OpenCV (another Python library, focused on real-time computer vision) and, of course,

- PyAutoGUI

    import numpy as np
    import cv2
    import math
    import pyautogui

How did I do it?

- Open up the camera and draw a rectangle

- Blur the image and convert it into HSV

- Erode, Dilate and Threshold

- Contour

- Run the code and play!

Do you want to implement the same? Follow these steps below!

Step-1: Open the camera to read input and draw a rectangle on the frame, using the built-in cv2.rectangle function of OpenCV. Then crop the region inside the rectangle from the frame; this is the hand image used in the following steps. Feel free to change the size of the rectangle!

    cap = cv2.VideoCapture(0)
    while True:
        ret, frame = cap.read()
        if not ret:
            # stop if the camera did not return a frame
            break
        # collect hand gestures
        cv2.rectangle(frame, (100, 100), (300, 300), (255, 0, 0), 0)
        hand_img = frame[100:300, 100:300]
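One detail is worth spelling out if you do change the rectangle: cv2.rectangle takes its corners as (x, y) points, while the slice on the frame is indexed rows first, that is [y1:y2, x1:x2]. With the square used here the two happen to look identical. The helper below is a hypothetical convenience (roi_slices is an illustrative name, not part of OpenCV) that derives the slice from the same two corners, so the drawn box and the cropped region stay in sync.

```python
def roi_slices(top_left, bottom_right):
    """Turn two (x, y) corners into (row_slice, col_slice) for frame indexing."""
    (x1, y1), (x2, y2) = top_left, bottom_right
    # Images are indexed [row, column], i.e. [y, x], so y comes first.
    return slice(y1, y2), slice(x1, x2)


# The square from the article: the numbers are the same either way round.
rows, cols = roi_slices((100, 100), (300, 300))

# A wider box, 300 px wide and 200 px tall: here the order matters.
wide_rows, wide_cols = roi_slices((100, 100), (400, 300))
```

Inside the loop this would be used as hand_img = frame[rows, cols], with the same two corners passed to cv2.rectangle.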

Step-2: Smooth the input image using a Gaussian blur. Smoothing reduces noise and removes small dark spots (speckles) within the image.
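To get a feel for what smoothing does, here is a minimal one-dimensional sketch in plain Python. It is not OpenCV's implementation: it uses the weights 1-2-1 (divided by 4), a common three-tap approximation of a Gaussian, and repeats the edge values at the borders, which is an assumption made for simplicity. A single noisy spike gets spread out and flattened.

```python
def smooth_121(signal):
    """Smooth a 1-D list with weights (1, 2, 1) / 4, repeating the edge values."""
    padded = [signal[0]] + list(signal) + [signal[-1]]
    return [
        (padded[i - 1] + 2 * padded[i] + padded[i + 1]) / 4
        for i in range(1, len(padded) - 1)
    ]


row = [10, 10, 10, 200, 10, 10, 10]   # one bright speckle in a flat row
smoothed = smooth_121(row)
print(smoothed)   # [10.0, 10.0, 57.5, 105.0, 57.5, 10.0, 10.0]
```

The spike drops from 200 to 105 and part of it leaks into its neighbours, while the flat parts of the row are left alone.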

HSV (Hue, Saturation and Value) separates the colour information (hue and saturation) from the image intensity (value). Hence, I preferred HSV over BGR and converted the initial BGR image into HSV.

        blur = cv2.GaussianBlur(hand_img, (3, 3), 0)
        hsv = cv2.cvtColor(blur, cv2.COLOR_BGR2HSV)
        # binary image where skin-coloured pixels are white and the rest is black
        skin = cv2.inRange(hsv, np.array([2, 0, 0]), np.array([20, 255, 255]))
        kernel = np.ones((5, 5), np.uint8)
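The benefit of HSV for skin detection can be checked with the standard-library colorsys module. The sketch below converts a BGR pixel to OpenCV's 8-bit HSV scale (hue 0 to 179, saturation and value 0 to 255) and applies the same bounds as the inRange call above. The sample colours are made-up values chosen for illustration, and the rounding may differ slightly from what cv2.cvtColor produces.

```python
import colorsys

LOWER = (2, 0, 0)       # same bounds as the cv2.inRange call in the article
UPPER = (20, 255, 255)


def bgr_to_hsv8(b, g, r):
    """Approximate OpenCV's 8-bit HSV: H in 0..179, S and V in 0..255."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    return round(h * 180) % 180, round(s * 255), round(v * 255)


def in_range(hsv, lower=LOWER, upper=UPPER):
    """True if every channel lies within [lower, upper], like cv2.inRange."""
    return all(lo <= c <= hi for c, lo, hi in zip(hsv, lower, upper))


bright = bgr_to_hsv8(120, 160, 220)   # a skin-like tone (illustrative)
dark = bgr_to_hsv8(60, 80, 110)       # the same tone at half the brightness
blue = bgr_to_hsv8(255, 0, 0)         # pure blue in BGR order

print(bright, dark, blue)
print(in_range(bright), in_range(dark), in_range(blue))
```

Halving the brightness leaves the hue unchanged, so both skin-like pixels pass the same hue test, while the blue pixel is rejected.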

Step-3: Erosion, dilation and thresholding.

Erosion erodes away the boundaries of the foreground object. Dilation is the exact opposite of erosion: it grows the foreground object (increases the white region in the image). Thresholding is a method of image segmentation used to create binary images: if a pixel value is greater than the threshold value, it is assigned one value (white); otherwise it is assigned another value (black). Take note that the larger the kernel is, the more blurred the image will be.

        dilation = cv2.dilate(skin, kernel, iterations=1)
        erosion = cv2.erode(dilation, kernel, iterations=1)
        filtered = cv2.GaussianBlur(erosion, (3, 3), 0)
        ret, thresh = cv2.threshold(filtered, 127, 255, cv2.THRESH_BINARY)
        # OpenCV 4.x returns (contours, hierarchy); OpenCV 3.x returns the image first as well
        contours, hierarchy = cv2.findContours(thresh, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
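The snippet above dilates first and then erodes, a combination usually called closing: small holes inside the white region get filled while the overall outline stays roughly the same. Here is a minimal plain-Python sketch of both operations on a tiny 0/1 grid, followed by a binary threshold. It assumes a square 3x3 neighbourhood and simply ignores pixels outside the image; it is meant to show the idea, not to mirror OpenCV's code.

```python
def _morph(img, pick, k=1):
    """Apply pick (max or min) over each pixel's (2k+1) x (2k+1) neighbourhood."""
    h, w = len(img), len(img[0])
    return [
        [
            pick(
                img[j][i]
                for j in range(max(0, y - k), min(h, y + k + 1))
                for i in range(max(0, x - k), min(w, x + k + 1))
            )
            for x in range(w)
        ]
        for y in range(h)
    ]


def dilate(img):
    return _morph(img, max)   # a pixel turns white if any neighbour is white


def erode(img):
    return _morph(img, min)   # a pixel stays white only if all neighbours are


def threshold(row, thresh=127, maxval=255):
    """Binary threshold: maxval where the value exceeds thresh, else 0."""
    return [maxval if v > thresh else 0 for v in row]


mask = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 1, 0, 0],
    [0, 0, 1, 0, 1, 0, 0],   # a one-pixel hole in the blob
    [0, 0, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
closed = erode(dilate(mask))
for line in closed:
    print(line)

print(threshold([0, 100, 127, 128, 255]))   # [0, 0, 0, 255, 255]
```

After closing, the blob keeps its 3x3 outline but the hole in the middle is gone, which is what makes the later contour of the hand cleaner.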

Step-4: Detecting contours.

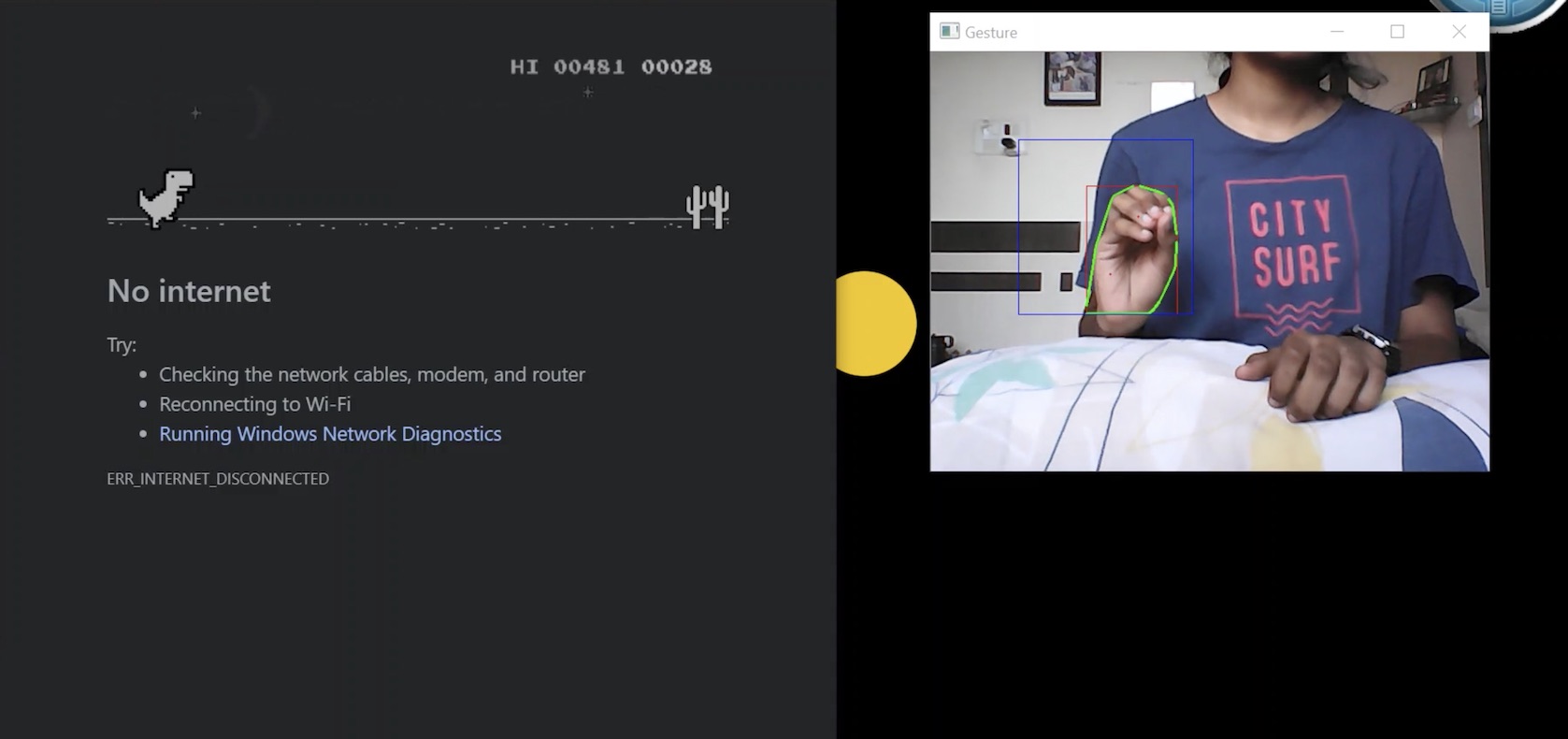

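Wherever a white (skin colour) region is detected, an outline (contour) is drawn around it. I take the largest contour as the palm, draw its bounding box, and compute its convex hull and convexity defects, which correspond to the gaps between the fingers. A gap is counted when the angle at its deepest point is at most 90 degrees. When four or more such gaps are found, that is, when your open palm is detected completely, the space bar is pressed so the T-Rex can jump. When your palm is not detected, the T-Rex will not jump (it just runs). This is done with the help of PyAutoGUI.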

PyAutoGUI is a cross-platform GUI automation Python module for human beings. Using PyAutoGUI, we can control the mouse and the keyboard just by writing a few lines of code.

        try:
            # the largest contour is assumed to be the hand
            contour = max(contours, key=cv2.contourArea)
            x, y, w, h = cv2.boundingRect(contour)
            cv2.rectangle(hand_img, (x, y), (x + w, y + h), (0, 0, 255), 0)
            hull = cv2.convexHull(contour)
            draw = np.zeros(hand_img.shape, np.uint8)
            cv2.drawContours(draw, [contour], -1, (0, 255, 0), 0)
            cv2.drawContours(draw, [hull], -1, (0, 0, 255), 0)
            # convexityDefects needs the hull as indices, not points
            hull = cv2.convexHull(contour, returnPoints=False)
            defects = cv2.convexityDefects(contour, hull)
            count_defects = 0
            for i in range(defects.shape[0]):
                s, e, f, d = defects[i, 0]
                # convert to plain ints so the drawing functions accept the points
                start = tuple(map(int, contour[s][0]))
                end = tuple(map(int, contour[e][0]))
                far = tuple(map(int, contour[f][0]))
                a = math.sqrt((end[0] - start[0]) ** 2 + (end[1] - start[1]) ** 2)
                b = math.sqrt((far[0] - start[0]) ** 2 + (far[1] - start[1]) ** 2)
                c = math.sqrt((end[0] - far[0]) ** 2 + (end[1] - far[1]) ** 2)
                # angle at the defect point (law of cosines), in degrees
                angle = math.degrees(math.acos((b ** 2 + c ** 2 - a ** 2) / (2 * b * c)))
                if angle <= 90:
                    count_defects += 1
                    cv2.circle(hand_img, far, 1, [0, 0, 255], -1)
                cv2.line(hand_img, start, end, [0, 255, 0], 2)
            # if the condition matches, press space
            if count_defects >= 4:
                pyautogui.press('space')
                cv2.putText(frame, "JUMP", (115, 80), cv2.FONT_HERSHEY_SIMPLEX, 2, 2, 2)
        except Exception:
            # no usable contour or defects in this frame; skip it
            pass
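The angle test in the loop is the law of cosines applied to the triangle formed by a defect's start, end and farthest point. The sketch below isolates that calculation in a hypothetical helper (defect_angle is an illustrative name, not an OpenCV function) and adds two guards for cases the original leaves to the except clause: a zero-length side, and a cosine pushed slightly outside [-1, 1] by rounding.

```python
import math


def defect_angle(start, end, far):
    """Angle in degrees at `far` in the triangle (start, end, far)."""
    a = math.dist(start, end)   # side opposite the angle
    b = math.dist(start, far)
    c = math.dist(end, far)
    if b == 0 or c == 0:
        return 180.0            # degenerate triangle: treat it as "not a gap"
    cos_angle = (b ** 2 + c ** 2 - a ** 2) / (2 * b * c)
    cos_angle = max(-1.0, min(1.0, cos_angle))   # guard against rounding
    return math.degrees(math.acos(cos_angle))


# Fingertips 2 units apart with a deep valley between them: a narrow angle.
deep = defect_angle((0, 0), (2, 0), (1, 5))
# The same fingertips with a very shallow dip: a wide angle, so it is not counted.
shallow = defect_angle((0, 0), (2, 0), (1, 0.2))
# A valley exactly as deep as half the gap gives a right angle.
right = defect_angle((0, 0), (2, 0), (1, 1))

print(round(deep, 1), round(shallow, 1), round(right, 1))
```

An open palm typically yields four such narrow gaps, one between each pair of neighbouring fingers, which is why the loop presses space once count_defects reaches 4.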

Step-5: Exit the capture window by pressing the key ‘q’.

        cv2.imshow("Gesture", frame)
        if cv2.waitKey(1) == ord('q'):
            break
    cap.release()
    cv2.destroyAllWindows()

Step-6: Finally, run the program (it might take a while to start, depending on the speed of your computer). Keep your hand inside the rectangle that is visible on the screen. You may change its dimensions to suit your setup.

And that is how I played a game with just my palm! For best results, use this in a brightly lit area. You can use the online version if you don’t want to switch off your internet connection every time you want to play the game.

https://apps.thecodepost.org/trex/trex.html

This mini project is well suited for someone who is just getting started with OpenCV and Image Processing.

For the complete code, head to my GitHub profile, the link to which is given below!

https://github.com/srinijadharani/Chrome_Dinosaur_Game

Or my LinkedIn profile: https://in.linkedin.com/in/srinija-dharani-553817194