Machine learning models for tracking eye movements have been gaining momentum in recent years. From identifying eyes in photos and analysing facial expressions to detecting diabetic retinopathy and supporting user experience research, the use cases are plenty.

Google has come up with a slew of machine learning models to power research in fields such as vision and healthcare. The tech giant has also highlighted the potential use of smartphone gaze technology as a digital biomarker of mental fatigue.

This article looks at two research papers, "Accelerating eye movement research via accurate and affordable smartphone eye-tracking" and "Digital biomarker of mental fatigue", both of which drew their participants from user study volunteers under the Google User Experience Research initiative.

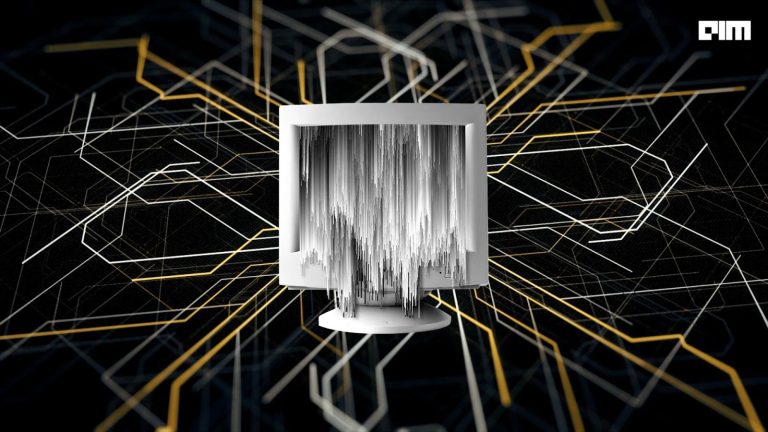

Gaze model architecture

Unpersonalised gaze model architecture (Source: Google AI)

As shown in the image, the eye regions, extracted from a front-facing camera image, were fed into a CNN, and its output was combined with eye corner landmarks through fully connected layers to infer the gaze x- and y-locations on the screen.

The researchers used a multilayer feed-forward CNN trained on the MIT GazeCapture dataset. A face detection algorithm selected the face region with its associated eye corner landmarks, and these were used to crop the images down to the eye region.

The cropped images were fed through two identical CNN towers with shared weights, where an average pooling layer followed each convolutional layer. Eye corner landmarks were combined with the output through fully connected layers. Except for the final fully connected output layer (FC6), rectified linear units (ReLU) were used for all layers (FC1, FC2, FC3…).
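The data flow described above can be sketched in a few lines of NumPy. This is only an illustration of the idea (two towers sharing one set of convolution weights, average pooling after each convolution, landmarks merged through fully connected layers, a linear output), not the researchers' TensorFlow model. The crop size, layer counts, layer widths, random weights and function names are all hypothetical.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def conv2d_valid(image, kernels):
    """Valid 2-D convolution. image: (H, W, C_in); kernels: (k, k, C_in, C_out)."""
    k = kernels.shape[0]
    windows = np.lib.stride_tricks.sliding_window_view(image, (k, k), axis=(0, 1))
    # windows has shape (H-k+1, W-k+1, C_in, k, k)
    return np.einsum("hwcij,ijco->hwo", windows, kernels)

def avg_pool_2x2(x):
    """Non-overlapping 2x2 average pooling; odd trailing rows/columns are dropped."""
    h, w, c = x.shape
    h2, w2 = h // 2, w // 2
    return x[: h2 * 2, : w2 * 2].reshape(h2, 2, w2, 2, c).mean(axis=(1, 3))

def eye_tower(eye_crop, conv_kernels):
    """One CNN tower: each convolution is followed by ReLU and average pooling."""
    x = eye_crop
    for kernels in conv_kernels:
        x = avg_pool_2x2(relu(conv2d_valid(x, kernels)))
    return x.ravel()

def predict_gaze(left_eye, right_eye, landmarks, params):
    # Both eyes pass through the SAME kernels: this is what "shared weights" means.
    left = eye_tower(left_eye, params["conv"])
    right = eye_tower(right_eye, params["conv"])
    combined = np.concatenate([left, right, landmarks])
    hidden = relu(params["fc_hidden"] @ combined)  # hidden FC layer uses ReLU
    return params["fc_out"] @ hidden               # final layer is linear: (x, y)

# Hypothetical sizes: 32x32 RGB eye crops, 4 eye corners -> 8 landmark values.
rng = np.random.default_rng(0)
params = {
    "conv": [rng.normal(scale=0.1, size=(3, 3, 3, 8)),
             rng.normal(scale=0.1, size=(3, 3, 8, 16))],
    "fc_hidden": rng.normal(scale=0.01, size=(16, 2 * 6 * 6 * 16 + 8)),
    "fc_out": rng.normal(scale=0.1, size=(2, 16)),
}
left_eye = rng.random((32, 32, 3))
right_eye = rng.random((32, 32, 3))
landmarks = rng.random(8)

gaze_xy = predict_gaze(left_eye, right_eye, landmarks, params)
print(gaze_xy.shape)  # (2,)
```

Because the weights here are random, the predicted location is meaningless; the point is only to show how the two eye crops and the landmarks come together into a single (x, y) estimate.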

ReLU, or rectified linear unit, is an activation function that returns zero for any negative input and returns the input unchanged for any positive value ‘x’.
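As a quick illustration, ReLU is a one-liner in NumPy. The function name `relu` is just a conventional choice for this sketch.

```python
import numpy as np

def relu(x):
    """Return 0 for negative inputs and the input itself otherwise."""
    return np.maximum(x, 0.0)

print(relu(np.array([-2.0, 0.0, 3.5])).tolist())  # [0.0, 0.0, 3.5]
```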

The researchers said the model’s accuracy was improved by fine-tuning and per-participant personalisation. For the latter, a lightweight regression model was fitted to the output of the model’s penultimate ReLU layer and participant-specific data.
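The personalisation step can be pictured as a small regression from the network's late-layer features to the known on-screen calibration targets. The sketch below uses a closed-form ridge regression in NumPy as a stand-in; the article does not say which regressor the researchers used, and the feature size, sample counts, regularisation strength and synthetic data here are all assumptions made for illustration.

```python
import numpy as np

def fit_ridge(features, targets, alpha=1.0):
    """Closed-form ridge regression with an unpenalised bias term."""
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    penalty = alpha * np.eye(X.shape[1])
    penalty[-1, -1] = 0.0  # do not shrink the bias
    return np.linalg.solve(X.T @ X + penalty, X.T @ targets)

def predict_gaze(features, weights):
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    return X @ weights

# Synthetic stand-in for one participant's calibration session:
# rows are frames, columns are (hypothetical) 16-dimensional ReLU-layer features.
rng = np.random.default_rng(0)
n_feat = 16
true_map = rng.normal(size=(n_feat, 2))
calib_features = rng.normal(size=(200, n_feat))
calib_targets = (calib_features @ true_map + 0.5
                 + rng.normal(scale=0.05, size=(200, 2)))

weights = fit_ridge(calib_features, calib_targets)

# Held-out frames from the same simulated participant.
test_features = rng.normal(size=(50, n_feat))
test_targets = test_features @ true_map + 0.5
predictions = predict_gaze(test_features, weights)
mean_error = np.linalg.norm(predictions - test_targets, axis=1).mean()
print(f"mean held-out error: {mean_error:.3f} (arbitrary units)")
```

The appeal of this design is that the large CNN stays frozen and only a handful of regression weights are fitted per person, which is why a short calibration session can be enough.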

Smartphone vs wearable eye trackers

The results showed that the unpersonalised model had a high error, while personalisation with about 30 seconds of calibration data reduced the error by more than four times.

Moreover, smartphone eye tracking showed accuracy comparable to that of state-of-the-art wearable eye trackers, both when the phone was placed on a device stand and when users held it freely in their hand. The researchers believe their smartphone-based gaze model is also significantly more cost-effective (~100x cheaper) and more scalable.

Using a smartphone eye tracker, the researchers said they could also replicate key findings from previous eye movement research in neuroscience and psychology, including oculomotor tasks and natural image understanding.

For example, the gaze scan paths in the above image show the effect of the target’s saliency (colour contrast) on visual search performance: fewer fixations are required to find a target with high saliency (left), while more fixations are needed to find a target with low saliency (right).

For more complex stimuli, such as natural images, the researchers found that the gaze distributions from smartphone eye trackers were similar to those obtained from bulky, expensive eye trackers used in highly controlled settings (such as a chin rest system).

As shown in the image above, the smartphone-based gaze heatmaps have a broader distribution (they appear more ‘blurred’) than those from hardware-based eye trackers.

The researchers also found that smartphone gaze could help detect difficulty with reading comprehension. The fraction of gaze time spent on the relevant excerpts was a good predictor of comprehension and was negatively correlated with comprehension difficulty.
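The "fraction of gaze time on the relevant excerpt" measure is straightforward to compute once gaze samples and the excerpt's on-screen bounding box are available. The sketch below assumes gaze is sampled at a uniform rate, so the share of samples inside the box equals the share of time; the function name, the pixel coordinates and the box are made up for the example.

```python
import numpy as np

def fraction_of_gaze_in_region(gaze_xy, region):
    """Share of gaze samples falling inside a rectangular screen region.

    gaze_xy: sequence of (x, y) samples taken at a uniform rate.
    region:  (x_min, y_min, x_max, y_max) bounding box of the relevant excerpt.
    """
    gaze_xy = np.asarray(gaze_xy, dtype=float)
    x_min, y_min, x_max, y_max = region
    inside = ((gaze_xy[:, 0] >= x_min) & (gaze_xy[:, 0] <= x_max)
              & (gaze_xy[:, 1] >= y_min) & (gaze_xy[:, 1] <= y_max))
    return float(inside.mean())

# Hypothetical screen coordinates in pixels.
gaze = [(120, 410), (130, 420), (300, 900), (125, 430)]
relevant_excerpt = (100, 400, 500, 450)
print(fraction_of_gaze_in_region(gaze, relevant_excerpt))  # 0.75
```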

Digital biomarker of mental fatigue

In the second study, the researchers found that smartphone-measured gaze was significantly impaired under fatigue, and that it could be used to track the onset and progression of mental fatigue.

The researchers used a few minutes of gaze data from participants performing two kinds of task: a language-independent object-tracking task and a language-dependent proofreading task.

As shown in the image below, in the object-tracking task the participants’ gaze initially followed the object’s circular trajectory, but under mental fatigue it showed high errors and deviations. The smartphone-based gaze model could therefore provide a scalable digital biomarker of fatigue.
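One simple way to quantify how well gaze follows a moving target is the mean distance between each gaze sample and the target's position at the same instant. The sketch below simulates a target on a circular path and two noisy gaze traces; the noise levels, units and the "alert" and "fatigued" labels are assumptions chosen purely to illustrate the metric, not data or results from the study.

```python
import numpy as np

def mean_tracking_error(gaze_xy, target_xy):
    """Mean Euclidean distance between gaze samples and target positions."""
    return float(np.linalg.norm(gaze_xy - target_xy, axis=1).mean())

rng = np.random.default_rng(0)

# Target moving once around a unit circle (arbitrary screen units).
t = np.linspace(0.0, 2.0 * np.pi, 200)
target = np.column_stack([np.cos(t), np.sin(t)])

# Simulated gaze: small jitter when alert, larger deviations when fatigued.
alert_gaze = target + rng.normal(scale=0.05, size=target.shape)
fatigued_gaze = target + rng.normal(scale=0.30, size=target.shape)

alert_error = mean_tracking_error(alert_gaze, target)
fatigued_error = mean_tracking_error(fatigued_gaze, target)
print(f"alert: {alert_error:.3f}, fatigued: {fatigued_error:.3f}")
```

A real fatigue measure would likely combine several gaze features, but a per-session tracking error like this captures the "high errors and deviations" the researchers describe.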

Besides wellness, smartphone gaze could help monitor and detect conditions such as autism spectrum disorder, dyslexia, concussion and diabetic retinopathy, enabling timely and early interventions.

The technology also has applications in accessibility. For instance, people with conditions such as amyotrophic lateral sclerosis (ALS), locked-in syndrome and stroke have impaired speech and motor ability; smartphone gaze models could make their daily tasks more accessible by letting them use gaze for interaction and communication.

Conclusion

The two models above could be among the most accurate and affordable ways to track eye movements with a smartphone. Both were built using the open-source machine learning frameworks TensorFlow and scikit-learn.