At ‘Deep Learning DevCon 2021,’ an influential two-day conference on deep learning hosted by Analytics India Magazine, Raghavendra Nagaraja Rao, data science academic lead at Times Professional Learning, discussed class imbalance in data and techniques to improve the performance of a classification model.

Rao has over 15 years of experience. Before joining Times Professional Learning, a division of Bennett, Coleman & Co. Ltd, he worked with Manipal Global Education Services as a technical evangelist, with expertise in UNIX, SQL, Big Data, data exploration, statistics and machine learning, text analytics and NLP.

What is a Classification Model?

A classification model is a technique that tries to draw conclusions or predict outcomes based on the input values given for training. The input can be, for example, historical data from a bank or any other financial-sector organisation. The model then predicts the class labels, or categories, for new data: for instance, whether a customer will be valuable or not, based on demographic data such as gender, income and age.

What is Target Class imbalance?

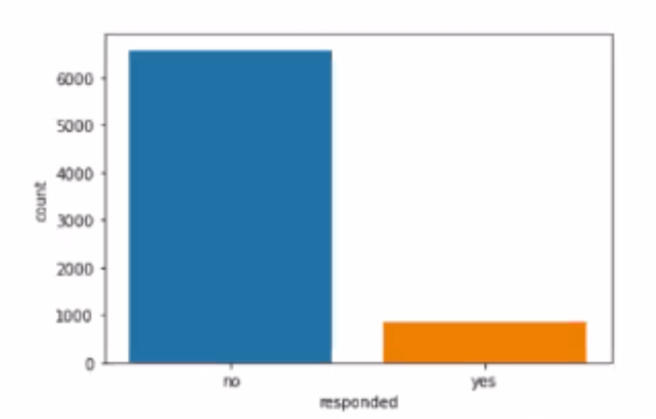

Target class imbalance means that the classes, or categories, in the target column are not evenly represented. Giving the example of a marketing campaign, Rao said: let’s say we have a classification task on hand to predict whether a customer will respond positively to a campaign. Here, the target column, ‘responded’, has two classes: yes and no. Those are the two categories.

In this case, let’s say the majority of people responded ‘no.’ In a marketing campaign, you end up reaching out to a lot of customers, but only a handful of them want to subscribe, whether you are offering a credit card, a new insurance policy or something similar. Those who are interested would request more details. “This is the type of data that is extremely imbalanced,” added Rao.

This brings us to the drawbacks of class imbalance. Rao said that predictive models built from such imbalanced data will be biased towards the majority class, because the model is trained with far more observations from the majority class than from the minority class.

He said that most classification problems on real-world data have imbalanced proportions in the classes of the target column, such as predicting fraudulent transactions, customer defaults and email spam. “Again, when it comes to predicting spam or not spam, not every email is going to be spam; a majority of emails are not spam. The point is, many of these classification problems suffer this imbalance,” said Rao, highlighting the drawbacks of class imbalance.

Citing Covid19India.org, Rao said many widely used datasets show this imbalance. “If you look at this data, the total samples tested in India from the beginning of the Covid-19 pandemic is about 55.8 crore, and the total tested positive is about 3.35 crore. That is roughly 6% of the entire data. It is minuscule. Again, this suffers an extreme imbalance in the data,” said Rao.

What is the solution here?

Rao said that to make the right prediction, we have to supply many parameters, including a lot of genome data and a lot of information that helps conclude whether a person tests positive or not, and build an underlying ML model on that. “Today, we have an extreme imbalance in our data, be it retail, financial or healthcare,” he added.

Rao suggested the following techniques to overcome class imbalance:

- Get more data, if possible

- Use tree-based algorithms or Ensemble of trees

- Use different resampling techniques

  - Oversampling

  - Under-sampling

  - SMOTE

  - Near-Miss
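As a rough illustration of the two simplest resampling options in the list, random over-sampling and random under-sampling, here is a minimal sketch in plain Python. The function names `random_oversample` and `random_undersample` and the toy campaign data are made up for this example and are not from the talk.

```python
import random
from collections import Counter

def random_oversample(rows, labels, seed=0):
    """Duplicate randomly chosen rows of smaller classes until all classes match the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_rows, out_labels = list(rows), list(labels)
    for cls, n in counts.items():
        pool = [r for r, y in zip(rows, labels) if y == cls]
        for _ in range(target - n):
            out_rows.append(rng.choice(pool))  # an exact copy, no new information
            out_labels.append(cls)
    return out_rows, out_labels

def random_undersample(rows, labels, seed=0):
    """Keep a random subset of each class so all classes match the smallest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = min(counts.values())
    out_rows, out_labels = [], []
    for cls in counts:
        pool = [r for r, y in zip(rows, labels) if y == cls]
        for r in rng.sample(pool, target):
            out_rows.append(r)
            out_labels.append(cls)
    return out_rows, out_labels

# Toy campaign data (invented): 95 "no" responses and 5 "yes" responses.
rows = [[i] for i in range(100)]
labels = ["no"] * 95 + ["yes"] * 5

over_rows, over_labels = random_oversample(rows, labels)
under_rows, under_labels = random_undersample(rows, labels)
print(Counter(over_labels))   # 95 rows of each class
print(Counter(under_labels))  # 5 rows of each class
```

Over-sampling keeps every original row but repeats minority rows, while under-sampling throws away majority rows, which is why neither is a free lunch.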

SMOTE: Synthetic Minority Oversampling Technique

Throwing light on the drawbacks of over-sampling, Rao said it duplicates records from the minority class and therefore does not add any new information to the model. Instead, he suggested SMOTE, an improvement on random over-sampling in which new samples of the minority class are synthesized.

Further, he said that SMOTE works by adding samples to the minority class very close to the existing samples. A new sample is obtained by drawing a line between two nearby minority-class samples in the feature space and picking a point along that line.
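The interpolation step described above can be sketched in a few lines of plain Python. This is a simplified illustration, not a production implementation: the function name `smote_sketch`, the neighbour count `k` and the toy points are assumptions made for this example, and it expects at least two minority samples.

```python
import math
import random

def smote_sketch(minority, n_new, k=3, seed=0):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # The k nearest minority-class neighbours of the chosen point (itself excluded).
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # how far along the line segment to go, in [0, 1)
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic

# Toy minority-class points in a 2-D feature space (invented for illustration).
minority = [[1.0, 1.0], [1.5, 2.0], [2.0, 1.0], [2.5, 2.5]]
synthetic = smote_sketch(minority, n_new=4)
for point in synthetic:
    print(point)
```

Because each synthetic point lies on a segment between two real minority samples, it is new data rather than a duplicate, yet it stays inside the region the minority class already occupies.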

Near-miss algorithm

Rao said this is an improved technique for under-sampling. Here, the algorithm calculates the distances between the data points in the majority class and the data points in the minority class. Data points in the majority class that have smaller distances to the minority class are marked for elimination.
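The distance calculation can be sketched as follows. Descriptions of which majority points end up being dropped vary between sources. This sketch assumes the NearMiss-1 variant as documented in the imbalanced-learn library, which retains the majority points whose average distance to their nearest minority neighbours is smallest and discards the rest. The function name `near_miss_1` and the toy points are hypothetical.

```python
import math

def near_miss_1(majority, minority, n_keep, k=2):
    """Keep the n_keep majority points closest, on average, to their k nearest minority points."""
    def mean_dist_to_nearest_minority(point):
        nearest = sorted(math.dist(point, m) for m in minority)[:k]
        return sum(nearest) / len(nearest)

    ranked = sorted(majority, key=mean_dist_to_nearest_minority)
    return ranked[:n_keep]

# Toy 2-D data (invented): six majority points, two minority points.
majority = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0], [6.0, 6.0], [9.0, 9.0], [10.0, 10.0]]
minority = [[5.5, 5.5], [6.5, 6.5]]

# Under-sample the majority class down to the size of the minority class.
kept = near_miss_1(majority, minority, n_keep=len(minority))
print(kept)  # the two majority points nearest the minority cluster: [6.0, 6.0] and [5.0, 5.0]
```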

So, how do you select the right technique? Rao believes that it is important to understand the problem and choose the technique that gives the best outcome. In other words, no single solution fits all.

Accuracy is not everything

Talking about performance metrics for classification problems, Rao said that we are all very obsessed with accuracy, but accuracy alone does not tell the whole story. “When we have an imbalance, accuracy is not the right measure. So, there is a very high chance that the model correctly predicts the majority class, so we are going to train more ‘yes’,” said Rao. He said there are many other performance metrics, such as the F-score and the area under the receiver operating characteristic curve (AUC-ROC).
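To see why accuracy can mislead on imbalanced data, consider a hypothetical model that always predicts the majority class. The sketch below uses invented numbers (95 ‘no’ and 5 ‘yes’ responses) and hand-written helper functions rather than any particular library.

```python
def confusion_counts(y_true, y_pred, positive="yes"):
    """Count true/false positives and negatives, treating `positive` as the class of interest."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def accuracy(tp, fp, fn, tn):
    return (tp + tn) / (tp + fp + fn + tn)

def f1_score(tp, fp, fn):
    # F1 = 2*TP / (2*TP + FP + FN); treated as 0.0 when the denominator is zero.
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

# Invented campaign data: 95 "no" and 5 "yes"; the model always answers "no".
y_true = ["no"] * 95 + ["yes"] * 5
y_pred = ["no"] * 100

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print(accuracy(tp, fp, fn, tn))  # 0.95
print(f1_score(tp, fp, fn))      # 0.0
```

The model looks 95% accurate even though it never finds a single interested customer, which is exactly what the F-score of zero exposes.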