Chip designer Nvidia has been an integral part of the AI revolution. It was a fairly early adopter of artificial intelligence, building processors powerful enough for businesses to support the new technologies. Now it is looking to the future: Nvidia researchers are developing ways to use AI to train industrial robots in a manner that closely mimics the way humans learn.

In a recently published paper, Nvidia researchers described an approach that lets robots meet humans halfway: a human-to-robot handover in which the robot classifies the human’s grasp and plans a trajectory to take the object from the human’s hand. Nvidia claims that transferring objects between humans and robots is a critical capability for collaborative robots, and that fluent handovers can improve the overall productivity of warehouse workers who work alongside them.

Object handover in human-robot collaboration has recently become a popular research topic because of its many applications, ranging from collaborative manufacturing in warehouses to robot assistance in the home. The Nvidia researchers and their co-authors noted that most prior research focused on robot-to-human handovers and assumed that, for the reverse, the human could simply place the object in the robot’s gripper. This assumption causes problems in scenarios where humans need to pay attention to the task at hand rather than to the robot, such as performing surgery. In such situations, according to the researchers, accuracy drops and the handover can be occluded.

Consequently, in such cases robots need more reactive handovers: ones that adapt to the way the human presents the object and meet them halfway to receive it. “Grasping” is a critical act in current and future robotic systems, from warehouse logistics to service robotics, and handing over objects to humans is the next step toward improving warehouse productivity.

In this paper, the Nvidia researchers proposed a collaborative approach to human-to-robot handovers in which the robot meets the human halfway. The robot uses a trained model to classify how the human is grasping the object and, based on that, quickly adjusts its trajectory and takes the object from the human’s hand according to their intent.

Building The Dataset

Giving objects to and taking objects from humans is one of the basic requirements for collaborative robots across industries and applications. A growing community of robotics researchers has therefore been working to solve this problem and enable easy human-robot handovers.

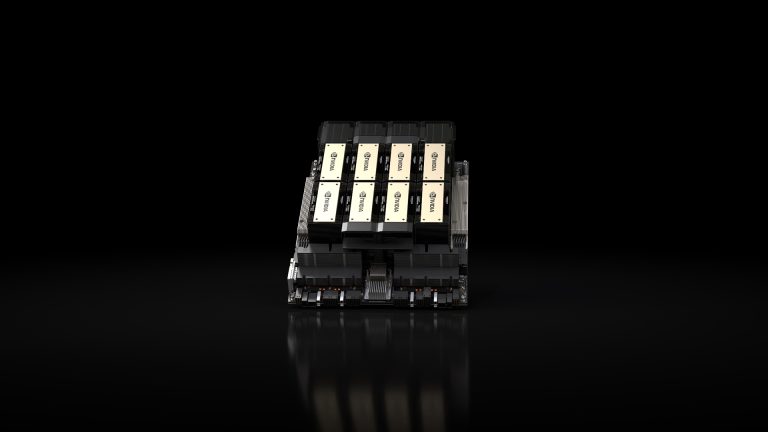

To achieve this handover process, the Nvidia team created a dataset of 151,551 images of human grasps covering typical ways in which humans hold objects, with various hand shapes and poses such as open-palm, pinching or lifting. The dataset covered eight subjects with different hand poses and was recorded with a Microsoft Azure Kinect RGBD camera. It was used to train an AI model to classify hand grasps into one of these categories. During recording, each subject was allowed to move their body and hands to different positions in order to diversify the camera viewpoints.

Nvidia’s task model was based on Robust Logical-Dynamical Systems, which represent a task as a list of reactively executed operators with specific properties. The system also generates motion plans that avoid contact between the gripper and the hand. It has to adapt to the different possible grasps so that it can reactively choose the correct way to approach the human user and take the object from them. Until it gets a stable estimate of how the human wants to present the block, the robot stays in a “home” position and waits.
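The reactive behaviour described above can be illustrated with a small Python sketch. This is not Nvidia's implementation: the operator names, the sliding-window majority vote and the `min_votes` threshold are all assumptions chosen for illustration. It only shows the general idea of a prioritised operator list in which the robot waits at home until the grasp estimate is stable.

```python
from collections import Counter, deque
from dataclasses import dataclass
from typing import Callable, Deque, Dict, Optional


@dataclass
class Operator:
    """A named action with a precondition on the current world state."""
    name: str
    precondition: Callable[[Dict], bool]


def stable_grasp(history: Deque[str], min_votes: int = 8) -> Optional[str]:
    """Return the majority grasp label if it has at least min_votes in the
    window, otherwise None (hypothetical stability rule)."""
    if not history:
        return None
    label, votes = Counter(history).most_common(1)[0]
    return label if votes >= min_votes else None


# Operators are checked in priority order on every step; the first one whose
# precondition holds is executed. The names are illustrative only.
OPERATORS = [
    Operator("take_object", lambda s: s["grasp"] is not None and s["at_pregrasp"]),
    Operator("approach", lambda s: s["grasp"] is not None),
    Operator("wait_at_home", lambda s: True),  # fallback: always applicable
]


def select_operator(state: Dict) -> str:
    """Pick the highest-priority operator whose precondition is satisfied."""
    for op in OPERATORS:
        if op.precondition(state):
            return op.name
    raise RuntimeError("no applicable operator")  # unreachable with the fallback


def step(history: Deque[str], label: str, at_pregrasp: bool = False) -> str:
    """Feed one per-frame classifier label and return the operator to run."""
    history.append(label)
    state = {"grasp": stable_grasp(history), "at_pregrasp": at_pregrasp}
    return select_operator(state)


if __name__ == "__main__":
    window: Deque[str] = deque(maxlen=10)
    for frame_label in ["open-palm", "pinching"] + ["open-palm"] * 10:
        print(frame_label, "->", step(window, frame_label))
```

Because the operator is re-selected on every frame, a change in the classified grasp (or a drop in agreement within the window) sends the robot back to a lower-priority operator without any explicit re-planning logic, which is the property the article attributes to the reactive task model.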

Training The Model

One of the critical challenges in creating a reactive human-to-robot handover is perceiving the object and the human reliably and continuously. One strategy is to estimate the pose of the human hand and the 6D pose of the object using off-the-shelf methods from the computer vision community. However, even state-of-the-art techniques for estimating the human hand and the object treat each of them independently.

Nvidia presented an execution method that takes the object from the human hand by detecting the grasp and the hand position, and that re-plans the robot’s movements as necessary when the handover is interrupted. In a series of experiments, the researchers tested a range of hand positions and grasps, systematically evaluating both the classification model and the task model.

The method used by the Nvidia researchers showed a consistent improvement in grasp success rate. It also reduced total execution time and trial duration compared with existing approaches. The report stated a grasp success rate of 100% versus 80% for the next-best technique, and a planning success rate of 64.3% versus 29.6%. Moreover, it took 17.34 seconds to plan and execute actions, versus 20.93 seconds for the second-fastest system.
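To put the reported figures side by side, the short Python sketch below computes the absolute gap and the relative change for each metric. Only the six numbers come from the article; the dictionary keys and helper names are made up for illustration.

```python
# Reported figures from the article: (proposed method, next-best baseline).
REPORTED = {
    "grasp_success_pct": (100.0, 80.0),
    "planning_success_pct": (64.3, 29.6),
    "plan_and_execute_s": (17.34, 20.93),
}


def absolute_gap(ours: float, baseline: float) -> float:
    """Difference in the metric's own units (percentage points or seconds)."""
    return ours - baseline


def relative_change_pct(ours: float, baseline: float) -> float:
    """Change relative to the baseline, expressed as a percentage."""
    return (ours - baseline) / baseline * 100.0


if __name__ == "__main__":
    for name, (ours, baseline) in REPORTED.items():
        print(
            f"{name}: gap={absolute_gap(ours, baseline):+.2f}, "
            f"relative={relative_change_pct(ours, baseline):+.1f}%"
        )
```

Read this way, the grasp success rate is 20 percentage points higher, the planning success rate is 34.7 percentage points higher (more than double the baseline), and planning plus execution is about 3.6 seconds, or roughly 17%, shorter.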

The researchers believe that their definition of human grasps covers 77% of the user grasps. “While our system can deal with most of the unseen human grasps, they tend to lead to higher uncertainty, and sometimes would cause the robot to back off and re-plan.”

“This has been an issue with this research, and suggests directions for future research, which ideally should be able to handle a broader range of grasps that a human might want to use.”

Wrapping Up

Nvidia’s paper describes a system that enables smooth human-to-robot handovers of objects by classifying different types of human grasp. However, the main flaw in the approach was that the model was trained on a single kind of human grasp; to make the model robust, Nvidia needs to learn the correct grip poses for different grasp types from data instead of using manually specified rules. In the future, the research team plans to build a system that is more flexible and supports more types of human grasp. The same approach is expected to benefit many human-robot collaborations in warehouse management.