
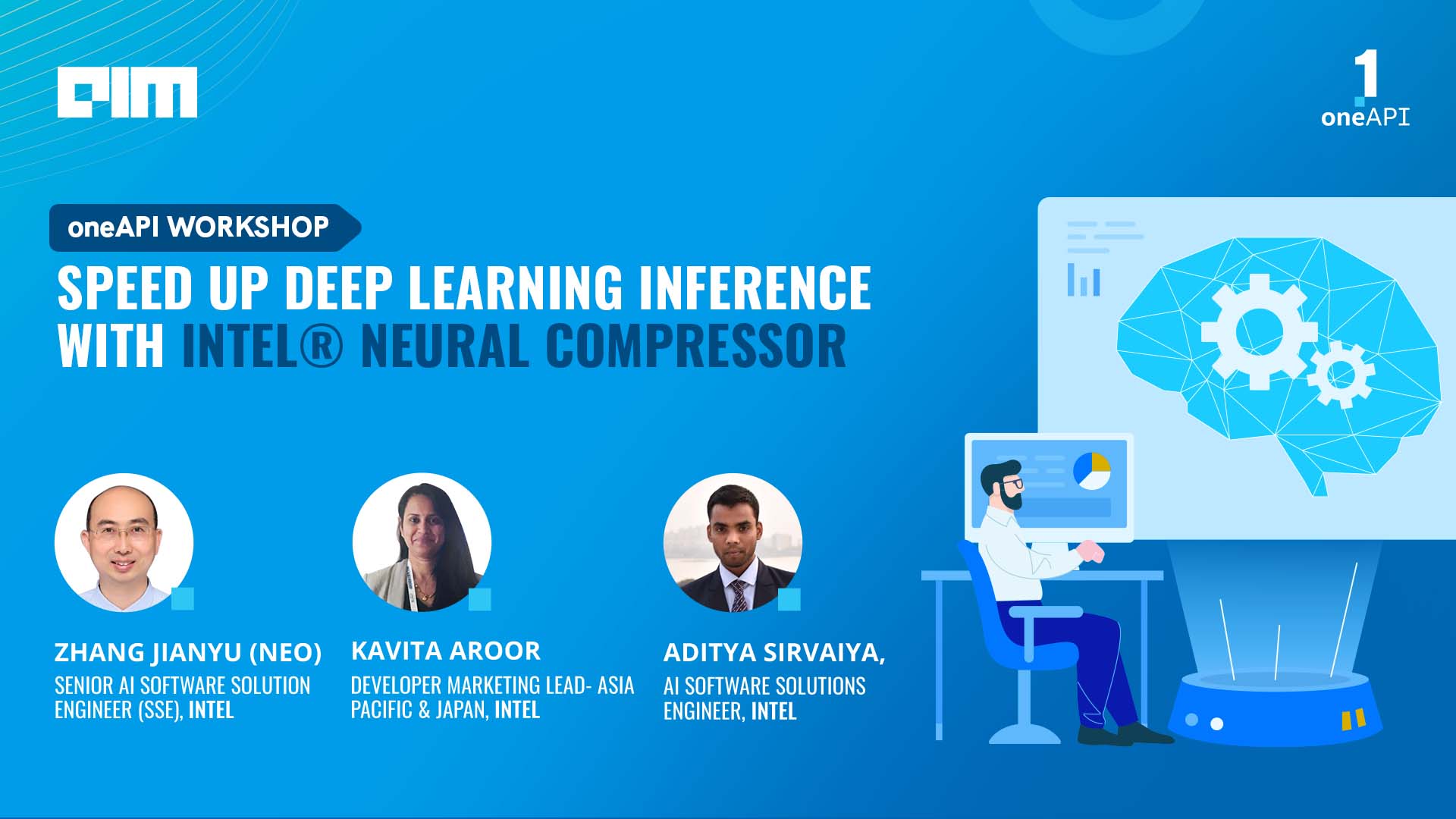

Intel® held its oneAPI masterclass on Intel® Neural Compressor on May 13, 2022, at 5:00 pm IST. The masterclass drew around 200 participants.

The workshop covered various initiatives and projects launched by Intel®, and took a deep dive into Intel® Optimization for TensorFlow, which improves TensorFlow performance on Intel platforms. Intel® Optimization for TensorFlow is the binary distribution of TensorFlow built with Intel® oneAPI Deep Neural Network Library (oneDNN) primitives.

In addition, the masterclass gave an overview of the oneAPI AI Analytics Toolkit, which contains a core set of tools and libraries for developing high-performance applications on Intel® CPUs, GPUs, and FPGAs. It also introduced oneDNN, Intel®’s open-source, cross-platform performance library for deep learning applications.

The session also highlighted how Intel® Neural Compressor can speed up deep learning inference, and included a hands-on demo, use cases, benchmarks, and more. The workshop was led by Kavita Aroor, a developer marketing lead – Asia Pacific & Japan at Intel; Aditya Sirvaiya, an AI software solutions engineer at Intel; and Zhang Jianyu (Neo), a senior AI software solution engineer (SSE) of SATG AIA in the PRC.

Intel® covered the following topics:

- oneAPI AI Analytics Toolkit overview

- Introduction to Intel® Optimization for TensorFlow

- Intel optimisations for TensorFlow

- Intel Neural Compressor

- Hands-on demo to showcase usage and performance boost on DevCloud

Key highlights

Highlighting various initiatives launched by Intel® to support the developer ecosystem, Intel’s Aroor touched upon the DevMesh projects forum. She said it hosts about 2000-2500 projects across various technologies, including AI, game development, IoT and HPC, curated by Intel software developers and the student ambassador community.

Further, she said that developers can publish their own blog articles to amplify their work and projects. She also said that the oneAPI technology partner programme has a separate forum where organisations can partner with Intel to unleash the power of oneAPI. “Today, we have about 19-20 such organisations across ten different countries,” added Aroor. Next, she spoke about the oneAPI certified instructor programme, through which developers can become certified instructors, advocate for Intel®, and work closely with the company.

Click here to download Intel® oneAPI Toolkits to get started.

Click here to create an Intel® DevCloud account.

After this, Aditya gave the audience a quick overview of the oneAPI AI Analytics Toolkit, introduced Intel® Optimization for TensorFlow, and then ran a hands-on session showcasing its usage and performance boost on DevCloud.

This was followed by an introduction to Intel® Neural Compressor and a hands-on session with a quantisation workload. The demo walked through an end-to-end pipeline that trains a TensorFlow model on a small customer dataset and then speeds up the model through quantisation with Intel® Neural Compressor. The steps were: training a model with Keras and Intel® Optimization for TensorFlow, producing an INT8 model with Intel® Neural Compressor, and comparing the performance of the FP32 and INT8 models using the same script.
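For readers unfamiliar with the idea behind FP32-to-INT8 quantisation, the following is a minimal sketch in plain Python. It is not the Intel® Neural Compressor API, nor the calibration method used in the demo; it assumes a simple symmetric, per-tensor scheme, and the weights and inputs are hypothetical values chosen only for illustration.

```python
# Minimal sketch of symmetric per-tensor INT8 quantisation (illustrative only;
# this is NOT the Intel Neural Compressor API or its calibration algorithm).

def quantize_int8(values):
    """Map floating-point values to integer codes in [-127, 127] with one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floating-point values from the integer codes."""
    return [c * scale for c in codes]


def dot(a, b):
    """Dot product, standing in for the arithmetic of a single neuron."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical weights and inputs standing in for one layer of a trained model.
weights = [0.523, -1.27, 0.031, 0.884, -0.417]
inputs = [1.0, 0.5, -2.0, 0.25, 1.5]

codes, scale = quantize_int8(weights)
approx_weights = dequantize(codes, scale)

# Compare the output of the original weights with the quantised approximation.
fp32_out = dot(weights, inputs)
int8_out = dot(approx_weights, inputs)

print("INT8 codes:", codes)
print("scale:", scale)
print("FP32 output:", fp32_out)
print("INT8-simulated output:", int8_out)
print("absolute error:", abs(fp32_out - int8_out))
```

Each weight is stored as a small integer plus one shared scale, so the rounding error per weight is at most half the scale. A real INT8 model also quantises activations and runs integer kernels, which is where the inference speed-up comes from; this sketch only shows the accuracy side of that trade-off.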

(Source: Intel)


During the event, Analytics India Magazine also ran a lucky draw, in which the winners each received an Amazon voucher worth INR 2,000 at the end of the workshop.

The winners were selected based on their engagement on Discord (<https://discord.gg/ycwqTP6>) throughout the workshop:

- Sahil Chachra

- Raviteja Peri

- Prateek Modi

- Ramachandrareddy Gadi

- Sreyashi Bhattacharjee

- Aishwarya Gholse

- Anirban Malla

- Dhruv Kothiya

- Chirumamilla Pitchaia

- Shivaraj Karki

- Priyanka Patny

- Akanksha Srivastava

- Maaz Mohammed

- Mani Shekhar Gupta