With the advent of Industry 4.0, the automotive industry has undergone a significant transformation driven by artificial intelligence and related technologies. The primary goals behind adopting these technologies are to reduce costs, optimize products, accelerate development cycles, and improve the efficiency of project teams.

These technologies include virtual reality, photorealistic rendering, real-time engineering simulation, graphics virtualization, and artificial intelligence. Together, they contribute to an advanced product design workflow that enables manufacturers to create innovative, highly differentiated products and remain competitive.

For AI in mobility, machine learning will not be optional: it will be the technological foundation and a source of significant competitive advantage for decades to come. For example, human-level image recognition typically requires systems with tens of millions of parameters that are trained on a supercomputer for two to four weeks; done by hand, the same work would take more than 1,000 years.

Here are a few use cases in the automotive industry where AI has found applications beyond the much-touted self-driving car:

Accelerated Workflows

Bringing an idea from charts to chassis takes a lot of manpower. From the first rough sketches to the simulation of the final design, engineers weigh many factors to squeeze out whatever efficiency they can.

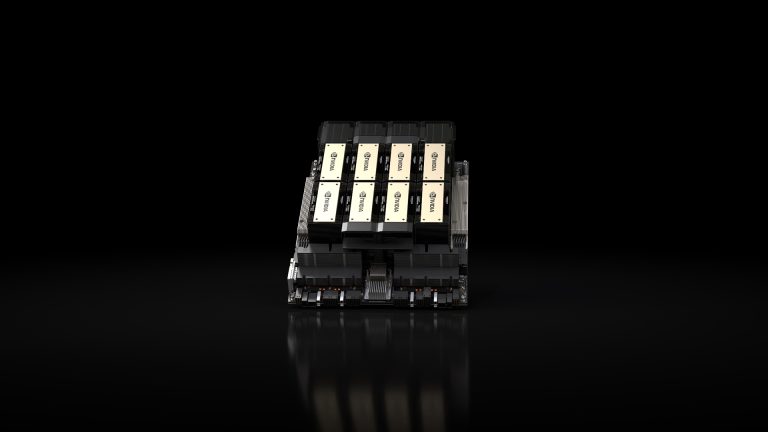

Nvidia’s Quadro RTX with NVIDIA Turing™ architecture fuses AI, real-time ray tracing, and programmable shading to fundamentally transform the traditional product design process. Quadro RTX is the foundation of an advanced ecosystem of hardware and software accelerating new design workflows and improving how teams collaborate.

AI-powered denoising running on Quadro speeds up the production of noise-free photorealistic renders, and the new Quadro RTX GPUs are built for AI inference to power the next generation of visual computing for manufacturing applications.

AI via Cloud

A new age of car repair is here, and Tesla's over-the-air fixes are already popular. Its recent improvements to Sentry Mode highlight Tesla's ongoing efforts to optimize and improve features, which are conveniently delivered through free over-the-air updates.

For instance, when government regulators raised fire-safety concerns about Tesla's chargers, the company's engineers quickly updated the software, sparing owners a trip to the service center.

Volkswagen and Microsoft have also announced a partnership designed to transform the automaker into a digital, service-driven business. By tapping the power of Azure IoT, Power BI, and Skype, Volkswagen plans to offer customer-experience, telematics, and productivity solutions for the automotive market.

Blockchain could also play a significant part in enabling and securing connected transportation in the future, including payments and data sharing. Its potential was reflected in last week’s expansion of the MOBI blockchain consortium.

Image Classification

The applications of image classification in autonomous vehicles are widely known. Now, however, image classification algorithms are also being used to ease insurance hassles.

Ant Financial, a Chinese fintech company that is part of the Alibaba Group, created software called Ding Sun Bao that uses machine vision to analyse vehicle damage and handle claims.

Users photograph their damaged vehicle with a smartphone camera and upload the picture. Ding Sun Bao then compares the uploaded image of the damage to a database of images labeled with various severities of damage; these images might also be labeled with likely repair costs. Finally, the application produces a report for the user covering the damaged parts, a repair plan, and the accident's impact on the user's premiums in the years that follow.
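The article does not say how Ding Sun Bao performs this comparison internally. As a minimal sketch of the idea, assuming each photo has already been turned into a numeric feature vector by some image model (not shown here), the "compare against a labeled database" step could be a k-nearest-neighbour lookup: find the most similar reference images, take a majority vote on their severity labels, and average their repair costs. `LabeledImage`, `assess_damage`, and the toy vectors are hypothetical illustrations, not Ant Financial's method, and the premium-impact part of the report is not modeled.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class LabeledImage:
    # Hypothetical database record: a feature vector for a reference photo,
    # plus the labels the article mentions (damage severity, likely repair cost).
    features: list[float]
    severity: str
    repair_cost: float


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # guard against division by zero for empty/zero vectors
    return dot / (norm_a * norm_b)


def assess_damage(query: list[float], database: list[LabeledImage], k: int = 3) -> dict:
    # Rank reference images by similarity to the uploaded photo's features.
    ranked = sorted(
        database,
        key=lambda rec: cosine_similarity(query, rec.features),
        reverse=True,
    )
    neighbours = ranked[:k]
    # Majority vote over the k closest images decides the severity label.
    severity = Counter(rec.severity for rec in neighbours).most_common(1)[0][0]
    # Average the neighbours' labeled repair costs as a rough estimate.
    estimated_cost = sum(rec.repair_cost for rec in neighbours) / len(neighbours)
    return {"severity": severity, "estimated_cost": estimated_cost}


# Toy 2-D "features" purely for illustration.
db = [
    LabeledImage([1.0, 0.0], "minor", 300.0),
    LabeledImage([0.9, 0.1], "minor", 400.0),
    LabeledImage([0.0, 1.0], "severe", 5000.0),
    LabeledImage([0.1, 0.9], "severe", 4000.0),
]
print(assess_damage([0.95, 0.05], db, k=2))  # {'severity': 'minor', 'estimated_cost': 350.0}
```

In practice the quality of such a system depends almost entirely on the feature extractor and the size of the labeled database; the lookup itself is the simple part.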

NLP

Chatbots have come into their own of late, thanks to ever-improving natural language processing. With tech giants such as Google open-sourcing new NLP projects, the field has extended its reach into domains previously thought to be immune to AI.

Geico offers a virtual assistant and chatbot named Kate. Kate's decision-making, and her ability to process what is spoken or written to her, rely on natural language processing. She can potentially detect the use of certain words or phrases, such as “my policy” and “mechanics I can go to”, and generate an appropriate response in the form of a pre-written message or a pre-formulated verbal response.
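Geico does not publish how Kate works, so the following is only a minimal sketch of the behaviour described above: spotting trigger phrases in a message and mapping them to a pre-written reply. The intent names, phrase lists, and responses are hypothetical, and literal substring matching is a deliberate simplification; a production assistant would normally use a trained NLP model for intent detection.

```python
# Hypothetical phrase-to-intent table. Dicts keep insertion order (Python 3.7+),
# so intents are checked top to bottom and the first match wins.
INTENT_PHRASES = {
    "policy_info": ["my policy", "coverage"],
    "find_mechanic": ["mechanics i can go to", "repair shop"],
}

# Hypothetical pre-written responses, one per intent, plus a fallback.
CANNED_RESPONSES = {
    "policy_info": "Here is a summary of your current policy.",
    "find_mechanic": "Here are approved repair shops near you.",
    "fallback": "Sorry, I didn't catch that. Could you rephrase?",
}


def detect_intent(message: str) -> str:
    # Case-insensitive substring match against each intent's trigger phrases.
    text = message.lower()
    for intent, phrases in INTENT_PHRASES.items():
        if any(phrase in text for phrase in phrases):
            return intent
    return "fallback"


def respond(message: str) -> str:
    return CANNED_RESPONSES[detect_intent(message)]


print(respond("Which mechanics I can go to near me?"))
```

The appeal of this rule-based design is that every reply is pre-approved text; the drawback is brittleness, since any phrasing not in the table falls through to the fallback.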

Progressive, meanwhile, offers a chatbot called Flo, which the company claims uses natural language processing and insurance data accessed through cloud-based APIs to help customers alter payment schedules, file insurance claims, and request auto insurance quotes. Flo is built using Microsoft Azure Bot Service and LUIS.

Many auto insurance companies say that filing claims is a major pain point for their customers, and they report that negative claims experiences cause customers to switch providers. Because the claims process generates the largest number of negative experiences, it would seem to behoove leadership to make that process as smooth as possible. AI may prove a more scalable way to reduce this friction by offering instant customer support through chatbots.

Future Direction

Once autonomous vehicles and robotaxis hit the road, cars will be built with an emphasis on the passengers rather than the driver.

Besides safety, AI assistants enabled by Nvidia’s DRIVE IX can provide convenience features for every passenger.

Israeli automotive computer vision startup eyeSight uses AI and deep learning to offer a wide range of in-car solutions. Using advanced Time-of-Flight (TOF) cameras and IR sensors, eyeSight's AI software detects driver behavior in four key areas.

The same deep learning algorithms that detect distracted drivers can also read body language, for example to tell whether a rider will need a cupholder after taking a sip of a drink, or to alert riders if they have forgotten a personal item in the car. Passengers' gaze, gestures, and speech will become the primary cabin controls as riders lean back in their seats and move away from buttons and knobs.

This closer integration between AI and in-vehicle sensing is set to increase consumer engagement, optimise business models and unlock a myriad of new use cases.