Research in artificial intelligence has taken a different slant: imbuing machines with consciousness is an emerging research area, spurred by several developments in the cognitive sciences and neuroscience. Recently, this line of research has also seen new breakthroughs in the form of DeepMind’s AlphaGo Zero, touted as a step towards building intelligent machines. However, human-level AI is not a new research direction. Some of computer scientist Alan Turing’s early work was aimed at advancing human-level AI, but those efforts were later stalled by enterprises.

DeepMind researchers assert that traditional approaches to AI have historically been dominated by logic-based methods and theoretical mathematical models. Going forward, neuroscience can complement these by identifying classes of biological computation that may be critical to cognitive function.

Some of the recent advances in building conscious AI can be attributed to the fact that all the necessary technology is already in place: fast processors, GPU power, large memories, new statistical approaches and vast training datasets. So what is holding these systems back from becoming self-improving and gaining consciousness? Can a system bootstrap its way to human-level intelligence, helped in part by evolutionary algorithms?

R&D-focused companies such as IBM, Google and Baidu are building models that demonstrate cognitive abilities, but these models do not represent a unified model of intelligence. And even though a lot of cutting-edge research is happening in unsupervised learning, we are still far away from the watershed moment, noted Dr Adam Coates of Baidu. Still, deep learning techniques will play a significant role in building the cognitive architectures of the future.

The journey towards general AI began with cognitive architectures in the 1950s

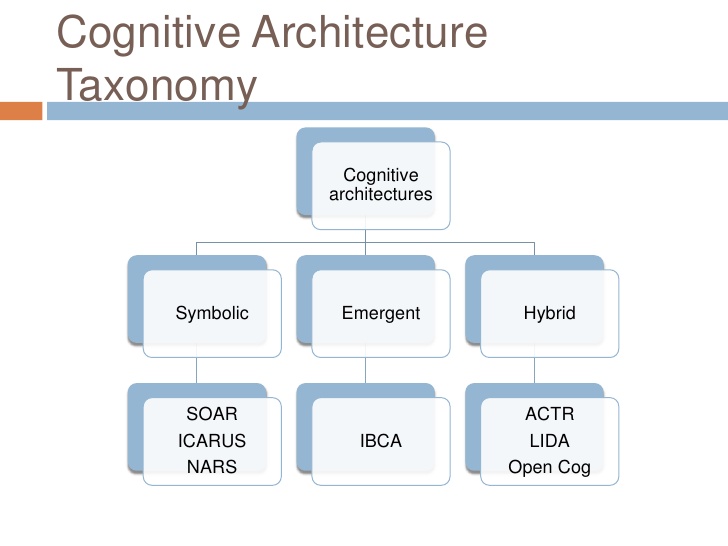

Research in cognitive architectures began in the 1950s with the goal of creating programs that could reason about problems across different domains, develop insights, adapt to new situations and reflect on themselves, note Iuliia Kotseruba and John K. Tsotsos in their research paper. The ultimate goal of research in cognitive architectures is to model AI systems on the human mind, thereby producing intelligent behaviour. The paper also cites computer scientists Stuart Russell and Peter Norvig, who outlined how AI can be realized in different ways through existing cognitive architectures.

Interest in symbolic architectures began in the mid-1980s and continued until the 1990s; after 2000, however, most newly developed architectures were hybrid. There were also biologically inspired architectures. Google’s DeepMind and Facebook AI Research have made significant headway with these approaches, especially in areas such as natural language understanding and general learning, and have developed models with specific cognitive abilities, though none of these amounts to a unified model of intelligence.

According to a member of the Facebook research team, AI is too complex a field to be built all at once; instead, the first step should be to define the general characteristics of intelligence. Two such characteristics are communication and learning, and some headway has already been made in these areas, with enterprises laying down a roadmap.

Let’s look at some of the recent applications developed in this area:

Araya Brain Imaging’s machine phenomenology project: According to Dr Ryota Kanai, founder and CEO of Araya Brain Imaging, his company is working on a project dubbed machine phenomenology, which involves studying the structures of consciousness through systematic reflection on conscious experience. In simpler terms, instead of teaching AI systems to express themselves in a human language, the project trains AI systems to develop their own language. This is a step beyond what machines normally communicate, namely the outcomes of tasks. “We do not specify precisely how the machine encodes these instructions; the neural network itself develops a strategy through a training process that rewards success in conveying the instructions to another machine. We hope to extend our approach to establish human-AI communications, so that we can eventually demand explanations from AIs,” noted Kanai, a well-known researcher in structural and functional neuroimaging analysis.

DeepMind’s AlphaGo Zero: Drawing heavily on neuroscience and psychology, AlphaGo Zero demonstrated the ability to learn through self-play, showing human-like ingenuity in learning and the ability to synthesize knowledge into novel, original ideas. It relies on reinforcement learning, in which a system learns which actions to take from the rewards its own actions earn rather than from hand-written rules, and it started from random play without using any human data. DeepMind’s press release described this technique as more powerful than previous versions of AlphaGo because it is no longer constrained by the limits of human knowledge.
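To make “learning from random self-play without human data” concrete, here is a minimal sketch on a toy game. It assumes a single-pile version of Nim (each turn a player takes 1 to 3 stones; whoever takes the last stone wins) as a stand-in for Go, and it uses plain tabular Q-learning with a negamax-style update, not AlphaGo Zero’s actual neural network and Monte Carlo tree search. The names `train_self_play` and `best_move` are hypothetical, chosen for illustration.

```python
import random

MAX_TAKE = 3   # a move removes 1 to 3 stones (toy-game assumption)
MAX_PILE = 20  # largest starting pile used in training


def legal_moves(pile):
    """Moves available when `pile` stones remain."""
    return [a for a in range(1, MAX_TAKE + 1) if a <= pile]


def train_self_play(episodes=20000, alpha=0.5, epsilon=0.3, seed=0):
    """Learn Q[pile][take] purely from self-play, starting from all-zero values.

    Values are always from the viewpoint of the player about to move, so a
    single table serves both sides of the game (negamax-style update).
    """
    rng = random.Random(seed)
    q = {p: {a: 0.0 for a in legal_moves(p)} for p in range(1, MAX_PILE + 1)}
    for _ in range(episodes):
        pile = rng.randint(1, MAX_PILE)
        while pile > 0:
            moves = legal_moves(pile)
            # Epsilon-greedy: usually play the current best move, sometimes explore.
            if rng.random() < epsilon:
                take = rng.choice(moves)
            else:
                take = max(moves, key=lambda a: q[pile][a])
            nxt = pile - take
            if nxt == 0:
                target = 1.0  # the mover took the last stone and wins
            else:
                # The opponent moves next; their best outcome is our loss.
                target = -max(q[nxt].values())
            q[pile][take] += alpha * (target - q[pile][take])
            pile = nxt  # the other player is now "the player to move"
    return q


def best_move(q, pile):
    """Greedy move under the learned values."""
    return max(q[pile], key=q[pile].get)


if __name__ == "__main__":
    q = train_self_play()
    for pile in range(1, 13):
        print(pile, best_move(q, pile))
```

Without ever seeing an expert game, the learned greedy policy should rediscover the known winning strategy of leaving the opponent a multiple of four stones. The design choice of scoring every position from the mover’s point of view is what lets one table improve by playing against itself.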

Self-driving cars are examples of AI systems building their own representations: Self-driving cars are pre-programmed with representations of things such as road curves, lane markings and lane changes, but over time, once a car collects enough data, it can form its own representations, much as humans do. A car’s cognitive architecture is built up from the inputs of various sensors, such as laser-based LIDAR.

Sophia Robot is pegged as just a few updates away from conscious AI: Despite widespread criticism from AI researchers such as Yann LeCun, who dismissed it as sophisticated animatronics, Sophia’s makers have described it as just a few steps away from achieving human-level consciousness. While Hanson Robotics has admitted that the work is not as ground-breaking as that emerging from DeepMind’s labs, the bot does exhibit certain capabilities, such as speech recognition.

AIM View

So far, AI funding has been channeled mostly towards applications in optimization and pattern recognition rather than towards machine consciousness. Consciousness is an interdisciplinary field that requires researchers from psychology, philosophy, neuroscience and computer science to come together and build more scientific models that can be simulated computationally. Meanwhile, current AI applications do mimic human behaviour to a certain extent. There is also a group of researchers who believe the famed Turing Test no longer holds water, since consciousness is subjective and cannot be measured; in other words, machines can be intelligent in a number of other ways, and intelligence cannot be quantified.