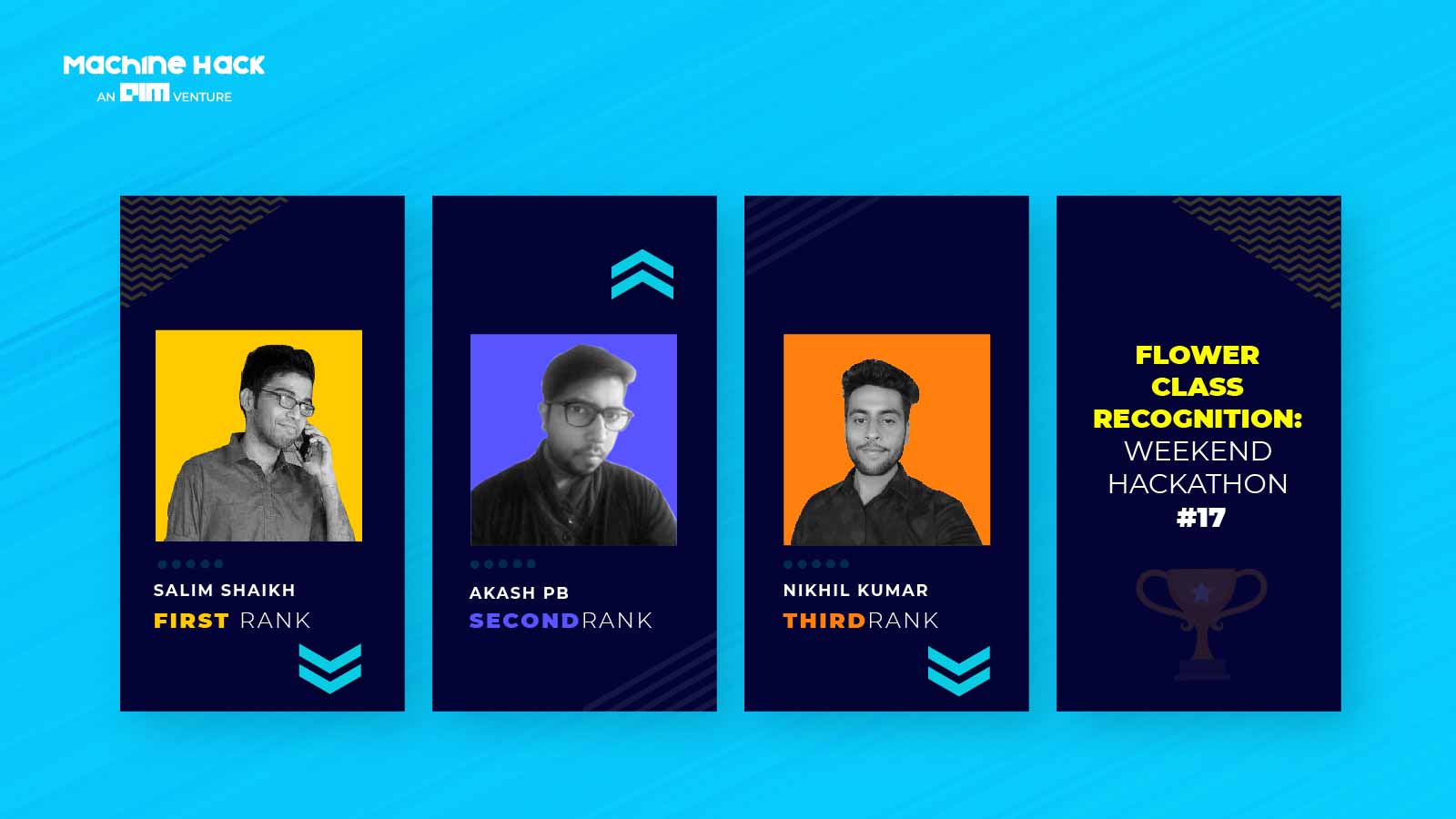

MachineHack successfully concluded the seventeenth installment of its weekend hackathon series this Monday. The Flower Class Recognition: Weekend Hackathon #17 gave contestants an opportunity to revisit their classification skills by sorting flowers into 8 different classes. The hackathon was warmly welcomed by data science enthusiasts, with over 250 registrations and active participation from close to 125 practitioners.

Out of the more than 250 registrants, three topped our leaderboard. In this article, we introduce the winners and describe the approaches they took to solve the problem.

#1| Salim Shaikh

Salim always had an inclination towards playing with numbers and drawing insights from them, so he did his master's in Statistics. He was not initially aware of Data Science as a field, but after his placement at Vodafone Idea he found his future roadmap. Having worked in the Telecom and Banking domains, two of the largest sources of data, he gets multiple opportunities to get his hands dirty and try out various algorithms, which also led him to participate in hackathons organized by Kaggle, Analytics Vidhya, Zindi, HackerEarth and, of course, MachineHack. This led to collaborative learning: reading other participants' solutions, teaming up with them, and exchanging ideas.

He would like to thank MachineHack for giving him a chance to keep himself updated with the latest trends.

“I had quite a decent experience on Machine Hack, every weekend we get some new problems covering all the aspects like tabular, text, vision, etc., to brainstorm and learn. Also, the recent update of the interface was a great job done by the organizers. I look forward to more such hackathons in future” – Salim shared his opinion about MachineHack.

Approach To Solving The Problem

Salim explains his approach briefly as follows:

The dataset offered a lot of scope for grouping and aggregating on the 2 numerical features. I also applied PCA to the 2 numerical features to get a linear combination of them. Since I had 4 categorical variables, Multiple Correspondence Analysis (MCA) was the ideal algorithm to extract more features. After creating all these features, the 2 biggest challenges were:

1. Classes were highly imbalanced.

2. The testing dataset was 2X the size of the training dataset.

So, to make the solution generalizable, Stratified K-fold was the ideal candidate, and I applied 50 folds to limit overfitting. With this, I got a log loss of 0.69597 as my best score and 0.69895 as my final score.
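A minimal sketch of stratified K-fold validation with out-of-fold log loss is shown here. It is not Salim's actual code: the synthetic data, the `LogisticRegression` stand-in model, and the helper name `oof_predictions` are assumptions made for illustration, and 5 folds are used instead of 50 to keep the example quick.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import log_loss
from sklearn.model_selection import StratifiedKFold

# Synthetic, imbalanced 3-class data standing in for the competition set.
X, y = make_classification(
    n_samples=600, n_features=8, n_informative=5, n_classes=3,
    weights=[0.7, 0.2, 0.1], random_state=0,
)


def oof_predictions(X, y, n_splits=5, seed=0):
    """Return out-of-fold class probabilities from stratified K-fold CV."""
    n_classes = len(np.unique(y))
    oof = np.zeros((len(y), n_classes))
    # Stratification keeps the class proportions roughly equal in every fold,
    # which matters when some classes are rare.
    skf = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    for train_idx, valid_idx in skf.split(X, y):
        model = LogisticRegression(max_iter=1000)  # stand-in model
        model.fit(X[train_idx], y[train_idx])
        oof[valid_idx] = model.predict_proba(X[valid_idx])
    return oof


oof = oof_predictions(X, y)
cv_log_loss = log_loss(y, oof)
print(f"out-of-fold log loss: {cv_log_loss:.5f}")
```

Every row is scored by a model that never saw it during training, so the out-of-fold log loss is a reasonable proxy for the leaderboard metric. Raising `n_splits` trains each model on a larger share of the data at the cost of more fits.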

#2| Akash PB

Akash did his bachelor's in Statistics and his master's in Operations Research at IITB. From his college days, he was interested in programming algorithms from scratch, which drew him to this field. One fine day, he found a precious gem on Coursera, Andrew Ng's course, and after completing it he felt a little more confident about applying what he had learned in the real world. The company he worked for gave him the opportunity to be part of many projects involving machine learning and data science, which added a real-life flavor to his theoretical knowledge. Since March, he has been participating in MachineHack hackathons, and he is learning even more from them.

“MachineHack is a nice place for me to experiment with things. Moreover, the interface is very nice now as compared to the previous one. The hackathons are nice and hopefully, we will see more challenging stuff in the future.” – Akash shared his opinion.

Approach To Solving The Problem

He explains his approach briefly as follows:

In this hackathon, I tried two crucial things on categorical data – dimensionality reduction using multiple correspondence analysis and Exclusive Feature Bundling.
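As a rough illustration of the multiple correspondence analysis step, the sketch below computes MCA row coordinates as a correspondence analysis of the one-hot indicator matrix, using only NumPy and pandas. The function name `mca_row_coordinates` and the toy columns are hypothetical, and the winners may well have used a dedicated library instead.

```python
import numpy as np
import pandas as pd


def mca_row_coordinates(df, n_components=2):
    """Project rows onto the leading MCA axes.

    MCA is computed here as correspondence analysis of the
    one-hot (indicator) matrix of the categorical columns.
    """
    Z = pd.get_dummies(df).to_numpy(dtype=float)  # indicator matrix
    P = Z / Z.sum()                               # correspondence matrix
    r = P.sum(axis=1)                             # row masses
    c = P.sum(axis=0)                             # column masses
    # Standardized residuals from the independence model r c^T.
    expected = np.outer(r, c)
    S = (P - expected) / np.sqrt(expected)
    U, sigma, _ = np.linalg.svd(S, full_matrices=False)
    # Principal row coordinates: D_r^{-1/2} U Sigma.
    F = (U * sigma) / np.sqrt(r)[:, None]
    return F[:, :n_components]


# Toy categorical data with hypothetical column names.
toy = pd.DataFrame({
    "region": ["a", "b", "a", "c", "b", "a"],
    "species": ["x", "x", "y", "y", "x", "y"],
})
coords = mca_row_coordinates(toy)
print(coords.shape)
```

The resulting columns can be appended to the feature matrix as dense numeric summaries of the categorical variables.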

Adding various aggregations boosted the score as well. I captured the direction of maximum variance in the data using PCA and added the component as a feature in both the train and test set.
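The aggregation and PCA features described above might look something like the following sketch. The column names (`area_code`, `height`, `width`) and the choice of statistics are assumptions for illustration only. Fitting the group statistics and the PCA on the training rows alone is also one option among several, not something the source specifies.

```python
import pandas as pd
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Toy frames with hypothetical column names.
train = pd.DataFrame({
    "area_code": ["a", "a", "b", "b", "c"],
    "height": [1.0, 3.0, 2.0, 6.0, 5.0],
    "width": [10.0, 14.0, 8.0, 12.0, 20.0],
})
test = pd.DataFrame({
    "area_code": ["a", "c", "b"],
    "height": [2.0, 4.0, 3.0],
    "width": [11.0, 18.0, 9.0],
})
num_cols = ["height", "width"]

# Group-wise aggregates of the numerical features per category value.
stats = train.groupby("area_code")[num_cols].agg(["mean", "max"])
stats.columns = [f"{col}_{fn}_by_area" for col, fn in stats.columns]
train_fe = train.join(stats, on="area_code")
test_fe = test.join(stats, on="area_code")

# First principal component of the standardized numerical features,
# fitted on train and added as a feature to both train and test.
scaler = StandardScaler().fit(train[num_cols])
pca = PCA(n_components=1).fit(scaler.transform(train[num_cols]))
train_fe["pca_1"] = pca.transform(scaler.transform(train[num_cols]))[:, 0]
test_fe["pca_1"] = pca.transform(scaler.transform(test[num_cols]))[:, 0]

print(train_fe.head())
```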

Finally, I blended my two best CatBoost models, trained with stratified k-folds, to get the best results.
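Blending two models' predicted probabilities can be sketched as a weighted average, with the weight chosen by out-of-fold log loss. The arrays below are made-up probabilities, not outputs from the actual CatBoost models, and the `blend` helper is a hypothetical name.

```python
import numpy as np
from sklearn.metrics import log_loss


def blend(prob_a, prob_b, weight_a=0.5):
    """Weighted average of two (n_samples, n_classes) probability arrays."""
    mixed = weight_a * prob_a + (1.0 - weight_a) * prob_b
    # Renormalize so each row is still a valid probability distribution.
    return mixed / mixed.sum(axis=1, keepdims=True)


# Made-up out-of-fold probabilities from two models (illustrative only).
y_true = np.array([0, 1, 2, 1])
oof_a = np.array([[0.7, 0.2, 0.1], [0.3, 0.5, 0.2],
                  [0.2, 0.3, 0.5], [0.4, 0.4, 0.2]])
oof_b = np.array([[0.5, 0.3, 0.2], [0.1, 0.8, 0.1],
                  [0.3, 0.3, 0.4], [0.2, 0.6, 0.2]])

# Scan a small grid of weights and keep the one with the lowest log loss.
weights = np.linspace(0.0, 1.0, 11)
scores = [log_loss(y_true, blend(oof_a, oof_b, w), labels=[0, 1, 2])
          for w in weights]
best_weight = weights[int(np.argmin(scores))]
print(f"best weight for model A: {best_weight:.1f}")
```

Choosing the weight on the same out-of-fold predictions used for scoring is slightly optimistic, so in practice the chosen weight is usually sanity-checked against the leaderboard.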

#3| Nikhil Kumar Mishra

Nikhil is currently a final-year Computer Science Engineering student at PESIT South Campus, Bangalore. He started his data science journey in his second year, after being inspired by a YouTube video on self-driving cars. The technology intrigued him and drew him into the world of Machine Learning. He started with Andrew Ng's famous course and applied his knowledge in the hackathons he participated in.

“Whenever I learned a new technique, I was always eager to apply it myself, and competitions gave me a chance to do just that”– he said when asked about his Data Science Journey.

Kaggle’s Microsoft Malware Prediction hackathon, in which he finished 25th, was a turning point in his Data Science journey. It gave him the confidence to take things further and challenge himself with more hackathons on platforms like Kaggle, MachineHack, and Analytics Vidhya.

“MachineHack is an amazing platform, especially for beginners. The problems here are simpler, giving a chance for new learners to get their hands dirty at machine learning problems. MachineHack team is very helpful in understanding and interacting with the participants, to get their doubts resolved. Also, the community is ever-growing and challenged with new and brilliant participants coming up every competition. I intend to continue using MachineHack to practice and refresh my knowledge on Data Science” – Nikhil shared his opinion.

Approach To Solving The Problem

He explains his approach briefly as follows:

- Focus on frequency features, aggregation features, and the number of unique values of one feature for each value of another feature, to deal with high dimensionality.

- Three 15-fold stratified models: LightGBM, XGBoost, and CatBoost.

- The final model is a weighted blend of the three models based on LB and CV scores.
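The frequency and unique-count features from the first bullet can be sketched in pandas as follows. The column names `area_code` and `locality_code` are hypothetical placeholders, not the competition's actual schema.

```python
import pandas as pd

# Toy categorical data with hypothetical column names.
df = pd.DataFrame({
    "area_code": ["a", "a", "b", "b", "b", "c"],
    "locality_code": ["p", "q", "p", "p", "r", "q"],
})

# Frequency encoding: how often each category value occurs.
df["area_code_freq"] = df["area_code"].map(df["area_code"].value_counts())

# Number of distinct locality codes observed within each area code.
df["locality_nunique_by_area"] = (
    df.groupby("area_code")["locality_code"].transform("nunique")
)

print(df)
```

Both features replace a raw category label with a number that tree-based models such as LightGBM, XGBoost, and CatBoost can split on directly.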