Microsoft has integrated Graphcore’s AI chip into Azure, becoming the first major public cloud provider to offer Graphcore’s AI-optimised processors. With this addition, any organisation that uses the Microsoft Azure cloud platform can, in principle, become AI-ready. Initially, however, Microsoft will offer Graphcore’s intelligence processing unit (IPU) only to organisations that are pushing the boundaries of machine learning (ML).

Graphcore and Microsoft have worked together for a little over two years to develop Azure systems that run ML workloads on IPUs. This week, Microsoft announced the integration of the IPU with Azure to speed up artificial intelligence applications, generating excitement among developers and businesses around the world.

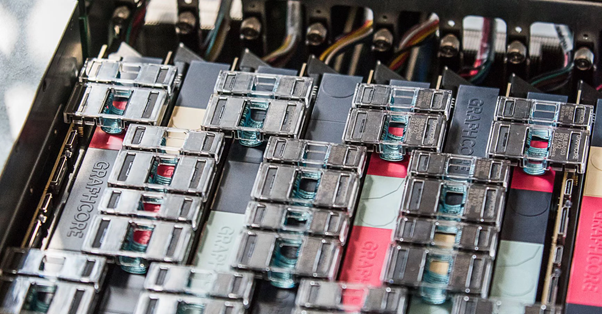

Graphcore Processor

Graphcore is a British startup that makes programmable AI processors. The firm is backed by prominent investors, some of them experts in ML. Microsoft itself invested in Graphcore last December. Following that capital raise, the firm is valued at $1.7 billion. Graphcore has long promoted the processor’s ability to accelerate AI workloads and help companies innovate.

The IPU is a highly flexible, easy-to-use parallel processor designed from the ground up to deliver high performance when training and evaluating ML models. A single server holds 16 IPUs connected with IPU-Link technology. Together, these processors can run 100,000 independent ML programmes in parallel, which Graphcore pitches as a strong fit for today’s data science and AI workflows.

The processor also comes with a full software stack and framework support, the Poplar software stack. It integrates with deep learning frameworks to help developers improve and build robust software. TensorFlow is already supported, and full PyTorch support is expected in early 2020.

Unlike other processors, the IPU was designed with the requirements of resource-intensive ML applications in mind, and it was devised to support new breakthroughs in the AI landscape. Firms can use it to develop a wide range of applications, such as self-driving cars, computer vision, and natural language processing, among others.

Benchmark

Graphcore said in its blog that it tested the IPU with BERT and trained BERT Base in 56 hours using a single IPU Server system with eight C2 IPU-Processor PCIe cards. It also reported three times higher throughput together with an improvement of over 20% in latency. Firms using the processor could see similar latency gains and, in turn, reduce product time-to-market.

The firm also said that one of its European clients has seen lower latency with the image recognition model ResNeXt. With the IPU, the client increased both accuracy and speed when delivering image search results.

ResNeXt uses grouped convolutions and depth-wise separable convolutions to improve accuracy. These split each convolution block into smaller, separable blocks, a pattern the IPU supports efficiently. Graphcore reports a 77x throughput advantage for group convolutions on the IPU.
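To make the idea of splitting a convolution into smaller blocks concrete, here is a minimal pure-Python sketch. It is illustrative only and is not Graphcore’s or ResNeXt’s implementation: the function names `conv_weight_count` and `grouped_pointwise` and the channel sizes are assumptions chosen for the example.

```python
def conv_weight_count(c_in, c_out, kernel, groups=1):
    """Weights in a kernel x kernel convolution, ignoring bias.

    With `groups` > 1, each output channel only sees c_in / groups
    input channels, so the weight count shrinks by that factor.
    """
    if c_in % groups or c_out % groups:
        raise ValueError("channels must be divisible by groups")
    return (c_in // groups) * c_out * kernel * kernel


def grouped_pointwise(x, weights):
    """Grouped 1x1 convolution at a single pixel.

    x       : list of input channel values at that pixel
    weights : one weight matrix per group; each matrix is a list of
              rows, one row per output channel of that group
    """
    groups = len(weights)
    size = len(x) // groups
    out = []
    for g, matrix in enumerate(weights):
        # Each group reads only its own slice of the input channels.
        chunk = x[g * size:(g + 1) * size]
        for row in matrix:
            out.append(sum(w * v for w, v in zip(row, chunk)))
    return out


# Hypothetical layer sizes, chosen only for illustration.
standard = conv_weight_count(128, 128, 3)               # one dense block
grouped = conv_weight_count(128, 128, 3, groups=32)     # 32 small blocks
depthwise = conv_weight_count(128, 128, 3, groups=128)  # one filter per channel
```

In this sketch, the grouped layer needs 32 times fewer weights than the dense one, but the work becomes many small, independent blocks. That kind of fine-grained parallelism is what the article says the IPU handles efficiently.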

Expert Opinion

Although various firms are developing AI processors, none has yet achieved a decisive breakthrough. Task-specific processors, however, such as Google’s Tensor Processing Unit (TPU) and other AI chips, have delivered exceptional results.

An expert from Moor Insights says the chip’s flexibility will allow developers to programme a wide range of ML applications on specialised chips.

Outlook

Various companies are building their own chips to optimise their AI workflows. Facebook, Amazon, and Google, among others, have announced their interest in developing superior chips. Consequently, it might be difficult for Graphcore to hold on to its position and lead the market.

The IPU is among the best AI processors on the market, but with established chipmakers such as Intel, NVIDIA, IBM, and AMD in the race, it will be interesting to see how Graphcore’s IPU fares as these firms release their own processors.