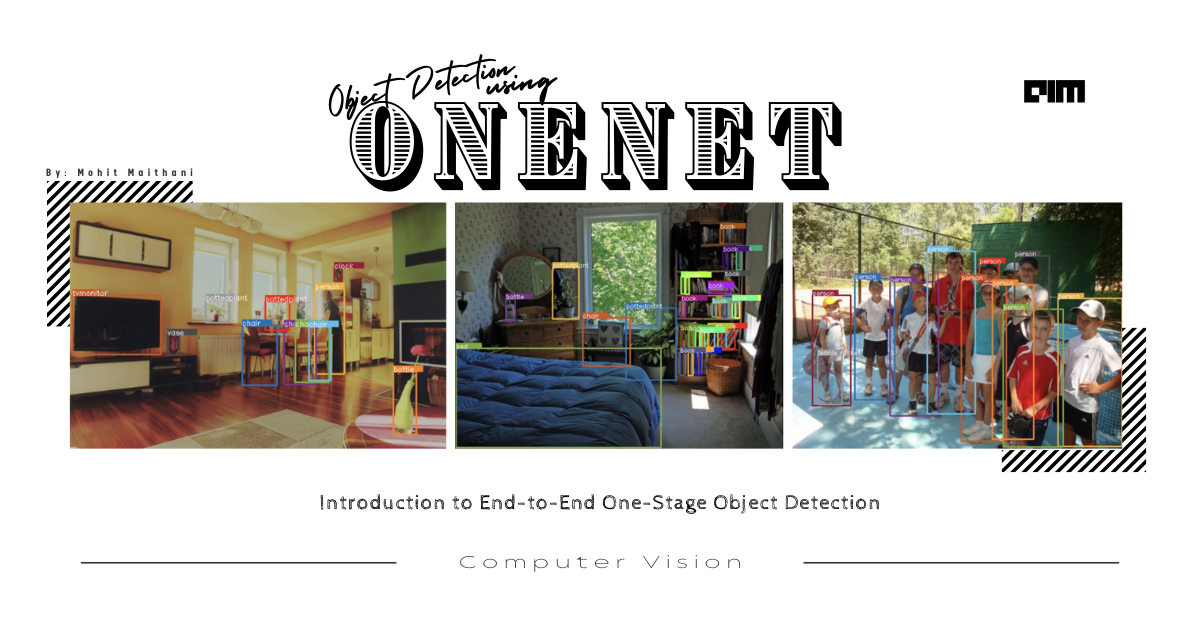

Object detection is one of the most talked-about subjects in artificial intelligence. It can be performed on images or video, and multiple objects can be detected in one shot with YOLO-style techniques or with other models such as Google's EfficientDet and CenterNet. Across all of these approaches, everyone is trying to achieve maximum accuracy with as little computation as possible. A central challenge in object detectors is label assignment: deciding which candidate samples are positive and which are negative, and assigning them an object (for a positive sample) or background (for a negative sample) accordingly. In many detectors, whether a sample is positive depends on an IoU (intersection over union) threshold. Detection is primarily done by densely enumerating candidates over the image grid, sliding-window style, and label assignment in earlier one-stage detectors made little use of classification information.

Previously, labels were assigned using only the location cost between a sample and the corresponding ground truth. Because several samples around the same object end up as positives, further post-processing with NMS (non-maximum suppression) is required. NMS is a key post-processing technique for removing redundant boxes around the same object.
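To make the role of NMS concrete, here is a minimal pure-Python sketch of greedy NMS. It is not OneNet or Detectron2 code; the (x1, y1, x2, y2) box format and the 0.5 IoU threshold are assumptions chosen for illustration.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: Sequence[Box], scores: Sequence[float], iou_threshold: float = 0.5) -> List[int]:
    """Greedy NMS: keep the best box, drop boxes overlapping it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Suppress remaining boxes whose IoU with the kept box exceeds the threshold.
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # [0, 2]
```

The two heavily overlapping boxes (IoU of about 0.82) collapse into the higher-scoring one, while the distant box survives. This suppression step is what OneNet aims to make unnecessary.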

Label assignment methods:

Several earlier label assignment techniques have been used extensively together with NMS post-processing. Let’s go through them and see why OneNet uses the minimum cost assignment technique and why it is efficient.

1. Box assignment

Many modern object detectors place thousands of pre-defined anchor boxes over the image grid and classify each of them; we call this approach “box assignment.”

Box assignment, shown in the figure above, has been used in many object detection frameworks over the years. If the IoU between an anchor box and a ground-truth box is greater than a high threshold, the anchor is labelled positive (green); if it is smaller than the threshold, it is labelled negative (red).
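A rough sketch of IoU-threshold box assignment follows. The thresholds (0.5 for positives, 0.4 for negatives, with anchors in between ignored) are assumptions in the style of RetinaNet rather than values taken from OneNet, and the anchors are hand-picked toy boxes.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
POSITIVE, NEGATIVE, IGNORE = 1, 0, -1


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def box_assignment(
    anchors: Sequence[Box],
    gt_boxes: Sequence[Box],
    pos_thresh: float = 0.5,  # assumed, RetinaNet-style
    neg_thresh: float = 0.4,  # assumed, RetinaNet-style
) -> List[Tuple[int, int]]:
    """Label each anchor by its best IoU with any ground-truth box.

    Returns (label, matched_gt_index) per anchor; the index is -1 unless positive.
    """
    result = []
    for anchor in anchors:
        ious = [iou(anchor, gt) for gt in gt_boxes]
        if not ious:
            result.append((NEGATIVE, -1))
            continue
        best = max(range(len(ious)), key=lambda g: ious[g])
        if ious[best] >= pos_thresh:
            result.append((POSITIVE, best))
        elif ious[best] < neg_thresh:
            result.append((NEGATIVE, -1))
        else:
            result.append((IGNORE, -1))  # in-between anchors are left out of training
    return result


gt = [(0, 0, 10, 10)]
anchors = [(0, 0, 10, 10), (1, 1, 11, 11), (4, 0, 14, 10), (20, 20, 30, 30)]
print(box_assignment(anchors, gt))
# [(1, 0), (1, 0), (-1, -1), (0, -1)]
```

Both of the first two anchors become positives for the same ground-truth box, which is the many-to-one assignment that makes NMS necessary.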

2. Point assignment

To avoid the complex box computation, many object detection frameworks use point assignment instead. It treats the grid points of the feature map directly as samples and predicts the offsets from each grid point to the object box boundaries. Label assignment is simpler in the point assignment method.
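The sketch below illustrates point assignment under assumptions of our own: an FCOS-style rule where every grid point falling inside the ground-truth box is positive, and grid points placed at cell centres on a stride-4 map. Other point-based detectors use different rules (for example, only the point nearest the object centre).

```python
from typing import List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)
Target = Optional[Tuple[float, float, float, float]]  # (l, t, r, b), or None if negative


def grid_points(feat_h: int, feat_w: int, stride: int) -> List[Tuple[int, int]]:
    """Image-space (x, y) of each feature-map cell, at the cell centre (an assumption)."""
    return [
        (j * stride + stride // 2, i * stride + stride // 2)
        for i in range(feat_h)
        for j in range(feat_w)
    ]


def point_assignment(points: List[Tuple[int, int]], gt_box: Box) -> List[Target]:
    """Assumed FCOS-style rule: a point inside the box is positive and regresses its
    distances to the four box boundaries; every other point is negative."""
    x1, y1, x2, y2 = gt_box
    targets: List[Target] = []
    for x, y in points:
        l, t, r, b = x - x1, y - y1, x2 - x, y2 - y
        targets.append((l, t, r, b) if min(l, t, r, b) > 0 else None)
    return targets


points = grid_points(4, 4, stride=4)  # a 4 x 4 feature map of a 16 x 16 image
targets = point_assignment(points, (0, 0, 8, 8))
print(sum(t is not None for t in targets))  # 4 positive points
print(targets[0])  # (2, 2, 6, 6) for the point at (2, 2)
```

Four grid points land inside the box, so a single object again yields several positives.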

3. Minimum cost assignment (proposed by OneNet):

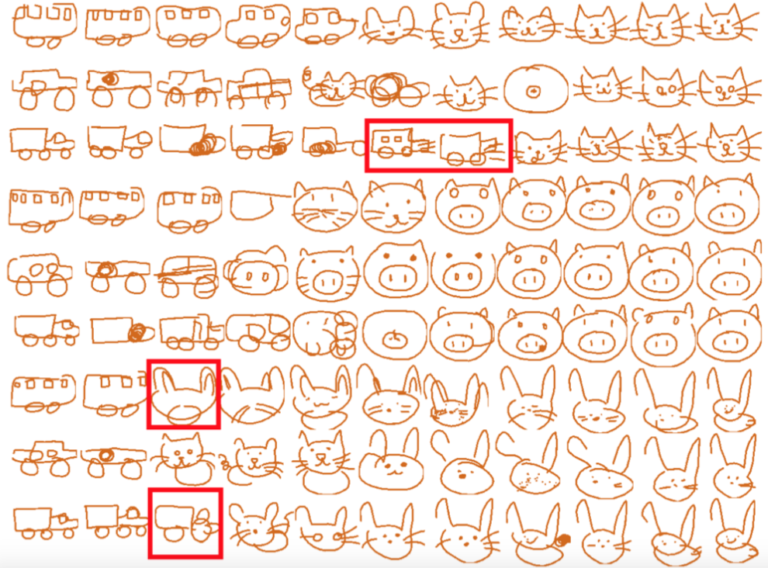

Both of the label assignment techniques discussed above share a major problem: they suffer from many-to-one assignment, meaning there is more than one positive sample for a single ground-truth box. This produces redundant results, which makes NMS (non-maximum suppression) post-processing necessary.

In minimum cost assignment, each ground-truth box is assigned exactly one positive sample, the one with the minimum cost, and all other samples are negative. The cost combines a classification cost and a location cost between a sample and the ground truth.

It is a straightforward method: for each ground truth, only one sample is positive, no handcrafted heuristics are involved, and NMS (non-maximum suppression) is not needed.
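Here is a simplified sketch of minimum cost assignment. In OneNet the cost of a sample for a ground truth combines a classification cost and a location cost; the specific choices below are assumptions for illustration only: the classification cost is just the negative predicted probability of the ground-truth class, the location cost is the L1 distance between normalized boxes, both weights are hypothetical 1.0 defaults, and any further cost terms used in the paper are omitted.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), normalized to [0, 1]


def l1_cost(pred: Box, gt: Box) -> float:
    """Location cost: L1 distance between predicted and ground-truth coordinates."""
    return sum(abs(p - g) for p, g in zip(pred, gt))


def minimum_cost_assignment(
    probs: Sequence[Sequence[float]],  # per-sample class probabilities
    pred_boxes: Sequence[Box],         # per-sample predicted boxes
    gt_classes: Sequence[int],
    gt_boxes: Sequence[Box],
    w_cls: float = 1.0,                # hypothetical weight
    w_loc: float = 1.0,                # hypothetical weight
) -> List[int]:
    """Give each ground truth exactly one positive: the sample with the lowest cost.

    Returns one label per sample: the assigned class index, or -1 for background.
    """
    labels = [-1] * len(pred_boxes)
    for gt_cls, gt_box in zip(gt_classes, gt_boxes):
        costs = [
            # Simplified classification cost: lower when the sample is confident in gt_cls.
            -w_cls * probs[i][gt_cls]
            # Location cost: lower when the predicted box is close to the ground truth.
            + w_loc * l1_cost(pred_boxes[i], gt_box)
            for i in range(len(pred_boxes))
        ]
        best = min(range(len(costs)), key=lambda i: costs[i])
        labels[best] = gt_cls  # one positive per ground truth; all others stay background
    return labels


probs = [[0.9, 0.1], [0.6, 0.2], [0.1, 0.8]]
pred_boxes = [(0.1, 0.1, 0.4, 0.4), (0.12, 0.1, 0.4, 0.42), (0.5, 0.5, 0.9, 0.9)]
gt_classes = [0, 1]
gt_boxes = [(0.1, 0.1, 0.4, 0.4), (0.5, 0.5, 0.9, 0.9)]
print(minimum_cost_assignment(probs, pred_boxes, gt_classes, gt_boxes))
# [0, -1, 1]
```

Sample 1 overlaps the first ground-truth box with an IoU of roughly 0.88, so IoU-threshold box assignment would make it a second positive; here it is labelled background because sample 0 has a lower total cost.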

OneNet

Today we are going to discuss a recently released object detector: OneNet. OneNet is an end-to-end, one-stage object detector that proposes new techniques for object detection, such as minimum cost assignment. Its paper was published on 10 Dec 2020 by Peize Sun, Yi Jiang, Enze Xie, Zehuan Yuan, Changhu Wang, and Ping Luo from The University of Hong Kong, in collaboration with ByteDance AI Lab.

OneNet is a fully convolutional one-stage detector that needs no post-processing such as NMS (non-maximum suppression).

It introduces several new techniques, most notably minimum cost assignment, which removes NMS entirely, as we saw in the label assignment discussion above. OneNet has other advantages too:

- It achieves 35.0 AP at 80 FPS using ResNet-50 on the COCO dataset.

- No NMS (non-maximum suppression) or max-pooling for post-processing.

- The whole network of OneNet is fully convolutional.

- End-to-end training.

- No RoI operations.

- Label assignment is based on a minimum cost that includes a classification cost, rather than on complex bipartite matching.

Architecture of OneNet

The OneNet pipeline works as follows:

- The input image has size H x W x 3 (three colour channels).

- The backbone generates a feature map of size H/4 x W/4 x C.

- The head produces a classification prediction of size H/4 x W/4 x K, where K is the number of categories,

- and a regression prediction of size H/4 x W/4 x 4.

- The final output is the top-k scoring boxes.
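The tensor shapes in this pipeline can be tabulated with a few lines of Python. The stride of 4 comes from the H/4 x W/4 feature map above; the 512 x 512 input, C = 64 channels and K = 80 categories (the number of COCO categories) are example values we chose, and H and W are assumed divisible by 4.

```python
from typing import Dict, Tuple


def onenet_output_shapes(
    h: int, w: int, c: int, k: int, stride: int = 4
) -> Dict[str, Tuple[int, int, int]]:
    """(height, width, channels) of each tensor; assumes h and w divisible by stride."""
    fh, fw = h // stride, w // stride
    return {
        "input": (h, w, 3),             # RGB image
        "feature_map": (fh, fw, c),     # backbone output at stride 4
        "classification": (fh, fw, k),  # one score per category per grid point
        "regression": (fh, fw, 4),      # four boundary offsets per grid point
    }


shapes = onenet_output_shapes(512, 512, c=64, k=80)
for name, shape in shapes.items():
    print(name, shape)
fh, fw, _ = shapes["classification"]
print("candidate boxes:", fh * fw)  # 16384
```

Each of the 128 x 128 = 16,384 grid points produces K class scores and one box, which forms the candidate pool from which the top-k boxes are selected.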

1. Backbone

The backbone has a bottom-up and top-down structure:

- The bottom-up component is a ResNet, which OneNet uses to produce multi-scale feature maps.

- The top-down component generates the final feature map used for object recognition.
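As a toy illustration of a top-down pathway, the sketch below uses an FPN-style merge on single-channel 2D maps: start from the coarsest map, upsample it 2x with nearest-neighbour interpolation, and add the next finer map until the stride-4 resolution is reached. Real implementations operate on multi-channel tensors with learned convolutions; whether OneNet fuses features exactly this way is an assumption here.

```python
from typing import List

Grid = List[List[float]]  # a single-channel 2D feature map


def upsample2x(x: Grid) -> Grid:
    """Nearest-neighbour 2x upsampling: every value becomes a 2x2 block."""
    out: Grid = []
    for row in x:
        wide = [v for v in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def add(a: Grid, b: Grid) -> Grid:
    """Element-wise sum of two maps of the same size."""
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def top_down(features: List[Grid]) -> Grid:
    """Merge bottom-up maps ordered fine to coarse (e.g. strides 4, 8, 16, 32).

    Toy FPN-style fusion (an assumption, not OneNet's code): no lateral convolutions.
    """
    merged = features[-1]
    for lateral in reversed(features[:-1]):
        merged = add(upsample2x(merged), lateral)
    return merged


def ones(n: int) -> Grid:
    return [[1.0] * n for _ in range(n)]


# Bottom-up maps of a 32 x 32 image at strides 4, 8, 16 and 32.
fused = top_down([ones(8), ones(4), ones(2), ones(1)])
print(len(fused), len(fused[0]), fused[0][0])  # 8 8 4.0
```

The output has the resolution of the finest (stride-4) map while every position has accumulated information from all coarser levels.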

2. Head

The head performs classification and localization at each grid point of the feature map using two parallel convolutional layers:

- The classification layer predicts, for each of the K object categories, the probability that an object of that category is present.

- The location layer predicts the offsets from the grid point to the four boundaries of the ground-truth box.
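The sketch below shows how the outputs of the two parallel layers at one grid point could be turned into a detection. It assumes the grid point sits at the centre of its stride-4 cell (an FCOS-style convention, not confirmed from the OneNet code), that offsets are given in image pixels as (left, top, right, bottom), and that class scores are independent per-category probabilities.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in image pixels


def grid_point_to_image(i: int, j: int, stride: int = 4) -> Tuple[int, int]:
    """Image (x, y) of grid cell (row i, column j); cell-centre convention is assumed."""
    return j * stride + stride // 2, i * stride + stride // 2


def decode_prediction(
    i: int,
    j: int,
    class_probs: Sequence[float],              # K probabilities from the classification layer
    offsets: Tuple[float, float, float, float],  # (l, t, r, b) from the location layer
    stride: int = 4,
) -> Tuple[int, float, Box]:
    """Combine the classification and location outputs at one grid point."""
    x, y = grid_point_to_image(i, j, stride)
    l, t, r, b = offsets
    box = (x - l, y - t, x + r, y + b)
    best_class = max(range(len(class_probs)), key=lambda k: class_probs[k])
    return best_class, class_probs[best_class], box


print(decode_prediction(10, 20, [0.1, 0.7, 0.2], (5, 6, 7, 8)))
# (1, 0.7, (77, 36, 89, 50))
```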

3. Training

As discussed above, OneNet uses minimum cost assignment for label assignment during training.

4. Inference

OneNet uses neither max-pooling nor NMS, so the final output is simply the top-k (e.g. 100) scoring boxes.
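Inference then reduces to a plain top-k selection. In this sketch (our assumption about how the selection is organised, not OneNet's code), every (grid point, category) pair is a candidate, and the k highest-scoring ones are returned directly, with no NMS or max-pooling step.

```python
import heapq
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def topk_detections(
    cls_scores: Sequence[Sequence[float]],  # one list of K class scores per grid point
    boxes: Sequence[Box],                   # one decoded box per grid point
    k: int = 100,
) -> List[Tuple[float, int, Box]]:
    """Return up to k (score, class, box) triples, highest score first, without NMS."""
    candidates = (
        (score, cls, boxes[loc])
        for loc, scores in enumerate(cls_scores)
        for cls, score in enumerate(scores)
    )
    return heapq.nlargest(k, candidates, key=lambda det: det[0])


scores = [[0.9, 0.1], [0.2, 0.8], [0.05, 0.3]]
boxes = [(0, 0, 10, 10), (20, 20, 40, 40), (50, 50, 60, 60)]
print(topk_detections(scores, boxes, k=2))
# [(0.9, 0, (0, 0, 10, 10)), (0.8, 1, (20, 20, 40, 40))]
```

Because minimum cost assignment trains the network to give each object a single high-scoring prediction, this simple selection is meant to be all that remains at inference time.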

Implementation

Let’s see how to get started with OneNet. It provides two models: dcn (with deformable convolutions) for accuracy, and nodcn for easy deployment.

- Download dcn from here and nodcn from here.

- Download torchvision’s ResNet-18.pkl.

Installation

The OneNet code is based on Facebook’s Detectron2 and DETR. It requires Linux or macOS with Python 3.6+, PyTorch 1.5+, and a torchvision version that matches the PyTorch installation.

The steps to install, train, evaluate, and visualize are as follows:

- Install PyTorch for your CUDA version from here. On Google Colab, you can install PyTorch, torchvision, and torchaudio with the command below:

```
!pip install torch==1.7.1+cu110 torchvision==0.8.2+cu110 torchaudio==0.7.2 -f https://download.pytorch.org/whl/torch_stable.html
```

- Clone and install from source:

```
!git clone https://github.com/PeizeSun/OneNet.git
# In Colab each "!" command runs in its own subshell, so "!cd" does not persist; use %cd
%cd OneNet
!python setup.py build develop
```

- Link the COCO dataset path to datasets/coco inside the cloned OneNet repo:

```
!mkdir -p datasets/coco
!ln -s /path_to_coco_dataset/annotations datasets/coco/annotations
!ln -s /path_to_coco_dataset/train2017 datasets/coco/train2017
!ln -s /path_to_coco_dataset/val2017 datasets/coco/val2017
```

- Train

```
!python projects/OneNet/train_net.py --num-gpus 8 \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml
```

- Evaluate

```
!python projects/OneNet/train_net.py --num-gpus 8 \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml \
    --eval-only MODEL.WEIGHTS path/to/model.pth
```

- Visualize

```
!python demo/demo.py \
    --config-file projects/OneNet/configs/onenet.res50.dcn.yaml \
    --input path/to/images --output path/to/save_images --confidence-threshold 0.4 \
    --opts MODEL.WEIGHTS path/to/model.pth
```

Comparison to CenterNet

CenterNet is one of the most popular one-stage detectors and was a follow-up to CornerNet. CornerNet uses corner keypoints to overcome the limitations of anchor-based methods, but it had notable accuracy flaws, including in small object detection, so CenterNet tried to overcome the restrictions of CornerNet by localizing objects with keypoint triplets (a center keypoint plus two corners).

OneNet achieves performance comparable to CenterNet in both speed and detection accuracy, while needing neither NMS nor max-pooling post-processing.

Conclusion

Using minimum cost assignment and removing NMS proved a great success for OneNet. We have seen how earlier label assignment methods needed extra computation for NMS post-processing, and how the OneNet pipeline performs object detection efficiently. We have also seen that the classification cost is the key to the success of end-to-end one-stage object detection.

This overview of OneNet is based on the research paper published by the OneNet team on arXiv. The OneNet source code is open source on GitHub. The coding tutorial is available at: https://github.com/mmaithani/data-science/blob/main/OneNet.ipynb