OpenAI has released DALL.E, a 12-billion-parameter version of GPT-3 that generates images from text prompts.

The name is a play on the surrealist painter Salvador Dalí and the Pixar movie WALL.E. DALL.E is a transformer language model that receives both the text and the image as a single stream of data containing up to 1280 ‘tokens’.

Simply put, a token is any symbol from a discrete vocabulary. For example, each letter from A to Z in the English alphabet is a token. In DALL.E, however, tokens represent both the text and the image input.
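To make the "single stream" idea concrete, here is a minimal Python sketch of how text and image tokens could be packed into one 1280-token sequence. The split into 256 text tokens and 1024 image tokens, and the two vocabulary sizes, follow OpenAI's public description of DALL.E. The padding id, the id offset for image tokens, and the function name `build_stream` are assumptions made for illustration only, not OpenAI's actual implementation.

```python
# Sizes as described publicly by OpenAI for DALL.E.
TEXT_LEN = 256       # maximum number of text tokens
IMAGE_LEN = 1024     # image tokens (a 32 x 32 grid)
TEXT_VOCAB = 16384   # text vocabulary size
IMAGE_VOCAB = 8192   # image vocabulary size

PAD_ID = 0  # hypothetical padding id, chosen only for this sketch


def build_stream(text_tokens, image_tokens):
    """Concatenate text and image token ids into one fixed-length stream."""
    if len(text_tokens) > TEXT_LEN:
        raise ValueError("caption is longer than the text budget")
    if len(image_tokens) != IMAGE_LEN:
        raise ValueError("expected exactly 1024 image tokens")

    # Pad the caption so the image always starts at the same position.
    padded_text = list(text_tokens) + [PAD_ID] * (TEXT_LEN - len(text_tokens))

    # Assumption: shift image ids past the text vocabulary so the two
    # kinds of token never share an id in the combined stream.
    shifted_image = [TEXT_VOCAB + t for t in image_tokens]

    return padded_text + shifted_image


# Toy ids standing in for real tokenizer and image-encoder output.
stream = build_stream([5, 9, 2], [0] * IMAGE_LEN)
print(len(stream))
```

The model then treats this stream like any other sequence and predicts it one token at a time, which is why the same architecture can handle both captions and pictures.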

DALL.E can render an image from scratch and also alter aspects of an image using text prompts.

Credit: OpenAI

For example, in the above image, the text prompt is “a pentagon green frame”. Altering any of the three aspects here, namely shape (pentagon), colour (green) or object (frame), would generate a different set of images.

When the prompt above is changed to “triangle red clock”, DALL.E gives the following set of images.

Credit: OpenAI

The DALL.E model is also trained to work with multiple objects in an image. For example, given the text prompt “a hedgehog wearing a yellow hat, red gloves, blue jacket, and pink pants”, the model must not only compose each of the pieces correctly (hedgehog, hat, gloves, jacket, and pants) but also associate each object with the right attribute (yellow hat, red gloves, blue jacket, and pink pants on a hedgehog) without mixing them up.

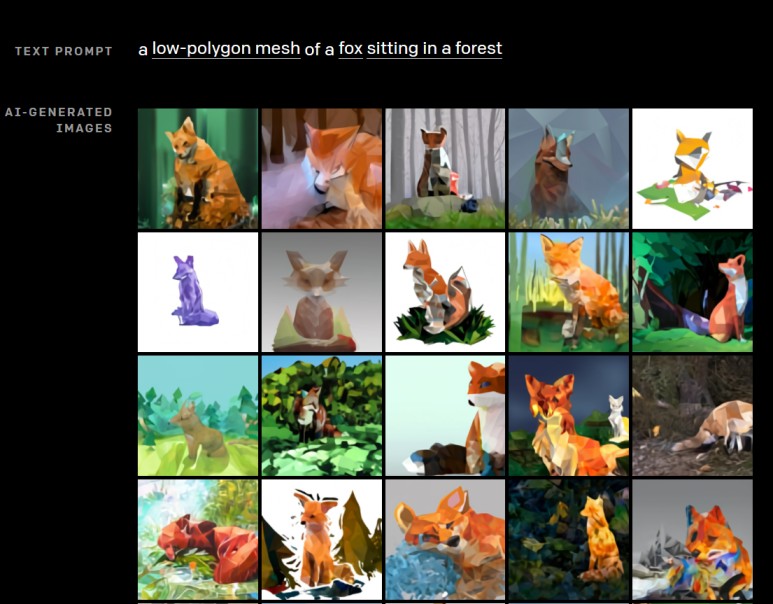

The OpenAI team has also tested DALL.E’s capabilities in other specific situations, such as generating 3D imagery, cross-sectional views, and images based on contextual text captions.

Interestingly, DALL.E was often seen to take creative liberties to generate images rich in details without explicit prompts, making it a cut above 3D rendering engines that require specific and unambiguous inputs.

The DALL.E model can also perform image-to-image translation tasks based on prompts.

CLIP

Following the announcement of DALL.E, the OpenAI research team has also demonstrated a neural network called Contrastive Language-Image Pre-training or CLIP. This neural network has been trained on 400 million pairs of images and text.

In a paper introducing the model, the OpenAI research team wrote, “We find that CLIP, similar to the GPT family, learns to perform a wide set of tasks during pretraining, including object character recognition (OCR), geo-localisation, action recognition, and many others. We measure this by benchmarking the zero-shot transfer performance of CLIP on over 30 existing datasets and find it can be competitive with prior task-specific supervised models.”

CLIP has proved to be highly efficient, flexible, and more general than earlier approaches. Further, CLIP is far less expensive to build than conventional deep learning vision models, which depend on manually labelled datasets. CLIP relies on text-image pairs already available on the internet and can adapt to a broader range of visual classification tasks without requiring additional training examples.
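The idea of classifying without additional training examples can be sketched in a few lines of Python. In this kind of zero-shot setup, each candidate label is written as a short caption, the image and the captions are turned into vectors, and the caption whose vector is most similar to the image vector wins. The tiny three-number vectors below are made-up stand-ins for real encoder outputs, and the function name `zero_shot_classify` and the scale value of 100 are assumptions for illustration, not CLIP's actual code.

```python
import math


def normalize(vector):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def zero_shot_classify(image_embedding, text_embeddings, scale=100.0):
    """Return one probability per caption from scaled cosine similarity."""
    image = normalize(image_embedding)
    logits = []
    for text in text_embeddings:
        caption = normalize(text)
        similarity = sum(a * b for a, b in zip(caption, image))
        logits.append(scale * similarity)

    # Softmax, subtracting the maximum for numerical stability.
    largest = max(logits)
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


labels = ["a photo of a dog", "a photo of a cat", "a photo of a car"]

# Toy embeddings: in a real system these would come from the text
# encoder and the image encoder respectively.
text_embeddings = [
    [1.0, 0.1, 0.0],
    [0.1, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
image_embedding = [0.9, 0.2, 0.1]

probs = zero_shot_classify(image_embedding, text_embeddings)
best = labels[probs.index(max(probs))]
print(best)
```

Changing the task only means changing the list of captions, which is why no new labelled examples are needed.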

Two Sides To A Story

The DALL.E model is already creating ripples in the research community. The OpenAI team is counting on the capabilities of this model to deliver a broader societal impact, and is also looking at DALL.E’s potential influence on specific processes and professions. However, DALL.E is not exactly foolproof. Experts fear that, like GPT-3, both DALL.E and CLIP can reinforce racial and gender stereotypes. A bias test found the CLIP model was likely to miscategorise people under 20 as criminals or non-humans. Further, the model was more likely to label men as criminals than women.