The world mourns the loss of Gordon Moore, whose impact on the field of electronics is unparalleled. A visionary leader and philanthropist, Moore leaves a legacy that will continue to inspire and influence generations to come. Here is a look at the magnitude of the difference he made in his lifetime.

Birth of Silicon Valley

Moore was the last surviving member of the “traitorous eight”, a legendary group of engineers who left William Shockley’s research lab because they were dissatisfied with his management style. In 1957, they established their own company, Fairchild Semiconductor.

Besides Moore, the group consisted of Julius Blank, Victor Grinich, Jean Hoerni, Eugene Kleiner, Jay Last, Robert Noyce (who later co-founded Intel with Moore), and Sheldon Roberts. The legacy of Fairchild is such that its former employees went on to form several other groundbreaking companies, such as Intel, National Semiconductor Corp, Advanced Micro Devices, LSI Logic Corp, and others.

These companies, established in an area now known as Silicon Valley, continue to shape and define the technology industry to this day.

The traitorous eight

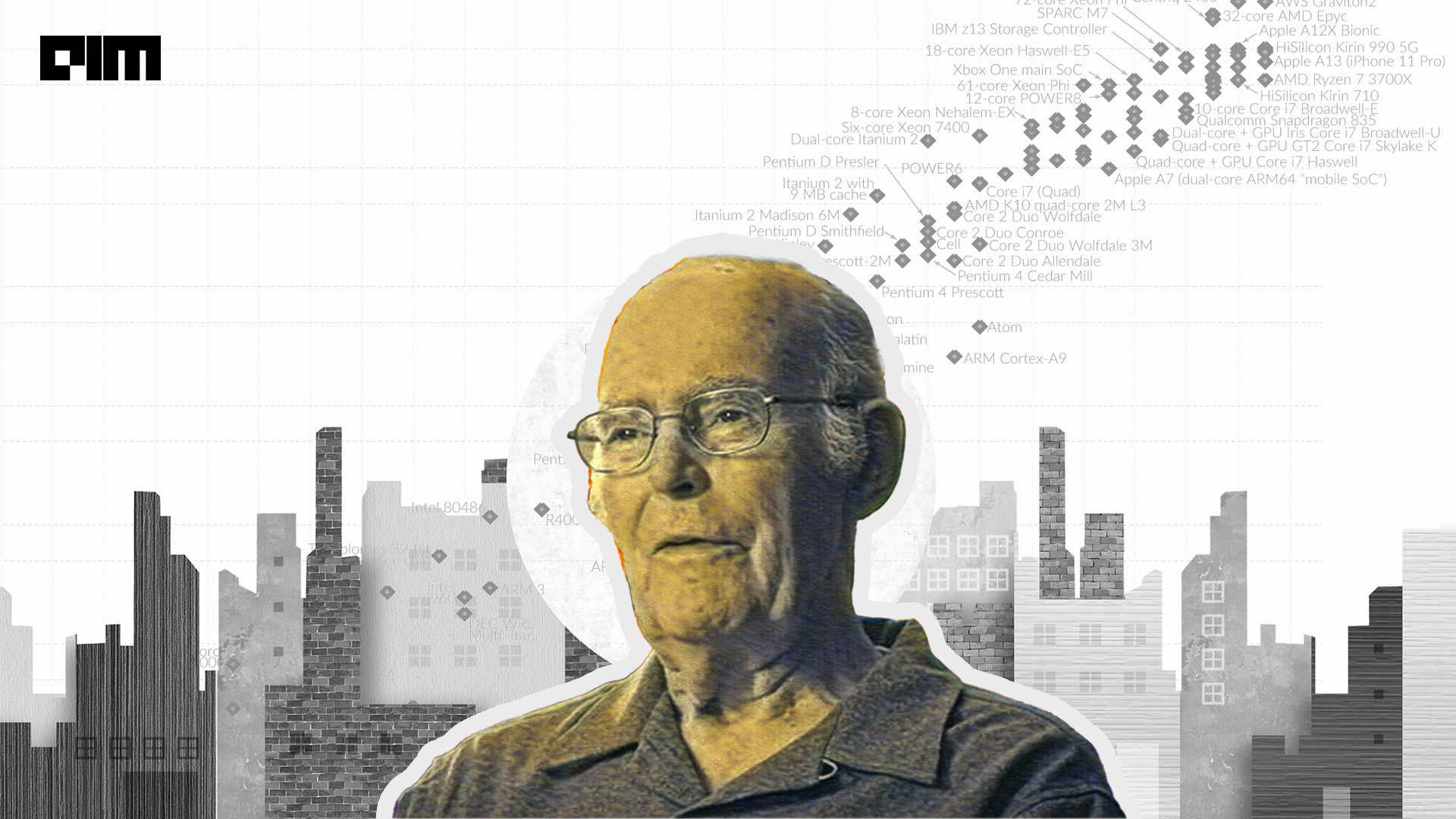

Moore’s law

In 1965, Moore published a paper describing the course that integrated circuits would follow. He noted that the number of components per integrated circuit had doubled “every year” from the invention of the integrated circuit in 1958 until 1965 and predicted that the trend would continue for at least ten years.

A decade after the initial proposal, in 1975, Moore revised the doubling period for transistor density to every two years. The origins of the term ‘Moore’s law’ itself remain somewhat unclear, however, with some scholars suggesting that the phrase was first coined in Electronics magazine.
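To get a feel for how much the doubling period matters, the two rates mentioned above can be compared with a little arithmetic. The sketch below is only an illustration: the function name `projected_count` and the starting count of one component are assumptions made for the example, not figures from Moore’s paper.

```python
def projected_count(initial_count: float, years: float, doubling_period_years: float) -> float:
    """Project a component count that doubles once every `doubling_period_years`.

    Hypothetical helper for illustration only: growth = initial * 2^(years / period).
    """
    return initial_count * 2 ** (years / doubling_period_years)


# Growth factor over ten years, starting from a single component (assumed baseline).
annual_doubling = projected_count(1, 10, 1.0)    # doubling every year
biennial_doubling = projected_count(1, 10, 2.0)  # doubling every two years

print(f"Doubling every year for 10 years: {annual_doubling:.0f}x")
print(f"Doubling every two years for 10 years: {biennial_doubling:.0f}x")
```

Over the same decade, yearly doubling compounds to roughly a thousandfold increase, while doubling every two years gives a 32-fold increase, which is why the revised timeframe was a significant change.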

The modern electronics industry is linked to Moore’s law in various ways: processing speeds, memory capacity, sensors, and even the number and size of pixels in digital cameras have all grown exponentially, in line with the law. This sustained, exponential improvement has revolutionised the digital electronics industry, driving rapid technological change across the world economy in recent decades.

“All I was trying to do was get that message across, that by putting more and more stuff on a chip we were going to make all electronics cheaper,” Moore said in a 2008 interview.

For decades, we have been told every year that Moore’s law is about to die, yet it has remained alive and well, and many believe it will stay that way for a long time to come. This is not to say that we will never see its demise; that will certainly happen at some point. However, it is important to remember how the law has been the guiding force for pushing the boundaries of what is possible on a silicon substrate.

Along came Intel

Let’s backtrack a few years. In his April 1965 article for Electronics magazine, Moore almost played the role of a fortune teller when listing what silicon microchips might make possible. “He offered an impressive, visionary list of possibilities – home computers, automatic controls for automobiles, portable communications equipment, and the electronic wristwatch – a list that today seems conservative but in 1965 was startling, exciting, and provocative,” reads a Wired article.

Then came 1968, the year when Moore founded Intel (short for integrated electronics) with his Fairchild colleague Robert Noyce. The company went on to pioneer the innovations needed to keep up with Moore’s own law.

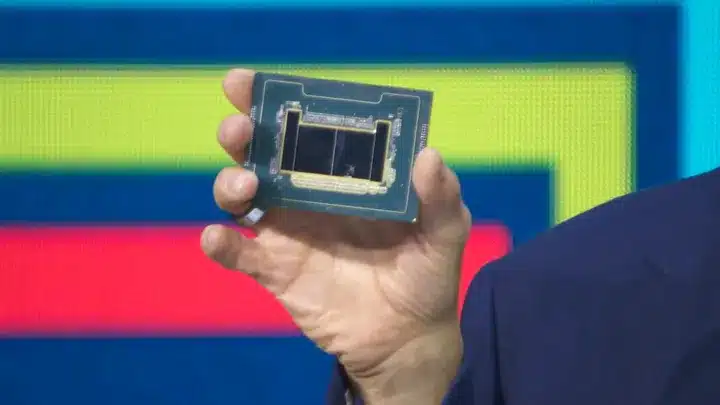

Three years after it was formed, in 1971, Intel introduced the world’s first commercially available microprocessor, the Intel 4004. The chip came about when a Japanese calculator manufacturer asked Intel for 12 different chips for different functions; instead, Intel designed a single programmable chip that could handle all 12 tasks. This chip was a breakthrough that announced the rise of the modern digital age.

From there, with one innovation after another, the era of personal computers was just around the corner. Within two years, the company released its second microprocessor, the 8-bit CPU known as the 8008, followed by the 8080 microprocessor two years after that. The 8080 will go down in history as the chip that powered some of the first personal computers, such as the Altair 8800, SOL-20, and IMSAI 8080. The same year, Moore also became the CEO of the company, replacing Noyce.

From left: Andy Grove, Robert Noyce and Gordon Moore, all of whom would serve first as Intel’s chief executive and then as its chairman at some point

During the 1970s, Intel experienced a period of significant triumphs, including its debut on the Fortune 500 list. This decade-long success story reached its pinnacle when IBM decided to use Intel’s 8088 chip in its personal computer, which was launched in 1981. This laid the groundwork for the development of the Wintel or Intel architecture, also known as x86, which went on to dominate the personal computer market for the next four decades. The use of the 16-bit 8088 processor in the IBM PC signalled the beginning of a new era, where personal computers emerged as powerful business tools.

Moore remained chairman and CEO until 1987, when he gave up the CEO role while continuing as chairman. He was named chairman emeritus in 1997.

Moore, the Philanthropist

Moore was an active philanthropist for many years. He is known for his contributions towards scientific research, environmental conservation, and educational initiatives through the Gordon and Betty Moore Foundation, established in 2000. He was a particularly strong advocate for science education, with the foundation funding initiatives to improve science and mathematics education in schools.

Since its inception, the foundation has donated more than $5.1 billion to charitable causes. Moore received numerous awards during his lifetime, including the Carnegie Medal of Philanthropy for his philanthropic work and the IEEE Medal of Honor.