The year 2020 saw many exciting developments in machine learning. As 2020 comes to an end, here is a roundup of these innovations across machine learning domains such as reinforcement learning, natural language processing (NLP), ML frameworks such as PyTorch and TensorFlow, and more.

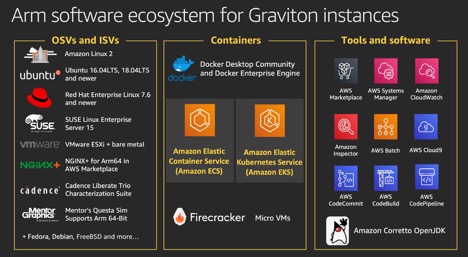

Graviton Processors

Arm-based Graviton processors went mainstream in 2020. The Graviton2 chip packs 30 billion transistors and 64-bit Arm cores, and was built by Annapurna Labs, an Israel-based engineering company that AWS acquired in 2015. AWS uses it to power workloads including memory-intensive ones such as real-time big data analytics. AWS reports up to 40% better price performance than comparable x86-based instances, which makes Graviton an emerging alternative to x86 processors for machine learning and shifts the cloud market away from Intel's dominance.

Image courtesy: AWS

Reinforcement Learning

Reinforcement learning saw significant progress in 2020, with industries accelerating the commercialization of reinforcement learning algorithms. Industry and academia collaborated more closely on implementing research papers, including work on autonomous vehicles that must make complex decisions in dynamic environments with both discrete and continuous state spaces. A number of corporations have also explored reinforcement learning for edge computing in IoT and Industrial IoT applications.

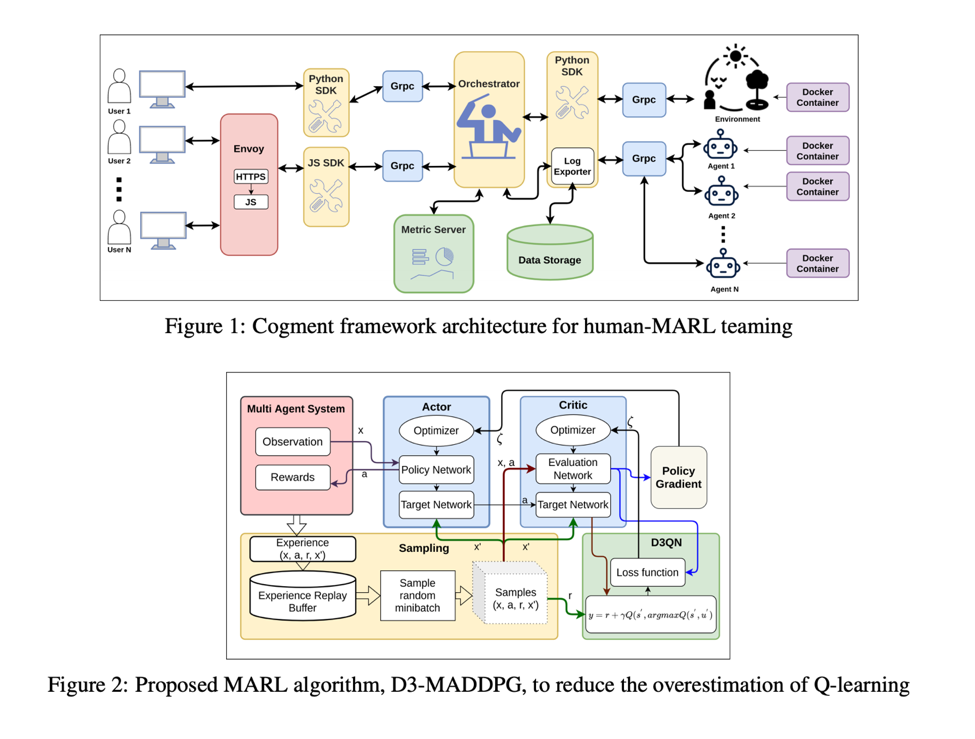

Significant advancements include COGMENT, a multi-agent reinforcement learning (MARL) framework in which agents interact with one another to make effective use of observations and rewards in highly dynamic environments. Researchers also developed human-MARL learning, a setting in which humans cannot achieve the goal without an agent, and agents cannot accomplish it without the human.

A new hybrid MARL algorithm, D3-MADDPG, combines dueling double deep Q-learning with MADDPG (multi-agent deep deterministic policy gradient) to train decentralized policy rollouts in parallel with a joint centralized policy. A graph convolutional reinforcement learning algorithm has been introduced to learn cooperation among humans and multiple agents in human-MARL environments. DeepMind introduced the Behaviour Suite for Reinforcement Learning (bsuite) in 2020 with a Python implementation. Its GitHub repository also includes examples that use OpenAI Baselines and Dopamine as reference implementations.
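
The two single-agent ingredients named in "dueling double deep Q-learning" can be sketched in plain Python: the dueling head combines a state value with mean-centred advantages, and the double Q-learning target lets one network choose the next action while another scores it. This is a minimal illustration under our own assumptions (plain lists stand in for network outputs, and the function names are hypothetical); it is not D3-MADDPG or its multi-agent training loop.

```python
# Sketch of the dueling and double Q-learning ingredients for one agent with a
# discrete action set. Plain lists stand in for network outputs; the function
# names are hypothetical, and this is not the full D3-MADDPG algorithm.

def dueling_q_values(state_value, advantages):
    """Dueling head: Q(s, a) = V(s) + A(s, a) - mean_a' A(s, a').

    Subtracting the mean advantage makes the split between V and A identifiable.
    """
    mean_adv = sum(advantages) / len(advantages)
    return [state_value + a - mean_adv for a in advantages]


def double_q_target(reward, next_q_online, next_q_target, gamma=0.99, done=False):
    """Double Q-learning target: the online network picks the next action and
    the target network scores it, which reduces overestimation bias."""
    if done:
        return reward
    best = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best]


print(dueling_q_values(1.0, [0.5, -0.5, 0.0]))                           # [1.5, 0.5, 1.0]
print(double_q_target(1.0, [0.2, 0.9, 0.1], [5.0, 2.0, 7.0], gamma=0.5))  # 2.0
```

A plain max over the target network's values would have bootstrapped from 7.0 and produced 4.5; decoupling selection from evaluation is what tempers that optimism.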

Another 2020 approach trains agents with randomized convolutional neural networks so that they generalize across randomized environments, evaluated on 2D CoinRun, 3D DeepMind Lab, and 3D robotics control tasks. Berkeley's 2020 research on deep reinforcement learning found that adversarial policies, themselves trained with reinforcement learning against a fixed victim agent, can reliably defeat that agent. An encoder-decoder neural network has been combined with reinforcement learning to search for the best-scoring directed acyclic graph (DAG) for causal discovery. Model-free reinforcement learning algorithms have learned effective policies in Atari games, but typically need enormous numbers of environment interactions. By contrast, SimPLe (Simulated Policy Learning) is a model-based algorithm that learns a predictive model of the Atari environment and trains its policy inside that learned model.
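
The randomized-network idea can be illustrated with a single randomly initialised convolution applied to an observation: drawing a new kernel at each training iteration changes the observation's appearance while preserving its spatial layout, which is meant to push the agent toward features that carry over to visually different environments. The sketch below is pure Python on a single-channel image, assumes Xavier (Glorot) normal initialisation as a plausible choice, and uses a hypothetical function name; it is not the authors' code.

```python
import math
import random

# Sketch of network randomization: pass an observation through a freshly
# initialised random convolution so appearance varies but layout is kept.
# Single channel, pure Python; `random_conv` is a hypothetical name.

def random_conv(image, kernel_size=3, rng=None):
    """Apply one randomly initialised k x k convolution (cross-correlation,
    zero padding, stride 1) to a 2-D list of floats; output has the same shape."""
    rng = rng or random.Random()
    fan = kernel_size * kernel_size              # fan_in == fan_out for 1 channel
    std = math.sqrt(2.0 / (fan + fan))           # Xavier/Glorot normal std (assumed)
    kernel = [[rng.gauss(0.0, std) for _ in range(kernel_size)]
              for _ in range(kernel_size)]
    pad = kernel_size // 2
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in range(kernel_size):
                for dj in range(kernel_size):
                    y, x = i + di - pad, j + dj - pad
                    if 0 <= y < h and 0 <= x < w:   # zero padding outside the image
                        acc += kernel[di][dj] * image[y][x]
            out[i][j] = acc
    return out


rng = random.Random(0)
obs = [[0.0, 1.0, 0.0],
       [1.0, 1.0, 1.0],
       [0.0, 1.0, 0.0]]
# A new kernel is drawn on every call, e.g. once per training iteration.
view_a = random_conv(obs, rng=rng)
view_b = random_conv(obs, rng=rng)
```

Because the kernel changes from call to call, `view_a` and `view_b` show the same cross-shaped object with different textures, which is the kind of variation the agent is trained to ignore.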

Image courtesy: arXiv

PyTorch vs. TensorFlow

Facebook released PyTorch in 2017, and Google released TensorFlow in 2015. In 2020, the line between them blurred as the two frameworks converged in popularity and functionality. TensorFlow 2.0 brought a major revamp of the programming API, making Keras part of the main API. TensorFlow's static computational graphs are well suited to packaging models and running them on devices such as CPUs, GPUs, or TPUs, but a static computational graph is hard to debug.

PyTorch has always used a dynamic computational graph, which lets data scientists run the computation line by line as the code is interpreted, making it easy to debug and locate problems. TensorFlow 2.x made eager execution, a dynamic mode similar to PyTorch's, the default, and PyTorch in turn supports static computational graphs through TorchScript. By 2020, TensorFlow worked much like PyTorch in many ways, including distributed computing on one or several GPUs or CPUs.
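
The difference between a static graph (define-and-run) and eager execution (define-by-run) can be shown with a toy expression graph in plain Python. The `Node`, `Placeholder`, and `run` names are hypothetical and only mimic the idea; they are not TensorFlow or PyTorch APIs.

```python
# Toy contrast between define-and-run (static graph) and define-by-run (eager).
# Node, Placeholder and run are hypothetical names, not framework APIs.

class Node:
    """A deferred operation: nothing is computed when the node is created."""
    def __init__(self, op, *inputs):
        self.op = op
        self.inputs = inputs

    def __add__(self, other):
        return Node("add", self, other)

    def __mul__(self, other):
        return Node("mul", self, other)


class Placeholder(Node):
    """A named input whose value is supplied only at run time."""
    def __init__(self, name):
        super().__init__("placeholder")
        self.name = name


def run(node, feed):
    """Evaluate the graph recursively once concrete inputs are fed in."""
    if isinstance(node, Placeholder):
        return feed[node.name]
    a, b = (run(n, feed) for n in node.inputs)
    return a + b if node.op == "add" else a * b


# Define-and-run: build the whole graph first, execute it later.
x, y = Placeholder("x"), Placeholder("y")
graph = x * y + x
print(run(graph, {"x": 3.0, "y": 4.0}))  # 15.0

# Define-by-run: every line computes immediately, so intermediates are inspectable.
x_val, y_val = 3.0, 4.0
z = x_val * y_val
print(z)           # 12.0
print(z + x_val)   # 15.0
```

In the static version there is no intermediate value to print until `run` is called, which is why graph-mode code is harder to step through with an ordinary debugger.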

PyTorch has seen rapid uptake in the data science and deep learning engineering community and, according to GitHub's report, is among the fastest-growing open-source projects. According to The Gradient's analysis, PyTorch grew 50% year-over-year in 2019, with papers implemented in PyTorch presented at every major AI conference. On Papers with Code, PyTorch implementations have likewise grown much faster than TensorFlow ones.

Natural Language Processing

ICLR 2020 featured ELECTRA, which pre-trains text encoders as discriminators rather than generators, making it practical to pre-train language models on commodity computing resources; the same conference also examined the cross-lingual ability of multilingual BERT. StructBERT incorporates language structures into pre-training for deep language understanding at the word and sentence levels, and achieved state-of-the-art results on the GLUE NLP benchmark.
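
ELECTRA's replaced-token-detection objective can be sketched as follows: a generator swaps some tokens for sampled ones, and the discriminator learns, for every token rather than only the masked ones, whether it is original or replaced. Here the generator is a hypothetical stand-in that samples uniformly from a toy vocabulary instead of ELECTRA's small masked language model, and the function name is our own.

```python
import random

# Sketch of ELECTRA-style replaced token detection data. The "generator" is a
# hypothetical stand-in that samples uniformly from a toy vocabulary; ELECTRA
# itself uses a small masked language model trained jointly.

def make_rtd_example(tokens, vocab, replace_prob=0.15, rng=None):
    """Corrupt some positions and return (corrupted_tokens, labels).

    labels[i] is 1 if position i now holds a different token and 0 otherwise;
    a sampled token that happens to equal the original counts as original.
    """
    rng = rng or random.Random()
    corrupted = [rng.choice(vocab) if rng.random() < replace_prob else tok
                 for tok in tokens]
    labels = [int(c != o) for c, o in zip(corrupted, tokens)]
    return corrupted, labels


sentence = "the chef cooked the meal".split()
vocab = ["the", "chef", "cooked", "ate", "meal", "a"]
corrupted, labels = make_rtd_example(sentence, vocab, replace_prob=0.3,
                                     rng=random.Random(0))
print(corrupted)
print(labels)  # the discriminator is trained to predict one label per token
```

Because every position yields a training signal, the discriminator learns from the whole sequence, which is where ELECTRA's compute efficiency comes from.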

The Transformer-XL NLP algorithm goes beyond a fixed-length context: it learns dependencies that are 80% longer than those of recurrent neural networks and 450% longer than those of vanilla Transformers, and it is up to 1,800 times faster than vanilla Transformers during evaluation. BERT, for reference, reached a GLUE score of 80.5% and a MultiNLI accuracy of 86.7%.
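
Transformer-XL's longer reach comes from segment-level recurrence: hidden states from the previous segment are cached and attended to, so information can cross one segment boundary per layer. The sketch below only counts how far back a token's top-layer state can "see", assuming causal attention within a segment plus `mem_len` cached states before the segment start; the function names are hypothetical, and relative positional encodings and the actual attention math are left out.

```python
# Sketch: how far back information can flow in a causal, segment-by-segment
# Transformer, with or without a Transformer-XL-style memory of cached states.
# Function names are hypothetical; attention weights are not modelled.

def earliest_visible(pos, num_layers, seg_len, mem_len):
    """Earliest input position that can influence the top-layer state at `pos`.

    At every layer a position attends to earlier positions in its own segment
    plus the `mem_len` cached hidden states preceding the segment start.
    mem_len=0 models a vanilla Transformer trained on isolated segments.
    """
    for _ in range(num_layers):
        seg_start = (pos // seg_len) * seg_len
        # The oldest attended state is the oldest cached one (or the segment
        # start without memory); continue one layer down from there.
        pos = max(0, seg_start - mem_len)
    return pos


def max_dependency(pos, num_layers, seg_len, mem_len):
    """Distance from `pos` back to the earliest position that can reach it."""
    return pos - earliest_visible(pos, num_layers, seg_len, mem_len)


# Last token of the fifth 4-token segment, 3 layers.
print(max_dependency(19, num_layers=3, seg_len=4, mem_len=0))  # 3
print(max_dependency(19, num_layers=3, seg_len=4, mem_len=4))  # 15
```

Without memory the reach never crosses the segment boundary; with a one-segment memory it grows by one segment per layer, roughly N × L for N layers and segment length L.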

Google and Microsoft Research advanced neural approaches to conversational AI, spanning NLP, natural language understanding (NLU), and natural language generation (NLG). ALBERT and XLNet built on an earlier generation of NLP models. Microsoft introduced Turing Natural Language Generation (T-NLG), a language model with 17 billion parameters, trained on an NVIDIA DGX-2 hardware setup with InfiniBand connections for communication between NVIDIA V100 GPUs, using the NVIDIA Megatron-LM framework. T-NLG also used DeepSpeed with ZeRO, which is compatible with PyTorch, to reduce the degree of model parallelism required. GPT-3 undoubtedly left its mark on natural language processing in 2020: it has 175 billion parameters and can generate text such as tweets and blog posts.
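
ZeRO reduces per-GPU memory in data-parallel training by partitioning optimizer states, then gradients, then parameters across GPUs, which is what lets large models need less model parallelism. The savings can be worked out with simple arithmetic. The sketch below follows the mixed-precision Adam accounting from the ZeRO paper (16 bytes per parameter before partitioning), ignores activations and temporary buffers, and uses a hypothetical function name rather than any DeepSpeed API.

```python
# Per-GPU memory for model states under mixed-precision Adam, following the
# ZeRO paper's accounting: per parameter, 2 bytes of fp16 weights, 2 bytes of
# fp16 gradients and K = 12 bytes of fp32 optimizer state (master weights,
# momentum, variance). Activations and buffers are ignored. Hypothetical name.

def zero_model_state_gb(params_billion, data_parallel, stage):
    """Return GB (1e9 bytes) per GPU for ZeRO stage 0 (plain data parallel) to 3."""
    psi = params_billion   # bytes per parameter * billions of parameters = GB
    k = 12                 # optimizer-state bytes per parameter (Adam, fp32)
    if stage == 0:         # everything replicated on every GPU
        return (2 + 2 + k) * psi
    if stage == 1:         # optimizer states partitioned
        return (2 + 2) * psi + k * psi / data_parallel
    if stage == 2:         # + gradients partitioned
        return 2 * psi + (2 + k) * psi / data_parallel
    if stage == 3:         # + parameters partitioned
        return (2 + 2 + k) * psi / data_parallel
    raise ValueError("stage must be 0, 1, 2 or 3")


for stage in range(4):
    print(stage, zero_model_state_gb(7.5, data_parallel=64, stage=stage))
# 0 120.0
# 1 31.40625
# 2 16.640625
# 3 1.875
```

For a 7.5-billion-parameter model on 64 data-parallel GPUs, the illustration used in the ZeRO paper, per-GPU model-state memory drops from 120 GB to under 2 GB once all three stages are enabled.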

However, in October 2020, researchers at LMU Munich developed PET (Pattern-Exploiting Training), a technique with which NLP models of just 223 million parameters outperformed GPT-3 on the SuperGLUE benchmark. Applied to a fine-tuned ALBERT model and making use of unlabeled examples, PET achieved 76.8% compared with GPT-3's 71.8%, a result that may force OpenAI to rethink the architecture of GPT-4.
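
At PET's core is a pattern-verbalizer pair: a pattern rewrites the input as a cloze question with a mask, and a verbalizer maps each label to a word the masked language model can score at that mask. In the sketch below the pattern, the label words, and the scoring function are hypothetical stand-ins; real PET obtains the scores from a pretrained masked language model.

```python
# Sketch of PET's cloze reformulation with a pattern-verbalizer pair.
# The pattern, label words and toy scorer are hypothetical stand-ins; real PET
# scores the verbalizer tokens with a pretrained masked language model.

MASK = "[MASK]"


def pattern(review):
    """Pattern: turn a raw input into a cloze question containing one mask."""
    return f"{review} It was {MASK}."


VERBALIZER = {"positive": "great", "negative": "terrible"}  # label -> word


def pet_predict(review, score_mask_words):
    """Return the label whose verbalized word gets the highest score at the mask.

    `score_mask_words(cloze_text)` must return a dict {word: score}; in PET this
    would be a masked LM's logits for the mask position.
    """
    scores = score_mask_words(pattern(review))
    return max(VERBALIZER,
               key=lambda label: scores.get(VERBALIZER[label], float("-inf")))


def toy_scorer(cloze_text):
    """Hypothetical stand-in for a masked LM, keyed on a single cue word."""
    if "love" in cloze_text:
        return {"great": 2.0, "terrible": -1.0}
    return {"great": -0.5, "terrible": 1.5}


print(pet_predict("I love this film.", toy_scorer))        # positive
print(pet_predict("The plot made no sense.", toy_scorer))  # negative
```

In the full method, the masked LM is fine-tuned on the few labeled examples for each pattern, the resulting models soft-label a pool of unlabeled examples, and a final classifier is trained on those labels.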

What Lies Ahead For 2021

Digital computing for machine learning will shift toward “neuromorphic”, brain-like in-memory computing as the future machine learning paradigm. Large-scale neuromorphic spiking array processors that mimic the brain will be embraced by leading hardware companies, including Hewlett Packard Enterprise, Samsung, IBM Corporation, Intel, Applied Brain Research Inc, General Vision Inc, and BrainChip Holdings Ltd. The neuromorphic computing market, covering artificial neural networks, hardware, signal recognition, and data mining across the aerospace, defense, data science, telecom, automotive, medical, and industrial sectors, is expected to grow at a CAGR of 86%, from USD 6.6 million in 2016 to USD 272.9 million in 2022, driven by supercomputing and high-performance computing applications.
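
The quoted growth rate can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1, using the 2016 and 2022 market figures.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: the constant yearly rate that turns
    start_value into end_value over `years` years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


rate = cagr(6.6, 272.9, years=2022 - 2016)  # USD millions, 2016 -> 2022
print(f"{rate:.0%}")  # 86%
```

Growing roughly 41-fold over six years works out to about 86% per year, so the figures are internally consistent.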

Across roughly 10,000 years of human civilization, it is only in the past 60 years, the blink of an eye, that computation speeds have gone from 1 floating-point operation per second to 250 trillion FLOPS. Aurora, the first exascale supercomputer, able to perform a quintillion (10^18) floating-point operations per second, is set to launch in 2021 under the US Department of Energy (DoE) with $500 million in funding, breaking the exascale barrier.

Exascale supercomputers are expected to profoundly shake up human civilization, bringing enormous calculation speeds to problems in medicine, bioinformatics, genomics, plasma turbulence, molecular interactions, and quantum computing, and eventually helping to decode the brain by simulating entire regions of it. 2020 also brought a surge of materials-science advances in memristors, shaking the foundations of in-memory computing. In 2021, we can expect to see the rise of memristors and graphene-based processors for in-memory computing with spiking neural network architectures for deep learning.
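
Spiking neural networks communicate through discrete spikes rather than continuous activations. A common textbook neuron model for them is the leaky integrate-and-fire (LIF) neuron, sketched below in discrete time; the time constant, threshold, and reset values are illustrative assumptions, not parameters of any particular neuromorphic chip, and the function name is hypothetical.

```python
def lif_spike_times(input_current, tau=10.0, threshold=1.0, v_rest=0.0, dt=1.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Each step the membrane potential leaks toward v_rest with time constant
    `tau` and integrates the input; crossing `threshold` emits a spike and
    resets the potential to v_rest. Returns the indices of spiking steps.
    """
    v = v_rest
    spikes = []
    for t, current in enumerate(input_current):
        v += dt * (-(v - v_rest) / tau + current)  # Euler step: leak + integrate
        if v >= threshold:
            spikes.append(t)
            v = v_rest  # reset after firing
    return spikes


print(lif_spike_times([0.3] * 8))  # [3, 7]
print(lif_spike_times([0.6] * 8))  # [1, 3, 5, 7]
```

A stronger input drives the potential to threshold sooner, so information is carried by spike timing and rate, which event-driven neuromorphic hardware can process sparsely.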

Memristive crossbar architectures will be the linchpin of future deep learning, serving as powerful in-memory computing engines for artificial neural networks. Traditional memristors offer only a limited number of conductance states, so trained weights are rounded to the nearest state, which costs accuracy and slows on-chip training. In 2021 and beyond, we will see graphene-based, multi-level, non-volatile memristive synapses with improved programming endurance, giving greater computing accuracy when implementing machine learning and deep learning algorithms. The world will move away from the traditional von Neumann architecture, which keeps logic and memory separate, an arrangement that struggles to scale to the millions of synaptic weights in artificial neural networks.
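
An idealised memristive crossbar computes a matrix-vector product where the weights are stored: each device's conductance encodes a weight, Ohm's law gives the current through it, and Kirchhoff's current law sums those currents along each column. The sketch below also rounds trained weights to a small set of conductance levels to show the accuracy cost described above. The 5-level device, the weights, and the function names are hypothetical, in arbitrary units, and real devices add noise and nonlinearity that are not modelled.

```python
# Idealised memristive crossbar: weights are stored as device conductances and
# a matrix-vector product happens in place. Levels and values are hypothetical,
# in arbitrary units; device noise and nonlinearity are ignored.

def quantize(weight, levels):
    """Round a trained weight to the nearest conductance state the device supports."""
    return min(levels, key=lambda g: abs(g - weight))


def crossbar_mvm(voltages, conductances):
    """Column currents of a crossbar driven by row voltages.

    Ohm's law gives a current V_i * G_ij through the device at row i, column j;
    Kirchhoff's current law sums those currents along each column:
    I_j = sum_i V_i * G_ij.
    """
    n_cols = len(conductances[0])
    return [sum(v * row[j] for v, row in zip(voltages, conductances))
            for j in range(n_cols)]


trained = [[0.12, 0.49],
           [0.31, 0.88]]
levels = [0.0, 0.25, 0.5, 0.75, 1.0]   # a hypothetical 5-level device
programmed = [[quantize(w, levels) for w in row] for row in trained]
voltages = [1.0, 0.5]

print(crossbar_mvm(voltages, trained))     # ideal weights, about [0.275, 0.93]
print(crossbar_mvm(voltages, programmed))  # [0.125, 1.0] after rounding
```

The gap between the two outputs is the error introduced by having few conductance states, which is the motivation for multi-level memristive synapses.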

To keep computers and human progress advancing in the post-Moore era, spintronic memories such as magnetic random-access memory (MRAM), including SOT-MRAM and VCMA-MRAM, will rise as alternatives to DRAM. In-memory computing and “neuromorphic” computing are the only way to break through exascale barriers for deep learning in 2021 and beyond.