Writing large codebases can be time-consuming and tedious. Developers are looking for methods and tools that aid coding and improve turnaround time, accuracy, and overall productivity. As a result, automatic code generation capabilities are emerging inside programming languages and IDEs, some of them working at compile time. Automatic code generation has promising use cases in enterprise settings. This article discusses two recently developed tools for automatic code generation: Salesforce CodeT5 and GitHub Copilot.

Salesforce CodeT5

CodeT5 by Salesforce is an open-source machine learning model that can understand and generate code in real time. It is an identifier-aware, unified, pre-trained encoder-decoder model that enables a wide range of code intelligence applications. The tool aims to reduce the time spent writing software as well as computational and operational costs. Its code pre-training methods boost a range of downstream applications across the software development lifecycle. CodeT5 builds on a unified framework for natural language processing tasks that reframes every task as text-to-text, with input and output data always being strings of text.
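To make the text-to-text idea concrete, here is a minimal sketch of how different tasks can all be expressed as a pair of strings. The class name, the helper functions, and the task prefixes (`summarize:`, `generate:`, `defect:`) are hypothetical choices for illustration and are not taken from the CodeT5 codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextToTextExample:
    """One example in text-to-text form: a plain string in, a plain string out."""
    source: str
    target: str


def summarization_example(code: str, summary: str) -> TextToTextExample:
    # Code goes in, a natural-language summary comes out.
    return TextToTextExample(source=f"summarize: {code}", target=summary)


def generation_example(description: str, code: str) -> TextToTextExample:
    # A natural-language description goes in, code comes out.
    return TextToTextExample(source=f"generate: {description}", target=code)


def classification_example(code: str, is_defective: bool) -> TextToTextExample:
    # Even a yes/no label is written out as text, not as a class index.
    label = "true" if is_defective else "false"
    return TextToTextExample(source=f"defect: {code}", target=label)


examples = [
    summarization_example("def add(a, b): return a + b", "Add two numbers."),
    generation_example("Add two numbers.", "def add(a, b): return a + b"),
    classification_example("def div(a, b): return a / 0", True),
]
for ex in examples:
    print(f"{ex.source!r} -> {ex.target!r}")
```

Because every task shares the same string-in, string-out shape, one encoder-decoder model can in principle be trained on all of them without task-specific output layers.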

Existing code pre-training methods had two major limitations that CodeT5 addresses. First, they often rely on either an encoder-only model similar to BERT or a decoder-only model like GPT, which is suboptimal for generation tasks and understanding tasks respectively. Second, they simply apply conventional NLP pre-training techniques to source code, treating it as a sequence of tokens like natural language. This largely ignores the rich structural information present in programming languages, which is vital to fully comprehend code semantics.

CodeT5’s Architecture and Working

Salesforce’s CodeT5 is built on the same architecture as Google’s T5 framework, but it incorporates code-specific knowledge, which gives the model a better understanding of code. It takes code and its accompanying comments as a single input sequence to build on and generate from.

Some of the pre-training tasks of CodeT5 include:

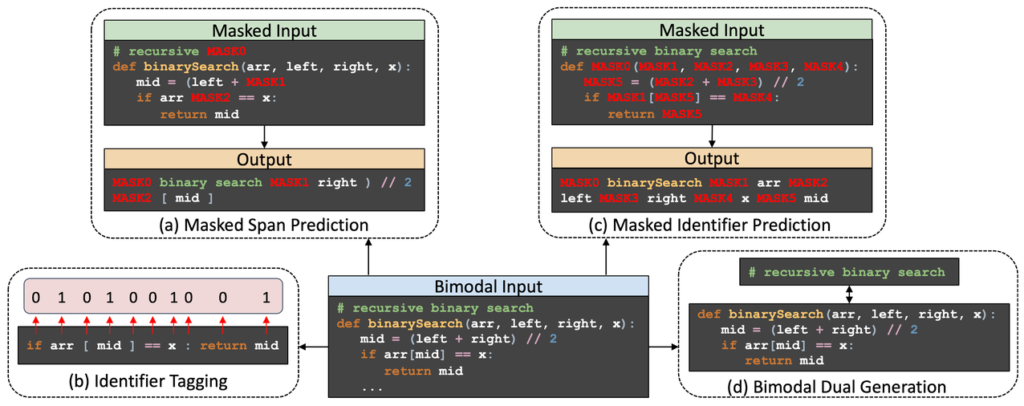

- Masked Span Prediction: Randomly masks spans of arbitrary lengths in the input, and the decoder recovers the original text. This captures syntactic information of the NL-PL (natural language and programming language) input and learns robust cross-lingual representations.

- Identifier Tagging: The encoder classifies whether each code token is an identifier or not.

- Masked Identifier Prediction: Employs the same mask placeholder for all occurrences of one unique identifier, so the model must comprehend the code semantics from the obfuscated code.

- Bimodal Dual Generation: Jointly optimises conversions from code to its comments and vice versa. This encourages a better alignment between the NL and PL counterparts.

Image Source: Salesforce CodeT5
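The pre-training tasks listed above can be sketched as simple data transformations. The following is an illustrative approximation, not CodeT5's actual preprocessing. It assumes Python's standard `tokenize` module as a stand-in tokenizer and T5-style `<extra_id_N>` sentinel strings as mask placeholders, and it takes the spans to mask as explicit arguments so the example stays deterministic (in pre-training they would be sampled randomly). All function names are hypothetical.

```python
import io
import keyword
import tokenize

# Layout-only tokens that carry no text worth tagging or masking.
LAYOUT = (tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
          tokenize.DEDENT, tokenize.ENDMARKER)


def code_tokens(source: str) -> list[tuple[str, bool]]:
    """Identifier tagging: return (token_text, is_identifier) pairs."""
    pairs = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in LAYOUT:
            continue
        # A NAME token that is not a reserved keyword counts as an identifier.
        is_identifier = tok.type == tokenize.NAME and not keyword.iskeyword(tok.string)
        pairs.append((tok.string, is_identifier))
    return pairs


def mask_spans(tokens: list[str], spans: list[tuple[int, int]]) -> tuple[str, str]:
    """Masked span prediction: each (start, end) span becomes one sentinel."""
    source, target, cursor = [], [], 0
    for i, (start, end) in enumerate(sorted(spans)):
        sentinel = f"<extra_id_{i}>"
        source += tokens[cursor:start] + [sentinel]
        target += [sentinel] + tokens[start:end]
        cursor = end
    source += tokens[cursor:]
    return " ".join(source), " ".join(target)


def mask_identifiers(tagged: list[tuple[str, bool]]) -> tuple[str, str]:
    """Masked identifier prediction: one shared sentinel per unique name."""
    sentinel_for: dict[str, str] = {}
    masked = []
    for text, is_identifier in tagged:
        if is_identifier:
            if text not in sentinel_for:
                sentinel_for[text] = f"<extra_id_{len(sentinel_for)}>"
            masked.append(sentinel_for[text])
        else:
            masked.append(text)
    target = " ".join(f"{s} {name}" for name, s in sentinel_for.items())
    return " ".join(masked), target


def dual_generation_pairs(code: str, comment: str) -> list[tuple[str, str]]:
    """Bimodal dual generation: train in both directions on the same pair."""
    return [(code, comment), (comment, code)]


snippet = "def add(a, b):\n    return a + b\n"
tagged = code_tokens(snippet)
print([text for text, is_id in tagged if is_id])
print(mask_spans([text for text, _ in tagged], [(1, 3), (8, 10)]))
print(mask_identifiers(tagged))
print(dual_generation_pairs(snippet.strip(), "Add two numbers."))
```

Note how `mask_identifiers` hides every occurrence of `a` behind the same placeholder, so recovering the name requires following how the value flows through the function rather than just filling in a local gap.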

Features of CodeT5

Some features of CodeT5 include:

- Text-to-code generation: Can generate code based on the natural language description.

- Code autocompletion: Can complete a whole function, given the target function name.

- Code summarisation: Can generate a natural language summary of a function.

Risks with CodeT5

Although CodeT5 is a promising tool for automatic code generation, it carries some ethical risks that one should consider beforehand. The CodeT5 team says it is still working on mitigating the following risks:

- Automation Bias: Sometimes, the system might produce functions that superficially appear correct but do not actually align with the developer’s intent. If developers adopt these incorrect suggestions, they may face much longer debugging times and even significant safety issues.

- Security Implications: Pre-trained models might encode sensitive information from the training data. The tool might be unable to completely remove such information and might produce code that harms the software.

GitHub Copilot

GitHub Copilot is a tool created by GitHub and OpenAI and described as an AI pair programmer. It is a plugin for Visual Studio Code that auto-generates code based on the current file’s contents and the current cursor location. Copilot can generate entire multiline functions and can even create documentation and tests based on the context of a code file.

It is powered by a deep neural network language model called Codex, trained on public code repositories on GitHub. Codex is a descendant of OpenAI’s GPT-3 language model, fine-tuned on code.

How does it work?

Visual Studio Code sends the comments and code typed by the developer to the Copilot service, which synthesises and suggests an implementation. GitHub states that Copilot acts as a pen for generating code and claims that it understands more context than most currently available code assistants. It uses the provided context to synthesise matching code. Copilot works with a wide range of frameworks and languages, such as Python, JavaScript, TypeScript, Ruby, and Go. Developers can cycle through alternative suggestions, accept or reject each one, and manually edit the suggested code.

Image Source: GitHub Copilot
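The editor-side flow described above (send the file context at the cursor, receive suggestions, cycle through them, then accept or reject) can be sketched roughly as follows. This is not Copilot's actual protocol or API. `CompletionRequest`, `build_request`, and `SuggestionCycler` are hypothetical names, and the suggestions are hard-coded stand-ins for what a completion service would return.

```python
from dataclasses import dataclass


@dataclass
class CompletionRequest:
    """Hypothetical payload an editor plugin might send to a completion service."""
    language: str
    prefix: str  # file text before the cursor
    suffix: str  # file text after the cursor


def build_request(file_text: str, cursor: int, language: str) -> CompletionRequest:
    # Split the current file at the cursor so the service sees both sides.
    return CompletionRequest(language, file_text[:cursor], file_text[cursor:])


@dataclass
class SuggestionCycler:
    """Lets the developer step through alternatives before accepting one."""
    suggestions: list[str]
    index: int = 0

    def current(self) -> str:
        return self.suggestions[self.index]

    def next(self) -> str:
        # Wrap around so cycling never runs off the end of the list.
        self.index = (self.index + 1) % len(self.suggestions)
        return self.current()

    def accept(self, request: CompletionRequest) -> str:
        # Accepting inserts the current suggestion at the cursor position.
        return request.prefix + self.current() + request.suffix


file_text = "# return the square of x\ndef square(x):\n    \n\nprint(square(3))\n"
cursor = file_text.index("    \n") + 4  # just after the indentation
request = build_request(file_text, cursor, "python")

# Stand-in for the service's reply; a real service would generate these.
cycler = SuggestionCycler(["return x * x", "return x ** 2"])
cycler.next()  # the developer moves on to the second alternative
print(cycler.accept(request))
```

Rejecting a suggestion needs no extra code in this sketch: the editor simply keeps `file_text` unchanged, and the developer remains free to edit an accepted suggestion by hand.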

Features of Copilot

Some features of GitHub Copilot include:

- Converting comments to code: Write a comment that describes the logic, and Copilot assembles the code.

- Easy Autofill: Copilot can help quickly produce repetitive code patterns. Given a few examples, Copilot picks up the pattern and generates the rest.

- Aids in testing: Copilot automatically suggests tests that match the code implementation.
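As an illustration of the comment-to-code and test-suggestion features, the snippet below shows the general shape of such an interaction. It is hand-written for this article and is not actual Copilot output. The function name and the test cases are assumptions chosen for the example.

```python
# Developer-written comment describing the logic:
# Return True if `text` reads the same forwards and backwards,
# ignoring case and any non-alphanumeric characters.
def is_palindrome(text: str) -> bool:
    # The kind of body an assistant might propose for the comment above.
    cleaned = [ch.lower() for ch in text if ch.isalnum()]
    return cleaned == cleaned[::-1]


# The kind of test an assistant might propose to match the implementation.
def test_is_palindrome() -> None:
    assert is_palindrome("A man, a plan, a canal: Panama")
    assert is_palindrome("")
    assert not is_palindrome("Copilot")


test_is_palindrome()
print("all checks passed")
```

Even in a case this small, the developer still has to decide whether the suggested behaviour (for example, treating an empty string as a palindrome) matches the intent.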

Risks with Copilot

GitHub Copilot might surface unknown issues once it is put to use, which is a potential risk factor. Some of these include:

- Bugs at implementation: A few developers who got their hands on Copilot complained that the code it generated, despite the model being trained on a large number of GitHub projects, contained bugs that surfaced at runtime.

- Unwanted outputs: GitHub Copilot may sometimes produce undesired outputs, including biased, discriminatory, abusive, or offensive ones.

Summing Up

Although automatic code generators aim to automate tedious and time-consuming coding work for developers, they come with their own limitations and risk factors. These issues are still being worked on and require close attention. In the future, this technology could help engineers be more productive by reducing manual tasks and letting them focus on more interesting aspects of their work.