Salesforce recently open-sourced CodeT5, a machine learning model that can understand and generate code in real time. The team claims that CodeT5 achieves state-of-the-art performance on a range of coding tasks. These include code defect detection, which predicts whether code is vulnerable to exploits, and clone detection, which predicts whether two code snippets have the same functionality.

Existing code pre-training methods have two major limitations. First, they typically rely on either an encoder-only model like BERT or a decoder-only model like GPT. Neither is optimal for both kinds of task: encoder-only models are poorly suited to generation, and decoder-only models are less effective at understanding tasks. Second, most of them simply apply conventional NLP pre-training techniques to source code, treating it as a sequence of tokens just like natural language (NL). This largely ignores the rich structural information in a programming language (PL), which is vital for fully understanding code semantics.

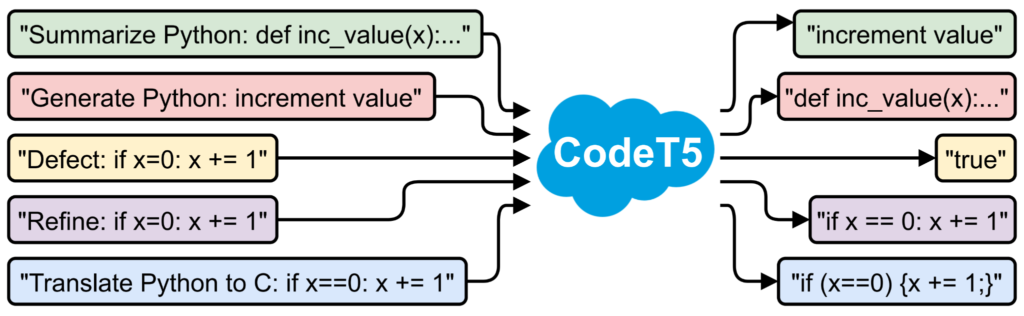

To address these limitations, CodeT5, an identifier-aware unified pre-trained encoder-decoder model, comes to the rescue.

Image Source: CodeT5’s GitHub
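To make "identifier-aware" more concrete, here is a toy sketch of two ideas in that spirit: labeling which tokens in a snippet are identifiers, and masking every occurrence of the same identifier with one shared placeholder, so a model would have to recover variable and function names from context. It uses only Python's standard `tokenize` and `keyword` modules and handles Python source only. The function names and the `<MASK0>`-style placeholders are hypothetical; this is an illustration, not CodeT5's actual preprocessing pipeline.

```python
import io
import keyword
import tokenize

# Layout-only tokens we drop so the result looks like a flat token sequence.
_SKIP = {tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
         tokenize.DEDENT, tokenize.ENDMARKER}


def tag_identifiers(source: str) -> list[tuple[str, int]]:
    """Label each token 1 if it is an identifier (a NAME that is not a keyword), else 0."""
    tagged = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in _SKIP:
            continue
        is_identifier = tok.type == tokenize.NAME and not keyword.iskeyword(tok.string)
        tagged.append((tok.string, int(is_identifier)))
    return tagged


def mask_identifiers(source: str) -> tuple[str, dict[str, str]]:
    """Replace every occurrence of an identifier with one placeholder shared by all its occurrences."""
    placeholders: dict[str, str] = {}
    out = []
    for token, is_id in tag_identifiers(source):
        if is_id:
            # The first time we see a name, assign it the next hypothetical placeholder.
            if token not in placeholders:
                placeholders[token] = f"<MASK{len(placeholders)}>"
            out.append(placeholders[token])
        else:
            out.append(token)
    return " ".join(out), placeholders


if __name__ == "__main__":
    snippet = "def add(a, b):\n    return a + b\n"
    print(tag_identifiers(snippet))
    print(mask_identifiers(snippet))
```

A plain token-level view treats `add`, `a`, and `return` alike; the tagging step separates user-chosen names from language keywords, which is the kind of structural signal the article says conventional NLP pre-training ignores.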

CodeT5 builds on the architecture of Google’s T5 (Text-to-Text Transfer Transformer) framework but is adapted for a better understanding of code. T5 provides a unified model for natural language processing tasks by reframing every task as text-to-text: the input and output are always text strings. This lets the same unified model be applied to any task.
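The text-to-text idea means even a yes/no classification task is expressed as "read a string, write a string", so a single model can be trained on all tasks at once. The sketch below casts tasks mentioned in this article into that shape. The task prefixes (`defect:`, `clone:`, `summarize:`), the `<sep>` separator, and all helper names are hypothetical choices for illustration; they are not CodeT5's actual input format.

```python
from dataclasses import dataclass


@dataclass
class TextToTextExample:
    """One training example: both sides are plain strings, whatever the task."""
    source: str
    target: str


def defect_detection(code: str, is_defective: bool) -> TextToTextExample:
    # A binary classification becomes "generate the word true or false".
    return TextToTextExample(f"defect: {code}", "true" if is_defective else "false")


def clone_detection(code_a: str, code_b: str, same_behavior: bool) -> TextToTextExample:
    # Two snippets are joined into one input string (hypothetical <sep> marker).
    return TextToTextExample(f"clone: {code_a} <sep> {code_b}",
                             "true" if same_behavior else "false")


def summarization(code: str, summary: str) -> TextToTextExample:
    # A generation task fits the same mold: code in, natural-language text out.
    return TextToTextExample(f"summarize: {code}", summary)


if __name__ == "__main__":
    examples = [
        defect_detection("char buf[8]; strcpy(buf, user_input);", True),
        clone_detection("return a + b", "return b + a", True),
        summarization("def add(a, b): return a + b", "Add two numbers."),
    ]
    for ex in examples:
        print(repr(ex.source), "->", repr(ex.target))
```

Because every example is a pair of strings, one tokenizer, one encoder-decoder, and one training loop can serve classification and generation alike; only the data changes.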

The CodeT5 research team trained the model on more than 8.35 million examples, including user-written comments from open-source GitHub repositories. Training the largest and most capable version of CodeT5, which has 220 million parameters, took 12 days on a cluster of 16 Nvidia A100 GPUs with 40 GB of memory each.
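For a rough sense of scale, the reported figures work out as follows, assuming all 16 GPUs were busy for the full 12 days (the article implies this but does not state it):

$$
16\ \text{GPUs} \times 12\ \text{days} = 192\ \text{GPU-days} = 192 \times 24\ \text{h} = 4{,}608\ \text{GPU-hours}
$$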

The coding assistant offers three code intelligence capabilities:

- Text-to-code generation: generates code from a natural language description.

- Code autocompletion: completes an entire function given the target function name.

- Code summarization: generates a natural language summary of a function.

However, CodeT5 has not yet been evaluated on APPS, a benchmark of programming problems, so it may still generate unreliable code. The researchers also acknowledged a major drawback: the model may encode stereotypes related to race or gender from the text comments in its training datasets.