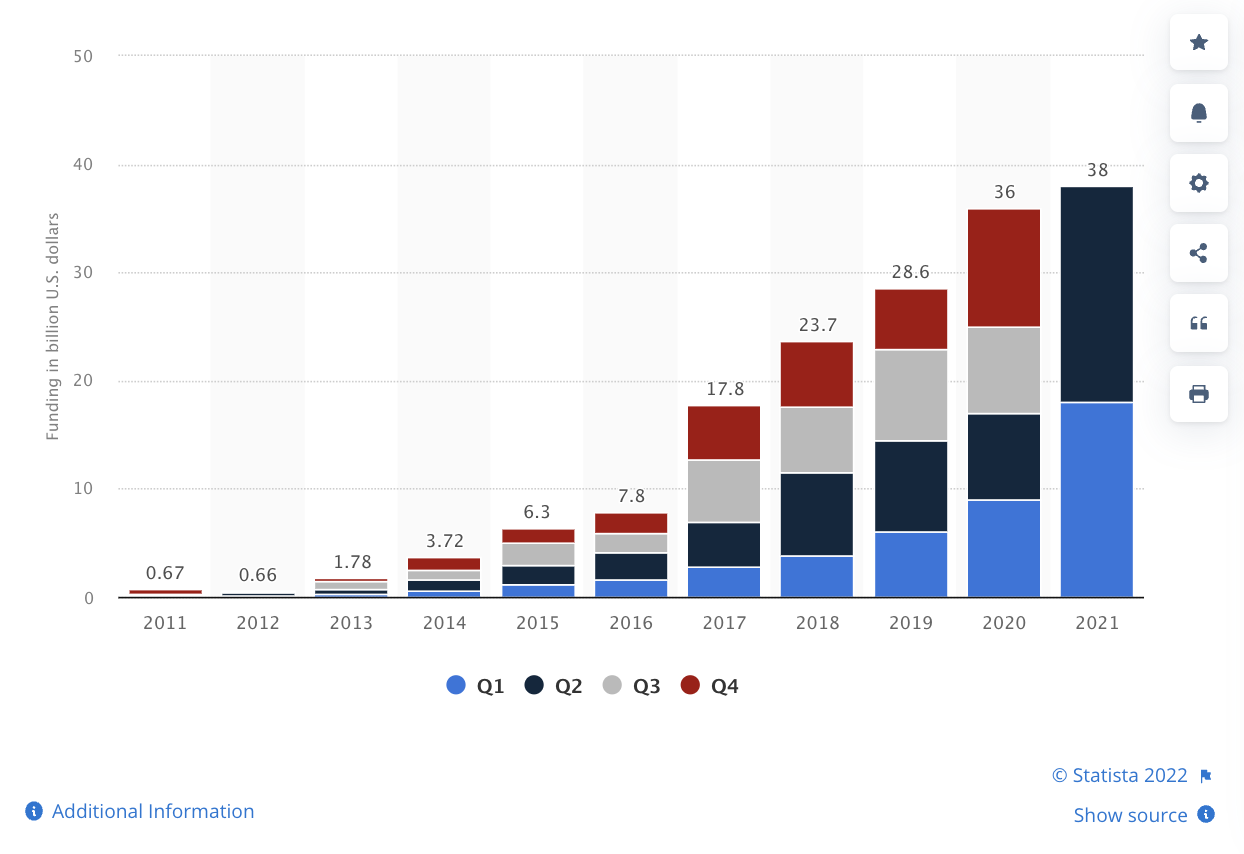

As AI research moves ahead at breakneck speed, the monumental goal of AGI may seem imminent. Investors are pouring millions of dollars into the AGI dream, with companies like OpenAI, DeepMind and Google Brain, all backed by big tech companies, leading the way. A report by Mind Commerce stated that investment in AGI will touch a massive USD 50 billion by 2023. The progress is more than just encouraging: OpenAI’s DALL·E 2 can create arresting images from text prompts, its GPT-3 can write about pretty much anything, and DeepMind’s Gato, a multi-modal model, promises to perform just about any task thrown at it. In July, a Google engineer, Blake Lemoine, who was working with a chatbot called LaMDA, became convinced that the chatbot was sentient. But for all the progress made, researchers worry that we might achieve intelligence in a way that ticks off the benchmark boxes without our understanding what this ‘intelligence’ is about.

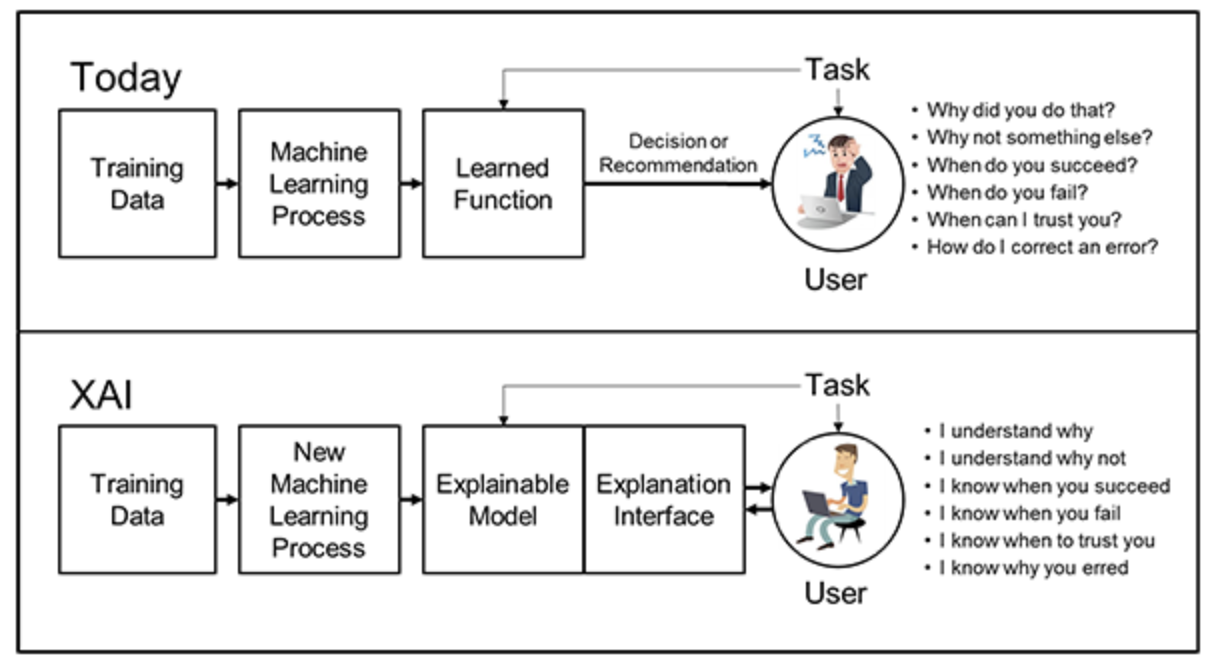

Need for AI Interpretability

Thomas Wolf, co-founder of Hugging Face, articulated these fears in a post on LinkedIn. Wolf noted that enthusiasts like him, who saw AI as a way to unlock deeper insights into human intelligence, now believe that even though we seem to be inching closer to intelligence, the concept of what it is still eludes us.

“Understanding how these new AI/ML models work at low level is key to this part of the scientific journey of AI and calls for more research on interpretability and diving in the inner-working of these new models. Pretty much only Anthropic seems to be really working on this type of research at the moment but I expect this type of research direction to be increasingly important as compute and large models become more and more widely available,” he stated.

Wolf’s observation isn’t a novel one. Researcher and author Gary Marcus has often pointed out that contemporary AI’s dependence on deep learning is flawed because of this gap. While machines can now recognise patterns in data, their understanding of that data is largely superficial rather than conceptual, which makes the results difficult to determine.

Marcus has said that this has created a vicious cycle in which companies are trapped into pursuing benchmarks instead of the foundational ideas of intelligence. This search for clarity drove a lot of interest in interpretability, and the money followed later. A couple of years ago, explainable AI had its time in the spotlight. There was a wave of core AI startups like Kyndi, Fiddler Labs and DataRobot that built explainable AI into their products. Explainable AI started gaining traction among VCs, with firms like UL Ventures, Intel Capital, Lightspeed and Greylock seen actively investing in it. A report by Gartner stated that “30% of government and large enterprise contracts will require XAI solutions by 2025”.

However, most of the growth in explainable AI was expected to arise in industries like banking, health care and manufacturing: essentially, areas that placed a high value on trust and transparency and required accountability from these AI models. The money also flowed in that direction. VCs were keener to put their money into more mundane applications focused on transforming an existing industry than into a distant moonshot.

Explainability in commercial AI and academic research

Startups like Anthropic were started with a very different intention. Founded by OpenAI’s former VP of research Dario Amodei along with his sister, Daniela, the startup was formed less than a year back with nine other OpenAI employees. The young company picked up USD 124 million in funding at the time. Not even a year later, it raised another USD 580 million. The Series B round was led by Sam Bankman-Fried, the CEO of FTX Trading, and included participation from Jaan Tallinn, co-founder of Skype, Infotech’s James McClave and former Google CEO Eric Schmidt.

What’s even more interesting is that Anthropic’s list of supporters didn’t include the usual suspects among deep tech investors. This is possibly because the startup is registered as a public benefit corporation rather than a conventional for-profit company, which may have been a deal breaker for them.

As Wolf mentioned, the work that Anthropic is doing is rare. It is not like the companies that wave the explainable AI flag to cater to the market. Instead, it is quietly working on improving the safety of compute-heavy AI models and understanding the source of behaviour in today’s LLMs.

After its big Series B funding, CEO Amodei said, “With this fundraise, we’re going to explore the predictable scaling properties of machine learning systems, while closely examining the unpredictable ways in which capabilities and safety issues can emerge at-scale. We’ve made strong initial progress on understanding and steering the behaviour of AI systems, and are gradually assembling the pieces needed to make usable, integrated AI systems that benefit society.”

A recent report titled ‘State of AI Report 2022’ by Nathan Benaich of Air Street Capital and Ian Hogarth of Plural Platform observed that funding for academic AI research was drying up (with the exception of Anthropic), with much of the money shifting to the commercial sector. “Once considered untouchable, talent from Tier 1 AI labs is breaking loose and becoming entrepreneurial,” the report stated. Moreover, some research labs backed by tech giants, such as Meta’s central AI research arm, have been shut down. “Alums are working on AGI, AI safety, biotech, fintech, energy, dev tools and robotics,” the document mentioned.