Data-driven research is a major driver of networking and systems research; however, the data it depends on is often available only to those who actually possess it. Data access is therefore a major concern in the research community.

To mitigate this issue, one alternative is to create and share ‘synthetic datasets’. These synthetic datasets are modelled on real traces: researchers identify the traces' key factors and build generative models of them using statistical techniques. The main concern is whether such synthetic datasets have high fidelity and generalise across networking applications while requiring minimal human supervision.

To produce such high-fidelity datasets, researchers from IBM and Carnegie Mellon University jointly introduced DoppelGANger. The tool uses generative adversarial networks (GANs), a machine learning technique, to synthesise datasets with the same statistics as the original training data.

What is DoppelGANger?

The study used GANs in particular because they offer three key benefits:

- GANs can generalise across datasets.

- GANs can generate both measurements and metadata, which enables them to support a wide range of use cases.

- GANs have already been used in other domains to create high-fidelity datasets, even for complex data such as images, text, and music.

The study considers time-series measurements associated with multi-dimensional metadata, for example, measurements of physical network properties and datacenter cluster usage.

Through this study, the researchers attempted to solve two problems with current GAN approaches:

- Current GANs struggle to capture complex correlations between measurements and their associated metadata.

- On datasets with a highly variable dynamic range, GANs exhibit severe mode collapse: the generated data covers only a few classes of samples and ignores the other modes of the distribution.

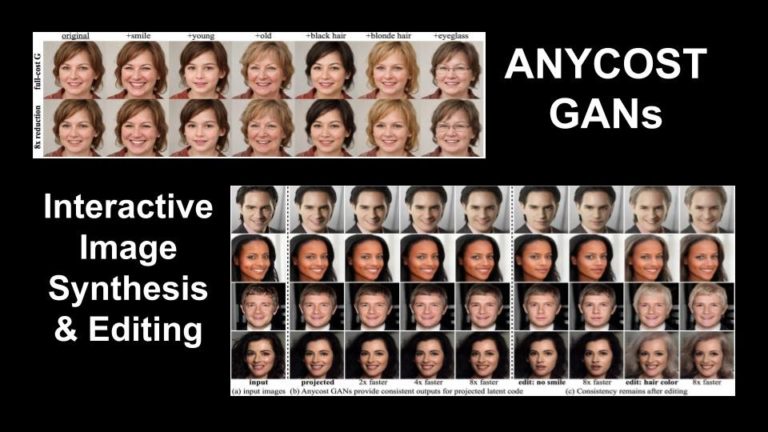

The proposed DoppelGANger tool tackles these issues in two ways. Firstly, to model correlations between measurements and their metadata, it decouples the generation of metadata from that of the time series: the metadata is generated first and then fed into the time series generator at each step. This is unlike conventional methods, where the two are generated jointly. To help learn the metadata distribution, it also introduces an auxiliary discriminator that judges the generated metadata on its own.
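The decoupled design can be illustrated with a minimal Python sketch. It is not DoppelGANger's implementation: `generate_metadata` and `generate_step` are hypothetical stand-ins with fixed toy formulas instead of trained neural networks, and `discriminator_inputs` only shows which data each discriminator would receive. What the sketch does capture is the order of operations: metadata is produced once per sample, then fed into every step of the time series generation.

```python
import random

# Hypothetical metadata values, loosely modelled on the WWT "domain" attribute.
DOMAINS = ["en.wikipedia.org", "de.wikipedia.org", "fr.wikipedia.org"]


def generate_metadata(rng):
    """Stage 1: hypothetical stand-in for the metadata generator.
    Samples one metadata record from noise, before any time series exists."""
    return {"domain": rng.choice(DOMAINS), "level": rng.uniform(0.5, 2.0)}


def generate_step(metadata, prev_value, rng):
    """Stage 2: hypothetical stand-in for one step of the time-series generator.
    The metadata is an explicit input at every step."""
    noise = rng.gauss(0.0, 0.1)
    return 0.8 * prev_value + 0.2 * metadata["level"] + noise


def generate_sample(length, rng):
    metadata = generate_metadata(rng)  # generated first, once per sample
    series, value = [], 0.0
    for _ in range(length):
        value = generate_step(metadata, value, rng)  # metadata fed into each step
        series.append(value)
    return metadata, series


def discriminator_inputs(metadata, series):
    """The main discriminator judges the whole sample; the auxiliary
    discriminator judges the metadata alone."""
    return (metadata, series), metadata


meta, ts = generate_sample(length=5, rng=random.Random(42))
main_input, auxiliary_input = discriminator_inputs(meta, ts)
print(meta["domain"], [round(v, 2) for v in ts])
```

In a real GAN both stages would be trained networks; the toy formulas only make the data flow runnable.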

Secondly, the architecture tackles mode collapse by generating each sample's randomised maximum and minimum limits separately from a normalised time series; the normalised series is then scaled back to a realistic range using those limits.
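The limit-and-normalise idea can be sketched in a few lines of Python. This is an illustration under assumptions, not the paper's code: it normalises each series to [0, 1] (the exact range is an assumption) and reuses real limits where DoppelGANger would generate them; `normalise` and `denormalise` are hypothetical names.

```python
def normalise(series):
    """Split one real time series into its (min, max) limits and a shape
    rescaled to [0, 1]. DoppelGANger generates such limits separately."""
    lo, hi = min(series), max(series)
    span = hi - lo
    if span == 0:  # constant series: every point maps to 0.0
        return (lo, hi), [0.0] * len(series)
    return (lo, hi), [(x - lo) / span for x in series]


def denormalise(limits, shape):
    """Map a [0, 1] shape back to a realistic range using (min, max) limits.
    In DoppelGANger the limits would come from a generator; here they are given."""
    lo, hi = limits
    return [lo + x * (hi - lo) for x in shape]


# Two hypothetical daily-view series with very different dynamic ranges.
popular = [12000, 15000, 9000, 21000]
obscure = [3, 5, 2, 4]

for series in (popular, obscure):
    limits, shape = normalise(series)
    print(limits, [round(x, 2) for x in shape])
```

Both series become shapes in [0, 1] despite a gap of more than three orders of magnitude in their values, so a generator would learn shapes on a common scale and leave the scale itself to the separately generated limits.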

In addition, DoppelGANger's time series generator outputs a batch of consecutive records at each step instead of a single record.

The architecture of DoppelGANger (DG), highlighting key ideas and extensions to canonical GAN approaches.
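A toy Python sketch of the batched output follows, assuming a hypothetical `generate_batched` function whose simple update rule stands in for a recurrent network, and an arbitrary batch size of 10 records per step. It shows the main arithmetic consequence: a series of T records needs only ceil(T / S) generator steps when S records are emitted per step.

```python
import math
import random


def generate_batched(length, records_per_step, rng):
    """Toy recurrent generator (hypothetical stand-in for an RNN) that emits
    `records_per_step` consecutive records per step instead of one."""
    steps = math.ceil(length / records_per_step)
    state, series = 0.0, []
    for _ in range(steps):
        state = 0.9 * state + rng.gauss(0.0, 1.0)  # one state update per step
        # Emit a whole batch of records from the same state.
        series.extend(state + 0.1 * i for i in range(records_per_step))
    return series[:length], steps  # drop any overflow from the final batch


# 550 daily records, as in the WWT range (July 1, 2015 to December 31, 2016).
series, steps = generate_batched(length=550, records_per_step=10, rng=random.Random(0))
print(len(series), steps)  # prints: 550 55
```

For the 550 daily records in the WWT date range, this means 55 recurrent steps instead of 550. Fewer steps make long series easier for a recurrent generator to handle, which is one plausible reading of why batching helps here.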

The fidelity of DoppelGANger was tested on three datasets:

- Wikipedia Web Traffic (WWT): WWT tracks the number of daily views of Wikipedia articles from July 1, 2015, to December 31, 2016. Each sample has three metadata attributes: Wikipedia domain, type of access, and type of agent.

- Measuring Broadband America (MBA): This dataset comes from the United States Federal Communications Commission and consists of measurements such as round-trip times. Each sample has three metadata attributes: Internet connection technology, ISP, and US state.

- Google Cluster Usage Traces: This dataset consists of usage traces from a Google cluster of 12.5k machines, recorded over a period of more than 29 days in May 2011.

Observations Made

The study found that:

- DoppelGANger can learn the structural micro-benchmarks of each dataset.

- It outperformed baseline algorithms on downstream tasks such as training prediction algorithms. In fact, predictors trained on DoppelGANger-generated data achieved test accuracy up to 43% higher than those trained on data from baseline models.

Beyond these findings, the study found that although the tool does not fully resolve the privacy tradeoffs of GANs, training DoppelGANger on larger datasets can mitigate membership inference attacks on privacy. Further, because DoppelGANger generates metadata separately from the time series, data holders can hide certain attributes of their datasets.
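One way the attribute-hiding idea could look in practice is sketched in Python, assuming a data holder wants to conceal the real mix of ISPs (one of the MBA metadata attributes). The samplers, weights, and ISP names are hypothetical; the sketch only illustrates that, because metadata is generated in its own stage, that stage's distribution can be swapped out while the rest of the pipeline stays the same.

```python
import random
from collections import Counter

ISPS = ["ISP-A", "ISP-B", "ISP-C"]  # hypothetical attribute values


def learned_isp_sampler(rng):
    """Hypothetical stand-in for a trained metadata generator that
    reproduces the real, possibly business-sensitive, ISP mix."""
    return rng.choices(ISPS, weights=[0.7, 0.2, 0.1])[0]


def uniform_isp_sampler(rng):
    """Replacement chosen by the data holder: every ISP is equally likely,
    so the released data no longer reveals the real mix."""
    return rng.choice(ISPS)


def release_metadata(sampler, n, rng):
    # Only the metadata stage changes in this sketch; a time-series stage
    # conditioned on this metadata (omitted here) would be reused as is.
    return [sampler(rng) for _ in range(n)]


original = Counter(release_metadata(learned_isp_sampler, 10_000, random.Random(0)))
hidden = Counter(release_metadata(uniform_isp_sampler, 10_000, random.Random(0)))
print(original.most_common(1), hidden.most_common(1))
```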

Wrapping Up

This research was chosen as one of the best papers at the ACM Internet Measurement Conference. The appeal of DoppelGANger lies in the fact that, while most of its counterparts require complex mathematical modelling, it needs very little prior knowledge of the datasets and their configurations, because GANs can generalise across different datasets and use cases. This makes the tool highly flexible, which is key to data sharing in settings such as cybersecurity.