The golden age of artificial intelligence may have just dawned, but its course is not without challenges. A long list of glitches suggests that the technology is not quite there yet. Perhaps machines cannot be perfect either.

Although AI is meant to solve problems, it turns out that it can create new ones as well. These incidents may alarm or amuse consumers, but they are deeply embarrassing for the companies involved. Either way, they serve as a reminder of AI’s vulnerabilities and of how far the technology has to go before it can safely be judged superior to humans.

From issues with Amazon’s facial recognition software to Google’s AI Panorama, here are eight high-profile AI technology failures:

Microsoft’s AI Chatbot Tay

With chatbots becoming popular across social networks, Microsoft launched its own version for Twitter users in March 2016. Named ‘Tay’, it was programmed to hold casual conversations in the language of a typical millennial.

According to the company, Tay used AI to learn from these interactions so that it could hold better conversations in the future. However, the Twitter chatbot had to be taken down less than 24 hours after its launch.

Targeting its vulnerabilities, trolls on the microblogging website manipulated Tay into making deeply sexist and racist statements.

Following this debacle, Peter Lee, Microsoft’s corporate VP for AI and research, issued a public apology, which stated that the company took “full responsibility for not seeing this possibility ahead of time.”

Amazon’s AI-Powered Recruiting Tool

According to a Reuters report, Amazon had been building machine learning (ML) programs since 2014 to review job applicants’ resumes. It is well known that AI has a big bias problem, and the company found a clear example of it in 2015 when it realised that its new system was not rating candidates in a gender-neutral way. In effect, the system had learned to prefer male candidates over female ones.

This happened because the models were trained to vet applicants by tracking patterns in resumes submitted to the company over a 10-year period. According to the report, most of those resumes came from men, so the models learned to treat that pattern as a sign of a good candidate.

The Seattle company reportedly disbanded the team a few years later after failing to resolve the problem.
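How a skewed history turns into a skewed model can be shown with a toy example. The sketch below is not Amazon’s system: the resumes, the tokens and the scoring rule (smoothed log-odds per token) are all invented for illustration. It only shows that a scorer trained on past hiring outcomes will penalise any word that rarely appeared on the resumes of people who were hired.

```python
import math
from collections import Counter

# Toy, made-up "historical" data: (resume tokens, was_hired).
# Every value here is hypothetical and chosen only to show the effect.
HISTORY = [
    ({"python", "chess", "club"}, True),
    ({"java", "football", "captain"}, True),
    ({"python", "football"}, True),
    ({"java", "chess"}, True),
    ({"python", "women's", "chess", "club"}, False),
    ({"java", "women's", "football", "captain"}, False),
]


def learn_token_weights(history):
    """Return a smoothed log-odds weight (hired vs. rejected) per token."""
    hired, rejected = Counter(), Counter()
    n_hired = n_rejected = 0
    for tokens, was_hired in history:
        if was_hired:
            hired.update(tokens)
            n_hired += 1
        else:
            rejected.update(tokens)
            n_rejected += 1
    vocab = set(hired) | set(rejected)
    # Add-one smoothing keeps the logarithm defined for unseen tokens.
    return {
        t: math.log((hired[t] + 1) / (n_hired + 2))
        - math.log((rejected[t] + 1) / (n_rejected + 2))
        for t in vocab
    }


def score(tokens, weights):
    """Sum the learned weights of the tokens on a resume."""
    return sum(weights.get(t, 0.0) for t in tokens)


if __name__ == "__main__":
    weights = learn_token_weights(HISTORY)
    print(score({"python", "chess"}, weights))
    print(score({"python", "chess", "women's"}, weights))
```

The two resumes scored at the end differ by a single token, yet the second receives the lower score, because that token never appeared among past hires in the toy data.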

Google Photos’ AI Panorama

It is common knowledge by now that Google Photos uses AI to produce enhanced versions of photos taken by users on their smartphones. A relatively obscure feature can also automatically detect images with the same background and merge them into a single picture.

In January 2018, Reddit user Alex Harker posted three photos taken at a ski resort, which Google had welded into one panoramic image. The resulting image, however, had one giant error. Ignoring the basic rules of composition, Google’s AI Panorama magnified the torso of Harker’s friend to giant proportions.

Facial Recognition Failure In China

Back in November 2018, Chinese police admitted to wrongly shaming a billionaire businesswoman after a facial recognition system designed to catch jaywalkers ‘caught’ her on an advert on a passing bus.

Traffic police in major Chinese cities deploy smart cameras that use facial recognition to detect jaywalkers, whose names and faces then show up on a public display screen. After the incident went viral on Chinese social media, a CloudWalk researcher stated that the algorithm’s lack of liveness detection could have been the problem.

Boston Dynamics’s Robot Blooper

SoftBank-owned Boston Dynamics presented its humanoid robot Atlas at the Congress of Future Science and Technology Leaders in 2017. Although it displayed impressive dexterity on stage, it tripped over the curtain and tumbled off just as it was wrapping up.

As funny as it may seem now, the company was somehow spared immediate online ridicule; the clip went viral only after Reddit users caught on to it.

https://www.youtube.com/watch?v=TxobtWAFh8o

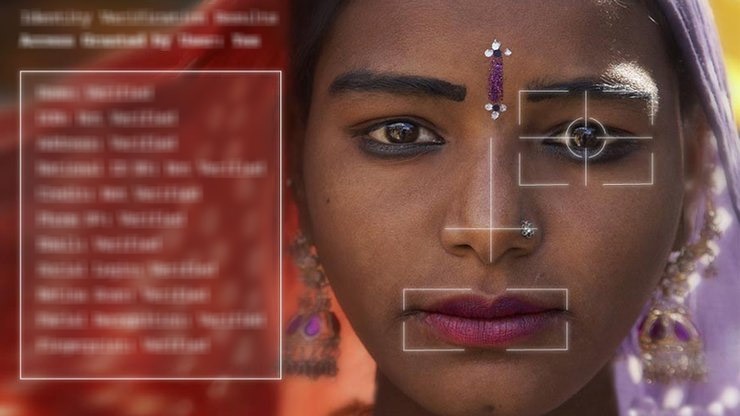

Amazon’s Rekognition

In 2018, members of the US Congress came down hard on Amazon after its facial recognition software falsely matched 28 of them with mugshots of criminals. According to the American Civil Liberties Union (ACLU), nearly 40% of the false matches were of people of colour, even though they make up only about 20% of Congress, suggesting that the technology is racially biased.

Unfortunately, despite these demonstrated failures, law enforcement agencies are already trying to use such tools to identify subjects.

LG’s IoT AI Assistant Cloi

At CES 2018, an LG robot created to help users control home appliances repeatedly failed to respond to commands from LG’s US marketing chief David VanderWaal. The IoT AI assistant Cloi simply blinked.

Built around ThinQ, LG’s in-house AI software, Cloi made a “disastrous” debut that was mercilessly mocked on social media.

AI World Cup 2018 Predictions

The 2018 FIFA World Cup that took place in Russia kept the whole world engrossed – especially AI enthusiasts. This is because, before the start of the World Cup, several researchers had tried to predict its outcome using technology.

To do this, the researchers simulated the tournament 100,000 times using three different data modelling techniques. The team drew on data from previous World Cups and analysed it across various parameters.
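Simulating a tournament many times over is a Monte Carlo approach: play out the bracket repeatedly with randomised match results and count how often each team wins. The sketch below is a minimal illustration of that idea, not the researchers’ actual models. The team ratings, the eight-team bracket and the rule that a team wins in proportion to its rating are all assumptions made up for this example.

```python
import random
from collections import Counter

# Hypothetical strength ratings, invented for illustration only.
RATINGS = {
    "Brazil": 2.0, "Germany": 1.9, "Spain": 1.8, "France": 1.7,
    "Belgium": 1.5, "Croatia": 1.3, "England": 1.3, "Russia": 0.9,
}


def play_match(team_a, team_b, rng):
    """Pick a winner; each side's chance is proportional to its rating."""
    p_a = RATINGS[team_a] / (RATINGS[team_a] + RATINGS[team_b])
    return team_a if rng.random() < p_a else team_b


def simulate_knockout(teams, rng):
    """Play one single-elimination bracket with a random draw."""
    remaining = list(teams)
    rng.shuffle(remaining)
    while len(remaining) > 1:
        # Pair up neighbours; the winners advance to the next round.
        remaining = [
            play_match(remaining[i], remaining[i + 1], rng)
            for i in range(0, len(remaining), 2)
        ]
    return remaining[0]


def estimate_win_probabilities(n_runs=100_000, seed=0):
    """Estimate each team's title chance as its share of simulated wins."""
    rng = random.Random(seed)
    wins = Counter(simulate_knockout(RATINGS, rng) for _ in range(n_runs))
    return {team: wins[team] / n_runs for team in RATINGS}


if __name__ == "__main__":
    probs = estimate_win_probabilities()
    for team, p in sorted(probs.items(), key=lambda kv: -kv[1]):
        print(f"{team:8s} {p:.3f}")
```

Even in this toy setup, the top-rated team wins well under half of the simulated tournaments, which hints at why naming a single winner in advance is so hard.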

Unfortunately, they failed to predict the winner. Although one of the favoured teams, Brazil, reached the quarter-finals, the two other top picks, Spain and Germany, did not even get that far.