While financial and e-commerce companies have already gone fully digital and adopted advanced technologies, other industries such as retail and CPG are catching up. Traditional organizations that were hesitant to monitor anything apart from transactional data are now investing in data to optimize customer interactions and deliver personalized experiences by understanding customer preferences and responding quickly. They are also driving operational efficiency and innovating with business models and products to stay ahead of their competitors. Much of this can be attributed to lower data processing costs and the emergence of newer platforms that have made tracking customer behaviour commonplace for these companies.

However, the availability of such a massive amount of raw data has left companies struggling to use it effectively. This data is of no use until insights are derived from it to inform business decisions. As the world consumes more and more data, businesses increasingly require experts to handle large volumes of customer information, conduct competitor research, and analyse product performance results.

Sigmoid is a leading data solutions company enabling Fortune 1000 companies to become data-driven using data engineering, data science, and AI/ML. The company was recently recognised in Deloitte’s Technology Fast 500™ winners list for the second year in a row.

Speaking about Sigmoid’s data engineering capabilities, Mayur Rustagi, Co-founder and CTO, said: “Our goal at Sigmoid has always been customer success. However, when we started working in this space, we realized that most customer projects failed not because their ML models were unsuccessful or the dashboards were not useful, but because they utilized outdated data engineering practices or traditional software engineering practices. Sigmoid has developed data agile processes that enable customers to reduce the risks while building data pipelines and make the project successful.”

In our previous article, Analytics India Magazine delved into how Sigmoid stands firm on the pillars of business consultancy, data science, and analytics. In this article, we will turn the spotlight on its data engineering capabilities and offerings.

Data Engineering: Moving Beyond Just Software Engineering

Software engineering is best known for programming languages, object-oriented programming, and the development of operating systems. However, as companies witness a data boom, conventional software engineering practices fall short when it comes to processing big data. With a new set of tools and technologies, data engineering allows companies to collect, generate, store, process, and manage data in real time or in batches while building data infrastructure.

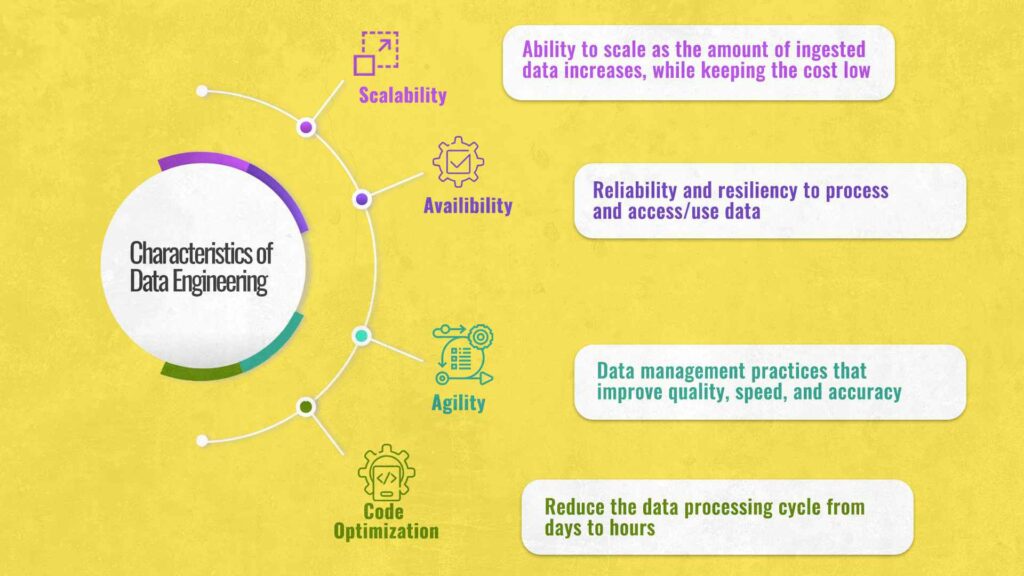

Traditional software engineering practices involve designing, programming, and developing software that is largely stateless. Data engineering practices, on the other hand, focus on scaling stateful data systems and dealing with different levels of complexity. The two fields also differ in scalability, optimization, availability, and agility:

- Data engineering addresses scalability in the form of three V’s – velocity, variety, and volume of data, which software engineering doesn’t focus on.

- The next difference is the optimization of code. The exponential increase in the performance of modern computing lets software engineers focus on writing cleaner, more understandable code rather than hyper-optimized code. For data engineers, however, optimizing code can reduce the data processing cycle from days to hours.

- The third difference between data engineering and software engineering is availability. If a data pipeline loses even an hour of processing, it then has to work at twice the speed and handle a much higher volume, because it must clear the backlog while keeping up with newly arriving data.

- Lastly, while the traditional software engineering process works in an agile fashion, data engineering approaches problems at a micro-level, using practices such as DataOps that focus on data management to improve quality, speed, and accuracy manifold.
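
The availability point above can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes data arrives at a constant rate, and the function name `required_speedup` and its parameters are hypothetical, not something described by Sigmoid. It estimates how many times faster than normal a pipeline must run to clear a backlog within a chosen catch-up window while still processing live data.

```python
def required_speedup(downtime_hours: float, catchup_hours: float) -> float:
    """Throughput multiple needed to clear a backlog while keeping up with live data.

    Assumes data arrives at a constant rate (an assumption made for illustration).
    """
    if downtime_hours < 0:
        raise ValueError("downtime_hours must be non-negative")
    if catchup_hours <= 0:
        raise ValueError("catchup_hours must be positive")
    # During the catch-up window the pipeline must process the backlog
    # (downtime_hours worth of data) plus the data that keeps arriving
    # (catchup_hours worth of data), all within catchup_hours.
    return (downtime_hours + catchup_hours) / catchup_hours


if __name__ == "__main__":
    # One hour lost, one hour allowed to catch up.
    print(required_speedup(1.0, 1.0))
    # One hour lost, four hours allowed to catch up.
    print(required_speedup(1.0, 4.0))
```

With one hour lost and one hour allowed for recovery, the multiple is 2, which matches the "twice the speed" figure in the availability point. A longer recovery window lowers the required speed, at the cost of data staying stale for longer.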

Setting up a data engineering culture is therefore crucial for companies aiming for long-term success. “At Sigmoid, these are the problems that we’re trying to tackle with our expertise in data engineering and help companies build a strong data culture,” said Mayur. With expertise in tools such as Spark, Kafka, Hive, Presto, MLflow, visualization tools, SQL, and open source technologies, the data engineering team at Sigmoid helps companies build scalable data pipelines and data platforms. It helps customers build data lakes and cloud data warehouses, and set up DataOps and MLOps practices to operationalize data pipelines and analytical model management.

Building a Data Culture

Transitioning from a software engineering environment to data engineering is a significant ‘cultural change’ for most companies. Being data-driven requires enterprises to focus on data quality, data democratization, and data monetization while taking care of legal implications and privacy. Data engineers enable information collection from different sources and make accurate data available to end-users such as executives, business analysts, data analysts, and data scientists, allowing them to arrive at insights and make critical business decisions.

The data engineering team at Sigmoid designs robust data platforms by breaking down data silos and making data available in an accessible format. The team is also responsible for creating automated data pipelines, helping customers transition from on-premise infrastructure to the cloud in a cost-effective way, and more. The company also helps customers optimize their older data pipelines.

Sigmoid invests heavily in building strong data engineering teams by offering training courses and certifications across fresher, lateral, and manager-level hires. It has an in-house learning and development academy, Takshashila, that nurtures data experts and creates specialists in the field. The academy focuses on specialized skill sets such as programming languages (SQL, Python, Java/Scala), data modeling, distributed computing, cloud platforms like AWS, Azure, and GCP, and cloud data warehousing, to name a few.

Dynamic Environment For Data Engineers at Sigmoid

At Sigmoid, data engineers get the opportunity to work on various AI/ML projects for Fortune 500 and Fortune 1000 companies across industries, from building scalable data pipelines to deploying ML models into production. They get to work in different cloud environments, technology stacks, and hybrid teams, enabling them to grow holistically.

The data engineering team at Sigmoid has operationalized over 200 analytics models and managed numerous nodes while processing over 50 petabytes of data on a daily basis. The focus on open source technologies, especially cloud, allows the team to explore more avenues while contributing to the community. Sigmoid is careful in selecting employees and trains them in machine algorithms so that they write optimal code for building data pipelines.

Sigmoid has achieved several milestones along the journey. Talking about the impact the company has created for its clients, Mayur shared a few instances:

- Sigmoid’s data engineering team developed the first Google Cloud Platform (GCP) deployment scripts for Spark while working with a customer looking to deploy Spark in a GCP environment, which GCP did not offer at the time. The Sigmoid team modified the entire software to work on Google and maintained it for a while.

- Sigmoid helped a client improve profitability by cutting operational costs through a move from on-premise infrastructure to the cloud in just six months. It created a new ETL framework and streamlined existing databases, which improved data accuracy by nearly 2.4 times, ingested over 150 billion rows of data daily from 120+ feeds, and saved $2.5 million annually.

- Sigmoid resolved the data quality issues a client was facing due to a CRM migration by building a scalable architecture to productionize the Multi-Armed Bandit model and automating pipelines in AWS. This enabled the CRM platform to trigger personalized emails to end customers, improving customer satisfaction and achieving a sales uplift of 8%.

Exploring a Career in Data Engineering

There is no doubt that data engineering is a high-growth profession. However, it is a new space. To set realistic expectations, Sigmoid looks for candidates who have good coding skills and an understanding of software design and architecture, and who are thorough with the technologies they pick. Candidates must also be observant and have good communication skills to ask customers the right questions.

“There is a huge gap between the expectation and the kind of skills that exist today, and we don’t expect people to know a lot of it or have specialized skills,” said Mayur.

Sigmoid instead focuses on upskilling the right talent with custom training programs and certifications to acquire the technical skills required for data engineering.

Data engineering is challenging the entire landscape of business processes, and there could be no better time for aspiring candidates to take the plunge into this ever-evolving field. Sigmoid presents exciting opportunities for its data engineers to collaborate on various projects throughout the year and learn from a highly diverse group of experts. With its rich clientele, deep thought leadership, and exposure to exciting opportunities, Sigmoid is the perfect place for any data engineer who wishes to leave a mark in this field.