Training a machine learning model takes a lot of data and a lot of work, and the cost rises sharply as the model becomes more complex. Building complex models well usually takes years of experience, so machine learning and AI engineers without that experience often struggle to build them quickly and efficiently. This is where transfer learning comes in.

Introducing Transfer Learning

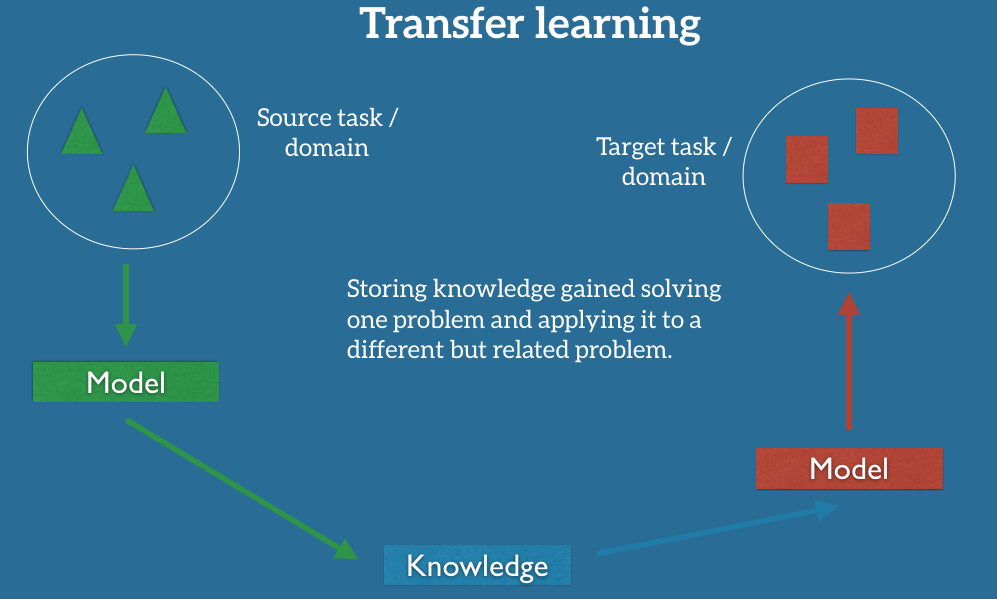

Transfer learning, as the name suggests, is when a machine learning model trained to solve one problem is reused as the starting point for solving a different problem. Because training a model from scratch takes a lot of time and effort, transfer learning is primarily used to speed up training and improve performance on the new task. It can be regarded as a shortcut for solving machine learning problems.

Two common approaches to transfer learning are:

- Develop model: A related task with a large dataset is selected, feature extraction is done, and a model is built for it. This model, built for task 1, can then be used directly as the model for task 2, though it may need some modifications.

- Pre-trained model: In this approach, a pre-trained model is chosen from a list of available models. The selected model is used for task 2 and, as in the first approach, may need slight modifications.
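
Both approaches share one core step: keep what the first model has learned and train only what the new task needs. Below is a minimal pure-Python sketch of that step. The weights in `PRETRAINED_W`, the toy data, and all function names are made up for illustration; in practice the frozen layer would come from a model trained on a large source dataset.

```python
import math

# Hypothetical "pre-trained" layer. In practice these weights would come from
# a model trained on a large source dataset (task 1); here they are made up.
PRETRAINED_W = [[1.0, -1.0], [0.5, 0.5]]


def extract_features(x):
    """Frozen feature extractor: a linear layer followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in PRETRAINED_W]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, labels, lr=0.5, epochs=200):
    """Train only the new task-2 head (logistic regression) on frozen features."""
    feats = [extract_features(x) for x in samples]
    w = [0.0] * len(feats[0])
    b = 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            p = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b)
            err = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * err * fi for wi, fi in zip(w, f)]
            b -= lr * err
    return w, b


def predict(x, w, b):
    """Run the frozen extractor, then the newly trained head."""
    f = extract_features(x)
    return 1 if sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) >= 0.5 else 0


# Tiny made-up dataset for task 2: label is 1 when the first value is larger.
samples = [[2, 0], [0, 2], [3, 1], [1, 3], [1, 0], [0, 1]]
labels = [1, 0, 1, 0, 1, 0]

w, b = train_head(samples, labels)
print([predict(x, w, b) for x in samples])
```

Only `w` and `b` are updated during training; `PRETRAINED_W` is never touched. The "slight modifications" mentioned above would correspond to also updating some of the pre-trained weights, usually with a small learning rate.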

Uses Of Transfer Learning

Transfer learning has so far been used mainly in two spheres: applications where the training data consists of images or videos, and applications where it consists of text.

Image/Video: Transfer learning can be applied when the algorithm has to learn from images or videos. Examples include the Google Inception model and the Microsoft ResNet model, which were trained on image datasets and are commonly reused for transfer learning. It is common to take a deep learning model trained on a large image dataset, like ImageNet, and use it as the starting point for a new model.

NLP: Here, a large text dataset is used to train the main model, which is then reused for transfer learning. Examples include Google’s word2vec model and Stanford’s GloVe model.

DeepMind’s AlphaGo is an expert at the game of Go, but it cannot play any other game, because it was trained to play just one. Unlike the human brain, a neural network does not adapt easily to a different game; it has to forget Go completely and start afresh to learn a new one. With transfer learning, strategies learned while training on one game can help in playing other games as well.

Another example of transfer learning is sentiment analysis. A Twitter feed can be used to train a sentiment analysis model, and the same model can then be applied to other domains, like movie reviews. In this case, RNN models are trained using logistic regression techniques. The word embeddings and the recurrent weights learned from the source domain are reused in the target domain to classify sentiment.
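
The reuse of word embeddings can be sketched in a few lines. This is a simplified illustration, not the RNN setup described above: it swaps the recurrent model for a nearest-centroid classifier and covers only the embedding part. The vectors in `SOURCE_EMBEDDINGS`, the example reviews, and the function names are all hypothetical.

```python
# Hypothetical 2-D word embeddings standing in for vectors learned on the
# source domain (e.g. tweets). Real embeddings have far more dimensions.
SOURCE_EMBEDDINGS = {
    "great": (0.9, 0.1),
    "love": (0.8, 0.2),
    "fun": (0.7, 0.3),
    "boring": (0.1, 0.9),
    "awful": (0.2, 0.8),
    "hate": (0.1, 0.8),
}


def embed(text):
    """Average the frozen source-domain vectors of the known words in text."""
    vectors = [SOURCE_EMBEDDINGS[w] for w in text.lower().split() if w in SOURCE_EMBEDDINGS]
    if not vectors:
        return (0.0, 0.0)
    n = len(vectors)
    return (sum(v[0] for v in vectors) / n, sum(v[1] for v in vectors) / n)


def fit_centroids(reviews, labels):
    """Fit the only target-domain parameters: one centroid per sentiment class."""
    centroids = {}
    for label in set(labels):
        points = [embed(r) for r, y in zip(reviews, labels) if y == label]
        centroids[label] = (
            sum(p[0] for p in points) / len(points),
            sum(p[1] for p in points) / len(points),
        )
    return centroids


def classify(text, centroids):
    """Assign the label of the nearest centroid (squared Euclidean distance)."""
    x = embed(text)
    return min(
        centroids,
        key=lambda label: (x[0] - centroids[label][0]) ** 2
        + (x[1] - centroids[label][1]) ** 2,
    )


# Tiny made-up target-domain training set (movie reviews).
reviews = ["great film love it", "boring plot awful acting"]
labels = ["pos", "neg"]
centroids = fit_centroids(reviews, labels)

print(classify("fun movie", centroids))
print(classify("i hate it", centroids))
```

The words "fun" and "hate" never appear in the movie-review training set. The classifier can still place them because their vectors were carried over from the source domain, which is the knowledge being transferred.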

When To Use Transfer Learning

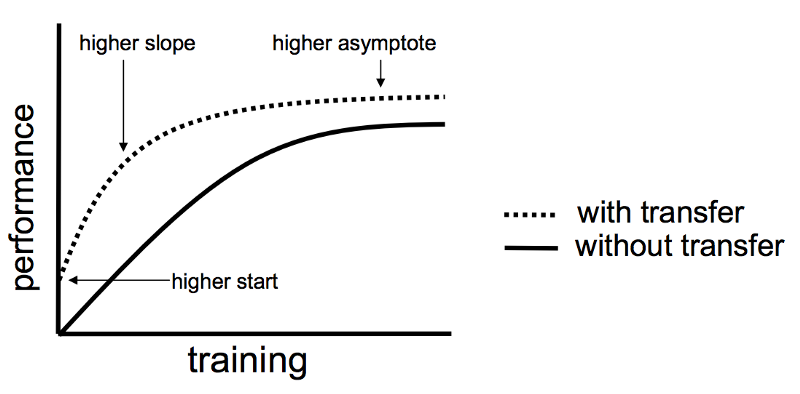

Most of the time, transfer learning is used when there is not enough data for the task being solved. In such cases, machine learning engineers and data scientists can pick an existing model and use transfer learning to adapt it to the new task. By definition, transfer learning does not work when the two tasks are unrelated; they have to be similar. But whether two tasks are related is a matter of judgment, so one must follow some set of rules to evaluate whether a model can be transferred to another task. Engineers must know what knowledge and data from the previous model to use in the next one. There are three possible benefits to look for when deciding whether transfer learning can be used:

- Higher start: The initial skill on the target task (before refining the model) is higher than it otherwise would be.

- Higher slope: The rate of improvement of skill during training on the target task is steeper than it otherwise would be.

- Higher asymptote: The converged skill of the trained model is better than it otherwise would be.

If one or more of these benefits are seen, transfer learning is worth applying.
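
One rough way to check for the three benefits is to compare two learning curves on the target task: one from a model trained from scratch and one from a transferred model. The sketch below assumes each curve is a list of skill scores per epoch (higher is better). The function name, the use of the average slope, the use of the final score as a stand-in for the asymptote, and the numbers are all assumptions made for illustration.

```python
def transfer_benefits(scratch_curve, transfer_curve):
    """Compare two learning curves (skill per epoch, higher is better)."""
    if len(scratch_curve) != len(transfer_curve) or len(scratch_curve) < 2:
        raise ValueError("curves must have the same length (at least 2 points)")

    def avg_slope(curve):
        # Average improvement per epoch over the whole run.
        return (curve[-1] - curve[0]) / (len(curve) - 1)

    return {
        "higher_start": transfer_curve[0] > scratch_curve[0],
        "higher_slope": avg_slope(transfer_curve) > avg_slope(scratch_curve),
        # The final score is only a proxy for the converged skill.
        "higher_asymptote": transfer_curve[-1] > scratch_curve[-1],
    }


# Made-up validation accuracies, for illustration only.
scratch = [0.10, 0.25, 0.40, 0.50, 0.55]
transfer = [0.40, 0.70, 0.85, 0.92, 0.95]
print(transfer_benefits(scratch, transfer))
```

With real models, both curves should come from the same target dataset and the same evaluation metric, ideally averaged over several runs so that noise is not mistaken for a benefit.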

Why Use Transfer Learning

The main reason to use transfer learning is to save time and effort and to benefit from the reliability of tested models. It also saves much of the money that would otherwise go into high-cost GPUs for retraining the model. The broader goal is to make machine learning evolve to be as close to human learning as possible.

At the same time, care must be taken that applying transfer learning does not lead to negative transfer, which is when reusing the model decreases performance on the new task. One must make sure there is positive transfer when deploying transfer learning to solve problems.
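
A simple guard against negative transfer is to compare the transferred model with a baseline trained from scratch on the same target task. The following is a minimal sketch; the function name `transfer_effect`, the `tolerance` parameter, and the scores are hypothetical, and the scores are assumed to be target-task metrics from repeated runs where higher is better.

```python
from statistics import mean


def transfer_effect(scratch_scores, transfer_scores, tolerance=0.01):
    """Label the transfer as "positive", "negative", or "neutral".

    Differences in mean score within the tolerance count as neutral.
    """
    gain = mean(transfer_scores) - mean(scratch_scores)
    if gain > tolerance:
        return "positive"
    if gain < -tolerance:
        return "negative"
    return "neutral"


# Made-up scores from three runs each, for illustration only.
print(transfer_effect([0.80, 0.82, 0.81], [0.70, 0.72, 0.71]))
```

If the result is negative, the source task is probably not related closely enough to the target task, and training from scratch or choosing a different source model is the safer option.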

Conclusion

Machine learning expert Andrew Ng has said: “Transfer Learning leads to Industrialization”. Transfer learning is a powerful tool for achieving state-of-the-art results. Many applications today need machine learning models that can transfer knowledge and adapt to new problems. This method opens up many innovative research directions.