Think of what artificial intelligence and machine learning have accomplished in the last few years: real-time translation, outperforming humans at board games, drug discovery and more. Techniques such as transfer learning, federated learning, reinforcement learning and self-supervised learning made these milestones possible. While transfer learning is a long-established machine learning technique, federated learning was introduced by Google in 2017.

Transfer learning

Deep learning models need large amounts of labelled data to learn and work effectively, and training them is time-consuming. Transfer learning can help tackle both challenges.

Suppose you have good knowledge of a certain topic; learning allied topics becomes easier because you can build on the fundamentals. Transfer learning follows the same principle.

As the name suggests, transfer learning is the process of taking the knowledge a model gained on one task and using it for a different task than the one it was originally intended for. Essentially, it is the re-use of a pre-trained model on a new problem. As a result, transfer learning can work with less data and in a shorter time, saving computational resources and reducing the cost of building models.

Methodology

So, how does it work? The first step is to select a pre-trained model that acts as the base model, from which knowledge will be transferred to the target model. There are two ways to use the pre-trained model. You can freeze some of its layers and then train the remaining layers on the new dataset you have for the target task. The second approach is to build a new model that incorporates features from the layers of the pre-trained model.
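The first approach can be illustrated with a minimal, dependency-free sketch. Everything in it is an assumption made for illustration: the "pre-trained" weights in `PRETRAINED_W` and `PRETRAINED_B` are made-up stand-ins for a real base model, the toy task and the names `frozen_features` and `train_head` are hypothetical. It only aims to show the idea that the base layer stays fixed while a small new output layer is trained on the new data.

```python
import math

# Hypothetical stand-in for a pre-trained base layer. In practice these
# weights would be loaded from a published model; the values are made up.
PRETRAINED_W = [[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]]  # 3 hidden units, 2 inputs
PRETRAINED_B = [0.0, -0.5, 0.0]

# Small labelled dataset for the new task: label 1 when x[0] > x[1].
NEW_TASK_DATA = [
    ([2.0, 0.0], 1), ([1.0, -1.0], 1), ([3.0, 1.0], 1),
    ([0.0, 2.0], 0), ([-1.0, 1.0], 0), ([1.0, 3.0], 0),
]


def frozen_features(x):
    """Forward pass through the frozen base layer (ReLU activation)."""
    return [
        max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(PRETRAINED_W, PRETRAINED_B)
    ]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(head_w, head_b, x):
    """Probability of label 1: frozen base features fed into the new head."""
    feats = frozen_features(x)
    return sigmoid(sum(w * f for w, f in zip(head_w, feats)) + head_b)


def log_loss(head_w, head_b, data):
    """Mean binary cross-entropy of the head on the given data."""
    total = 0.0
    for x, y in data:
        p = min(max(predict(head_w, head_b, x), 1e-12), 1.0 - 1e-12)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(data)


def train_head(data, lr=0.5, epochs=200):
    """Gradient descent on the new head only; the base weights are never updated."""
    head_w = [0.0] * len(PRETRAINED_W)
    head_b = 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * len(head_w)
        grad_b = 0.0
        for x, y in data:
            feats = frozen_features(x)
            err = sigmoid(sum(w * f for w, f in zip(head_w, feats)) + head_b) - y
            for i, f in enumerate(feats):
                grad_w[i] += err * f / n
            grad_b += err / n
        head_w = [w - lr * g for w, g in zip(head_w, grad_w)]
        head_b -= lr * grad_b
    return head_w, head_b


frozen_before = [row[:] for row in PRETRAINED_W]  # snapshot to show nothing changed
initial_loss = log_loss([0.0, 0.0, 0.0], 0.0, NEW_TASK_DATA)
head_w, head_b = train_head(NEW_TASK_DATA)
final_loss = log_loss(head_w, head_b, NEW_TASK_DATA)
print("loss before:", initial_loss, "loss after:", final_loss)
```

Only four numbers (three head weights and a bias) are learned here, which is why so little data and compute are needed. The output of `frozen_features` could equally be fed into a different kind of model, which is closer to the second approach.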

Widely used pre-trained models include Oxford’s VGG, Google’s Inception, Microsoft’s ResNet, Google’s word2vec and Stanford’s GloVe, with many more available through collections such as the Caffe Model Zoo.

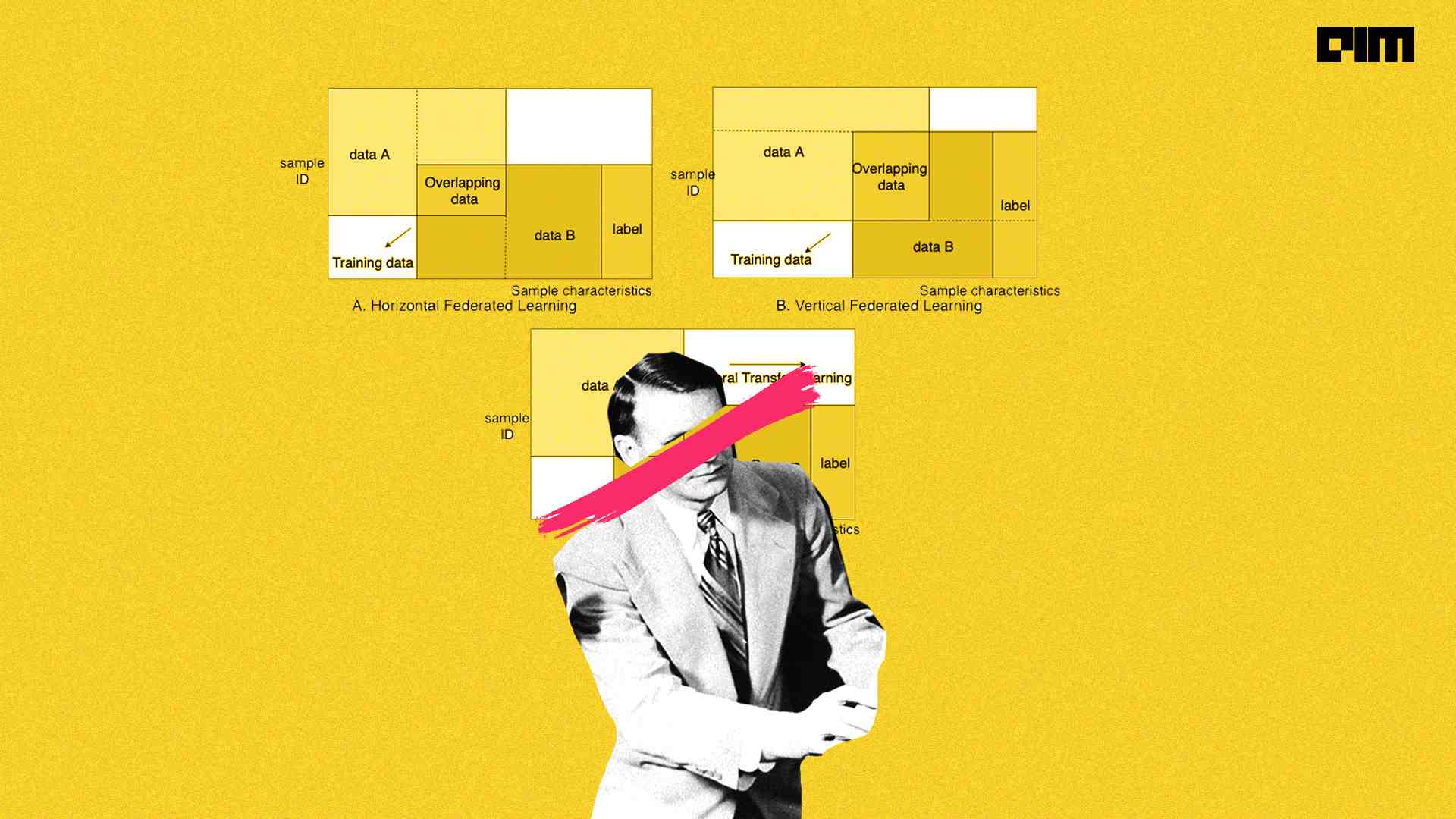

Federated learning

In 2017, Google introduced the concept of federated learning. Federated learning enables mobile phones to collaboratively learn a shared prediction model while keeping all the training data on the device, decoupling the ability to do machine learning from the need to store the data in the cloud. This goes beyond the use of local models that make predictions on mobile devices by bringing model training to the device as well.

“Standard machine learning approaches require centralising the training data into a common store. Traditionally we implemented intelligence by collecting all the data on the server, creating a model and then deploying it. The model and the data are all in a central location,” said Priyanka Vergadia, lead developer advocate, Google.

However, the downside of this centralised approach is that the back and forth between device and server can hurt the user experience due to network latency, connectivity issues, battery drain and other unforeseen problems. One way to solve this is to have each client independently train a model on the data on its own device. That said, a single device may not have enough data to produce a good model.

How does it work?

The key advantage of federated learning is that learning is decentralised: user data is never sent to a central server. Federated learning draws on techniques from multiple research areas, such as distributed systems and privacy.

In federated learning, each device downloads the current model and computes an updated model on the device itself through edge computing, using local data. Only the model update, not the data, is sent back to the server, where updates from many devices are combined into a consolidated and improved global model. This global model is then sent back to the devices. Federated learning has applications in recommender systems, NLP, self-driving cars, telecommunications, IoT devices and more.
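One common way to combine the models trained on each device is federated averaging, where the server takes a weighted average of the clients' model weights. The sketch below is a minimal, dependency-free illustration under stated assumptions: the model is a one-variable linear regression, the three "phones" and their data are invented, and the names `local_update`, `federated_average` and `run_round` are hypothetical. It leaves out everything a real deployment would need, such as client sampling, encryption and secure aggregation.

```python
# Each client's private data: (x, y) pairs that follow y = 2x + 1.
# In a real system this data would live on separate devices.
CLIENT_DATA = {
    "phone_a": [(-1.0, -1.0), (0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    "phone_b": [(-2.0, -3.0), (1.0, 3.0)],
    "phone_c": [(0.5, 2.0), (-0.5, 0.0), (1.5, 4.0)],
}


def local_update(weights, data, lr=0.1, steps=5):
    """On-device training: start from the global weights, return updated ones."""
    w, b = weights
    n = len(data)
    for _ in range(steps):
        # Gradients of mean squared error for the model y = w * x + b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(client_weights, client_sizes):
    """Server side: average client weights, weighted by local dataset size."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)


def run_round(global_weights):
    """One round: every client trains locally, the server averages the results."""
    updates, sizes = [], []
    for data in CLIENT_DATA.values():
        # Only the resulting weights are shared; the raw data stays in this call.
        updates.append(local_update(global_weights, data))
        sizes.append(len(data))
    return federated_average(updates, sizes)


global_weights = (0.0, 0.0)
for _ in range(50):
    global_weights = run_round(global_weights)

print("learned (w, b):", global_weights)
```

The server only ever sees `(w, b)` pairs and dataset sizes, never the `(x, y)` examples themselves. Weighting by dataset size lets clients with more data have proportionally more influence on the shared model.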

Applying federated learning requires machine learning practitioners to adopt new tools and a new way of thinking: model development, training, and evaluation with no direct access to or labelling of raw data, with communication cost as a limiting factor.