Judea Pearl is a prominent figure in computer science: a winner of the ACM A.M. Turing Award, the field’s highest honour, and a person whose work helped create a viable ecosystem in which Artificial Intelligence could thrive. Much of what we see today is a causal effect of the efforts he led in the 1980s to let machines reason probabilistically.

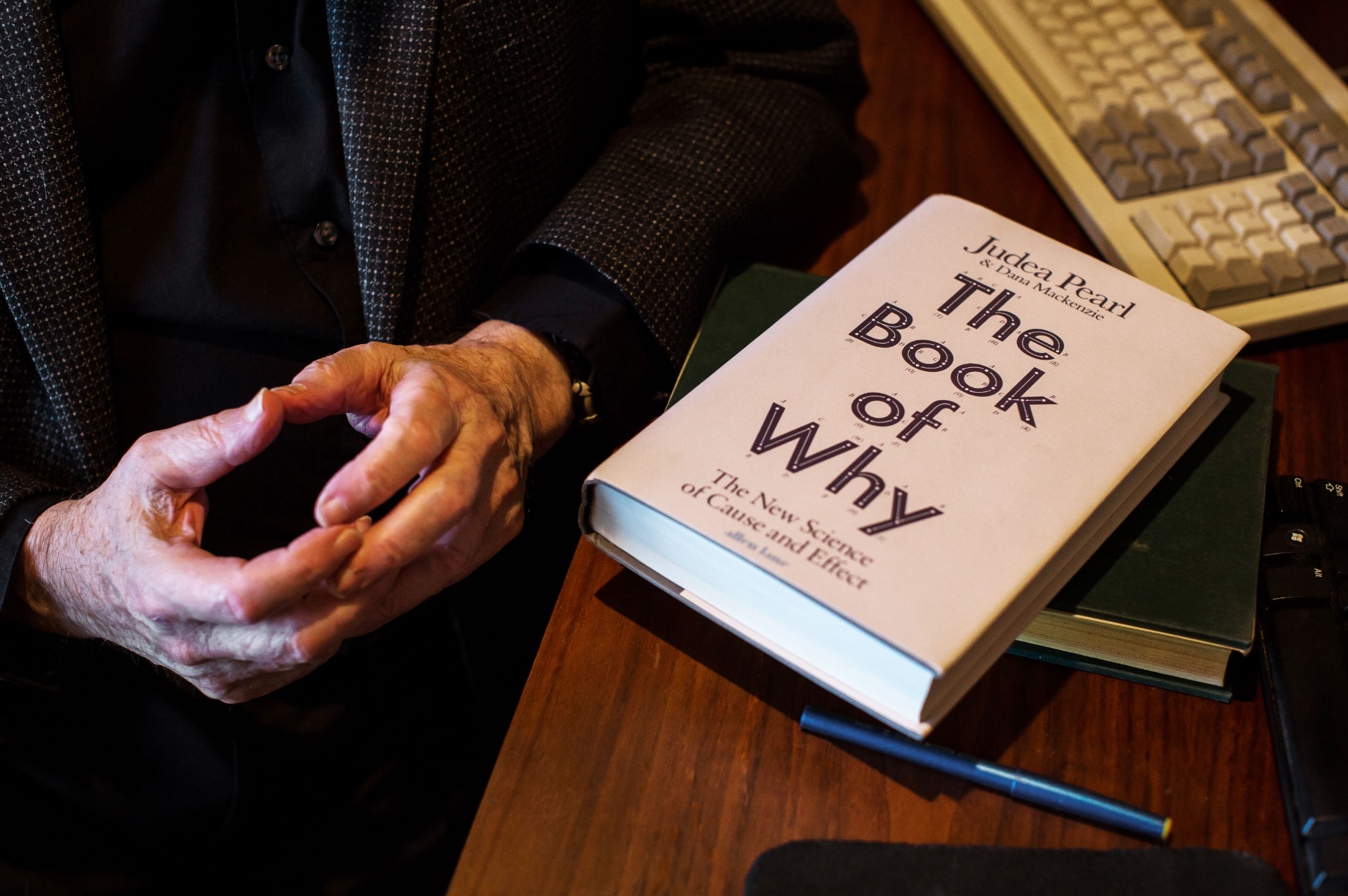

But now he is one of the sharpest critics of AI in the world. His 2018 book, The Book of Why: The New Science of Cause and Effect, argues that AI has been handicapped by an incomplete understanding of what intelligence really is.

Using a scheme called Bayesian networks, he worked out how to program machines to associate a potential cause with a set of observable conditions, which was the primary challenge in AI research at the time. For example, given a patient who had returned from Africa with a fever and body aches, Bayesian networks made it practical for a machine to conclude that the most likely explanation was malaria.
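
The malaria example can be sketched in a few lines of Python. This is only an illustrative toy, not Pearl's own formulation: it assumes a minimal network in which malaria independently influences two symptoms, and every probability below is a made-up number rather than medical data. The function name `posterior_malaria` is likewise hypothetical.

```python
# Toy Bayesian network: Malaria -> Fever, Malaria -> Aches.
# All probabilities are hypothetical, chosen only for illustration.

PRIOR_MALARIA = 0.05                   # P(malaria) among returning travellers (assumed)
P_FEVER = {True: 0.9, False: 0.1}      # P(fever | malaria state) (assumed)
P_ACHES = {True: 0.8, False: 0.2}      # P(aches | malaria state) (assumed)


def posterior_malaria(prior, p_fever, p_aches):
    """Return P(malaria | fever, aches) using Bayes' rule.

    The symptoms are treated as conditionally independent given the
    malaria state, which is what the two-arrow network encodes.
    """
    joint_with = prior * p_fever[True] * p_aches[True]
    joint_without = (1 - prior) * p_fever[False] * p_aches[False]
    return joint_with / (joint_with + joint_without)


posterior = posterior_malaria(PRIOR_MALARIA, P_FEVER, P_ACHES)
print(f"P(malaria | fever, aches) = {posterior:.3f}")
```

Under these assumed numbers, seeing both symptoms raises the probability of malaria well above its prior, which is the kind of cause-from-symptoms association the paragraph above describes.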

According to Pearl, this reliance on probabilistic association is exactly what now limits the efficacy of Artificial Intelligence at large. The latest breakthroughs all centre on machine learning and neural networks. The hype around AI use cases is built on computers that can master ancient games or drive a car through AI-based automation.

In his view, the state of the art in artificial intelligence today is merely a souped-up version of what machines could already do a generation ago: finding hidden regularities in a large set of data. All the impressive achievements of deep learning, he says, amount to just curve fitting. The book lays out a vision of how truly intelligent machines would think and offers a completely different angle from which to analyse and judge Artificial Intelligence at large.

What Does The Turing Award Winner Propose?

- Pearl argues for replacing reasoning by association with causal reasoning. Instead of the mere ability to correlate fever and malaria, machines need the capacity to reason that malaria causes fever.

- Pearl is of the opinion that once this kind of causal framework is in place, machines can ask counterfactual questions, inquiring how the causal relationships would change given some intervention, which Pearl views as the cornerstone of scientific thought.

- Pearl also proposes a formal language in which to make this kind of thinking possible: a 21st-century version of the Bayesian framework that allowed machines to think probabilistically.

- Pearl expects that causal reasoning could provide machines with human-level intelligence, so that they could communicate with humans more effectively and even achieve status as moral entities with a capacity for free will.
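
The difference between association and causal reasoning in the points above can be made concrete with a small sketch. It assumes a hypothetical two-variable structural model in which malaria causes fever, with made-up probabilities, and contrasts observing a fever with intervening to force one (the operation usually written do(fever) in Pearl's notation). The function names are illustrative, not part of any library.

```python
# Hypothetical structural model: malaria -> fever.
# Probabilities are invented for illustration only.

P_MALARIA = 0.05
P_FEVER_GIVEN = {True: 0.9, False: 0.1}   # P(fever | malaria state)


def p_malaria_given_seen_fever(fever):
    """Association: condition on an observed fever state with Bayes' rule."""
    def likelihood(malaria):
        p = P_FEVER_GIVEN[malaria]
        return p if fever else 1 - p

    with_malaria = P_MALARIA * likelihood(True)
    without_malaria = (1 - P_MALARIA) * likelihood(False)
    return with_malaria / (with_malaria + without_malaria)


def p_malaria_given_do_fever(fever):
    """Intervention on the effect: forcing the fever state (say, with a drug)
    cuts the arrow from malaria to fever, so malaria keeps its prior."""
    return P_MALARIA


def p_fever_given_do_malaria(malaria):
    """Intervention on the cause: the change propagates along the arrow."""
    return P_FEVER_GIVEN[malaria]


print("observe fever:  ", round(p_malaria_given_seen_fever(True), 3))
print("do(fever=True): ", p_malaria_given_do_fever(True))
print("do(malaria=True):", p_fever_given_do_malaria(True))
```

In this toy model, seeing a fever is evidence for malaria, but inducing a fever tells us nothing about malaria, while inducing malaria does change the chance of fever. A purely associational system cannot distinguish these cases, because the asymmetry comes from the assumed direction of the arrow and not from the data alone.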

Judea Pearl’s breakthroughs in the 1980s made him an AI pioneer. Now he believes the field has gotten too caught up in the very methods he helped devise. https://t.co/iZgb3aDsRB pic.twitter.com/IkZvxggO0S

— Quanta Magazine (@QuantaMagazine) May 15, 2018

In Conclusion

Humans must find a way to equip machines with a newer, adaptable model of the environment they operate in. We should not expect a machine to behave intelligently in a reality when it has not been given the much-needed access to a comprehensive model for analysing and understanding that reality.

Overall, Pearl’s book makes the case that AI has been handicapped by an incomplete understanding of what intelligence really is. Causal reasoning is a cornerstone of explainable AI. His main reason for stressing it is his belief that robots will in future attain some degree of free will when making decisions, a prospect he says cannot be denied.

This will become publicly visible the day a robot starts consistently defying or ignoring some software components and listening selectively to others. The components it ignores will be the ones that maintain the norms of behaviour that have been programmed into it or that are expected based on past learning. So it is a moral as well as an ethical duty of the global AI industry to start finding ways to build intelligent machines and to teach them to identify and analyse real causes and their subsequent effects.