In June 2018, social media giant Facebook open-sourced DensePose, a tool built internally by its artificial intelligence team. The tool maps the human pixels of a two-dimensional RGB image onto a 3D surface model of the body. Facebook also released the underlying code and the dataset DensePose was trained on, called DensePose-COCO. The dataset should prove valuable to many computer graphics and computer vision researchers, as it contains image-to-surface correspondences annotated on 50,000 persons from the COCO dataset.

The tool could have a significant impact on researchers and engineers working on scanners and 3D printing applications. The core team behind the project consists of Rıza Alp Güler of INRIA-CentraleSupélec, and Natalia Neverova and Iasonas Kokkinos of FAIR. The research team states, “We involve human annotators to establish dense correspondences from 2D images to surface-based representations of the human body… If done naively, this would require [locating each point] by manipulating a surface through rotations, which can be frustratingly inefficient. Instead, we construct a two-stage annotation pipeline to efficiently gather annotations for image-to-surface correspondence.”

Getting Complete Surface-Based Image Interpretation

The problem DensePose addresses is two-fold. It deals with:

- Narrow problem of human understanding

- Multi-view or single-view registration

The tool maps 2D RGB images of people onto a 3D surface model of the human body, but with some changes it might be possible to use the same algorithms for other applications. The researchers state that research in human understanding primarily aims at localizing a sparse set of joints, such as the wrists or elbows of humans.

The researchers also state that such sparse approaches may suffice for limited applications like action or gesture recognition, but that they deliver only a reduced interpretation of the image. Because of these limitations, the team wanted to improve upon existing algorithms: “We wanted to go further. Imagine trying new clothes on via a photo, or putting costumes on your friend’s photos. For these tasks, a more complete, surface-based image interpretation is required.”

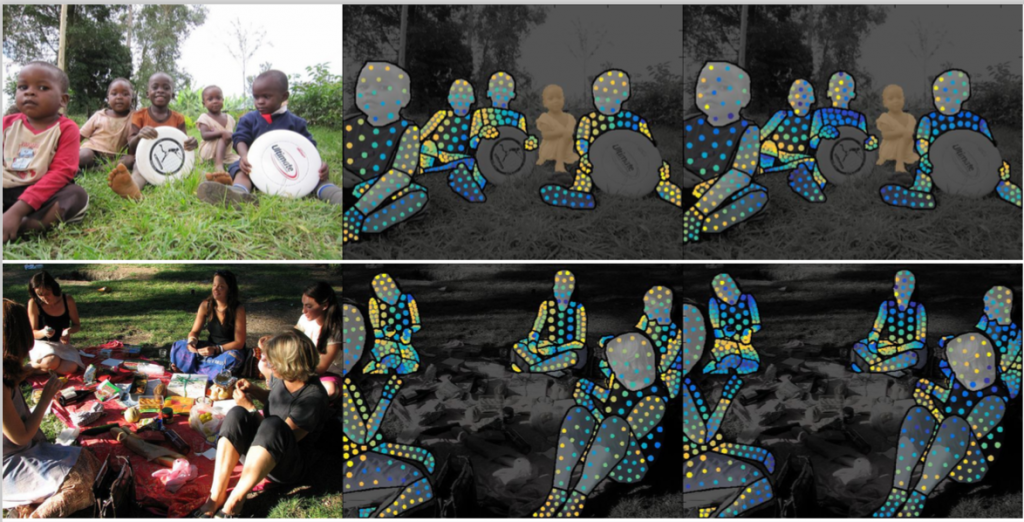

The system thus tries to understand images of people in terms of surface-based models. The researchers' work shows that dense correspondences between 2D RGB images and 3D surface models of the human body can be computed efficiently. Rather than localizing only 10-20 joints, the approach covers the whole body surface, which is defined in terms of more than 5,000 nodes. The resulting speed and accuracy make the system a good fit for virtual and augmented reality applications. The open-sourced system runs on a single GPU and can handle tens or even hundreds of humans simultaneously. As stated above, the researchers have released DensePose-COCO to complement this; its image-to-surface correspondences form the ground truth for training.

The DensePose Network

The researchers claim to have built a novel deep architecture for the task. They also claim that, thanks to Caffe2, the neural network is as fast as Mask-RCNN. As the researchers say, “We build on FAIR’s Detectron system and extend it to incorporate dense pose estimation capabilities. As in Detectron’s Mask-RCNN system, we use Region-of-Interest Pooling followed by fully-convolutional processing. We augment the network with three output channels, trained to deliver an assignment of pixels to parts, and U-V coordinates.”

The strategy of the research project is to find dense correspondence by dividing the surface into many parts. The system determines for every pixel:

- which surface part it belongs to,

- where on the 2D parameterization of the part it corresponds to.
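
The two per-pixel outputs listed above can be illustrated with a small sketch. It is not the DensePose code. It assumes that, for one pixel, the network yields a score per class (index 0 standing for background) and one regressed U and V value per part, with both coordinates normalized to the range 0 to 1. The function name `decode_pixel` and the toy numbers are hypothetical.

```python
from typing import List, Optional, Tuple


def decode_pixel(
    part_scores: List[float],
    u_per_part: List[float],
    v_per_part: List[float],
) -> Optional[Tuple[int, float, float]]:
    """Return (part_index, u, v) for one pixel, or None for background.

    Assumed layout (illustrative only): part_scores[0] is the background
    score, part_scores[k] for k >= 1 is the score of surface part k, and
    u_per_part[k] / v_per_part[k] are the coordinates regressed for part k.
    """
    # Step 1: which surface part does the pixel belong to? Take the argmax.
    part = max(range(len(part_scores)), key=part_scores.__getitem__)
    if part == 0:
        return None  # background pixel, no surface correspondence

    # Step 2: where on that part's 2D parameterization does it land?
    # Clamp to [0, 1], the assumed range of the normalized chart.
    u = min(max(u_per_part[part], 0.0), 1.0)
    v = min(max(v_per_part[part], 0.0), 1.0)
    return part, u, v


# Toy example with a background class and three parts.
example_scores = [0.1, 0.2, 2.5, 0.3]
example_u = [0.0, 0.9, 0.25, 0.5]
example_v = [0.0, 0.1, 0.75, 1.2]
result = decode_pixel(example_scores, example_u, example_v)
print(result)
```

Only the coordinates of the winning part are read; the values regressed for the other parts are ignored for that pixel.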

Architecture of DensePose network

The system can be trained in a supervised fashion. However, the researchers claim that better results can be achieved by “inpainting” the knowledge learned from supervision into the non-annotated regions of the human body. This is achieved by training a CNN-based “teacher network” to reconstruct the ground-truth values given the images.
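
The idea of filling in non-annotated regions can be sketched roughly as follows. This is an illustrative simplification, not the researchers' implementation: it assumes a flat list of pixels in which only some carry a human annotation (`None` marks the rest) and a teacher that has already produced a prediction for every pixel. Keeping the human value wherever one exists is a design choice made for this sketch, and `build_dense_targets` is a hypothetical name.

```python
from typing import List, Optional


def build_dense_targets(
    annotations: List[Optional[float]],
    teacher_predictions: List[float],
) -> List[float]:
    """Combine sparse annotations with dense teacher predictions.

    Where a human annotation exists it is kept; elsewhere the teacher's
    prediction is used, so every pixel ends up with a training target.
    """
    if len(annotations) != len(teacher_predictions):
        raise ValueError("annotations and teacher_predictions must align")
    return [
        ann if ann is not None else pred
        for ann, pred in zip(annotations, teacher_predictions)
    ]


# Toy example: four pixels, two of them annotated by humans.
annotated = [0.2, None, None, 0.8]
teacher = [0.25, 0.4, 0.6, 0.75]
dense = build_dense_targets(annotated, teacher)
print(dense)
```

The resulting dense targets could then supervise the final network at every pixel of the body instead of only at the annotated points.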

Facebook says, “Detectron is a high-performance codebase for object detection, covering both bounding box and object instance segmentation outputs.” Detectron was designed to be flexible, so that new research can be implemented and evaluated rapidly, and the FAIR team uses it on numerous state-of-the-art research projects. The commitment to open-sourcing state-of-the-art technologies is commendable. The DensePose GitHub repository also hosts trained models that researchers can use right away.

The researchers think that making DensePose open and accessible to all will make a huge difference. They have a point, because few datasets and tools are available in the field of multi-view registration and 3D model building. The data will be a valuable resource for many computer vision researchers. Facebook believes that the release of the dataset will also bring together engineers and researchers working in the fields of computer vision, computer graphics and augmented reality. Some of the applications Facebook mentions for such a technology are “creating whole-body filters or learning new dance moves from your cell phone.”