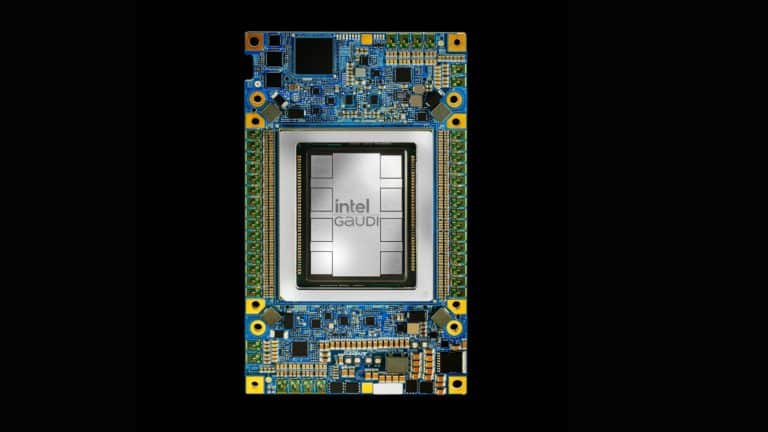

In this age of AI, applications such as computer vision are gaining immense popularity. But developing applications and solutions that emulate human vision can be an intimidating task. Not anymore: Intel® offers the OpenVINO™ toolkit, which is based on convolutional neural networks (CNNs) and extends workloads across Intel® hardware (including accelerators) to maximise performance. OpenVINO™ can play a big role in helping developers cut inference execution time while keeping their applications efficient.

In the webinar titled Accelerating AI Inference Using Intel® OpenVINO™ Toolkit, Intel® experts will explain in detail how developers can use the pre-built models in the OpenVINO™ package for inference. Developers will also learn how to optimise an existing model they have built with any of the popular AI frameworks, convert it to a format that OpenVINO™ understands, and get the best inference performance on Intel® hardware accelerators.

What can developers learn?

The objective of this webinar is to introduce developers to Intel®'s OpenVINO™ toolkit. Attendees will learn how to:

- Accelerate and deploy CNNs on Intel® platforms with the Deep Learning Deployment Toolkit (DLDT)

- Develop and optimise classic computer vision applications built with the OpenCV library or OpenVX API

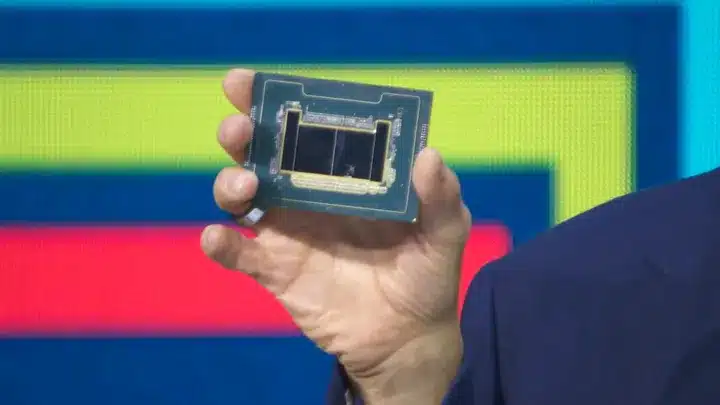

- Harness the performance of Intel®-based accelerators: CPUs, integrated GPUs, FPGAs, Vision Processing units, and Image Processing Units

Whom will you learn from?

The webinar will be conducted by Intel® experts who have been leading the OpenVINO™ initiatives at Intel®.

Lakshmi Narasimhan: He leads the consulting team for the Compute Performance and Developer Products division within Intel®. In this role, he works with the developer ecosystem to help developers get the best performance from Intel® hardware across the IoT, Client, AI and HPC segments. He has been with Intel® for 15 years and has led several organizations across the Client, IoT and Software teams.

Hemanth Kumar G: He is a Technical Consulting Engineer in Embedded IoT at Intel® India. He has around 12 years of experience in industry and academia, with expertise in Intel®'s visual computing product, OpenVINO™, and its media product, Intel® Media SDK. He educates and collaborates with customers to provide technical solutions.

Agenda

| Time | Agenda |
|---|---|
| 11:00 AM – 11:05 AM | Welcome Note & Introduction |
| 11:05 AM – 11:25 AM | Webinar on "Accelerate AI Inference Using OpenVINO™ Toolkit" |
| 11:25 AM – 11:35 AM | Demo of the Intel® OpenVINO™ toolkit |
| 11:35 AM – 11:45 AM | AI success story with Intel® |
| 11:45 AM – 12:00 PM | Q&A |

When: 8th May

Time: 11:00 AM – 12:00 PM IST

Duration: 60 minutes