Presently, we have a multitude of LLMs available, encompassing both closed and open variants. However, just a few months ago, the number of LLMs was limited, predominantly confined to corporate-owned AI labs with restricted access.

As a result, last year, a group of over 1000 researchers from 60+ countries and 250+ institutions came together to create BLOOM, a 176-billion-parameter LLM that can generate text in 46 natural languages and dialects and 13 programming languages.

The purpose of BLOOM was to help advance research on large language models. But ChatGPT changed the landscape, bringing a paradigm shift in AI research. Although BLOOM has been available for quite some time, models such as LLaMA have had a wider impact on open source. Hence, we wonder: in this fast-changing AI world, has BLOOM lost a bit of relevance?

Not a chatbot model

ChatGPT changed the whole game with its ability to converse like a human. Soon, Microsoft, which has invested billions in OpenAI, integrated ChatGPT with Bing, prompting Google to follow suit. Most recently, at this year’s I/O event, Google also announced PaLM 2, a new LLM.

BLOOM, on the other hand, is not a chatbot but a webpage/blog/article completion model. While models like GPT-3, GPT-4 or LaMDA can answer questions, BLOOM does not work like that.

For example, one cannot ask BLOOM the question ‘Who is the CEO of Google?’ Instead, the desired approach would be to input a sentence like ‘The CEO of Google is’ followed by a dash, and the model would then complete the sentence with the appropriate information.
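The difference between the two prompt styles can be sketched in a few lines of Python. This is a minimal illustration, not an official recipe: it assumes the Hugging Face `transformers` library and the small `bigscience/bloom-560m` checkpoint (chosen here only to keep the download manageable), and the helper names `load_bloom_generator` and `complete` are hypothetical.

```python
from typing import Callable, Dict, List

# A generator is any callable that maps a prompt to a list of
# {"generated_text": ...} dicts, the shape returned by the
# Hugging Face `text-generation` pipeline.
Generator = Callable[..., List[Dict[str, str]]]


def load_bloom_generator(model_name: str = "bigscience/bloom-560m") -> Generator:
    """Load a BLOOM checkpoint (assumption: the small 560M variant)."""
    # Imported lazily so the rest of the sketch works without transformers installed.
    from transformers import pipeline

    return pipeline("text-generation", model=model_name)


def complete(stem: str, generator: Generator, max_new_tokens: int = 8) -> str:
    """Return only the text the model appends to `stem`."""
    outputs = generator(stem, max_new_tokens=max_new_tokens)
    text = outputs[0]["generated_text"]
    # The pipeline echoes the prompt by default, so strip it off.
    if text.startswith(stem):
        text = text[len(stem):]
    return text.strip()


# Question-style prompt: a completion model may simply continue the text
# rather than answer it.
question = "Who is the CEO of Google?"

# Completion-style prompt: the answer is the natural continuation.
stem = "The CEO of Google is"
```

Calling `complete(stem, load_bloom_generator())` downloads the checkpoint and returns the model's continuation of the sentence. The output depends on the checkpoint and decoding settings, so none is shown here.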

In a field that has been heavily influenced by chatbots, a model like BLOOM may have experienced a diminished relevance.

Hardware Capacity

When BLOOM was released, BLOOM Training co-lead Teven Le Scao said, “BLOOM is a demonstration that the most powerful AI models can be trained and released by the broader research community with accountability and in an actual open way, in contrast to the typical secrecy of industrial AI research labs.”

However, even though BLOOM is accessible to all researchers, running it requires significant hardware capacity. Very few research labs or researchers in the world have access to such hardware. In comparison, the GPT-3 API provided by OpenAI is better suited for product development, offering a more accessible option.

Furthermore, in the present day, there is a wider availability of open-source LLMs that do not demand substantial hardware capacity to operate. This accessibility to open-source models has democratised the utilisation of LLMs.
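To put the hardware gap in rough numbers, the back-of-the-envelope sketch below estimates the memory needed just to hold a model's weights. The assumptions are ours, not the article's: 2 bytes per parameter for 16-bit weights, 0.5 bytes per parameter for 4-bit quantised weights, and a 7-billion-parameter model standing in for the smaller open-source LLMs. Activations, caches and other runtime overhead are ignored, so real requirements are higher.

```python
def weight_memory_gb(num_parameters: int, bytes_per_parameter: float) -> float:
    """Memory needed to hold the weights alone, in gigabytes (10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


BLOOM_PARAMS = 176_000_000_000      # parameter count stated in the article
SMALL_MODEL_PARAMS = 7_000_000_000  # assumption: a 7B model, e.g. the smallest LLaMA

bloom_16bit = weight_memory_gb(BLOOM_PARAMS, 2)          # 16-bit weights
small_16bit = weight_memory_gb(SMALL_MODEL_PARAMS, 2)    # 16-bit weights
small_4bit = weight_memory_gb(SMALL_MODEL_PARAMS, 0.5)   # 4-bit quantised weights

print(f"BLOOM 176B, 16-bit: {bloom_16bit:.1f} GB")  # 352.0 GB
print(f"7B model, 16-bit:   {small_16bit:.1f} GB")  # 14.0 GB
print(f"7B model, 4-bit:    {small_4bit:.1f} GB")   # 3.5 GB
```

Under these assumptions, BLOOM's weights alone need several hundred gigabytes spread across multiple accelerators, while a quantised 7B model fits on a single consumer GPU or laptop.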

Did LLaMA steal the limelight?

With the release of LLaMA, we have witnessed the true power of open source. Since the weights of the model developed by Meta were leaked online, developers have discovered methods to reduce the model's memory requirements.

Soon came Stanford's Alpaca, a fine-tuned version of LLaMA that reportedly cost only about USD 600 to build. This is notably lower than the multimillion-dollar investments typically associated with training such models from scratch.

In short, LLaMA and Alpaca have achieved the objectives that were initially envisioned for BLOOM. Alpaca, in particular, facilitated the democratisation of LLMs by making LLaMA accessible to a wider audience.

Through a reduction in fine-tuning costs to a few hundred dollars and by open-sourcing the model, Alpaca empowered developers worldwide to enhance the functionality of this LLM and utilise its power in their projects.

BLOOM is multilingual

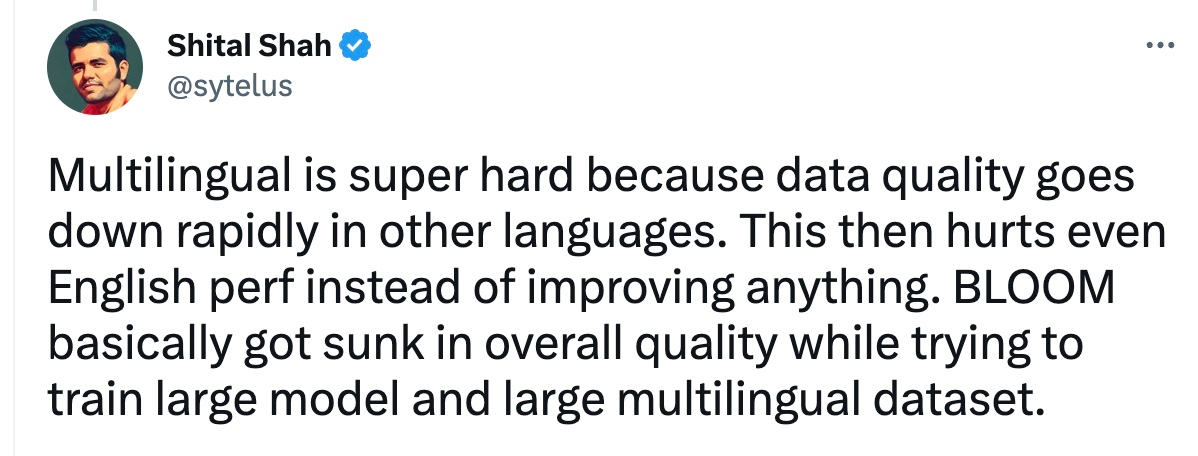

Another major reason why we have not seen wider adoption of BLOOM might be the fact that it is multilingual. It was one of the first openly released multilingual large language models of its size, and only just over 30% of its training data was in English.

However, most of the popular evaluation benchmarks are based on the English language. Further, most text models are also trained primarily on English, so comparing a multilingual model like BLOOM against them becomes a difficult task.

Reinforcement learning from human feedback

ChatGPT was not the first LLM chatbot to emerge either. Prior to its release, Meta introduced Galactica. However, the model was taken down within two days due to its tendency to produce nonsensical and absurd outputs.

But what OpenAI did differently with ChatGPT was reinforcement learning from human feedback (RLHF). This technique allows AI models to learn from human preferences about their outputs, making their responses more helpful and better aligned with what users expect across a variety of tasks.
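One common ingredient of RLHF pipelines is a reward model trained on pairs of responses that human annotators have ranked. The sketch below shows the pairwise preference loss often used for that step, -log(sigmoid(r_chosen - r_rejected)). It is an illustration of the general idea only: the article does not describe OpenAI's implementation, and the function name `preference_loss` and the example scores are hypothetical.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected)).

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large otherwise.
    """
    x = reward_rejected - reward_chosen
    # softplus(x) = log(1 + exp(x)), written in a numerically stable form.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Hypothetical reward-model scores for two responses to the same prompt.
agrees_with_humans = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_humans = preference_loss(reward_chosen=0.0, reward_rejected=2.0)

print(agrees_with_humans < disagrees_with_humans)  # True
```

The trained reward model then scores new outputs, and a reinforcement learning step nudges the language model towards responses that score higher.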

However, BLOOM was not trained with human feedback. In terms of accuracy, BLOOM also lags behind closed-source models on Stanford University’s HELM benchmark. The model also performs poorly on toxicity metrics and moderately on bias.

More importantly, Stability AI recently released what it described as the world’s first open-source LLM trained with RLHF. Called StableVicuna, the model has 13 billion parameters and can perform a variety of natural language processing tasks, including question answering, language translation, and text completion, among others.

Conclusion

Nonetheless, BLOOM has not become completely obsolete either. The model has many use cases, especially for languages other than English. It can also serve as a great translation model because of its rich multilingual corpus.

Besides, BLOOM was a research project and was never intended to be commercially exploited or used by the general public.