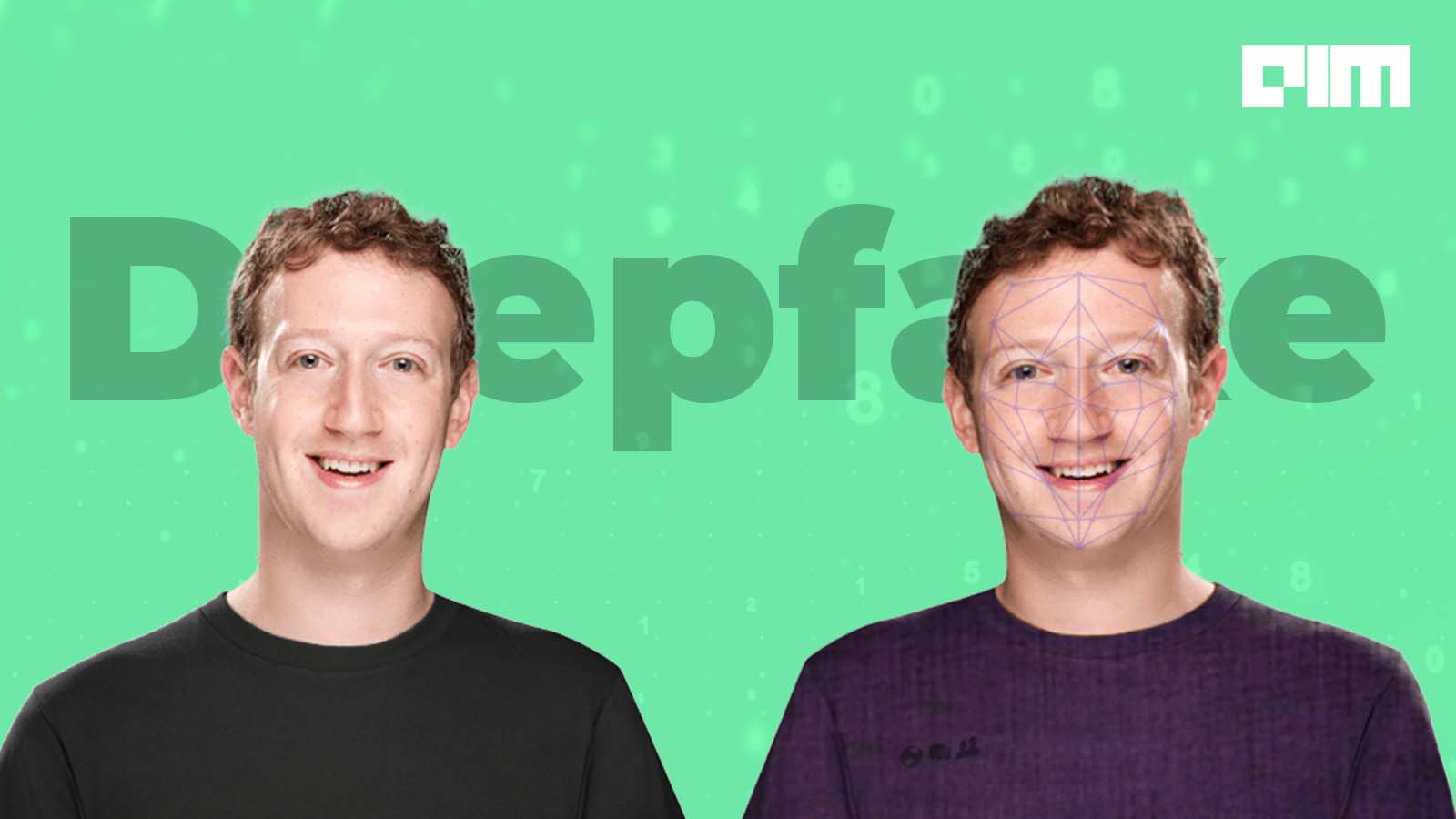

The advancement of artificial intelligence is skyrocketing, from creating value for society in industries such as healthcare, BFSI and retail, to posing a massive threat by generating fake images and videos that are hard to tell apart from real ones. These advances have made it possible for anyone on the internet to use deep learning to create fake images of famous personalities and use them to spread misinformation. This is what is called deepfake media.

As a matter of fact, 2019 and 2020 have so far witnessed many instances where deepfakes managed to fool people on the internet. One of the best-known cases came during this year’s Indian election campaign, where BJP politician Manoj Tiwari used deepfake technology to create a video criticising the Delhi government in multiple languages. Other notable instances involved creating fake Tom Hanks photos or replacing the real Iron Man with Tom Cruise. In more severe cases, the technology is also being weaponised to create pornography without consent.

These instances have made us realise that this sophisticated technology is indeed scary, and it has become a worldwide concern. It has been made possible by significant advances in GANs and by readily available tools on the internet. According to a report from last year, there were approximately 14,678 deepfake videos online, and the number hasn’t stopped growing since.

While these deepfakes have been creating havoc on the internet, a new threat has come into the picture: people are using artificial intelligence to generate faces of fake humans for spreading misinformation. In this scenario, instead of making fake images of famous personalities or celebrities, wrongdoers create images of non-existent humans from scratch. These fake human images are then used either to create a deceptive impression of a crowd or to manipulate opinions on the internet.

In fact, these computer-generated fake faces have already been identified in a pro-China propaganda campaign, and have been used by Russian spies to spread misleading information on social media. The proliferation of these fake faces has created grave concern among the public about how much more dangerous the technology can become.

The New Game Of AI-Generated Fake Human Faces

These AI-generated images of fake humans, like deepfakes, are created using generative adversarial networks (GANs). One network, the generator, produces content, while the other, the discriminator, compares the generated content with real examples and tries to tell the two apart. The networks are trained against each other, and the generator keeps improving until its output can no longer be reliably separated from the real content.

Explaining the process, Shivkanth B, an Indian-based US developer, told the media that an input is first fed to a model that generates new content based on the algorithm; a second model then compares the new content with a set of known-real content to decide whether it is real or fake.
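The two-model loop described here can be illustrated with a deliberately tiny sketch. It is not StyleGAN or any production system: the "content" is a single number instead of an image, the generator and discriminator each have only two parameters, and the gradients are written out by hand. All names (`train_toy_gan`, `real_mean`, the learning rate and step count) are assumptions made for illustration, and a toy like this may oscillate rather than settle.

```python
import math
import random


def sigmoid(t):
    # Numerically stable logistic function, used as the discriminator output.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, real_std=0.5, seed=0):
    """Train a one-dimensional toy GAN and return its learned parameters.

    Assumptions (illustrative only): "real" samples are drawn from a normal
    distribution, the generator is x_fake = a * z + b, and the discriminator
    is D(x) = sigmoid(w * x + c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        x_real = rng.gauss(real_mean, real_std)  # known-real content
        z = rng.gauss(0.0, 1.0)                  # random input to the generator
        x_fake = a * z + b                       # generated content

        # Discriminator step: minimise -log D(x_real) - log(1 - D(x_fake)),
        # i.e. learn to score real content high and generated content low.
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        w -= lr * (-(1.0 - d_real) * x_real + d_fake * x_fake)
        c -= lr * (-(1.0 - d_real) + d_fake)

        # Generator step: minimise -log D(x_fake),
        # i.e. adjust the generator so its output is scored as real.
        d_fake = sigmoid(w * x_fake + c)
        grad_x = -(1.0 - d_fake) * w
        a -= lr * grad_x * z
        b -= lr * grad_x

    return {"generator": (a, b), "discriminator": (w, c)}


if __name__ == "__main__":
    params = train_toy_gan()
    print("generator (a, b):", params["generator"])
    print("discriminator (w, c):", params["discriminator"])
```

Image-generating GANs follow the same alternating pattern, but with deep convolutional networks in place of these two-parameter models and automatic differentiation in place of the hand-written gradients.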

This same technology has, in fact, been used by the website This Person Does Not Exist, which presents a computer-generated photo of a fake human face every time the user refreshes the page. The website uses NVIDIA’s popular StyleGAN2 to create hyper-realistic human faces that can easily fool the public on the internet.

On this note, Ben Nimmo, the director of investigations at the social media analytics company Graphika, tweeted that the whole concept was far-fetched a year ago but is now ubiquitous.

“… and recent ones used GAN-generated images.” (Ben Nimmo, @benimmo, September 22, 2020)

“Again. A year ago, this was a novelty. Now it feels like every operation we analyse tries this at least once.” pic.twitter.com/iu2HSuZ53I

Such advances in GANs have made it impossible to be sure whether images of people on the internet are real or fake.

Along with this, the advent of the state-of-the-art GPT-3 has made this even easier for the public. According to Shivkanth, combining a GAN with a language model would allow users to write simple sentences like “generate a left-facing middle-aged white male with short brown hair and blue eyes” to create these fake faces.

Although high-quality inputs are still required for accurate results, Shivkanth believes users will soon be able to create “hyper real videos in the coming months,” as he told the media.

Wrapping Up

While concern over AI-generated misinformation rises, Max Rizzuto, a research assistant at the Atlantic Council’s Digital Forensic Research Lab (DFRLab), told the media that awareness of these computer-generated fake human faces is the first step in combating such deepfakes. Once people are informed about these fabrications, he said, they can easily spot the abnormalities in these images.

To start with, these fake images often show the subject’s head tilted while the nose and teeth remain straight. Secondly, the technology struggles to blend the background with the foreground, producing a visibly unnatural result. Thirdly, and most importantly, the eyes of the subject fail to move, regardless of where the subject is facing. Such pointers help in spotting these fake human faces.
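The third giveaway can be turned into a rough check. The sketch below takes one possible reading of it: across a set of suspect profile pictures, the eye centre sits at almost exactly the same spot in every frame. It assumes the eye coordinates have already been obtained from some face-landmark detector (not shown here) and normalised to the range 0 to 1 by image width and height. The function names and the `max_spread` threshold are hypothetical choices for illustration, not a validated detection method.

```python
import math


def eye_position_spread(eye_points):
    """Mean distance of each eye centre from the average eye centre.

    eye_points: list of (x, y) tuples, one per image, giving the same eye's
    centre as a fraction of image width and height (assumed input format).
    """
    if not eye_points:
        raise ValueError("eye_points must contain at least one (x, y) pair")
    n = len(eye_points)
    mean_x = sum(x for x, _ in eye_points) / n
    mean_y = sum(y for _, y in eye_points) / n
    return sum(math.hypot(x - mean_x, y - mean_y) for x, y in eye_points) / n


def eyes_look_fixed(eye_points, max_spread=0.01):
    # max_spread is an arbitrary illustrative threshold, not a tuned value.
    return eye_position_spread(eye_points) <= max_spread


if __name__ == "__main__":
    # Hypothetical measurements: eyes barely move between the suspect images.
    suspect = [(0.38, 0.48), (0.38, 0.48), (0.381, 0.479)]
    # Hypothetical measurements from ordinary, unaligned photographs.
    ordinary = [(0.30, 0.40), (0.45, 0.52), (0.38, 0.35)]
    print(eyes_look_fixed(suspect), eyes_look_fixed(ordinary))
```

A low spread only flags a set of images for closer inspection; tightly cropped and aligned real photographs would trigger it too, so it should be combined with the other pointers above.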

On the other hand, many researchers are also working on tools and technologies to detect these fabricated images. Rizzuto believes that although the field is constantly evolving, many researchers are working just as constantly against deepfake media. In fact, he hopes to see such fabrications diminish substantially in the near future.