Among the logical gates, XOR, also known as “exclusive or”, is the operation on two binary inputs that outputs 1 when the inputs differ and 0 when they are the same. The outputs of XOR are not linearly separable: no single straight line in the input plane separates the inputs that produce 1 from those that produce 0. In this article, let us see what the XOR logic is and how to implement it using neural networks.

Table of Contents

- What is XOR operating logic?

- The linear separability of points

- Why can’t perceptrons solve the XOR problem?

- How to solve the XOR problem with neural networks?

- Summary

What is XOR operating logic?

Let us try to understand the XOR operating logic using a truth table.

From the truth table below, it can be inferred that XOR outputs 1 when the two inputs differ and 0 when they are the same. The output of the XOR logic is given by the equation that follows the table.

| X | Y | Output |
| --- | --- | --- |
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

Output = X·Y’ + X’·Y, where X’ and Y’ denote NOT X and NOT Y.
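The equation can be checked directly against the truth table. The following is a minimal Python sketch; the function name `xor_gate` is only illustrative and is not part of the original article.

```python
def xor_gate(x: int, y: int) -> int:
    """XOR built from NOT, AND, and OR: X·Y' + X'·Y."""
    not_x = 1 - x  # NOT X for a binary input
    not_y = 1 - y  # NOT Y for a binary input
    return (x & not_y) | (not_x & y)


# Print the full truth table: X, Y, Output
for x in (0, 1):
    for y in (0, 1):
        print(x, y, xor_gate(x, y))
```

Each printed row should match the corresponding row of the truth table.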

The XOR gate can be built as a combination of NOT, AND, and OR gates, and this type of logic is widely used in cryptography and fault tolerance. The logical diagram of an XOR gate is shown below.


The linear separability of points

Linear separability is the ability to separate data points of different classes with a single hyperplane (a straight line in two dimensions) so that the classes do not overlap. If every point of one class falls on one side of the separating line and every point of the other class falls on the other side, the data points are termed linearly separable. For logic gates such as AND and OR, the outputs are linearly separable.

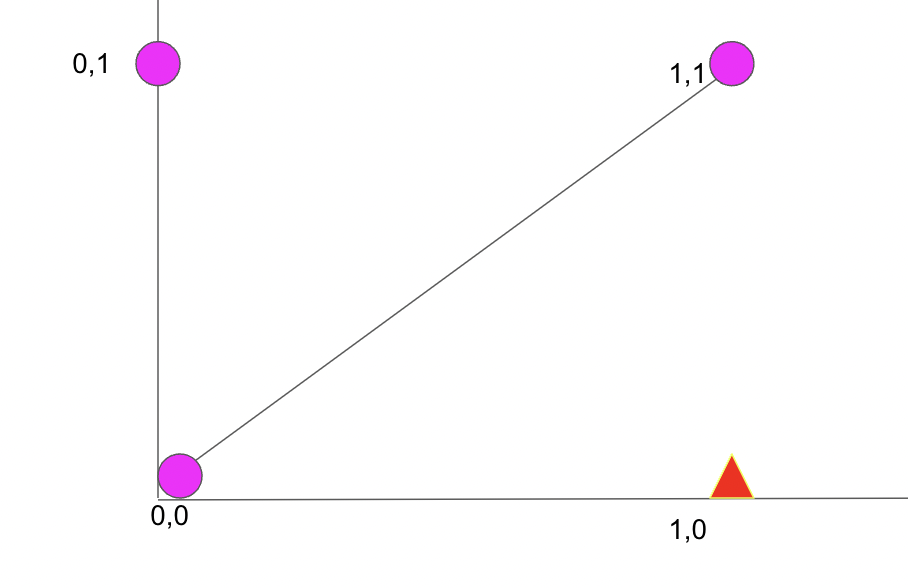

Linearly separable data points look like the ones in the plot.

Here we can see that the pink dots and the red triangles do not overlap, and a straight line easily separates the two classes: the region above the line can be considered one class and the region below it the other.
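To make this concrete for the AND and OR gates mentioned earlier, here is a small sketch showing that a single straight line of the form w1·x + w2·y + b = 0 is enough to reproduce each gate. The weights and biases are hand-picked assumptions for illustration; many other values work equally well.

```python
def linear_classifier(w1, w2, b):
    """Return a function that outputs 1 when w1*x + w2*y + b > 0, else 0."""
    def predict(x, y):
        return 1 if w1 * x + w2 * y + b > 0 else 0
    return predict


INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]

# Hypothetical hand-picked separating lines (assumptions, not from the article)
and_gate = linear_classifier(1.0, 1.0, -1.5)  # line x + y = 1.5
or_gate = linear_classifier(1.0, 1.0, -0.5)   # line x + y = 0.5

and_outputs = [and_gate(x, y) for x, y in INPUTS]
or_outputs = [or_gate(x, y) for x, y in INPUTS]
print("AND:", and_outputs)  # AND: [0, 0, 0, 1]
print("OR: ", or_outputs)   # OR:  [0, 1, 1, 1]
```

Only the point (1, 1) lies above the line x + y = 1.5, and only (0, 0) lies below the line x + y = 0.5, so one line separates the two classes for each gate.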

Need for linear separability in neural networks

Linear separability matters in neural networks because a single neuron computes a weighted sum of its inputs in N-dimensional space, so the boundary it draws between classes is a hyperplane. When the data points are linearly separable, the weight updates can settle on a hyperplane that classifies every point correctly. When they are not, a single neuron keeps making wrong weight updates and wrong classifications. Linear separability also makes the input space easy to interpret: each point falls on either the positive or the negative side of the hyperplane.

Why can’t perceptrons solve the XOR problem?

Perceptrons are “linear classifiers” and can be used only for linearly separable problems. XOR is one of the logical operations that is not linearly separable: whichever line is drawn, points of different classes end up on the same side of it.
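The limitation can also be checked empirically. The sketch below assumes the classic perceptron learning rule with a step activation (the article does not specify a training rule) and trains one perceptron on AND and another on XOR. The function name, learning rate, and epoch count are illustrative choices.

```python
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]


def train_perceptron(targets, epochs=100, lr=1):
    """Train a single perceptron with the perceptron learning rule.

    Returns how many of the four inputs are classified correctly afterwards.
    """
    w1, w2, b = 0, 0, 0
    for _ in range(epochs):
        errors = 0
        for (x, y), target in zip(INPUTS, targets):
            prediction = 1 if w1 * x + w2 * y + b > 0 else 0
            delta = target - prediction
            if delta != 0:
                # Nudge the separating line towards the misclassified point
                w1 += lr * delta * x
                w2 += lr * delta * y
                b += lr * delta
                errors += 1
        if errors == 0:  # every point classified correctly: converged
            break
    return sum(
        (1 if w1 * x + w2 * y + b > 0 else 0) == target
        for (x, y), target in zip(INPUTS, targets)
    )


and_correct = train_perceptron([0, 0, 0, 1])
xor_correct = train_perceptron([0, 1, 1, 0])
print("AND correct:", and_correct, "of 4")
print("XOR correct:", xor_correct, "of 4")
```

For AND the training loop converges and all four points are classified correctly. For XOR the count stays below four no matter how many epochs are allowed, because no single line separates the two classes.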

Let us understand why perceptrons cannot be used for XOR by plotting the outputs of the XOR logic, as shown below.

In the above figure, we can see that the red triangle and the pink dot end up on the same side of the separating line, so the XOR outputs cannot be separated by a single line. This is where a Multi-Layer Perceptron (MLP) is used: multiple neurons arranged with a hidden layer transform the inputs into a representation in which the XOR outputs become linearly separable. So now let us understand how to solve the XOR problem with neural networks.

How to solve the XOR problem with neural networks?

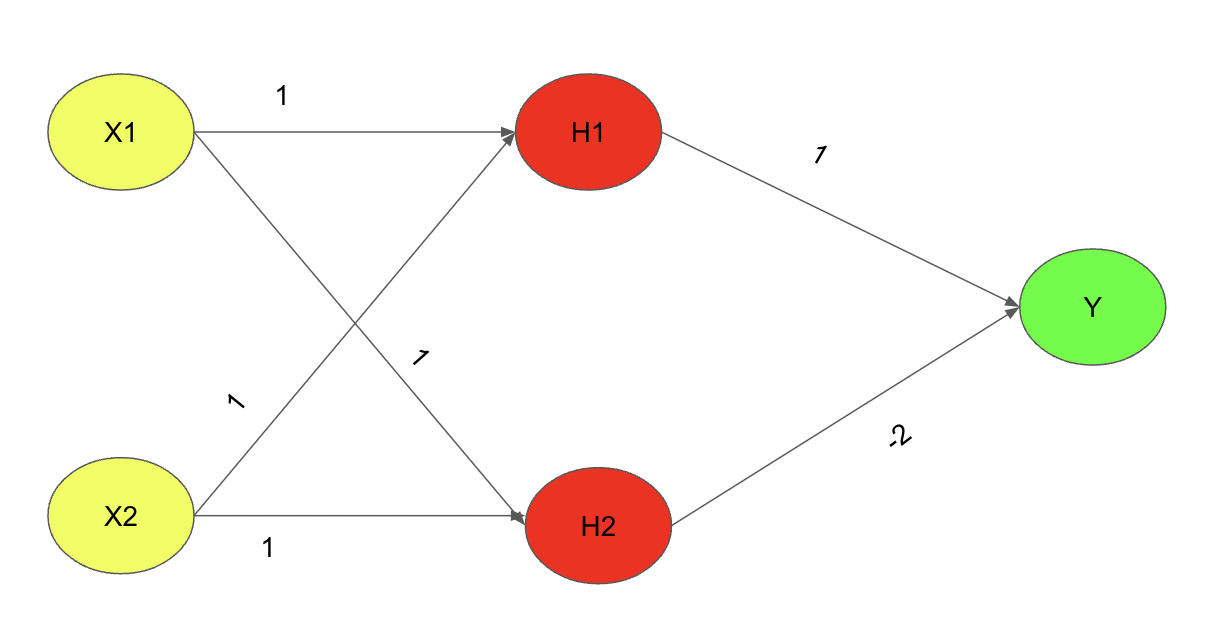

The XOR problem can be solved by using a Multi-Layer Perceptron, a neural network architecture with an input layer, a hidden layer, and an output layer. During forward propagation, the inputs are multiplied by the weights of each layer in turn and passed through the activation function, and the XOR logic gets executed. The neural network architecture to solve the XOR problem is shown in the figure.

With this architecture and suitable weights between the layers, the XOR output is obtained through forward propagation. The network uses the ReLU activation function, ReLU(z) = max(0, z), at each neuron: a positive weighted sum is passed through unchanged, while a negative weighted sum is clamped to 0. Let us work through the output for the first input state.

Example: For X1=0 and X2=0 we should get an output of 0. Let us solve it.

Solution: Considering X1=0 and X2=0

H1 = ReLU(0·1 + 0·1 + 0) = 0

H2 = ReLU(0·1 + 0·1 + 0) = 0

We have now obtained the hidden-layer activations by propagating from the input layer to the hidden layer. Next, let us propagate from the hidden layer to the output layer.

Y = ReLU(0·1 + 0·(-2)) = 0

This is how multi-layer neural networks, also known as Multi-Layer Perceptrons (MLP), are used to solve the XOR problem. The same calculation can be repeated for the other input sets to verify that the architecture above yields the right XOR output for each of them.
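The verification for all four inputs can be sketched in a few lines of Python. The figure with the exact weights is not reproduced here, so the values below are an assumption: input-to-hidden weights of 1, hidden-to-output weights of 1 and -2 (matching the worked example), and a bias of -1 on H2, which is a common hand-built ReLU solution for XOR. The function names are illustrative.

```python
def relu(z):
    """ReLU activation: passes positive values through, clamps negatives to 0."""
    return max(0, z)


def xor_mlp(x1, x2):
    """Forward pass of a 2-2-1 network with hand-set weights.

    ASSUMPTION: these weights and biases are not taken from the article's
    figure; in particular, the -1 bias on H2 is assumed.
    """
    h1 = relu(1 * x1 + 1 * x2 + 0)   # hidden neuron H1, bias 0
    h2 = relu(1 * x1 + 1 * x2 - 1)   # hidden neuron H2, assumed bias -1
    return relu(1 * h1 + (-2) * h2)  # output neuron Y


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, xor_mlp(x1, x2))
```

With these assumed values the network returns 0, 1, 1, 0 for the four input pairs. For the input (0, 0), H2 evaluates to 0 with either a bias of 0 or a bias of -1, since ReLU(-1) = 0, so the worked example gives the same result. The bias only matters for the input (1, 1), where H2 must equal 1 so that the -2 weight cancels H1 = 2.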

Summary

Among the various logical operations, XOR is one for which the data points cannot be linearly separated by a single neuron or perceptron. Solving the XOR problem therefore requires a neural network with multiple neurons, including a hidden layer, with suitable weights and an appropriate activation function.